by Sudip Saha

The assumption that “bigger is always better” has shaped an arms race of GPUs and spend and made LLMs a staple in most forward-looking orgs.

But the evidence is more nuanced: many “emergent abilities” look less like magic thresholds and more like artifacts of metrics and evaluation methods; capability generally improves smoothly with scale.

The upshot: size helps, but it isn’t the only lever, or even the dominant one, for many real-world problems.

Small language models (SLMs) flip the optimization target. (For the purposes of this article, SLMs are models with ≤10B parameters, and “tiny SLMs” are those with ≤1B.)

They emphasize efficiency, controllability, and deployability rather than maximal breadth. Done right, via careful data selection, distillation, and instruction tuning, SLMs can approach, and on specific tasks even match, far larger systems, while unlocking on-device and on-prem deployments.

Cost and environmental consequences

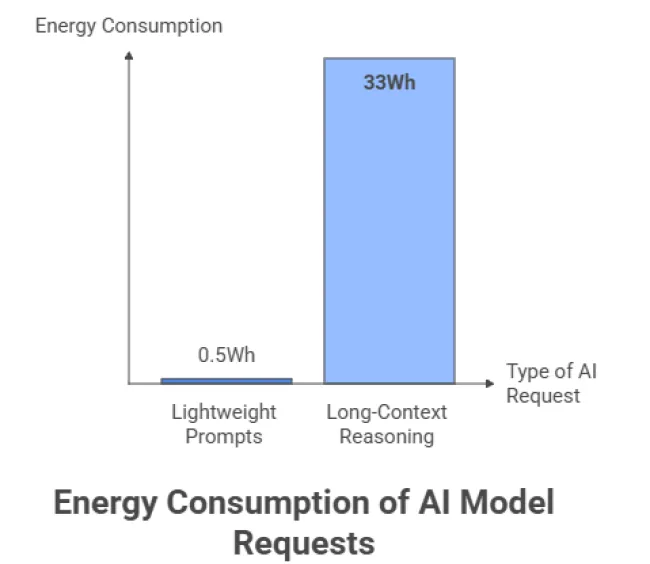

Per-prompt energy varies by model, context length, and serving stack. Recent production data from Google reports a median of 0.24 Wh per Gemini text prompt (and 0.26 mL of water), with large year-over-year efficiency gains.

Conversely, independent benchmarking finds heavy long-context reasoning on certain frontier models can exceed 33 Wh per request. Together, these show a wide band: sub-Wh for typical lightweight prompts up to tens of Wh for long, compute-intensive chains of thought. Plan capacity accordingly.

Training and serving footprints matter. Classic analyses of large-model training (e.g., GPT-3-class systems) show substantial energy and CO₂ impacts, and emphasize how location, data center efficiency, hardware, and sparsity can swing footprints by orders of magnitude.

Water usage is an additional, under-reported dimension: estimates for large-model training and serving include hundreds of thousands of liters directly, with broader system-level impacts depending on grid mix and cooling. Critically, inference tends to dominate lifecycle energy in deployment as usage scales.

Takeaway: per-query energy is not one number; it’s a distribution. Architect for the median and the tail.
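
To make the median-versus-tail point concrete, here is a minimal Python sketch. It reuses the two figures quoted above (0.24 Wh and 33 Wh); the 97/3 traffic split and the `energy_profile` helper are assumptions for illustration, not measured data.

```python
import math
import statistics

def energy_profile(wh_per_request, tail_pct=99):
    """Summarize per-request energy samples (Wh): median, tail percentile, mean."""
    ordered = sorted(wh_per_request)
    # Nearest-rank percentile: the smallest sample with at least
    # tail_pct% of all values at or below it.
    rank = max(1, math.ceil(tail_pct * len(ordered) / 100))
    return {
        "median_wh": statistics.median(ordered),
        "tail_wh": ordered[rank - 1],
        "mean_wh": statistics.fmean(ordered),
    }

# Assumed mix: 97 lightweight prompts at 0.24 Wh, 3 long reasoning requests at 33 Wh.
sample = [0.24] * 97 + [33.0] * 3
profile = energy_profile(sample)

# Share of total energy consumed by the 3% of heavy requests.
tail_share = (3 * 33.0) / sum(sample)

print(profile)
print(f"heavy requests use {tail_share:.0%} of total energy")
```

Under these assumed numbers the median stays at 0.24 Wh, the mean is roughly five times higher, and the small share of heavy requests accounts for about four-fifths of total energy. That is why sizing for the median alone can badly underestimate real consumption.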

SLMs (≤10B) can run on modest servers and, for tiny variants, on edge devices. This cuts network round-trips (latency), limits data egress, and diversifies hosting options beyond a few hyperscalers—useful in settings like realtime voice or offline/spotty connectivity.

Quality beats uninformed quantity.

Phi-2 (2.7B) is a clean example: Microsoft attributes its outsize performance relative to model size to “textbook-quality” data curation and targeted scaling, reporting that on several complex benchmarks it matches or outperforms models up to ~25× larger (context-specific).

Earlier academic work showed smaller models with prompt-based fine-tuning can achieve GPT-3-level few-shot classification on SuperGLUE. The common thread is data discipline and task framing.

High performance at a fraction of the size

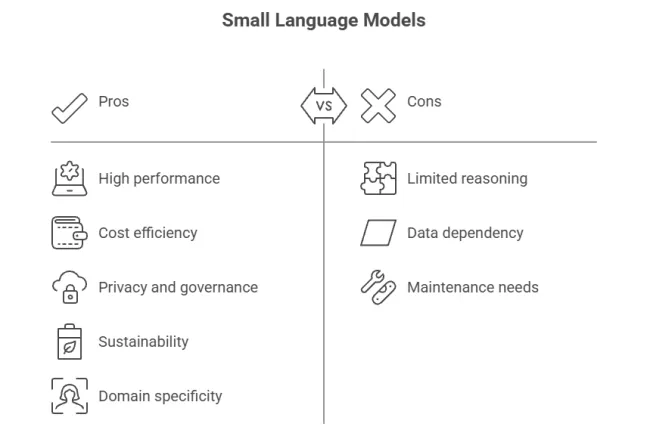

Modern SLMs close much of the task-bounded gap with careful curation, instruction tuning, and (increasingly) inference-time compute techniques. Microsoft’s Phi line documents sustained progress in the ≤10–14B range; the point is not that SLMs beat frontier models in open-ended reasoning, but that for scoped workloads they can be good enough with far lower footprint.

Smaller footprints cut serving costs (memory, bandwidth, accelerators) and make consumer-grade training/fine-tuning feasible for 7–8B-class models. This is where many teams realize 10× speed-of-iteration gains: you can fine-tune, ship, measure, and repeat without burning a hole in your budget.
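
A rough back-of-the-envelope calculation shows why 7–8B-class models fit on modest hardware. The sketch below counts weight storage only; the `weight_memory_gb` helper is hypothetical, and the estimate deliberately ignores the KV cache, activations, optimizer state, and quantization metadata, all of which add to the real total.

```python
# Bytes needed to store one parameter at common precisions.
BYTES_PER_PARAM = {"fp32": 4.0, "fp16": 2.0, "int8": 1.0, "int4": 0.5}

def weight_memory_gb(num_params, precision):
    """Approximate memory for model weights only, in GB (1 GB = 1e9 bytes)."""
    return num_params * BYTES_PER_PARAM[precision] / 1e9

for precision in ("fp16", "int8", "int4"):
    gb = weight_memory_gb(7e9, precision)
    print(f"7B weights at {precision}: {gb:.1f} GB")
```

At 16-bit precision a 7B model needs about 14 GB for weights, and about 3.5 GB at 4 bits, before runtime overhead. Full fine-tuning requires considerably more memory than inference, so treat these figures as a lower bound rather than a sizing guide.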

SLMs enable on-prem/walled-garden deployments, which simplifies governance for regulated data (education, health, finance) and limits data exposure to third-party APIs. Universities and public-sector IT leaders explicitly cite these benefits when choosing smaller, on-prem models.

Fewer active parameters typically means lower energy per token and less heat to move. That doesn’t make SLMs “free” but it does give you a lower baseline and more options (edge, duty-cycling, DVFS). The broader literature also shows how prompt length and generation strategy strongly affect energy; SLMs let you control both knobs more tightly.

LLMs are encyclopedic generalists; SLMs shine when context is narrow and stakes are high. Smaller models distilled/fine-tuned on proprietary corpora often reduce off-topic drift and latency while improving task relevance, especially behind guardrails and retrieval. Institutions report success running multiple small, purpose-built models rather than one monolith.

On broad, out-of-domain tasks (multi-hop QA, complex tool orchestration), frontier LLMs still lead. Even strong SLMs like Phi-2 rely on deliberate curation and, for some capabilities, inference-time scaling that narrows but doesn’t erase the gap.

Specialization multiplies artifacts: datasets, evals, safety policies, drift monitors. Without disciplined MLOps, you trade one big model for dozens of small ones and a governance headache. (Solution: a shared eval harness, versioned prompts/checkpoints, and automated regressions.)
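
One piece of that solution, the automated regression check, can be sketched in a few lines. This is an illustrative gate, not a full eval harness: the task names, scores, and the `find_regressions` helper are all hypothetical, and a real setup would load scores from versioned eval runs.

```python
def find_regressions(baseline, candidate, tolerance=0.01):
    """Return {task: (old, new)} where the candidate drops by more than `tolerance`.

    A task missing from the candidate counts as a regression (new score is None).
    """
    regressions = {}
    for task, old_score in baseline.items():
        new_score = candidate.get(task)
        if new_score is None or new_score < old_score - tolerance:
            regressions[task] = (old_score, new_score)
    return regressions

# Hypothetical accuracy scores for two checkpoints of one specialized model.
baseline = {"intent_classification": 0.91, "pii_redaction": 0.97, "summarization": 0.78}
candidate = {"intent_classification": 0.93, "pii_redaction": 0.94, "summarization": 0.78}

failed = find_regressions(baseline, candidate)
if failed:
    print("Blocking release, regressions found:", failed)
else:
    print("No regressions beyond tolerance.")
```

Running the same gate against every small model in the fleet keeps release criteria uniform, which is most of what makes dozens of models governable.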

Smaller training sets mean higher variance and greater sensitivity to skew. You still need diverse data, red-teaming, and post-training alignment even for tiny models.

SLMs reduce per-task energy, but aggregate usage can still climb (the Jevons paradox: cheaper use invites more use). Multiple sources suggest inference dominates lifecycle energy in real deployments; unless clean power scales and you cap unnecessary calls, efficiency gains can be overwhelmed by demand.
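
The rebound argument above reduces to simple arithmetic: total energy is per-request energy times request volume. The multipliers in this sketch are made up for illustration, and `total_energy_ratio` is a hypothetical helper.

```python
def total_energy_ratio(per_request_gain, demand_growth):
    """Ratio of new total energy to old total energy.

    per_request_gain: how many times less energy each request uses (5 means 5x less).
    demand_growth: how many times more requests are served.
    """
    return demand_growth / per_request_gain

# A 5x efficiency gain with 3x more traffic still lowers total energy.
print(total_energy_ratio(per_request_gain=5, demand_growth=3))

# The same gain with 8x more traffic raises total energy.
print(total_energy_ratio(per_request_gain=5, demand_growth=8))
```

The break-even point is where demand growth equals the efficiency gain; beyond it, total consumption rises even though each request is cheaper.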

A healthy stack composes models. Practical patterns: routers (SLM triages which requests escalate), pre/post-processors (SLM compresses/cleans prompts and checks outputs), and evaluators (SLM-based safety/quality filters). Architectures like mixture-of-experts exploit sparse activation to keep compute proportional to task. Surveys of SLM research outline these collaboration patterns in detail.
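
The router pattern is the easiest of these to sketch. The version below is a minimal illustration under stated assumptions: the small model is assumed to return a calibrated confidence score (how to obtain one is out of scope), and `Draft`, `route`, `toy_small`, and `toy_large` are hypothetical stand-ins rather than any library's API.

```python
from dataclasses import dataclass
from typing import Callable, Tuple

@dataclass
class Draft:
    text: str
    confidence: float  # assumed calibrated score in [0, 1]

def route(prompt: str,
          small_model: Callable[[str], Draft],
          large_model: Callable[[str], str],
          threshold: float = 0.7) -> Tuple[str, str]:
    """Answer with the small model when it is confident; otherwise escalate."""
    draft = small_model(prompt)
    if draft.confidence >= threshold:
        return "small", draft.text
    return "large", large_model(prompt)

# Stand-in models so the sketch runs without any inference backend.
def toy_small(prompt: str) -> Draft:
    # Pretend short prompts are easy and long ones are hard.
    confidence = 0.9 if len(prompt.split()) <= 8 else 0.3
    return Draft(text=f"[small] {prompt}", confidence=confidence)

def toy_large(prompt: str) -> str:
    return f"[large] {prompt}"

print(route("Reset my password", toy_small, toy_large))
print(route("Compare these three contracts clause by clause and flag every conflict",
            toy_small, toy_large))
```

The threshold is the main tuning knob: raising it sends more traffic to the large model and costs more, while lowering it saves compute at the risk of weaker answers on hard requests.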

The evidence to date suggests small language models are not a curiosity; they’re a first-class tool for responsible, accessible AI: good task-specific accuracy, better latency, tighter governance, and markedly lower baseline cost/energy. Use big models where coverage and open-ended reasoning are mission-critical; use small ones whenever the job allows.

That’s not settling; it’s strategy.