AI-Assisted NDT Data Analytics and Defect Characterization Platforms Market

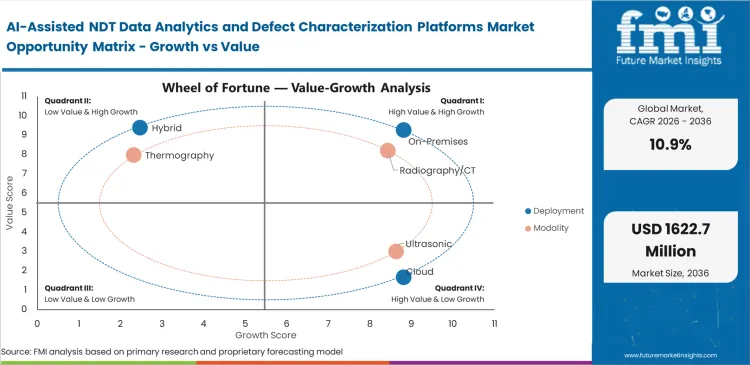

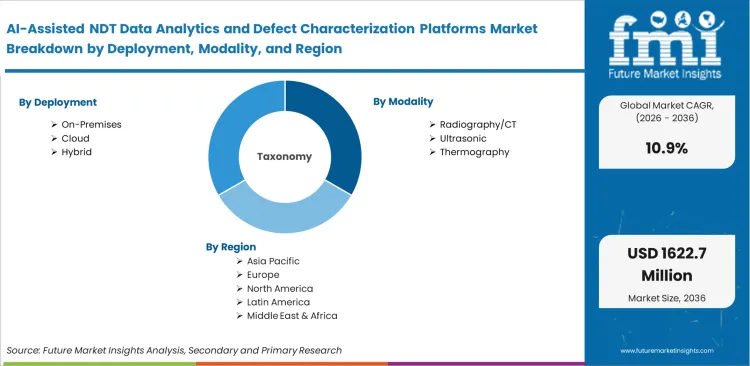

The AI-Assisted NDT Data Analytics and Defect Characterization Platforms market is segmented by Deployment (On-premises, Cloud, Hybrid), Modality (Radiography/CT, Ultrasonic, Eddy Current, Thermography, Visual), Function (Defect Detection, Defect Classification, Defect Sizing, Reporting, Workflow Management), Workflow Stage (Post-inspection, In-line, Near-line), End Use (Oil & Gas, Aerospace, Automotive, Power, Manufacturing), and Region. Forecast for 2026 to 2036.

Historical Data Covered: 2016 to 2024 | Base Year: 2025 | Estimated Year: 2026 | Forecast Period: 2027 to 2036

AI-Assisted NDT Data Analytics and Defect Characterization Platforms Market Size, Market Forecast and Outlook By FMI

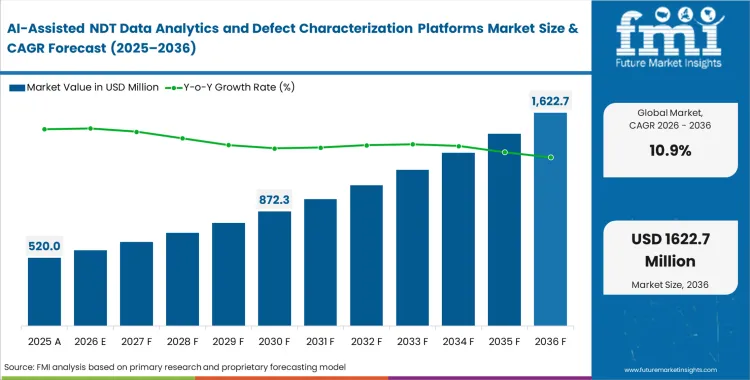

The AI-assisted NDT data analytics market crossed a valuation of USD 470 million in 2025. The industry is expected to reach USD 520 million in 2026 and then expand at a CAGR of 10.9% from 2026 to 2036. That growth carries the market valuation to USD 1,460 million by 2036 as asset owners integrate automated defect-recognition capabilities into inspection workflows to reduce interpretive variability in safety-critical assessments.
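The headline figures can be cross-checked with the standard compound-growth formula. The sketch below is only an arithmetic check on the numbers quoted above (USD 520 million in 2026, USD 1,460 million in 2036, 10.9% CAGR); the function names are illustrative and not part of any FMI model.

```python
def project(value, rate, years):
    """Compound `value` forward at `rate` per year for `years` years."""
    return value * (1 + rate) ** years


def implied_cagr(start, end, years):
    """Constant annual growth rate that takes `start` to `end` in `years` years."""
    return (end / start) ** (1 / years) - 1


# Figures quoted in the text, in USD million.
base_2026 = 520.0
end_2036 = 1460.0
years = 2036 - 2026  # ten compounding periods

projected_2036 = project(base_2026, 0.109, years)   # 520 compounded at 10.9%
cagr = implied_cagr(base_2026, end_2036, years)     # rate implied by 520 -> 1,460
incremental = end_2036 - base_2026                  # incremental opportunity

print(f"Projected 2036 value: USD {projected_2036:.0f} million")
print(f"Implied CAGR: {cagr * 100:.1f}%")
print(f"Incremental opportunity: USD {incremental:.0f} million")
```

Compounding from the 2026 estimate rather than the 2025 base value is what makes the stated CAGR, the 2036 value, and the USD 940 million incremental opportunity line up to within rounding.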

Aerospace and energy operators face mounting inspection workloads that outstrip manual review capacity. Image queues slow final assembly and extend return‑to‑service timelines, prompting teams to embed analytics software directly into the non-destructive testing workflow. Delays tied to manual interpretation raise daily downtime costs, strengthening the case for platforms that process high‑volume, multidimensional image sets with minimal lag. User priorities consistently revolve around whether the software can integrate with aging inspection hardware fleets, since most facilities operate mixed‑generation equipment. Vendors offering hardware‑agnostic data ingestion gain a clear competitive position, as scalable non-destructive testing equipment deployments depend on seamless interoperability rather than marginal differences in algorithm design.

Summary of AI-Assisted NDT Data Analytics and Defect Characterization Platforms Market

- Market Snapshot

- The AI-Assisted NDT Data Analytics and Defect Characterization Platforms Market is valued at USD 470 million in 2025 and is projected to reach USD 1,460 million by 2036.

- The industry is expected to expand at a 10.9% CAGR from 2026 to 2036, creating an incremental opportunity of USD 940 million over the period.

- This remains a software-led industrial inspection niche where competition depends on defect-recognition accuracy, cross-modality compatibility, validation traceability, and integration with existing NDT workflows.

- Demand is strongest in inspection settings where teams must process large image volumes, reduce interpretation variability, and document defect characterization more consistently for audit-sensitive industries.

- Demand and Growth Drivers

- Demand is rising because AI-assisted interpretation helps automate high-volume NDT data review and supports faster, more consistent defect recognition than purely manual analysis in radiography, ultrasonics, and CT-heavy workflows.

- Adoption is strengthening in CT-centric inspection because suppliers now offer AI-based segmentation, reconstruction, and defect-analysis functions that reduce operator burden and improve interpretation of volumetric datasets.

- Growth is also supported by zero-defect manufacturing programs and digitally enhanced inspection initiatives across Europe and other industrial regions.

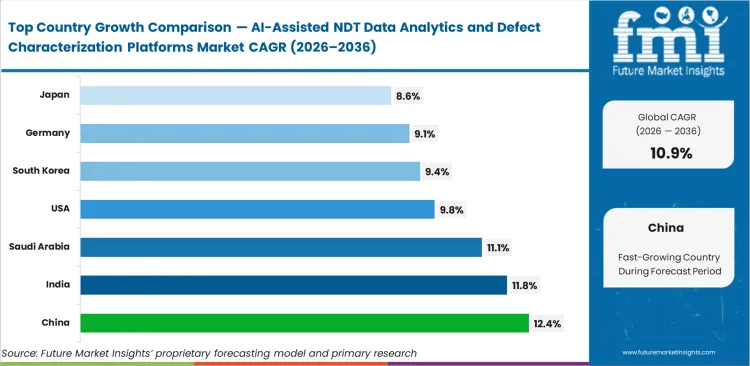

- Among key countries, China is anticipated to grow at 12.4% CAGR, followed by India at 11.8%, Saudi Arabia at 11.1%, the United States at 9.8%, South Korea at 9.4%, Germany at 9.1%, and Japan at 8.6%.

- Expansion is moderated by training-data scarcity, qualification burdens in safety-critical sectors, and the need to validate AI outputs against accepted NDT procedures and code requirements.

- Product and Segment View

- The market covers software platforms for AI-assisted interpretation, defect characterization, analytics, reporting, and inspection workflow management across radiography/CT, ultrasonic, eddy-current, thermography, and visual-testing data.

- These platforms are used across oil and gas, aerospace, automotive, power generation, and broader discrete manufacturing where digital inspection records carry operational importance.

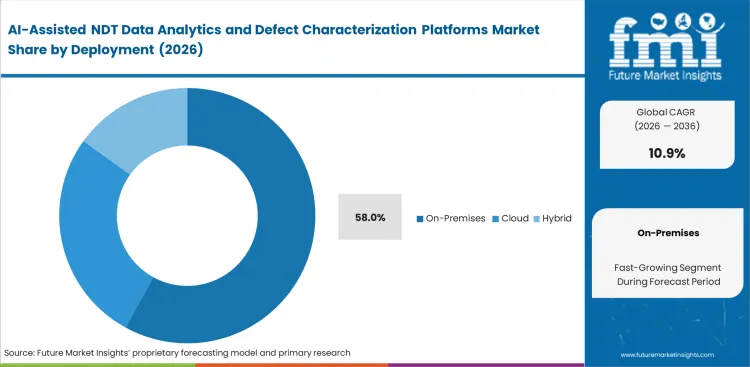

- On-premises is expected to account for 58% share in 2026, reflecting security, latency, and data-governance preferences at industrial sites handling sensitive inspection files.

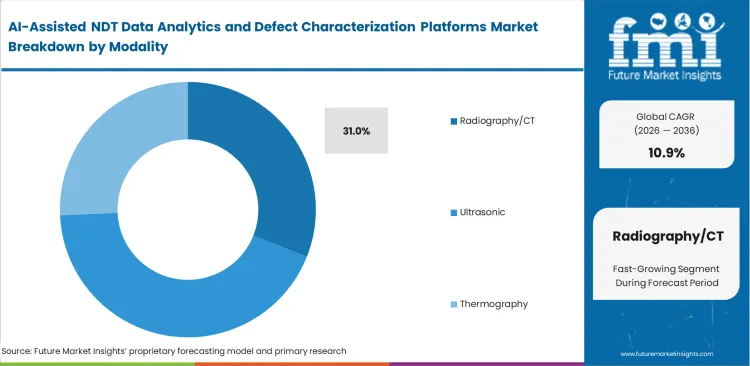

- Radiography/CT is projected to represent 31% share in 2026, supported by image-rich datasets that benefit most from AI-assisted recognition and characterization workflows.

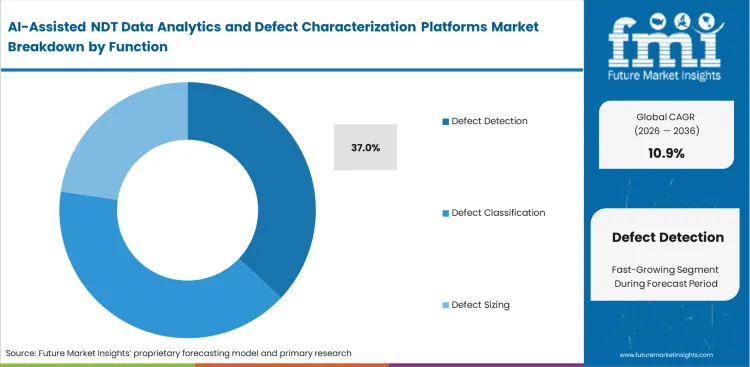

- Defect Detection is forecast to command 37% share in 2026, as buyers usually automate first-pass finding before scaling classification and sizing workflows.

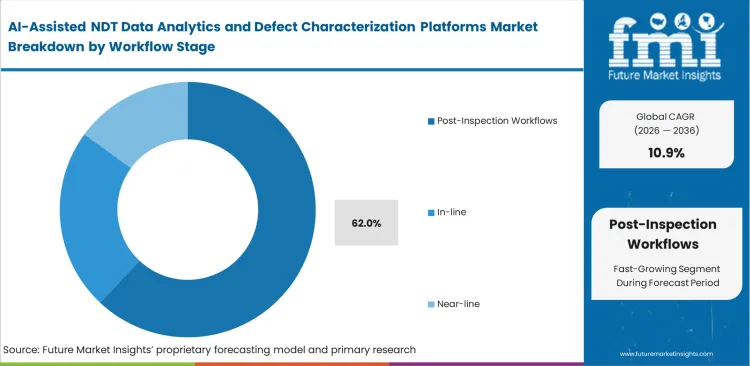

- Post-inspection is likely to account for 62% share in 2026 because most industrial users still layer AI analytics onto existing review routines before moving toward full in-line autonomy.

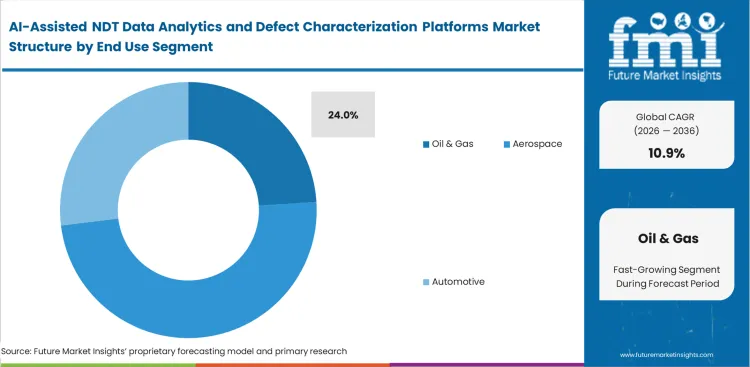

- Oil & Gas is estimated to contribute 24% share in 2026, supported by the need to interpret corrosion, weld, and integrity data across complex asset bases.

- Scope includes commercial licenses, analytics modules, ADR software, CT-analysis software, and workflow tools, but excludes core scanners, probes, detectors, consumables, and pure inspection labor.

- Geography and Competitive Outlook

- China, India, and Saudi Arabia are the fastest-rising markets, while the United States remains the most stable high-value adoption base for enterprise-grade inspection software.

- Competition is being shaped by AI-assisted feature additions to existing NDT software stacks, CT-analysis upgrades, inspection workflow integration, and closer ties between platform vendors and installed hardware ecosystems.

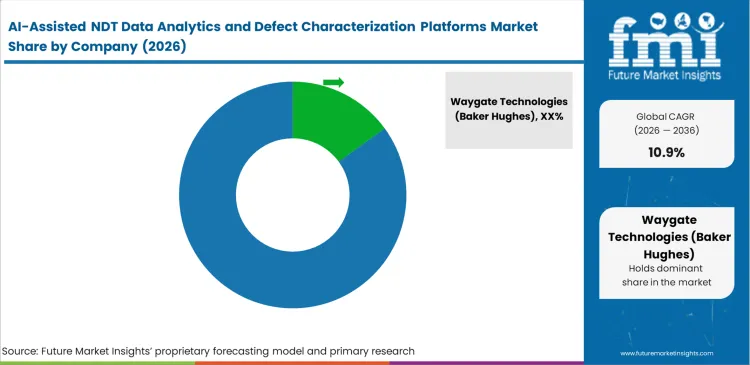

- Key participants include Waygate Technologies, Hexagon VG software, Comet Yxlon, Zetec, MISTRAS Group, and VisiConsult.

- The market is moderately concentrated, with the leading vendor estimated at 15% share, because buyers often prefer vendors already embedded in inspection hardware or established NDT data environments.
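The 2026 segment shares listed in this summary can be converted into implied segment values by multiplying each share by the USD 520 million market estimate for 2026. The sketch below does only that arithmetic. It assumes each share applies to the full 2026 market value, and the resulting figures are derived here for illustration rather than quoted from the report.

```python
# 2026 market estimate from the text, in USD million.
MARKET_2026_USD_M = 520.0

# Leading-segment shares for 2026 as quoted in the summary.
leading_shares = {
    "Deployment: On-premises": 0.58,
    "Modality: Radiography/CT": 0.31,
    "Function: Defect Detection": 0.37,
    "Workflow Stage: Post-inspection": 0.62,
    "End Use: Oil & Gas": 0.24,
}

# Implied value of each leading segment (assumes the share applies to the
# whole 2026 market estimate).
implied_values = {
    segment: MARKET_2026_USD_M * share
    for segment, share in leading_shares.items()
}

for segment, value in implied_values.items():
    print(f"{segment}: about USD {value:.1f} million")
```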

Adoption patterns shift once major manufacturers certify a neural-network architecture for safety-critical components. Supplier networks then move to align with the approved analytical standard, as aerospace primes incorporate automated defect-recognition expectations directly into quality manuals. Passing this qualification step repositions the market: buyers transition from isolated algorithm trials to enterprise‑level rollouts across global supply chains.

China is anticipated to expand at 12.4% as industrial digitalization initiatives strengthen quality-control expectations across heavy manufacturing and create wider integration of AI-enabled non-destructive testing analytics. India is anticipated to expand at 11.8%, supported by rapid scale‑up in electronics and automotive production that depends on high-throughput defect‑characterization workflows. Saudi Arabia is anticipated to expand at 11.1% as energy operators modernize asset-integrity programs and embed analytics‑led inspection practices into routine field operations. The United States is anticipated to expand at 9.8%, reflecting incremental upgrades across a mature installed base rather than first‑time digitization. South Korea is anticipated to expand at 9.4% as advanced manufacturing segments apply machine‑learning models to established inspection processes. Germany is anticipated to expand at 9.1%, with demand concentrated in precision‑engineering applications requiring multimodal data integration. Japan is anticipated to expand at 8.6%, driven by growing reliance on predictive models for long‑serviced industrial assets. Regional divergence aligns with whether markets are building new AI‑native inspection lines or retrofitting legacy non-destructive testing frameworks with algorithmic overlays.

Segmental Analysis

AI-Assisted NDT Data Analytics and Defect Characterization Platforms Market Analysis by Deployment

Data sovereignty requirements shape infrastructure choices across heavy industrial inspection environments because facility operators handling sensitive component data keep strict control over where inspection files can reside and who can access them. Defense contractors and nuclear operators rarely permit high-resolution schematics or scan outputs to move outside internal networks, which keeps deployment decisions tied closely to security policy rather than software preference. Security and compliance teams treat that restriction as a standing operating condition, so platform selection often begins with infrastructure limits already in place. The on-premises NDT analytics software architecture is therefore expected to hold 58.0% share in 2026. That preference shifts more of the capital burden to the buyer, since companies evaluating enterprise NDT analytics platforms must also invest in local computing capacity, secure storage, and isolated server environments as part of implementation. Review of inspection-service requirements often makes those costs more visible, especially when software pricing is assessed alongside long-term infrastructure support. On-premises leadership does not persist because cloud architecture is technically inferior, but because compliance rules for critical asset data make external routing difficult to approve regardless of software capability. Vendors pushing cloud-first models into these environments often face resistance at the approval stage, where cybersecurity and compliance teams can block adoption before the engineering case is even reviewed.

- Security validation: Localized processing ensures proprietary component geometries never touch external networks. Facility security directors maintain complete chain-of-custody control over critical infrastructure data.

- Latency elimination: Direct hardware connections bypass internet bandwidth constraints during massive volumetric data transfers. Quality control technicians avoid processing delays that would otherwise stall active production lines.

- Version persistence: Isolated deployments prevent unwanted algorithm updates from altering validated inspection parameters. Compliance managers avoid mandatory software re-qualification cycles that cloud providers often force through automated patching.

AI-Assisted NDT Data Analytics and Defect Characterization Platforms Market Analysis by Modality

The volume and complexity of volumetric data now exceed what manual interpretation can handle across high-value component manufacturing. Radiography/CT is projected to capture 31.0% share in 2026, as the sheer density of voxel data produced by modern scanning hardware mandates algorithmic assistance for timely review. FMI observes that NDT Level III technicians rely on this modality to uncover internal porosities in additively manufactured parts, deploying industrial CT defect analysis software where traditional surface methods fail. Integrating non-destructive testing equipment into these workflows requires software capable of handling terabytes of data per shift. What the modality share obscures is that CT defect analysis requires very different neural network architectures than standard 2D radiography defect recognition, creating a substantial technical moat for specialized software vendors. Without automated recognition in CT pipelines, technicians can spend hours reviewing a single complex part, eroding the throughput advantage gained from advanced manufacturing.

- Voxel interpretation: Deep learning models evaluate true three-dimensional geometry rather than two-dimensional projections. Using CT porosity analysis software, NDT technicians identify hidden internal flaws that overlap and mask each other in standard radiographic views.

- Density mapping: Algorithms highlight subtle material density gradients indicative of incomplete fusion in 3D printed components. Metallurgists prevent failures in critical aerospace brackets by flagging microscopic void clusters using targeted ADR software for radiography inspection.

- Artifact suppression: Software filters actively remove beam hardening and scatter noise from the digital reconstruction. Radiographers avoid false positive classifications caused by complex part geometries scattering the X-ray beam.

AI-Assisted NDT Data Analytics and Defect Characterization Platforms Market Analysis by Function

Identifying anomalies is the first requirement before any system can reliably move into sizing or classification. Facilities shifting from fully manual inspection usually begin with defect detection because it supports the inspection process without removing operator control. Quality assurance managers use AI-based nondestructive testing tools to flag areas of interest while leaving the final judgment to certified inspectors, which makes adoption easier inside regulated or skill-sensitive environments. Similar phased buying behavior appears in electromagnetic testing analytics, where buyers want proof that baseline detection works consistently before they expand into more advanced functions. Plants rarely commit to full ultrasonic defect classification software until the detection layer shows that it can identify critical flaws under live production conditions with acceptable consistency. Defect Detection is projected to account for 37.0% share in 2026. Operations leaders trying to move directly into automated sizing often face resistance from inspection teams, especially where workforce acceptance and process accountability still depend on human verification.

- Anomaly flagging: Rapid bounding box generation directs human attention to specific regions of massive data sets. Inspection technicians dramatically reduce total review time by focusing only on algorithmically highlighted zones.

- Threshold calibration: Software sensitivity adjustments allow facilities to tune the false positive rate against production speed. Quality managers balance the cost of unnecessary secondary reviews against the risk of escaping defects.

- Baseline comparison: Algorithmic overlays compare current scans against pristine digital master models. Manufacturing engineers instantly detect tooling wear or process drift before defects exceed acceptable tolerances.
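The threshold-calibration trade-off described above can be illustrated with a small cost model. This is a minimal sketch, assuming a hypothetical validation set of scored indications with inspector-confirmed ground truth and hypothetical per-event costs. It is not how any particular platform tunes sensitivity.

```python
from dataclasses import dataclass


@dataclass
class Indication:
    score: float     # model confidence that the region is a defect (0 to 1)
    is_defect: bool  # ground truth confirmed by a certified inspector


def confusion_at(indications, threshold):
    """Count false positives and false negatives at a given score threshold."""
    fp = sum(1 for i in indications if i.score >= threshold and not i.is_defect)
    fn = sum(1 for i in indications if i.score < threshold and i.is_defect)
    return fp, fn


def pick_threshold(indications, review_cost, escape_cost, candidates):
    """Return the candidate threshold with the lowest total expected cost.

    review_cost: cost of one unnecessary secondary review (false positive)
    escape_cost: cost of one missed defect (false negative)
    """
    def total_cost(threshold):
        fp, fn = confusion_at(indications, threshold)
        return fp * review_cost + fn * escape_cost

    return min(candidates, key=total_cost)


# Hypothetical validation data and candidate thresholds.
validation = [
    Indication(0.95, True),
    Indication(0.80, True),
    Indication(0.55, True),
    Indication(0.60, False),
    Indication(0.30, False),
    Indication(0.10, False),
]
candidates = [0.2, 0.5, 0.7, 0.9]

# Missed defects far more costly than extra reviews: a lower threshold wins.
safety_first = pick_threshold(validation, review_cost=1, escape_cost=50,
                              candidates=candidates)
# Extra reviews more costly than misses: a higher threshold wins.
throughput_first = pick_threshold(validation, review_cost=10, escape_cost=5,
                                  candidates=candidates)
print(safety_first, throughput_first)
```

In safety-critical settings the escape cost would normally dominate, which is why the sketch treats the two costs as explicit inputs rather than fixing a single "best" threshold.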

AI-Assisted NDT Data Analytics and Defect Characterization Platforms Market Analysis by Workflow Stage

Legacy production lines physically separate the inspection process from active manufacturing flow. Post-inspection workflows are anticipated to record 62.0% share in 2026, aligning with how heavy industries currently batch process components through centralized quality laboratories. Facility managers utilize this stage to aggregate data from multiple industrial radiography stations into a single inspection data workflow software queue. The practitioner reality is that post-inspection dominance reflects a limitation in factory floor networking infrastructure rather than a preference for delayed analysis; edge devices simply cannot process complex neural networks fast enough to sit directly on the active line. Plants relying entirely on post-inspection analytics inevitably discover defect trends hours after a bad batch has been produced, resulting in massive scrap costs.

- Batch ingestion: Centralized servers process gigabytes of aggregated inspection data during off-shift hours. IT directors maximize compute hardware utilization by scheduling heavy analytical workloads outside peak production times.

- Comprehensive review: Analysts synthesize multimodal data sets gathered from different inspection stations over several days. Lead quality engineers build complex failure mode profiles that isolated in-line sensors cannot detect.

- Archive alignment: Post-processing software formats and tags analytical results for long-term database storage. Compliance auditors retrieve historical algorithm decisions instantly during regulatory investigations.

AI-Assisted NDT Data Analytics and Defect Characterization Platforms Market Analysis by End Use

Extreme operating conditions and the cost of failure shape inspection priorities in the energy sector. Oil and gas operators use inspection analytics platforms to interpret large volumes of pipeline corrosion data collected through automated crawler systems, phased array systems, and digital radiography workflows. Asset integrity teams rely on these platforms to estimate remaining service life by tracking crack development and material degradation through phased array analysis and digital radiography outputs. Oil & Gas is expected to account for 24.0% share in 2026. That share also reflects a change in buying intent, since major operators are no longer investing in software only to identify defects, but to support decisions on extending the life of aging assets beyond original design assumptions where conditions permit. Algorithms are increasingly used to model stress progression over time, giving engineering teams a stronger basis for repair, replacement, or continued operation decisions. Companies that delay this shift often fall back on conservative manual assessments, which can lead to earlier equipment replacement than the asset condition actually warrants.

- Traceability mandates: Aerospace primes demand immutable digital records of every algorithmic decision. Compliance managers utilize aerospace NDT analytics software to automatically generate audit-ready logs that satisfy strict Federal Aviation Administration requirements.

- High-throughput verification: Electric vehicle production lines generate thousands of welds per shift. Plant managers deploy battery CT defect analysis software to evaluate internal anode alignment instantly without stalling the active assembly line.

- Porosity tolerance: Foundries produce massive metal components with acceptable micro-void limits. Lead engineers utilize casting defect characterization software to mathematically prove a flaw remains within safe tolerances rather than immediately scrapping the part.
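One common way to make a log of algorithmic decisions tamper-evident is to chain each record to the hash of the previous one. The sketch below illustrates that idea for the traceability point above. Field names such as `part_id` and `model_version` are hypothetical, and the example does not describe any vendor's implementation or what any regulator requires.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first record


def _digest(prev_hash, payload):
    """SHA-256 over the previous hash plus a canonical JSON form of the payload."""
    body = json.dumps(payload, sort_keys=True).encode("utf-8")
    return hashlib.sha256(prev_hash.encode("ascii") + body).hexdigest()


def append_record(log, payload):
    """Append a decision record that is chained to the previous record's hash."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    entry = {
        "payload": payload,
        "prev_hash": prev_hash,
        "hash": _digest(prev_hash, payload),
    }
    log.append(entry)
    return entry


def verify(log):
    """Return True only if every record still matches its stored hash chain."""
    prev_hash = GENESIS
    for entry in log:
        if entry["prev_hash"] != prev_hash:
            return False
        if entry["hash"] != _digest(prev_hash, entry["payload"]):
            return False
        prev_hash = entry["hash"]
    return True


# Hypothetical decision records.
audit_log = []
append_record(audit_log, {"part_id": "BRKT-001", "model_version": "1.4.2",
                          "decision": "reject", "score": 0.93})
append_record(audit_log, {"part_id": "BRKT-002", "model_version": "1.4.2",
                          "decision": "accept", "score": 0.04})
print(verify(audit_log))
```

A hash chain makes later edits detectable rather than impossible, so a real deployment would still need access control and secure storage around it.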

AI-Assisted NDT Data Analytics and Defect Characterization Platforms Market Drivers, Restraints, and Opportunities

Severe inspection bottlenecks created by advanced manufacturing throughput force quality directors to act immediately. Producing complex additively manufactured parts takes hours, but manually reviewing the resulting terabytes of CT scan data can take days, completely negating the speed advantage of modern production. Facility managers cannot physically hire enough certified Level III technicians to process this data volume manually. Delaying the implementation of machine vision and analytical overlays results in finished components sitting in quarantine, stalling revenue realization and severely disrupting downstream assembly schedules.

The requirement for vast, proprietary training datasets creates friction for new algorithmic deployments. Machine learning models require thousands of images of specific, labeled defects to achieve acceptable accuracy. Facilities rarely possess well-organized, historically annotated digital archives of flawed components. Vendors attempt to bridge this gap with synthetic data generation, but regulatory bodies evaluating AI validation for NDT software remain highly skeptical of algorithms trained on simulated defects, forcing buyers into lengthy, expensive internal data collection phases before software can be fully utilized.

Opportunities in the AI-Assisted NDT Data Analytics and Defect Characterization Platforms Market

- Synthetic defect generation: Software developers build physics-based simulation engines to train AI models on rare failure modes. Algorithm engineers bypass the need for physical flawed samples by generating mathematically accurate industrial machine vision training data.

- Edge-native models: Hardware manufacturers compress complex neural networks to run directly on battery-powered handheld inspection devices. Field technicians receive instant algorithmic feedback while hanging from rope access points on offshore platforms.

- Multi-modal synthesis: Analytics platforms fuse radiographic, ultrasonic, and thermographic data into a single unified defect profile. Quality directors eliminate diagnostic uncertainty by cross-referencing anomalies across completely different physical phenomena.
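The cross-referencing step in multi-modal synthesis can be sketched as a proximity match between indications from different inspection methods. The example below assumes all indications have already been registered into a shared part coordinate frame in millimetres, which is itself a substantial task. The class, function, and parameter names are hypothetical.

```python
from dataclasses import dataclass
from math import dist


@dataclass(frozen=True)
class Indication:
    modality: str    # e.g. "radiography", "ultrasonic", "thermography"
    position: tuple  # (x, y, z) in mm, in a shared part coordinate frame


def corroborated(indications, radius_mm=2.0, min_modalities=2):
    """Return indications confirmed by at least `min_modalities` methods.

    Two indications are treated as the same anomaly when they lie within
    `radius_mm` of each other (an assumed matching tolerance).
    """
    results = []
    for anchor in indications:
        nearby = {
            other.modality
            for other in indications
            if dist(anchor.position, other.position) <= radius_mm
        }
        if len(nearby) >= min_modalities:
            results.append((anchor, sorted(nearby)))
    return results


# Hypothetical indications from three inspection stations.
findings = [
    Indication("radiography", (10.0, 10.0, 5.0)),
    Indication("ultrasonic", (10.5, 10.2, 5.1)),
    Indication("thermography", (40.0, 40.0, 0.0)),
]

matches = corroborated(findings)
for indication, modalities in matches:
    print(indication.modality, indication.position, modalities)
```

Here the radiographic and ultrasonic indications corroborate each other, while the isolated thermographic indication would be left for separate review.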

Regional Analysis

Based on regional analysis, the AI-Assisted NDT Data Analytics and Defect Characterization Platforms market is segmented into Asia Pacific, North America, and Europe & Middle East across more than 40 countries.


| Country | CAGR (2026 to 2036) |
|---|---|
| China | 12.4% |
| India | 11.8% |
| Saudi Arabia | 11.1% |
| United States | 9.8% |
| South Korea | 9.4% |
| Germany | 9.1% |
| Japan | 8.6% |

Asia Pacific AI-Assisted NDT Data Analytics and Defect Characterization Platforms Market Analysis

Greenfield manufacturing expansion allows facilities in this region to embed analytical software directly into new production architecture rather than fighting legacy integration issues. Operations directors design factory data networks specifically to handle the massive bandwidth requirements of real-time inspection analytics. FMI's analysis indicates this clean-slate approach eliminates the primary friction point plaguing older industrial bases: getting the data out of isolated, proprietary hardware silos. Connecting these new data streams to predictive maintenance frameworks accelerates total plant efficiency.

- China: High-volume electronics and automotive manufacturing mandates sub-second automated defect recognition to maintain production speeds. The AI-assisted NDT data analytics platform industry in China is poised to expand at a CAGR of 12.4% through 2036. Quality control directors actively integrate cloud-based analytics to meet these rigorous throughput demands. Plants achieving this integration secure lucrative export contracts by guaranteeing flawless digital traceability to Western buyers.

- India: Massive infrastructure projects and localized heavy manufacturing require rapid inspection modernization. Facility managers deploy software overlays to amplify the output of a limited certified workforce. Driven by these operational bottlenecks, AI-assisted NDT data analytics adoption in India is likely to advance at an 11.8% CAGR through 2036. This adoption helps bridge the severe gap between ambitious production targets and quality assurance capacity.

- South Korea: South Korea is projected to witness a 9.4% CAGR in the AI-assisted NDT data analytics industry through 2036. Advanced semiconductor and battery manufacturing sectors rely heavily on microscopic anomaly detection via automated imaging to maintain yield rates. Production engineers continually tune algorithmic sensitivity limits to balance throughput with precision, embedding these platforms deeply into the core architecture of local advanced manufacturing facilities.

- Japan: Aging critical infrastructure demands sophisticated degradation modeling rather than simple defect detection. Asset integrity managers increasingly utilize predictive analytics to safely extend facility lifespans past original design specifications. Japan is forecast to record steady growth in AI-assisted NDT data analytics demand at a CAGR of 8.6% through 2036. These algorithmic overlays prevent premature asset replacement while satisfying strict national safety regulations.

FMI's report includes detailed analysis of emerging industrial hubs across Southeast Asia that are rapidly transitioning from manual sampling to automated 100% inspection mandates.

North America AI-Assisted NDT Data Analytics and Defect Characterization Platforms Market Analysis

Stringent aerospace and defense qualification standards dictate the pace and structure of algorithmic adoption across this territory. Compliance managers must prove that software overlays perform equivalently or better than certified human inspectors across thousands of controlled test cases before deployment. This rigorous validation environment slows initial deployment but creates an unshakable, high-value foundation once regulatory approval is achieved. Integrating AI-driven predictive maintenance protocols alongside these platforms becomes the standard operational procedure.

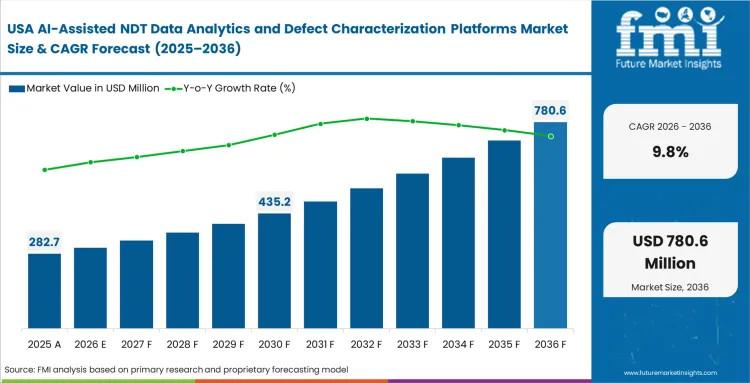

- United States: Deeply entrenched legacy inspection hardware fleets require sophisticated, vendor-agnostic data ingestion tools to function effectively. Sales of AI-assisted NDT data analytics software in the United States are expected to increase at a 9.8% CAGR during the forecast period. NDT department heads prioritize software backwards compatibility over absolute theoretical accuracy. The trajectory points toward massive consolidation of siloed plant data into centralized corporate dashboards.

FMI's report includes Canadian resource extraction sectors utilizing specialized analytics for remote pipeline integrity management.

Europe & Middle East AI-Assisted NDT Data Analytics and Defect Characterization Platforms Market Analysis

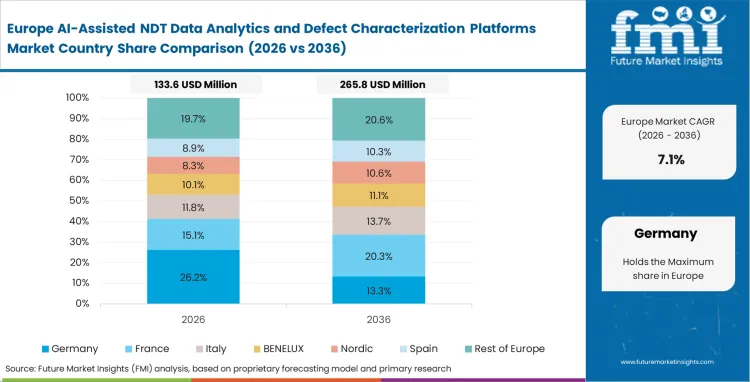

Extreme focus on asset lifespan extension and environmental risk mitigation shapes the analytical requirements in these interconnected zones. Integrity managers utilize deep learning models not just to find flaws, but to prove to environmental regulators that micro-fractures will not propagate into leaks. Based on FMI's assessment, the regulatory environment actively incentivizes operators to deploy advanced analytics by offering reduced insurance premiums and extended operational licenses for facilities demonstrating automated monitoring capabilities.

- Saudi Arabia: Massive downstream petrochemical facilities require constant automated monitoring of high-pressure components to prevent failures. Maintenance directors actively implement algorithmic corrosion mapping to optimize shutdown schedules. Saudi Arabia is set to record an 11.1% CAGR in AI-assisted NDT data analytics demand during the assessment period. This deployment shifts regional maintenance strategies from reactive responses to predictive, data-driven asset management.

- Germany: The AI-assisted NDT data analytics sector in Germany is expected to register a 9.1% CAGR through 2036. Precision automotive and industrial engineering segments demand multimodal data fusion to evaluate complex composite materials. Quality engineers deploy customized neural networks to handle these rigorous requirements. German manufacturers who master this digital quality integration subsequently dominate European supply chains by offering unparalleled defect traceability.

FMI's report includes analysis of Nordic manufacturing sectors focusing heavily on automated reporting and workflow digitization.

Competitive Aligners for Market Players

Software developers in this space compete on data ingestion capabilities rather than raw neural network accuracy. Waygate Technologies dominates early evaluations because its analytics platforms natively read the proprietary file formats generated by its large global hardware installed base. Hexagon VG software commands significant power in the volumetric space because its rendering engine handles massive CT datasets without crashing standard workstation hardware. Buyers drafting an NDT AI software RFQ actively dismiss standalone algorithms that require complex intermediate data conversion steps or external networking.

Incumbent equipment manufacturers possess an advantage: they control the source data generation. Comet Yxlon and MISTRAS Group leverage their hardware footprint to capture petabytes of annotated inspection data, which they use to train vastly superior foundational models. Pure-play NDT software vendors must scrape together public datasets or beg pilot customers for access to real-world flawed components. Quality and compliance management documentation built over decades gives incumbents an enormous head start in regulatory validation, a barrier that prevents rapid market entry by generic tech startups.

Large aerospace and energy buyers actively resist this hardware-software lock-in by mandating open data architecture in their acquisition contracts. Operations directors refuse to purchase new inspection machines unless the vendor guarantees the raw data can be exported in standardized formats like DICONDE. Zetec and VisiConsult navigate this tension by explicitly marketing their platforms as hardware-agnostic, appealing directly to acquisition managers who need an objective way to compare NDT analytics platforms. The outcome hinges on whether independent vendors can build enough utility into traceable defect reporting software to overcome the seamless integration offered by combined hardware-software giants.

Key Players in AI-Assisted NDT Data Analytics and Defect Characterization Platforms Market

- Waygate Technologies (Baker Hughes)

- Hexagon VG software

- Comet Yxlon

- Zetec

- MISTRAS Group

- VisiConsult

Scope of the Report

| Metric | Value |
|---|---|
| Quantitative Units | USD 520.0 million to USD 1,460.0 million, at a CAGR of 10.90% |
| Market Definition | Algorithmic software infrastructure designed specifically to ingest, process, and interpret non-destructive testing data to identify, classify, and size material anomalies without human intervention. |
| Segmentation | Deployment, Modality, Function, Workflow Stage, End Use, and Region |
| Regions Covered | North America, Latin America, Europe, East Asia, South Asia & Pacific, Middle East & Africa |
| Countries Covered | China, India, Saudi Arabia, United States, South Korea, Germany, Japan |
| Key Companies Profiled | Waygate Technologies (Baker Hughes), Hexagon VG software, Comet Yxlon, Zetec, MISTRAS Group, VisiConsult |
| Forecast Period | 2026 to 2036 |
| Approach | Annual enterprise software licensing renewals and compute consumption metrics specifically tied to industrial inspection workflows. |

Source: Future Market Insights (FMI) analysis, based on proprietary forecasting model and primary research

AI-Assisted NDT Data Analytics and Defect Characterization Platforms Market Analysis by Segments

Deployment

- On-premises

- Cloud

- Hybrid

Modality

- Radiography/CT

- Ultrasonic

- Eddy Current

- Thermography

- Visual

Function

- Defect Detection

- Defect Classification

- Defect Sizing

- Reporting

- Workflow Management

Workflow Stage

- Post-inspection

- In-line

- Near-line

End Use

- Oil & Gas

- Aerospace

- Automotive

- Power

- Manufacturing

Region

- North America
  - United States
  - Canada

- Europe
  - Germany
  - United Kingdom
  - France
  - Italy
  - Spain

- Asia Pacific
  - China
  - Japan
  - South Korea
  - Taiwan
  - Singapore

- Latin America
  - Brazil
  - Mexico
  - Argentina

- Middle East & Africa
  - GCC Countries
  - South Africa


This Report Addresses

- Identification of specific regulatory thresholds forcing algorithmic overlays into aerospace supplier quality manuals.

- Analysis of data sovereignty requirements driving persistent on-premises deployment dominance in defense and nuclear facilities.

- Assessment of volumetric data complexities that mandate automated interpretation for industrial computed tomography workflows.

- Evaluation of the structural friction caused by legacy proprietary file formats hindering agnostic software integration.

- Examination of the shift from basic defect detection to predictive remaining-useful-life calculations in energy asset integrity programs.

- Breakdown of the exact hardware computing constraints preventing edge-native neural network deployment on active production lines.

- Profiling of key incumbent equipment manufacturers leveraging their massive annotated data archives against pure-play software challengers.

- Mapping of the commercial consequences for tier-two suppliers who fail to align with tier-one automated recognition standards.

Frequently Asked Questions

What are AI-assisted NDT data analytics platforms?

These platforms are specialized software systems that automate the interpretation of complex non-destructive evaluation data. They replace manual technician analysis with machine learning models that rapidly ingest industrial scan files to detect, classify, and size material defects without human intervention.

How does AI improve defect detection in NDT?

Deep learning models evaluate complex part geometries and discount the beam-hardening artifacts and scatter noise that can mislead human reviewers. Software overlays locate microscopic internal porosity quickly, clearing inspection bottlenecks and sharply reducing false-negative rates in high-throughput manufacturing environments.

What is the difference between defect detection and defect characterization?

Detection algorithms simply draw bounding boxes around anomalies, leaving a human technician to verify the flaw. Defect characterization software categorizes the failure mechanism and outputs a deterministic sizing result against compliance criteria, allowing engineers to accept or scrap a part automatically.

Which NDT modalities benefit most from AI analytics?

Volumetric techniques like industrial computed tomography and advanced ultrasonics benefit heavily because they generate massive, dense data sets. The sheer volume of voxel and phase data produced by modern hardware makes algorithmic assistance mandatory for timely review.

Why do CT and radiography workflows adopt AI faster?

Modern industrial CT scanning produces terabytes of raw data per shift that takes hours to analyze manually. Quality directors prioritize these workflows for AI integration because the automated analysis eliminates the primary bottleneck stalling their advanced manufacturing assembly lines.

What validation is required before using AI in safety-critical inspection?

Compliance managers must prove that algorithmic overlays perform equivalently or better than certified human inspectors across thousands of controlled, documented test cases. Regulatory bodies enforce strict validation protocols to ensure software modifications do not alter existing safety baselines.

Should buyers choose cloud or on-prem deployment?

Defense contractors and nuclear operators strictly mandate isolated on-premises architectures to maintain absolute data sovereignty over proprietary component schematics. Buyers lacking these extreme security constraints often favor cloud deployments to leverage external computing power and easier multi-facility data aggregation.

Which vendors lead in AI-assisted NDT software?

Incumbent equipment manufacturers like Waygate Technologies and Comet Yxlon lead early adoption because their analytics integrate natively with their large installed hardware base. Independent developers like Zetec and VisiConsult compete by offering hardware-agnostic, open-architecture platforms.

What industries are spending most on NDT analytics platforms?

Aerospace and defense sectors drive primary spending to comply with extreme traceability mandates and tight part tolerances. The energy sector follows closely, investing heavily in predictive degradation analytics to extend the lifespan of aging offshore platforms and pipeline infrastructure.

What features matter most in enterprise NDT analytics software?

Acquisition directors prioritize vendor-agnostic data ingestion capabilities above all else, ensuring the platform can read legacy proprietary file formats without complex conversions. Export functions supporting open standards like DICONDE are critical to preventing long-term operational vendor lock-in.

Which AI NDT software works with CT and UT data?

Multi-modal analytics platforms from vendors like Hexagon VG software and VisiConsult fuse radiographic, ultrasonic, and thermographic data into a single unified profile. This allows quality directors to cross-reference anomalies across completely different physical phenomena to eliminate diagnostic uncertainty.

What should I look for in NDT AI software?

Operations directors must ensure the software integrates seamlessly with existing factory floor workflows without forcing technicians into secondary viewing windows. The platform must offer transparent, traceable defect reporting capabilities that satisfy external regulatory audits.

How does cloud vs on-prem NDT analytics platform architecture impact security?

Cloud architectures route massive datasets through external internet infrastructure, creating unacceptable interception risks for classified or highly proprietary manufacturing geometries. On-premises frameworks solve this by physically localizing all data processing, giving security directors complete chain-of-custody control.

Why is explainable AI in industrial inspection critical for adoption?

Certified NDT technicians wield significant operational authority and flatly reject black-box algorithms that offer no mathematical reasoning for a defect classification. Explainable models build trust by visually highlighting the exact density gradients or phase shifts that triggered the automated warning.

What data is needed to train NDT AI models?

Machine learning networks require thousands of accurately labeled, high-resolution images of specific industrial failure modes. Because facilities rarely possess perfectly organized archives of flawed components, developers increasingly rely on physics-based simulation engines to generate synthetic training datasets.

Is AI accepted in safety-critical inspection?

Regulatory acceptance is growing rapidly, provided the software produces immutable digital records of every algorithmic decision. Federal aviation and nuclear authorities accept AI overlays when deployed as assistive tools that highlight anomalies for final human verification.

Can AI classify weld defects automatically?

Advanced models evaluate complex automated ultrasonic data from structural joints, mathematically separating critical fatigue cracks from benign geometric root reflections. Plant managers deploy these tools to evaluate internal alignment instantly without stalling active assembly lines.

What is automated defect recognition in NDT?

Automated defect recognition (ADR) is the practice of having machine vision algorithms evaluate part integrity directly from the sensor signal. It shifts the burden of initial anomaly detection from fatigued human operators to consistent, high-speed computational models.

How do buyers compare NDT analytics platforms effectively?

Evaluators look past theoretical neural network accuracy and test the software directly against their existing, twenty-year-old hardware fleet. A platform that requires manual file conversion during a live pilot phase is immediately disqualified by the inspection workforce.

What drives the adoption of phased array data analysis software?

Integrity managers face mountains of complex acoustic data generated by automated crawlers on oil pipelines. Algorithmic software interprets these continuous thickness measurements into degradation models, pinpointing critical thinning zones before rupture events occur.

How does casting defect characterization software improve foundry yield?

Foundries produce massive metal components with acceptable micro-void limits. Lead engineers utilize specialized algorithms to mathematically prove a recognized flaw remains within safe tolerances, directly preventing the expensive scrapping of functional parts.

Why is ADR software for radiography inspection mandatory for aerospace?

Aerospace primes embed strict automated defect recognition requirements directly into their supplier quality manuals. Tier-two suppliers must adopt these digital evaluation standards to maintain lucrative contracts, as manual human interpretation is no longer accepted for safety-critical turbine components.

How does battery CT defect analysis software support EV manufacturing?

Electric vehicle production requires 100% inspection of complex internal cell alignments at incredibly high speeds. Operations directors deploy algorithmic volumetric analysis to characterize microscopic anode defects instantly, supporting massive production quotas without compromising strict safety tolerances.

What is the commercial consequence of failing to adopt analytics?

Suppliers relying entirely on manual image interpretation face escalating labor costs and artificial production caps tied directly to technician availability. As competitors integrate AI-based analytics and predictive maintenance SaaS platforms, manually constrained facilities lose major supply contracts because they cannot scale their quality control operations.

Table of Content

- Executive Summary

- Global Market Outlook

- Demand-side Trends

- Supply-side Trends

- Technology Roadmap Analysis

- Analysis and Recommendations

- Market Overview

- Market Coverage / Taxonomy

- Market Definition / Scope / Limitations

- Research Methodology

- Chapter Orientation

- Analytical Lens and Working Hypotheses

- Market Structure, Signals, and Trend Drivers

- Benchmarking and Cross-market Comparability

- Market Sizing, Forecasting, and Opportunity Mapping

- Research Design and Evidence Framework

- Desk Research Programme (Secondary Evidence)

- Company Annual and Sustainability Reports

- Peer-reviewed Journals and Academic Literature

- Corporate Websites, Product Literature, and Technical Notes

- Earnings Decks and Investor Briefings

- Statutory Filings and Regulatory Disclosures

- Technical White Papers and Standards Notes

- Trade Journals, Industry Magazines, and Analyst Briefs

- Conference Proceedings, Webinars, and Seminar Materials

- Government Statistics Portals and Public Data Releases

- Press Releases and Reputable Media Coverage

- Specialist Newsletters and Curated Briefings

- Sector Databases and Reference Repositories

- FMI Internal Proprietary Databases and Historical Market Datasets

- Subscription Datasets and Paid Sources

- Social Channels, Communities, and Digital Listening Inputs

- Additional Desk Sources

- Expert Input and Fieldwork (Primary Evidence)

- Primary Modes

- Qualitative Interviews and Expert Elicitation

- Quantitative Surveys and Structured Data Capture

- Blended Approach

- Why Primary Evidence is Used

- Field Techniques

- Interviews

- Surveys

- Focus Groups

- Observational and In-context Research

- Social and Community Interactions

- Stakeholder Universe Engaged

- C-suite Leaders

- Board Members

- Presidents and Vice Presidents

- R&D and Innovation Heads

- Technical Specialists

- Domain Subject-matter Experts

- Scientists

- Physicians and Other Healthcare Professionals

- Governance, Ethics, and Data Stewardship

- Research Ethics

- Data Integrity and Handling

- Tooling, Models, and Reference Databases

- Data Engineering and Model Build

- Data Acquisition and Ingestion

- Cleaning, Normalisation, and Verification

- Synthesis, Triangulation, and Analysis

- Quality Assurance and Audit Trail

- Market Background

- Market Dynamics

- Drivers

- Restraints

- Opportunity

- Trends

- Scenario Forecast

- Demand in Optimistic Scenario

- Demand in Likely Scenario

- Demand in Conservative Scenario

- Opportunity Map Analysis

- Product Life Cycle Analysis

- Supply Chain Analysis

- Investment Feasibility Matrix

- Value Chain Analysis

- PESTLE and Porter’s Analysis

- Regulatory Landscape

- Regional Parent Market Outlook

- Production and Consumption Statistics

- Import and Export Statistics

- Global Market Analysis 2021 to 2025 and Forecast, 2026 to 2036

- Historical Market Size Value (USD Million) Analysis, 2021 to 2025

- Current and Future Market Size Value (USD Million) Projections, 2026 to 2036

- Y-o-Y Growth Trend Analysis

- Absolute $ Opportunity Analysis

- Global Market Pricing Analysis 2021 to 2025 and Forecast 2026 to 2036

- Global Market Analysis 2021 to 2025 and Forecast 2026 to 2036, By Deployment

- Introduction / Key Findings

- Historical Market Size Value (USD Million) Analysis By Deployment, 2021 to 2025

- Current and Future Market Size Value (USD Million) Analysis and Forecast By Deployment, 2026 to 2036

- On-Premises

- Cloud

- Hybrid

- Y-o-Y Growth Trend Analysis By Deployment, 2021 to 2025

- Absolute $ Opportunity Analysis By Deployment, 2026 to 2036

- Global Market Analysis 2021 to 2025 and Forecast 2026 to 2036, By Modality

- Introduction / Key Findings

- Historical Market Size Value (USD Million) Analysis By Modality, 2021 to 2025

- Current and Future Market Size Value (USD Million) Analysis and Forecast By Modality, 2026 to 2036

- Radiography/CT

- Ultrasonic

- Thermography

- Y-o-Y Growth Trend Analysis By Modality, 2021 to 2025

- Absolute $ Opportunity Analysis By Modality, 2026 to 2036

- Global Market Analysis 2021 to 2025 and Forecast 2026 to 2036, By Function

- Introduction / Key Findings

- Historical Market Size Value (USD Million) Analysis By Function, 2021 to 2025

- Current and Future Market Size Value (USD Million) Analysis and Forecast By Function, 2026 to 2036

- Defect Detection

- Defect Classification

- Defect Sizing

- Y-o-Y Growth Trend Analysis By Function, 2021 to 2025

- Absolute $ Opportunity Analysis By Function, 2026 to 2036

- Global Market Analysis 2021 to 2025 and Forecast 2026 to 2036, By Workflow Stage

- Introduction / Key Findings

- Historical Market Size Value (USD Million) Analysis By Workflow Stage, 2021 to 2025

- Current and Future Market Size Value (USD Million) Analysis and Forecast By Workflow Stage, 2026 to 2036

- Post-Inspection Workflows

- In-line

- Near-line

- Y-o-Y Growth Trend Analysis By Workflow Stage, 2021 to 2025

- Absolute $ Opportunity Analysis By Workflow Stage, 2026 to 2036

- Global Market Analysis 2021 to 2025 and Forecast 2026 to 2036, By End Use

- Introduction / Key Findings

- Historical Market Size Value (USD Million) Analysis By End Use, 2021 to 2025

- Current and Future Market Size Value (USD Million) Analysis and Forecast By End Use, 2026 to 2036

- Oil & Gas

- Aerospace

- Automotive

- Y-o-Y Growth Trend Analysis By End Use, 2021 to 2025

- Absolute $ Opportunity Analysis By End Use, 2026 to 2036

- Global Market Analysis 2021 to 2025 and Forecast 2026 to 2036, By Region

- Introduction

- Historical Market Size Value (USD Million) Analysis By Region, 2021 to 2025

- Current Market Size Value (USD Million) Analysis and Forecast By Region, 2026 to 2036

- North America

- Latin America

- Western Europe

- Eastern Europe

- East Asia

- South Asia and Pacific

- Middle East & Africa

- Market Attractiveness Analysis By Region

- North America Market Analysis 2021 to 2025 and Forecast 2026 to 2036, By Country

- Historical Market Size Value (USD Million) Trend Analysis By Market Taxonomy, 2021 to 2025

- Market Size Value (USD Million) Forecast By Market Taxonomy, 2026 to 2036

- By Country

- USA

- Canada

- Mexico

- By Deployment

- By Modality

- By Function

- By Workflow Stage

- By End Use

- Market Attractiveness Analysis

- By Country

- By Deployment

- By Modality

- By Function

- By Workflow Stage

- By End Use

- Key Takeaways

- Latin America Market Analysis 2021 to 2025 and Forecast 2026 to 2036, By Country

- Historical Market Size Value (USD Million) Trend Analysis By Market Taxonomy, 2021 to 2025

- Market Size Value (USD Million) Forecast By Market Taxonomy, 2026 to 2036

- By Country

- Brazil

- Chile

- Rest of Latin America

- By Deployment

- By Modality

- By Function

- By Workflow Stage

- By End Use

- Market Attractiveness Analysis

- By Country

- By Deployment

- By Modality

- By Function

- By Workflow Stage

- By End Use

- Key Takeaways

- Western Europe Market Analysis 2021 to 2025 and Forecast 2026 to 2036, By Country

- Historical Market Size Value (USD Million) Trend Analysis By Market Taxonomy, 2021 to 2025

- Market Size Value (USD Million) Forecast By Market Taxonomy, 2026 to 2036

- By Country

- Germany

- UK

- Italy

- Spain

- France

- Nordic

- BENELUX

- Rest of Western Europe

- By Deployment

- By Modality

- By Function

- By Workflow Stage

- By End Use

- Market Attractiveness Analysis

- By Country

- By Deployment

- By Modality

- By Function

- By Workflow Stage

- By End Use

- Key Takeaways

- Eastern Europe Market Analysis 2021 to 2025 and Forecast 2026 to 2036, By Country

- Historical Market Size Value (USD Million) Trend Analysis By Market Taxonomy, 2021 to 2025

- Market Size Value (USD Million) Forecast By Market Taxonomy, 2026 to 2036

- By Country

- Russia

- Poland

- Hungary

- Balkan & Baltic

- Rest of Eastern Europe

- By Deployment

- By Modality

- By Function

- By Workflow Stage

- By End Use

- Market Attractiveness Analysis

- By Country

- By Deployment

- By Modality

- By Function

- By Workflow Stage

- By End Use

- Key Takeaways

- East Asia Market Analysis 2021 to 2025 and Forecast 2026 to 2036, By Country

- Historical Market Size Value (USD Million) Trend Analysis By Market Taxonomy, 2021 to 2025

- Market Size Value (USD Million) Forecast By Market Taxonomy, 2026 to 2036

- By Country

- China

- Japan

- South Korea

- By Deployment

- By Modality

- By Function

- By Workflow Stage

- By End Use

- Market Attractiveness Analysis

- By Country

- By Deployment

- By Modality

- By Function

- By Workflow Stage

- By End Use

- Key Takeaways

- South Asia and Pacific Market Analysis 2021 to 2025 and Forecast 2026 to 2036, By Country

- Historical Market Size Value (USD Million) Trend Analysis By Market Taxonomy, 2021 to 2025

- Market Size Value (USD Million) Forecast By Market Taxonomy, 2026 to 2036

- By Country

- India

- ASEAN

- Australia & New Zealand

- Rest of South Asia and Pacific

- By Deployment

- By Modality

- By Function

- By Workflow Stage

- By End Use

- Market Attractiveness Analysis

- By Country

- By Deployment

- By Modality

- By Function

- By Workflow Stage

- By End Use

- Key Takeaways

- Middle East & Africa Market Analysis 2021 to 2025 and Forecast 2026 to 2036, By Country

- Historical Market Size Value (USD Million) Trend Analysis By Market Taxonomy, 2021 to 2025

- Market Size Value (USD Million) Forecast By Market Taxonomy, 2026 to 2036

- By Country

- Kingdom of Saudi Arabia

- Other GCC Countries

- Turkiye

- South Africa

- Other African Union

- Rest of Middle East & Africa

- By Deployment

- By Modality

- By Function

- By Workflow Stage

- By End Use

- Market Attractiveness Analysis

- By Country

- By Deployment

- By Modality

- By Function

- By Workflow Stage

- By End Use

- Key Takeaways

- Key Countries Market Analysis

- USA

- Pricing Analysis

- Market Share Analysis, 2025

- By Deployment

- By Modality

- By Function

- By Workflow Stage

- By End Use

- Canada

- Pricing Analysis

- Market Share Analysis, 2025

- By Deployment

- By Modality

- By Function

- By Workflow Stage

- By End Use

- Mexico

- Pricing Analysis

- Market Share Analysis, 2025

- By Deployment

- By Modality

- By Function

- By Workflow Stage

- By End Use

- Brazil

- Pricing Analysis

- Market Share Analysis, 2025

- By Deployment

- By Modality

- By Function

- By Workflow Stage

- By End Use

- Chile

- Pricing Analysis

- Market Share Analysis, 2025

- By Deployment

- By Modality

- By Function

- By Workflow Stage

- By End Use

- Germany

- Pricing Analysis

- Market Share Analysis, 2025

- By Deployment

- By Modality

- By Function

- By Workflow Stage

- By End Use

- UK

- Pricing Analysis

- Market Share Analysis, 2025

- By Deployment

- By Modality

- By Function

- By Workflow Stage

- By End Use

- Italy

- Pricing Analysis

- Market Share Analysis, 2025

- By Deployment

- By Modality

- By Function

- By Workflow Stage

- By End Use

- Spain

- Pricing Analysis

- Market Share Analysis, 2025

- By Deployment

- By Modality

- By Function

- By Workflow Stage

- By End Use

- France

- Pricing Analysis

- Market Share Analysis, 2025

- By Deployment

- By Modality

- By Function

- By Workflow Stage

- By End Use

- India

- Pricing Analysis

- Market Share Analysis, 2025

- By Deployment

- By Modality

- By Function

- By Workflow Stage

- By End Use

- ASEAN

- Pricing Analysis

- Market Share Analysis, 2025

- By Deployment

- By Modality

- By Function

- By Workflow Stage

- By End Use

- Australia & New Zealand

- Pricing Analysis

- Market Share Analysis, 2025

- By Deployment

- By Modality

- By Function

- By Workflow Stage

- By End Use

- China

- Pricing Analysis

- Market Share Analysis, 2025

- By Deployment

- By Modality

- By Function

- By Workflow Stage

- By End Use

- Japan

- Pricing Analysis

- Market Share Analysis, 2025

- By Deployment

- By Modality

- By Function

- By Workflow Stage

- By End Use

- South Korea

- Pricing Analysis

- Market Share Analysis, 2025

- By Deployment

- By Modality

- By Function

- By Workflow Stage

- By End Use

- Russia

- Pricing Analysis

- Market Share Analysis, 2025

- By Deployment

- By Modality

- By Function

- By Workflow Stage

- By End Use

- Poland

- Pricing Analysis

- Market Share Analysis, 2025

- By Deployment

- By Modality

- By Function

- By Workflow Stage

- By End Use

- Hungary

- Pricing Analysis

- Market Share Analysis, 2025

- By Deployment

- By Modality

- By Function

- By Workflow Stage

- By End Use

- Kingdom of Saudi Arabia

- Pricing Analysis

- Market Share Analysis, 2025

- By Deployment

- By Modality

- By Function

- By Workflow Stage

- By End Use

- Turkiye

- Pricing Analysis

- Market Share Analysis, 2025

- By Deployment

- By Modality

- By Function

- By Workflow Stage

- By End Use

- South Africa

- Pricing Analysis

- Market Share Analysis, 2025

- By Deployment

- By Modality

- By Function

- By Workflow Stage

- By End Use

- Market Structure Analysis

- Competition Dashboard

- Competition Benchmarking

- Market Share Analysis of Top Players

- By Region

- By Deployment

- By Modality

- By Function

- By Workflow Stage

- By End Use

- Competition Analysis

- Competition Deep Dive

- Waygate Technologies (Baker Hughes)

- Overview

- Product Portfolio

- Profitability by Market Segments (Product/Age/Sales Channel/Region)

- Sales Footprint

- Strategy Overview

- Marketing Strategy

- Product Strategy

- Channel Strategy

- Hexagon VG software

- Comet Yxlon

- Zetec

- MISTRAS Group

- VisiConsult

- Assumptions & Acronyms Used

List of Tables

- Table 1: Global Market Value (USD Million) Forecast by Region, 2021 to 2036

- Table 2: Global Market Value (USD Million) Forecast by Deployment, 2021 to 2036

- Table 3: Global Market Value (USD Million) Forecast by Modality, 2021 to 2036

- Table 4: Global Market Value (USD Million) Forecast by Function, 2021 to 2036

- Table 5: Global Market Value (USD Million) Forecast by Workflow Stage, 2021 to 2036

- Table 6: Global Market Value (USD Million) Forecast by End Use, 2021 to 2036

- Table 7: North America Market Value (USD Million) Forecast by Country, 2021 to 2036

- Table 8: North America Market Value (USD Million) Forecast by Deployment, 2021 to 2036

- Table 9: North America Market Value (USD Million) Forecast by Modality, 2021 to 2036

- Table 10: North America Market Value (USD Million) Forecast by Function, 2021 to 2036

- Table 11: North America Market Value (USD Million) Forecast by Workflow Stage, 2021 to 2036

- Table 12: North America Market Value (USD Million) Forecast by End Use, 2021 to 2036

- Table 13: Latin America Market Value (USD Million) Forecast by Country, 2021 to 2036

- Table 14: Latin America Market Value (USD Million) Forecast by Deployment, 2021 to 2036

- Table 15: Latin America Market Value (USD Million) Forecast by Modality, 2021 to 2036

- Table 16: Latin America Market Value (USD Million) Forecast by Function, 2021 to 2036

- Table 17: Latin America Market Value (USD Million) Forecast by Workflow Stage, 2021 to 2036

- Table 18: Latin America Market Value (USD Million) Forecast by End Use, 2021 to 2036

- Table 19: Western Europe Market Value (USD Million) Forecast by Country, 2021 to 2036

- Table 20: Western Europe Market Value (USD Million) Forecast by Deployment, 2021 to 2036

- Table 21: Western Europe Market Value (USD Million) Forecast by Modality, 2021 to 2036

- Table 22: Western Europe Market Value (USD Million) Forecast by Function, 2021 to 2036

- Table 23: Western Europe Market Value (USD Million) Forecast by Workflow Stage, 2021 to 2036

- Table 24: Western Europe Market Value (USD Million) Forecast by End Use, 2021 to 2036

- Table 25: Eastern Europe Market Value (USD Million) Forecast by Country, 2021 to 2036

- Table 26: Eastern Europe Market Value (USD Million) Forecast by Deployment, 2021 to 2036

- Table 27: Eastern Europe Market Value (USD Million) Forecast by Modality, 2021 to 2036

- Table 28: Eastern Europe Market Value (USD Million) Forecast by Function, 2021 to 2036

- Table 29: Eastern Europe Market Value (USD Million) Forecast by Workflow Stage, 2021 to 2036

- Table 30: Eastern Europe Market Value (USD Million) Forecast by End Use, 2021 to 2036

- Table 31: East Asia Market Value (USD Million) Forecast by Country, 2021 to 2036

- Table 32: East Asia Market Value (USD Million) Forecast by Deployment, 2021 to 2036

- Table 33: East Asia Market Value (USD Million) Forecast by Modality, 2021 to 2036

- Table 34: East Asia Market Value (USD Million) Forecast by Function, 2021 to 2036

- Table 35: East Asia Market Value (USD Million) Forecast by Workflow Stage, 2021 to 2036

- Table 36: East Asia Market Value (USD Million) Forecast by End Use, 2021 to 2036

- Table 37: South Asia and Pacific Market Value (USD Million) Forecast by Country, 2021 to 2036

- Table 38: South Asia and Pacific Market Value (USD Million) Forecast by Deployment, 2021 to 2036

- Table 39: South Asia and Pacific Market Value (USD Million) Forecast by Modality, 2021 to 2036

- Table 40: South Asia and Pacific Market Value (USD Million) Forecast by Function, 2021 to 2036

- Table 41: South Asia and Pacific Market Value (USD Million) Forecast by Workflow Stage, 2021 to 2036

- Table 42: South Asia and Pacific Market Value (USD Million) Forecast by End Use, 2021 to 2036

- Table 43: Middle East & Africa Market Value (USD Million) Forecast by Country, 2021 to 2036

- Table 44: Middle East & Africa Market Value (USD Million) Forecast by Deployment, 2021 to 2036

- Table 45: Middle East & Africa Market Value (USD Million) Forecast by Modality, 2021 to 2036

- Table 46: Middle East & Africa Market Value (USD Million) Forecast by Function, 2021 to 2036

- Table 47: Middle East & Africa Market Value (USD Million) Forecast by Workflow Stage, 2021 to 2036

- Table 48: Middle East & Africa Market Value (USD Million) Forecast by End Use, 2021 to 2036

List of Figures

- Figure 1: Global Market Pricing Analysis

- Figure 2: Global Market Value (USD Million) Forecast 2021-2036

- Figure 3: Global Market Value Share and BPS Analysis by Deployment, 2026 and 2036

- Figure 4: Global Market Y-o-Y Growth Comparison by Deployment, 2026-2036

- Figure 5: Global Market Attractiveness Analysis by Deployment

- Figure 6: Global Market Value Share and BPS Analysis by Modality, 2026 and 2036

- Figure 7: Global Market Y-o-Y Growth Comparison by Modality, 2026-2036

- Figure 8: Global Market Attractiveness Analysis by Modality

- Figure 9: Global Market Value Share and BPS Analysis by Function, 2026 and 2036

- Figure 10: Global Market Y-o-Y Growth Comparison by Function, 2026-2036

- Figure 11: Global Market Attractiveness Analysis by Function

- Figure 12: Global Market Value Share and BPS Analysis by Workflow Stage, 2026 and 2036

- Figure 13: Global Market Y-o-Y Growth Comparison by Workflow Stage, 2026-2036

- Figure 14: Global Market Attractiveness Analysis by Workflow Stage

- Figure 15: Global Market Value Share and BPS Analysis by End Use, 2026 and 2036

- Figure 16: Global Market Y-o-Y Growth Comparison by End Use, 2026-2036

- Figure 17: Global Market Attractiveness Analysis by End Use

- Figure 18: Global Market Value (USD Million) Share and BPS Analysis by Region, 2026 and 2036

- Figure 19: Global Market Y-o-Y Growth Comparison by Region, 2026-2036

- Figure 20: Global Market Attractiveness Analysis by Region

- Figure 21: North America Market Incremental Dollar Opportunity, 2026-2036

- Figure 22: Latin America Market Incremental Dollar Opportunity, 2026-2036

- Figure 23: Western Europe Market Incremental Dollar Opportunity, 2026-2036

- Figure 24: Eastern Europe Market Incremental Dollar Opportunity, 2026-2036

- Figure 25: East Asia Market Incremental Dollar Opportunity, 2026-2036

- Figure 26: South Asia and Pacific Market Incremental Dollar Opportunity, 2026-2036

- Figure 27: Middle East & Africa Market Incremental Dollar Opportunity, 2026-2036

- Figure 28: North America Market Value Share and BPS Analysis by Country, 2026 and 2036

- Figure 29: North America Market Value Share and BPS Analysis by Deployment, 2026 and 2036

- Figure 30: North America Market Y-o-Y Growth Comparison by Deployment, 2026-2036

- Figure 31: North America Market Attractiveness Analysis by Deployment

- Figure 32: North America Market Value Share and BPS Analysis by Modality, 2026 and 2036

- Figure 33: North America Market Y-o-Y Growth Comparison by Modality, 2026-2036

- Figure 34: North America Market Attractiveness Analysis by Modality

- Figure 35: North America Market Value Share and BPS Analysis by Function, 2026 and 2036

- Figure 36: North America Market Y-o-Y Growth Comparison by Function, 2026-2036

- Figure 37: North America Market Attractiveness Analysis by Function

- Figure 38: North America Market Value Share and BPS Analysis by Workflow Stage, 2026 and 2036

- Figure 39: North America Market Y-o-Y Growth Comparison by Workflow Stage, 2026-2036

- Figure 40: North America Market Attractiveness Analysis by Workflow Stage

- Figure 41: North America Market Value Share and BPS Analysis by End Use, 2026 and 2036

- Figure 42: North America Market Y-o-Y Growth Comparison by End Use, 2026-2036

- Figure 43: North America Market Attractiveness Analysis by End Use

- Figure 44: Latin America Market Value Share and BPS Analysis by Country, 2026 and 2036

- Figure 45: Latin America Market Value Share and BPS Analysis by Deployment, 2026 and 2036

- Figure 46: Latin America Market Y-o-Y Growth Comparison by Deployment, 2026-2036

- Figure 47: Latin America Market Attractiveness Analysis by Deployment

- Figure 48: Latin America Market Value Share and BPS Analysis by Modality, 2026 and 2036

- Figure 49: Latin America Market Y-o-Y Growth Comparison by Modality, 2026-2036

- Figure 50: Latin America Market Attractiveness Analysis by Modality

- Figure 51: Latin America Market Value Share and BPS Analysis by Function, 2026 and 2036

- Figure 52: Latin America Market Y-o-Y Growth Comparison by Function, 2026-2036

- Figure 53: Latin America Market Attractiveness Analysis by Function

- Figure 54: Latin America Market Value Share and BPS Analysis by Workflow Stage, 2026 and 2036

- Figure 55: Latin America Market Y-o-Y Growth Comparison by Workflow Stage, 2026-2036

- Figure 56: Latin America Market Attractiveness Analysis by Workflow Stage

- Figure 57: Latin America Market Value Share and BPS Analysis by End Use, 2026 and 2036

- Figure 58: Latin America Market Y-o-Y Growth Comparison by End Use, 2026-2036

- Figure 59: Latin America Market Attractiveness Analysis by End Use

- Figure 60: Western Europe Market Value Share and BPS Analysis by Country, 2026 and 2036

- Figure 61: Western Europe Market Value Share and BPS Analysis by Deployment, 2026 and 2036

- Figure 62: Western Europe Market Y-o-Y Growth Comparison by Deployment, 2026-2036

- Figure 63: Western Europe Market Attractiveness Analysis by Deployment

- Figure 64: Western Europe Market Value Share and BPS Analysis by Modality, 2026 and 2036

- Figure 65: Western Europe Market Y-o-Y Growth Comparison by Modality, 2026-2036

- Figure 66: Western Europe Market Attractiveness Analysis by Modality

- Figure 67: Western Europe Market Value Share and BPS Analysis by Function, 2026 and 2036

- Figure 68: Western Europe Market Y-o-Y Growth Comparison by Function, 2026-2036

- Figure 69: Western Europe Market Attractiveness Analysis by Function

- Figure 70: Western Europe Market Value Share and BPS Analysis by Workflow Stage, 2026 and 2036

- Figure 71: Western Europe Market Y-o-Y Growth Comparison by Workflow Stage, 2026-2036

- Figure 72: Western Europe Market Attractiveness Analysis by Workflow Stage

- Figure 73: Western Europe Market Value Share and BPS Analysis by End Use, 2026 and 2036

- Figure 74: Western Europe Market Y-o-Y Growth Comparison by End Use, 2026-2036

- Figure 75: Western Europe Market Attractiveness Analysis by End Use

- Figure 76: Eastern Europe Market Value Share and BPS Analysis by Country, 2026 and 2036

- Figure 77: Eastern Europe Market Value Share and BPS Analysis by Deployment, 2026 and 2036

- Figure 78: Eastern Europe Market Y-o-Y Growth Comparison by Deployment, 2026-2036

- Figure 79: Eastern Europe Market Attractiveness Analysis by Deployment

- Figure 80: Eastern Europe Market Value Share and BPS Analysis by Modality, 2026 and 2036

- Figure 81: Eastern Europe Market Y-o-Y Growth Comparison by Modality, 2026-2036

- Figure 82: Eastern Europe Market Attractiveness Analysis by Modality

- Figure 83: Eastern Europe Market Value Share and BPS Analysis by Function, 2026 and 2036

- Figure 84: Eastern Europe Market Y-o-Y Growth Comparison by Function, 2026-2036

- Figure 85: Eastern Europe Market Attractiveness Analysis by Function

- Figure 86: Eastern Europe Market Value Share and BPS Analysis by Workflow Stage, 2026 and 2036

- Figure 87: Eastern Europe Market Y-o-Y Growth Comparison by Workflow Stage, 2026-2036

- Figure 88: Eastern Europe Market Attractiveness Analysis by Workflow Stage

- Figure 89: Eastern Europe Market Value Share and BPS Analysis by End Use, 2026 and 2036

- Figure 90: Eastern Europe Market Y-o-Y Growth Comparison by End Use, 2026-2036

- Figure 91: Eastern Europe Market Attractiveness Analysis by End Use

- Figure 92: East Asia Market Value Share and BPS Analysis by Country, 2026 and 2036

- Figure 93: East Asia Market Value Share and BPS Analysis by Deployment, 2026 and 2036

- Figure 94: East Asia Market Y-o-Y Growth Comparison by Deployment, 2026-2036

- Figure 95: East Asia Market Attractiveness Analysis by Deployment

- Figure 96: East Asia Market Value Share and BPS Analysis by Modality, 2026 and 2036

- Figure 97: East Asia Market Y-o-Y Growth Comparison by Modality, 2026-2036

- Figure 98: East Asia Market Attractiveness Analysis by Modality

- Figure 99: East Asia Market Value Share and BPS Analysis by Function, 2026 and 2036

- Figure 100: East Asia Market Y-o-Y Growth Comparison by Function, 2026-2036

- Figure 101: East Asia Market Attractiveness Analysis by Function

- Figure 102: East Asia Market Value Share and BPS Analysis by Workflow Stage, 2026 and 2036

- Figure 103: East Asia Market Y-o-Y Growth Comparison by Workflow Stage, 2026-2036

- Figure 104: East Asia Market Attractiveness Analysis by Workflow Stage

- Figure 105: East Asia Market Value Share and BPS Analysis by End Use, 2026 and 2036

- Figure 106: East Asia Market Y-o-Y Growth Comparison by End Use, 2026-2036

- Figure 107: East Asia Market Attractiveness Analysis by End Use

- Figure 108: South Asia and Pacific Market Value Share and BPS Analysis by Country, 2026 and 2036

- Figure 109: South Asia and Pacific Market Value Share and BPS Analysis by Deployment, 2026 and 2036

- Figure 110: South Asia and Pacific Market Y-o-Y Growth Comparison by Deployment, 2026-2036

- Figure 111: South Asia and Pacific Market Attractiveness Analysis by Deployment

- Figure 112: South Asia and Pacific Market Value Share and BPS Analysis by Modality, 2026 and 2036

- Figure 113: South Asia and Pacific Market Y-o-Y Growth Comparison by Modality, 2026-2036

- Figure 114: South Asia and Pacific Market Attractiveness Analysis by Modality

- Figure 115: South Asia and Pacific Market Value Share and BPS Analysis by Function, 2026 and 2036

- Figure 116: South Asia and Pacific Market Y-o-Y Growth Comparison by Function, 2026-2036

- Figure 117: South Asia and Pacific Market Attractiveness Analysis by Function

- Figure 118: South Asia and Pacific Market Value Share and BPS Analysis by Workflow Stage, 2026 and 2036

- Figure 119: South Asia and Pacific Market Y-o-Y Growth Comparison by Workflow Stage, 2026-2036

- Figure 120: South Asia and Pacific Market Attractiveness Analysis by Workflow Stage

- Figure 121: South Asia and Pacific Market Value Share and BPS Analysis by End Use, 2026 and 2036

- Figure 122: South Asia and Pacific Market Y-o-Y Growth Comparison by End Use, 2026-2036

- Figure 123: South Asia and Pacific Market Attractiveness Analysis by End Use

- Figure 124: Middle East & Africa Market Value Share and BPS Analysis by Country, 2026 and 2036

- Figure 125: Middle East & Africa Market Value Share and BPS Analysis by Deployment, 2026 and 2036

- Figure 126: Middle East & Africa Market Y-o-Y Growth Comparison by Deployment, 2026-2036

- Figure 127: Middle East & Africa Market Attractiveness Analysis by Deployment

- Figure 128: Middle East & Africa Market Value Share and BPS Analysis by Modality, 2026 and 2036

- Figure 129: Middle East & Africa Market Y-o-Y Growth Comparison by Modality, 2026-2036

- Figure 130: Middle East & Africa Market Attractiveness Analysis by Modality

- Figure 131: Middle East & Africa Market Value Share and BPS Analysis by Function, 2026 and 2036

- Figure 132: Middle East & Africa Market Y-o-Y Growth Comparison by Function, 2026-2036

- Figure 133: Middle East & Africa Market Attractiveness Analysis by Function

- Figure 134: Middle East & Africa Market Value Share and BPS Analysis by Workflow Stage, 2026 and 2036

- Figure 135: Middle East & Africa Market Y-o-Y Growth Comparison by Workflow Stage, 2026-2036

- Figure 136: Middle East & Africa Market Attractiveness Analysis by Workflow Stage

- Figure 137: Middle East & Africa Market Value Share and BPS Analysis by End Use, 2026 and 2036

- Figure 138: Middle East & Africa Market Y-o-Y Growth Comparison by End Use, 2026-2036

- Figure 139: Middle East & Africa Market Attractiveness Analysis by End Use

- Figure 140: Global Market - Tier Structure Analysis

- Figure 141: Global Market - Company Share Analysis