AI-Based Medical Imaging Algorithm Validation Test Platforms Market

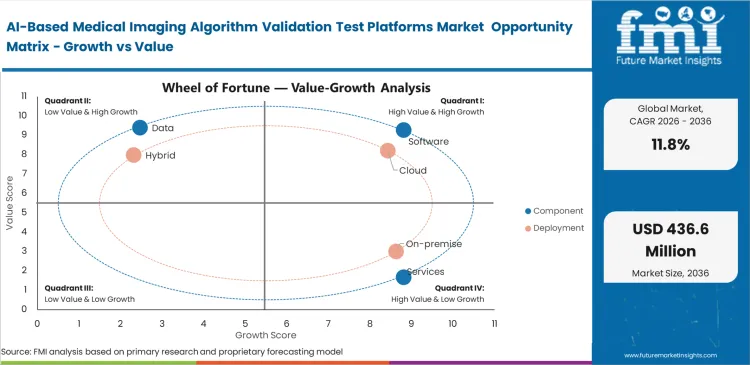

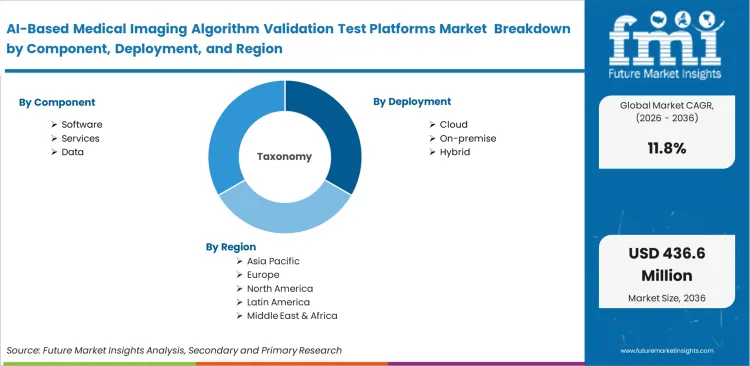

The AI-based medical imaging algorithm validation test platforms market is segmented by Component (Software, Services, Data), Deployment (Cloud, On-premise, Hybrid), Validation Stage (Predeployment, Development, Postdeployment), Modality (X-ray, CT, MRI, Ultrasound, Mammography), End User (Hospitals, AI vendors, Academic centers, Imaging centers, CROs), and Region. Forecast for 2026 to 2036.

Historical Data Covered: 2016 to 2024 | Base Year: 2025 | Estimated Year: 2026 | Forecast Period: 2027 to 2036

AI-Based Medical Imaging Algorithm Validation Test Platforms Market Size, Market Forecast and Outlook By FMI

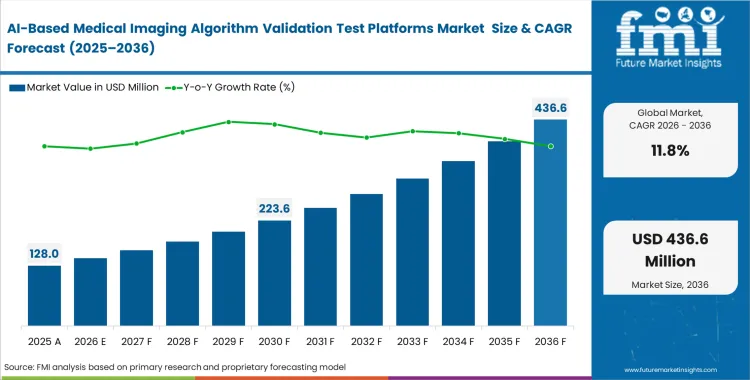

The AI-based medical imaging algorithm validation test platforms market was valued at USD 114.5 million in 2025. The sector is set to cross USD 128.0 million in 2026 and to expand at a CAGR of 11.80% during the forecast period. Ongoing investment drives progressive growth to USD 390.5 million by 2036 as hospitals formalize continuous local validation and post-deployment drift monitoring across heterogeneous patient populations.
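The headline figures can be cross-checked with simple compound-growth arithmetic. The sketch below is only an illustration: the constants are the values quoted in this report, while the helper names `project` and `implied_cagr` are assumptions introduced here and are not part of FMI's forecasting model.

```python
# Figures quoted in the report, in USD million.
BASE_2025 = 114.5
VALUE_2026 = 128.0
VALUE_2036 = 390.5
CAGR = 0.118  # 11.8% per year


def project(value: float, rate: float, years: int) -> float:
    """Compound `value` forward by `rate` for `years` annual periods."""
    return value * (1.0 + rate) ** years


def implied_cagr(start: float, end: float, years: int) -> float:
    """Constant annual rate that takes `start` to `end` in `years` periods."""
    return (end / start) ** (1.0 / years) - 1.0


projected_2026 = project(BASE_2025, CAGR, 1)      # one year on from the base year
projected_2036 = project(VALUE_2026, CAGR, 10)    # ten years on from 2026
incremental = VALUE_2036 - VALUE_2026             # absolute growth, 2026 to 2036
rate_2026_2036 = implied_cagr(VALUE_2026, VALUE_2036, 10)

print(f"2026 projected from 2025 base: {projected_2026:.1f}")
print(f"2036 projected from 2026 value: {projected_2036:.1f}")
print(f"Incremental opportunity: {incremental:.1f}")
print(f"Implied CAGR 2026 to 2036: {rate_2026_2036:.2%}")
```

Under these assumptions the projected values agree with the quoted 2026 and 2036 figures to within rounding, and the difference between the 2036 and 2026 values matches the incremental opportunity stated in the summary.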

Summary of AI-Based Medical Imaging Algorithm Validation Test Platforms Market

- Market Snapshot

- The AI-based medical imaging algorithm validation test platforms market is valued at USD 114.5 million in 2025 and is projected to reach USD 390.5 million by 2036.

- Industry is expected to grow at an 11.8% CAGR from 2026 to 2036, creating an incremental opportunity of USD 262.5 million over the period.

- Sector covers software-led platforms used to benchmark, compare, validate, document, and monitor imaging algorithms across development, procurement, and real-world operation.

- The market remains compliance-shaped rather than volume-shaped, because performance documentation, dataset quality, local validation, and auditability matter more than raw image-processing speed.

- Demand and Growth Drivers

- Demand is rising because radiology remains the largest AI-enabled medical-device specialty, which keeps validation workload concentrated in imaging.

- Adoption is also increasing as provider organizations move from isolated pilots to structured implementation programs, with broader use of centralized quality-monitoring frameworks and multi-site imaging deployment programs.

- Growth is further supported by tighter lifecycle management, monitoring, data quality, and risk-governance expectations across AI medical software regulation.

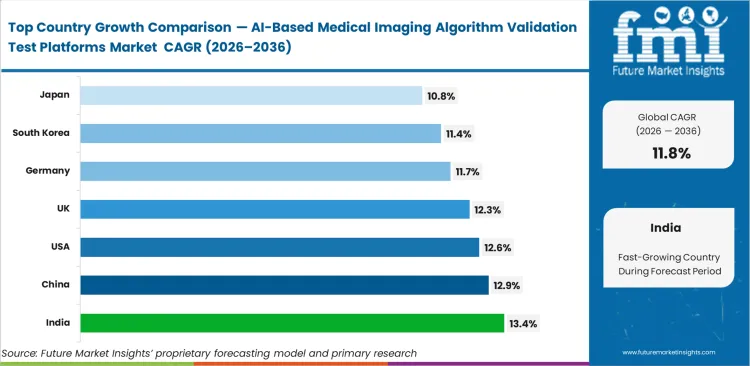

- India leads at an estimated 13.4% CAGR, followed by China at 12.9%, the United States at 12.6%, the United Kingdom at 12.3%, Germany at 11.7%, South Korea at 11.4%, and Japan at 10.8%.

- Growth is moderated by limited outcome-level validation evidence, integration friction inside radiology workflows, and the cost of generating representative benchmark datasets.

- Product and Segment View

- The sector covers validation software, testing services, and curated imaging datasets used across X-ray, CT, MRI, ultrasound, and mammography algorithms.

- These platforms are used in model development, local predeployment comparison, procurement assessment, postdeployment drift monitoring, and multi-reader benchmark studies.

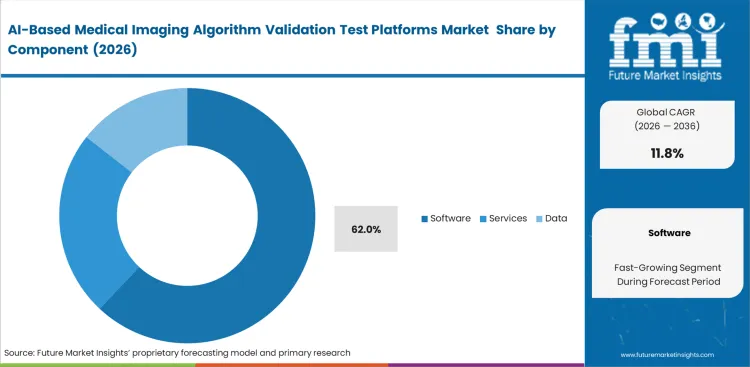

- Software leads the Component segment and is expected to hold 62.0% share, because buyers usually contract for workflow‑native benchmarking, reporting, and monitoring layers before they buy heavy services.

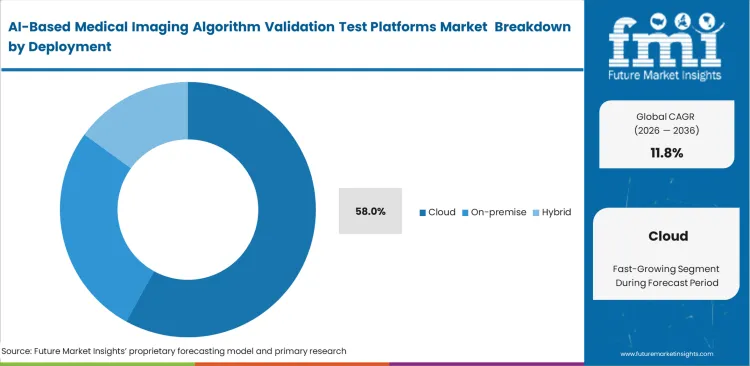

- Cloud leads the Deployment segment and is estimated to hold 58.0% share, reflecting the need for faster collaboration, scalable compute, and easier multi‑site access to annotations, metrics, and audit trails.

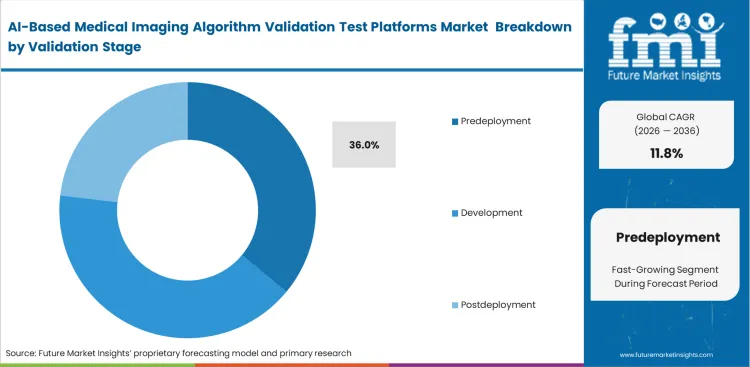

- Predeployment leads the Validation Stage segment and is anticipated to capture 36.0% share, because hospitals and imaging groups increasingly want to test vendor tools on local data before procurement.

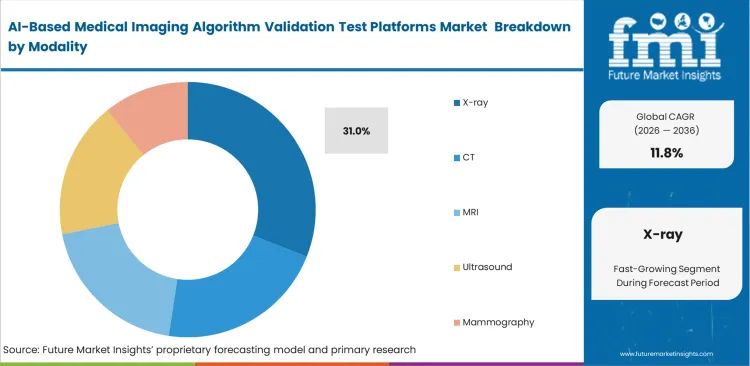

- X‑ray leads the Modality segment and is poised to garner 31.0% share, supported by strong chest‑imaging activity and large‑scale deployment programs in real‑world care settings.

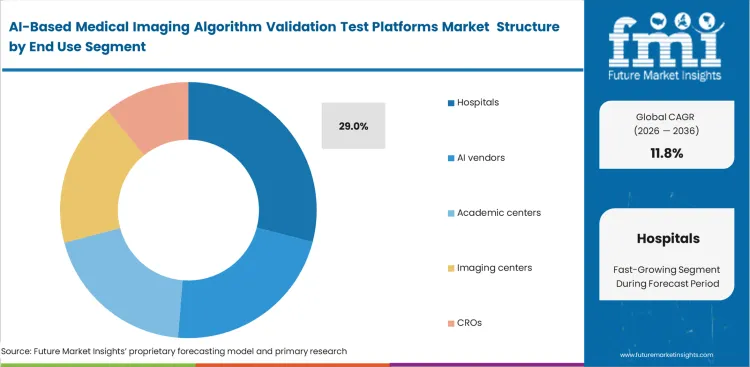

- Hospitals lead the End User segment and are set to record 29.0% share, as they carry the direct burden of acceptance testing, governance, and real-world performance monitoring.

- Scope includes validation dashboards, benchmark orchestration, test datasets, performance reporting, and monitoring modules, but excludes the diagnostic algorithms themselves, core scanners, and generic non-medical MLOps stacks.

- Geography and Competitive Outlook

- India, China, the United States, and the United Kingdom form the highest-growth country set, while the United States remains the most established high-value demand base.

- Competition is being shaped by platform aggregation, interoperability with PACS/RIS, side-by-side evaluation modules, and the expansion of postdeployment monitoring capabilities.

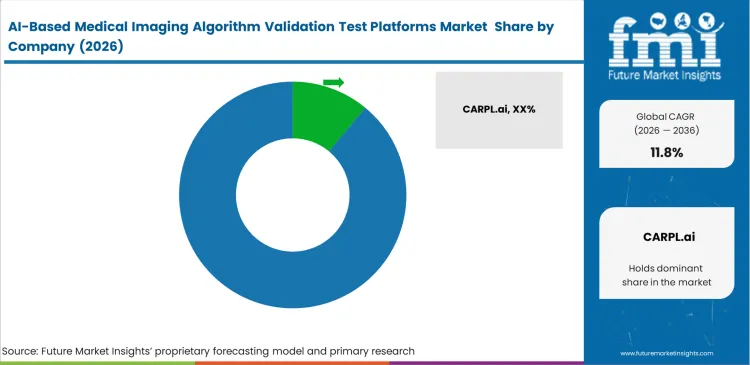

- CARPL.ai, deepc, Blackford, MD.ai, RedBrick AI, V7, and Enlitic are among the active participants, with CARPL.ai currently looking strongest in explicit validation-led positioning.

- The market is fragmented, with no single vendor appearing to control a dominant global share today, which leaves room for specialist platform vendors and enterprise imaging-infrastructure players to compete.

Local benchmarking gaps create a serious barrier when hospitals deploy diagnostic models without testing them first on their own scanners and patient populations. Vendor claims increasingly need to be checked against site-specific imaging environments before commercial rollout decisions are made. General regulatory clearance data on its own is not enough for enterprise AI deployment in clinical settings, because performance can shift once algorithms are used under local operating conditions. Centralized radiology AI benchmarking infrastructure is therefore moving into routine hospital operations rather than remaining confined to research evaluation.

Multi-site health systems are also turning model validation into a repeated governance requirement instead of a one-time pre-purchase exercise. Approval becomes harder to secure when clinical governance committees do not see clear evidence of demographic equity and locally verified baseline performance. Internal audit structures are reinforcing that shift by treating radiology AI validation as part of formal deployment control rather than informal technical review. Early investment in benchmarking infrastructure helps standardize deployment decisions across sites and improves internal discipline around model approval.

India is projected to expand at a CAGR of 13.4% during 2026 to 2036, as localized developer environments build custom evaluation pipelines for regional pathology variants. China is estimated to grow at a 12.9% CAGR as it scales diagnostic infrastructure across large, untapped patient data pools. The United States is anticipated to record a 12.6% CAGR, as mature quality registry requirements force enterprise benchmarking. The United Kingdom is poised for a 12.3% CAGR, driven by nationalized multi-site deployment initiatives. Germany is set to record an 11.7% CAGR as clinical oversight boards formalize strict cross-validation protocols. South Korea and Japan are expected to grow at 11.4% and 10.8% CAGR respectively, where replacement-driven governance dictates highly structured validation parameters. Geographic divergence ultimately centers on whether a given region prioritizes rapid new-model deployment or strict post-market surveillance.

Segmental Analysis

AI-Based Medical Imaging Algorithm Validation Test Platforms Market Analysis by Component

Software architecture determines how hospitals execute benchmarking across fragmented imaging environments and why control over validation workflows remains commercially important. The software segment is expected to hold 62.0% share in 2026 as buyers push for centralized audit trails, structured comparison tools, and coordination across multiple sites. Hospitals favor platforms that ingest outputs from different imaging systems without manual restructuring, since data preparation delays slow down evaluation cycles and increase operational burden. Independent software layers allow clinical teams to compare medical imaging AI validation platforms without disrupting existing PACS infrastructure, which reduces integration risk during testing. Procurement teams also use these layers to maintain vendor neutrality, avoiding lock-in as algorithm portfolios expand over time. Hospitals operating without dedicated validation software remain dependent on vendor-reported performance metrics, limiting visibility into actual accuracy under local operating conditions.

- Standardization engine: Unified software platforms ingest raw diagnostic model outputs and convert them into structured evaluation metrics, presenting results through standardized dashboards that allow radiology IT teams to compare performance across vendors without additional data processing steps.

- Workflow integration: Native APIs connect validation environments directly with existing annotation and labeling tools, allowing clinical evaluators to run parallel testing cycles without manual data transfers or workflow interruptions.

- Audit centralization: Software platforms log every validation parameter, dataset input, and demographic variable used during testing, giving compliance teams complete traceability for internal reviews and regulatory audits.
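The audit-centralization point above can be illustrated with a small data-structure sketch. Everything in it is an assumption made for illustration: the `ValidationRun` fields, the helper names, and the choice of hash chaining as a way to make a log tamper-evident are hypothetical and are not attributed to any vendor named in this report.

```python
import hashlib
import json
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class ValidationRun:
    """One benchmarking run; every field name here is illustrative."""
    algorithm: str
    dataset_id: str
    parameters: dict
    demographic_variables: tuple
    metrics: dict


def _digest(previous_digest: str, payload: str) -> str:
    """SHA-256 over the previous digest plus this entry's serialized payload."""
    return hashlib.sha256((previous_digest + payload).encode("utf-8")).hexdigest()


def append_entry(chain: list, run: ValidationRun) -> None:
    """Append a run to the log, linking it to the previous entry's digest."""
    previous = chain[-1]["digest"] if chain else ""
    payload = json.dumps(asdict(run), sort_keys=True)
    chain.append({"payload": payload, "previous": previous,
                  "digest": _digest(previous, payload)})


def verify(chain: list) -> bool:
    """Return True only if no entry has been altered or reordered."""
    previous = ""
    for entry in chain:
        if entry["previous"] != previous:
            return False
        if entry["digest"] != _digest(previous, entry["payload"]):
            return False
        previous = entry["digest"]
    return True


# Synthetic example values, not taken from the report.
log = []
append_entry(log, ValidationRun("vendor_x_chest", "local_cxr_archive",
                                {"threshold": 0.5}, ("age_band", "sex"),
                                {"sensitivity": 0.91}))
append_entry(log, ValidationRun("vendor_y_chest", "local_cxr_archive",
                                {"threshold": 0.5}, ("age_band", "sex"),
                                {"sensitivity": 0.88}))
print(verify(log))
```

Recording the dataset identifier, parameters, and demographic variables alongside the metrics is what lets a reviewer reconstruct how a reported number was produced. A production system would also need access control, retention rules, and signed timestamps, which this sketch leaves out.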

AI-Based Medical Imaging Algorithm Validation Test Platforms Market Analysis by Deployment

Access to large, diverse datasets and the ability to process them efficiently shape deployment decisions for validation platforms. On-premise infrastructure struggles to support the compute intensity required for large-scale model evaluation, especially when multiple algorithms are tested simultaneously across different datasets. Cloud environments remove these constraints by enabling centralized processing and shared access to anonymized data from multiple facilities. Chief Medical Information Officers use cloud-based radiology AI evaluation platforms to benchmark algorithms against broader demographic pools, improving confidence in procurement decisions. Cloud deployment is projected to capture 58.0% share in 2026 as enterprise buyers prioritize seamless integration with external annotation systems and reporting workflows. Hospitals that restrict validation to on-premise systems limit their ability to participate in multi-institution benchmarking programs and collaborative research initiatives.

- Resource scalability: Cloud platforms provide elastic compute capacity that allows evaluators to run large volumes of algorithm tests simultaneously, eliminating the need to invest in dedicated hardware that may remain underutilized outside validation cycles.

- Collaborative pooling: Cloud infrastructure allows multiple regional facilities to contribute anonymized patient datasets into a unified validation environment, enabling faster generation of statistically robust and demographically diverse performance insights.

- Integration speed: Web-based validation platforms connect directly with external reporting and analytics tools, allowing clinical teams to deploy and scale testing workflows without delays tied to internal IT infrastructure approvals.

AI-Based Medical Imaging Algorithm Validation Test Platforms Market Analysis by Validation Stage

Predeployment validation sits at the center of purchasing decisions as hospitals assess clinical risk before introducing diagnostic tools into live workflows. The predeployment stage is estimated to account for 36.0% share in 2026, reflecting the requirement for direct testing on local patient data before system approval. Procurement teams do not rely solely on regulatory summaries, as those reflect controlled testing environments that rarely match actual hospital conditions. Local acceptance testing for imaging AI software reveals performance variation linked to scanner calibration, imaging protocols, and patient demographics, all of which influence radiology structured reporting systems. Sourcing teams use these findings to negotiate pricing and contractual terms when observed performance falls short of vendor claims. Hospitals that bypass structured predeployment validation increase exposure to diagnostic errors, operational disruption, and potential liability once systems are deployed.

- Demographic calibration: Predeployment environments test algorithms against historical local patient scans, allowing clinical governance teams to identify bias and performance gaps before models interact with live patient workflows.

- Vendor benchmarking: Clinical evaluators run competing algorithms simultaneously on identical internal datasets, generating direct performance comparisons that procurement teams use during vendor selection and pricing negotiations.

- Infrastructure compatibility: Sandbox validation environments verify whether algorithm outputs integrate smoothly into existing hospital systems, allowing radiology IT teams to identify formatting conflicts and workflow disruptions before deployment.
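The vendor-benchmarking and demographic-calibration points above amount to scoring competing algorithms on the same locally labelled cases, overall and per subgroup. The following is a minimal sketch of that idea, assuming binary findings and synthetic data; the `Case` type, the vendor names, and the scanner subgroups are hypothetical and carry no real results.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Case:
    """One locally labelled study; `subgroup` is any attribute the site tracks."""
    truth: bool
    subgroup: str


def sensitivity_specificity(truths, preds):
    """Return (sensitivity, specificity) for paired binary labels and outputs."""
    tp = sum(t and p for t, p in zip(truths, preds))
    fn = sum(t and not p for t, p in zip(truths, preds))
    tn = sum((not t) and (not p) for t, p in zip(truths, preds))
    fp = sum((not t) and p for t, p in zip(truths, preds))
    sens = tp / (tp + fn) if tp + fn else float("nan")
    spec = tn / (tn + fp) if tn + fp else float("nan")
    return sens, spec


def compare(cases, outputs_by_vendor):
    """Score every vendor on the same cases, overall and per subgroup."""
    report = {}
    for vendor, preds in outputs_by_vendor.items():
        if len(preds) != len(cases):
            raise ValueError(f"{vendor}: expected one output per case")
        rows = {"overall": sensitivity_specificity([c.truth for c in cases], preds)}
        for group in sorted({c.subgroup for c in cases}):
            idx = [i for i, c in enumerate(cases) if c.subgroup == group]
            rows[group] = sensitivity_specificity(
                [cases[i].truth for i in idx], [preds[i] for i in idx])
        report[vendor] = rows
    return report


# Synthetic toy data: eight cases split across two hypothetical scanners.
cases = [Case(True, "scanner_A"), Case(True, "scanner_A"),
         Case(False, "scanner_A"), Case(False, "scanner_A"),
         Case(True, "scanner_B"), Case(True, "scanner_B"),
         Case(False, "scanner_B"), Case(False, "scanner_B")]
outputs = {
    "vendor_x": [True, True, False, False, True, False, False, True],
    "vendor_y": [True, False, False, False, True, True, False, False],
}
report = compare(cases, outputs)
for vendor, rows in report.items():
    for name, (sens, spec) in rows.items():
        print(f"{vendor:9s} {name:10s} sensitivity={sens:.2f} specificity={spec:.2f}")
```

In this toy data both vendors have the same overall sensitivity, yet each one underperforms on a different scanner subgroup. That is the kind of gap an aggregate vendor-reported figure would hide and a local subgroup breakdown would surface.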

AI-Based Medical Imaging Algorithm Validation Test Platforms Market Analysis by Modality

Validation resources are directed toward imaging modalities that handle high patient volumes and deliver measurable operational gains. X-ray remains central to routine diagnostics, particularly in chest imaging workflows, making it the first focus area for validation programs. Hospitals maintain extensive archives of labeled radiographic scans, allowing validation teams to assemble benchmark datasets for imaging AI validation from existing material rather than building new ones, which shortens testing cycles and reduces preparation effort. Predeployment validation for chest X-ray AI serves as the point where institutions assess real-world performance against local data before rollout decisions. High case volumes allow statistically reliable accuracy measurement within shorter timeframes, giving procurement teams clear evidence during early-stage evaluation. X-ray is anticipated to account for 31.0% share in 2026. Methods established in X-ray validation carry forward as internal standards for more complex imaging modalities. Hospitals that do not build structured validation frameworks at this stage face execution gaps when moving into higher-dimensional imaging environments.

- Archive utilization: Validation teams use large volumes of historical chest radiographs to test new diagnostic models, generating high-confidence performance metrics due to dataset scale.

- Workflow velocity: X-ray validation tools measure time saved during routine screening workflows, allowing department heads to quantify efficiency gains and support procurement decisions.

- Standardization baseline: Validation approaches developed for two-dimensional imaging models serve as internal templates for evaluating more complex tomographic and multi-parametric imaging systems.
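The claim that high case volumes yield statistically reliable accuracy measurement can be made concrete with a confidence-interval calculation. The sketch below uses the Wilson score interval for a binomial proportion. The 90% observed accuracy and the three sample sizes are hypothetical values chosen for illustration, not figures from this report.

```python
import math


def wilson_interval(successes: int, n: int, z: float = 1.96):
    """Wilson score interval for a binomial proportion (z=1.96 is about 95%)."""
    if n <= 0:
        raise ValueError("n must be positive")
    p = successes / n
    denom = 1.0 + z * z / n
    centre = (p + z * z / (2.0 * n)) / denom
    half = z * math.sqrt(p * (1.0 - p) / n + z * z / (4.0 * n * n)) / denom
    return centre - half, centre + half


# Hypothetical: the same observed accuracy measured on archives of growing size.
observed_accuracy = 0.9
widths = []
for n in (100, 1_000, 10_000):
    low, high = wilson_interval(round(observed_accuracy * n), n)
    widths.append(high - low)
    print(f"n={n:>6}: interval width = {high - low:.4f}")
```

The interval narrows as the number of labelled studies grows, which is why a large chest radiograph archive can support a confident accuracy estimate sooner than a low-volume modality can.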

AI-Based Medical Imaging Algorithm Validation Test Platforms Market Analysis by End User

Hospitals retain control over validation processes as they carry direct responsibility for diagnostic outcomes and clinical decisions. The hospital segment is estimated to hold 29.0% share in 2026, as validation conditions must reflect how healthcare AI computer vision tools operate within actual clinical environments. Internal validation ensures performance metrics account for local patient demographics, scanner configurations, and workflow conditions within a structured hospital radiology AI governance platform. External validation results rarely capture these variables, which limits their relevance during procurement decisions. Hospitals are also starting to treat internally generated validation data as a commercial asset, using post-deployment monitoring for radiology AI during vendor negotiations. Shifting validation responsibility to external parties reduces oversight and increases exposure when model performance changes during active clinical use.

- Liability localization: Hospital-led validation programs rely on local patient data to confirm that diagnostic models perform safely within specific clinical settings, providing documented assurance for governance and regulatory requirements.

- Operational mirroring: Validation environments replicate actual clinical workflows, including emergency department pacing and reporting timelines, allowing teams to assess both accuracy and turnaround performance.

- Data monetization: Large hospital networks consolidate validation results across diverse patient populations and operating conditions, using these datasets as leverage in vendor discussions and contract negotiations.

AI-Based Medical Imaging Algorithm Validation Test Platforms Market Drivers, Restraints, and Opportunities

Strict FDA guidance for AI-enabled medical imaging software compels clinical organizations to mandate rigorous independent performance benchmarking before permitting any third-party model to access live patient data streams. Hospitals must generate localized equity proofs to satisfy escalating internal liability protocols. Relying on generalized vendor statistics exposes hospital networks to unacceptable malpractice risks if algorithmic drift occurs unnoticed. Standardized validation platforms provide the only scalable infrastructure capable of executing continuous auditing across massive enterprise deployments. Delaying implementation of dedicated testing environments severely bottlenecks safe AI adoption velocity.

Fragmented clinical data architectures create significant challenges in validating medical imaging AI during initial benchmarking platform deployment. Hospital IT departments struggle structurally to aggregate cleanly annotated validation datasets from isolated departmental archives. This lack of unified ground-truth data forces evaluators to spend excessive hours manually cleaning imaging files before functional testing can even begin. Emerging auto-curation tools alleviate minor formatting issues but completely fail to resolve deep semantic inconsistencies hidden within legacy electronic health records.

Opportunities in the AI-Based Medical Imaging Algorithm Validation Test Platforms Market

- Federated validation networks: Secure collaborative environments allow independent hospitals to pool algorithmic performance metrics. Clinical researchers achieve statistically significant bias detection without transferring sensitive raw patient files.

- Automated drift monitoring: Continuous background auditing tools alert administrators of AI-enabled medical devices when live model accuracy deviates from baseline parameters. Quality assurance teams intercept subtle performance degradation before diagnostic errors multiply.

- Regulatory alignment portals: Standardized testing gateways require commercial developers to prove algorithm efficacy against specific institutional datasets. Rigid platform-enforced performance thresholds, aligned with emerging EU AI Act compliance standards for radiology AI, can streamline and automate early-stage vendor filtering.
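The automated drift monitoring opportunity listed above can be sketched as a rolling-window comparison against a validated baseline. This is a deliberately simple illustration: the `DriftMonitor` class, its window size, and its tolerance are assumptions introduced here, and it presumes each live case eventually receives an adjudicated correct or incorrect label.

```python
from collections import deque


class DriftMonitor:
    """Flag when rolling accuracy falls more than `tolerance` below baseline."""

    def __init__(self, baseline_accuracy: float, window: int = 50,
                 tolerance: float = 0.05):
        self.baseline = baseline_accuracy
        self.tolerance = tolerance
        self.recent = deque(maxlen=window)  # keeps only the newest `window` cases

    def observe(self, correct: bool) -> bool:
        """Record one adjudicated case; return True when an alert should fire."""
        self.recent.append(1 if correct else 0)
        if len(self.recent) < self.recent.maxlen:
            return False  # wait until the window is full before judging
        rolling = sum(self.recent) / len(self.recent)
        return (self.baseline - rolling) > self.tolerance


# Synthetic stream: performance matches the baseline, then degrades.
monitor = DriftMonitor(baseline_accuracy=0.9, window=10, tolerance=0.05)
stream = [True] * 9 + [False] + [True] * 5 + [False] * 3
alerts = [monitor.observe(outcome) for outcome in stream]
first_alert = alerts.index(True) if True in alerts else None
print(f"first alert at case index: {first_alert}")
```

A fixed threshold on a short window is easy to reason about but prone to false alarms. A real deployment would more likely use a statistical test or control chart and would have to account for the delay before ground truth becomes available.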

Regional Analysis

Based on regional analysis, the AI-Based Medical Imaging Algorithm Validation Test Platforms Market is segmented into North America, Europe, and Asia Pacific across 40-plus countries.


| Country | CAGR (2026 to 2036) |
|---|---|
| India | 13.4% |
| China | 12.9% |
| United States | 12.6% |
| United Kingdom | 12.3% |
| Germany | 11.7% |
| South Korea | 11.4% |
| Japan | 10.8% |

Source: Future Market Insights (FMI) analysis, based on proprietary forecasting model and primary research

Asia Pacific AI-Based Medical Imaging Algorithm Validation Test Platforms Market Analysis

Expansion of the regional developer environment is placing direct pressure on clinical benchmarking infrastructure across the Asia Pacific. Hospital networks are moving away from sequential pilot programs and shifting toward enterprise-wide validation platform deployments to manage scale. Underutilized patient datasets require structured curation environments before reliable model testing can begin. Institutional decision-makers prioritize platforms that can detect demographic bias in externally developed diagnostic models, as performance variation across local populations remains a persistent concern. Validation based on Western regulatory datasets does not translate effectively in this region, forcing hospitals to build localized benchmarking capabilities before procurement decisions.

- India: Hospitals are dealing with a growing pipeline of locally developed diagnostic tools that require testing against region-specific disease patterns and imaging variability. Standard validation frameworks often fall short in capturing these nuances, pushing hospitals to build customized evaluation pipelines. Demand for AI-based medical imaging algorithm validation platforms in India is anticipated to rise at a 13.4% CAGR as institutions replace fragmented testing practices with structured governance systems. Validation strength increasingly influences which hospitals qualify for international research collaborations and external funding programs.

- China: Urban hospital clusters operate at high patient volumes, requiring centralized infrastructure to evaluate diagnostic algorithms at scale without slowing clinical workflows. China’s AI‑based medical imaging algorithm validation platforms sector is expected to increase at a CAGR of 12.9% during the forecast period, supported by ongoing digital healthcare programs. Radiology IT teams rely on integrated validation platforms to assess domestically developed models quickly and under consistent conditions. Competitive pressure among leading hospitals to define national benchmarking standards is shaping how these systems are deployed and continuously refined.

- South Korea: The regulatory approach enforces strict validation and continuous monitoring requirements before and after software deployment, leaving limited room for partial compliance. Hospitals integrate post-market surveillance capabilities directly into validation platforms to meet audit expectations and maintain clinical oversight. Adoption of AI-based medical imaging algorithm validation platforms in South Korea is largely driven by system upgrades and replacement cycles within established hospital networks. The industry is projected to advance at a CAGR of 11.4%, underscoring why vendors without strong bias detection and audit traceability struggle to meet entry requirements in this environment.

- Japan: Clinical evaluation is closely tied to aging population dynamics, where diagnostic accuracy across geriatric conditions carries a higher risk sensitivity. Hospitals use validation platforms to confirm consistent model performance across long-term care settings and specialized clinical pathways before deployment. Demand for AI-based medical imaging algorithm validation platforms in Japan is anticipated to advance at a 10.8% CAGR, reflecting a controlled adoption pace shaped by regulatory scrutiny. Purchasing teams set strict evaluation criteria that lengthen validation but lower clinical risk.

FMI's report includes detailed assessments of emerging digital infrastructure developments spanning Southeast Asia and Australasia. Advanced data localization mandates across these supplementary territories will radically reshape cross‑border algorithmic validation capabilities moving forward. In addition, India is witnessing rapid investment in hospital IT modernization, creating opportunities for validation platforms to align with national digital health initiatives.

North America AI-Based Medical Imaging Algorithm Validation Test Platforms Market Analysis

Mature quality registry requirements push enterprise hospital networks toward tightly controlled benchmarking protocols across multi-site operations. Clinical governance boards carry direct legal exposure, which makes continuous post-deployment auditing a requirement across large radiology information system installations. Historical imaging archives give hospitals a strong base for testing, allowing validation teams to measure performance against real clinical data instead of controlled trial outputs. Radiology IT directors use these environments during vendor negotiations, relying on locally generated accuracy results to challenge pricing and contract terms. Platforms that fail to integrate with billing systems and reporting workflows face resistance, as they add operational burden without improving decision clarity.

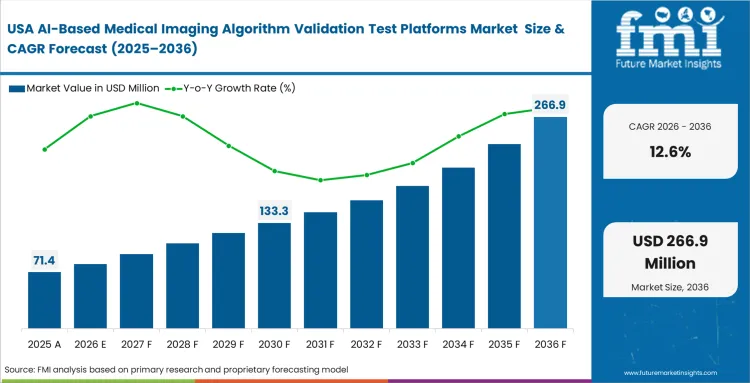

- United States: Regulatory expectations around software traceability require full lifecycle documentation across all approved functions, shaping how validation systems are selected and deployed. Demand for AI-based medical imaging algorithm validation platforms in the United States is anticipated to rise at a 12.6% CAGR through 2036, as hospital consolidation continues to push the need for uniform performance standards across sites. Chief Medical Information Officers implement enterprise-scale benchmarking platforms to meet ongoing FDA audit requirements and maintain consistent validation records across hospital networks. Institutions with well-structured validation datasets are starting to commercialize access, turning internal benchmarking into a secondary revenue stream.

FMI's report includes Canadian market metrics detailing provincial healthcare system validation integration. Centralized provincial procurement models create unique bulk-licensing opportunities for specialized algorithmic testing platforms. Beyond North America, Brazil is also emerging as a growth market, where expanding private healthcare networks are driving demand for scalable validation solutions.

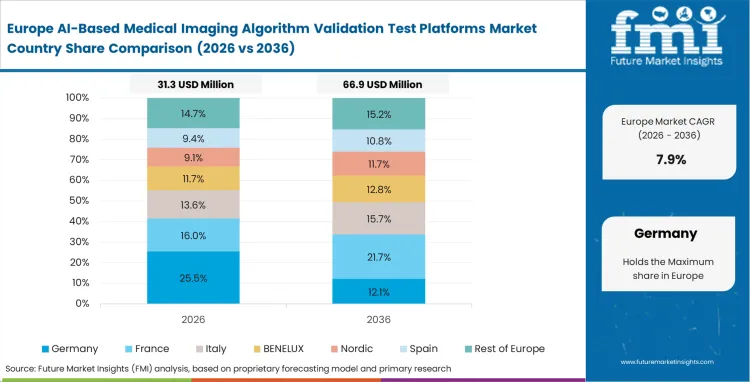

Europe AI-Based Medical Imaging Algorithm Validation Test Platforms Market Analysis

National healthcare systems structure how algorithms are deployed and validated across centralized clinical networks. Procurement bodies require detailed benchmarking of oncology imaging software to maintain consistent diagnostic performance across regional trusts. Data privacy rules prevent raw patient data from leaving institutional boundaries, which forces hospitals to adopt localized validation environments. Hospitals use these platforms to generate the clinical evidence needed for reimbursement approval at the national level. Vendors that cannot demonstrate transparent, locally executed validation are excluded from large-scale public procurement programs.

- United Kingdom: Centralized healthcare delivery requires consistent evaluation standards across multiple regional trusts, which shapes how validation platforms are deployed. Procurement bodies rely on UK medical imaging AI benchmarking software to establish clinical utility before allocating national funding. Demand for AI-based medical imaging algorithm validation platforms in the United Kingdom is expected to scale at a CAGR of 12.3%, aligned with government efforts to reduce diagnostic backlogs. Validation success at leading hospitals often leads to rapid adoption across the wider national network.

- Germany: A strict clinical governance environment requires independent verification of algorithm performance before deployment into routine workflows. Quality assurance teams run continuous validation against live diagnostic outputs to detect performance drift over time. Hospitals are moving away from basic testing scripts toward integrated enterprise benchmarking systems to strengthen oversight and standardization. Platform selection depends heavily on compatibility with existing data privacy infrastructure, which limits vendor access in this market. Adoption of AI-based medical imaging algorithm validation platforms in Germany is estimated to advance at an 11.7% CAGR during the forecast period, reflecting this shift toward structured and compliant validation environments.

FMI's report includes analysis of Nordic region collaborative validation efforts. Cross-border data sharing agreements enable localized platforms to pool evaluation metrics without compromising individual patient anonymity. Outside Europe, Singapore is seeing strong momentum in digital health investments, which is expected to accelerate adoption of validation platforms across its hospital networks.

Competitive Aligners for Market Players

Algorithm testing environments need enough flexibility to handle inconsistent clinical data formats coming from different imaging systems. Vendors such as CARPL.ai tend to offer unified environments where hospitals can evaluate multiple algorithms on the same local datasets under identical conditions. Clinical teams selecting radiology AI validation platform vendors focus on how easily systems connect through APIs rather than raw computing capability. Platforms that fit smoothly into existing annotation and reporting workflows see higher adoption, as they avoid disrupting ongoing diagnostic operations that already rely on computer vision in healthcare.

Established platform providers benefit from large internal libraries of standardized datasets and predefined evaluation frameworks built over time. Established vendors such as deepc use structured templates and repeatable evaluation frameworks to shorten validation timelines when responding to an RFP for a radiology AI evaluation platform. New entrants find it difficult to match this level of clinical context and operational alignment. Hospitals expect consistent performance monitoring, including the ability to detect subtle model drift without triggering unnecessary alerts, which takes time to prove in real-world settings.

Large hospital networks maintain strict control over validation data to avoid dependency on external vendors. They distribute digital pathology evaluation contracts across multiple platforms to reduce reliance on a single provider. Platform vendors aim to expand across enterprise systems, while hospitals push for modular setups that allow flexibility and control. Competitive positioning increasingly depends on how clearly vendors can demonstrate bias detection, audit transparency, and compliance with tightening regulatory expectations.

Key Players in AI-Based Medical Imaging Algorithm Validation Test Platforms Market

- CARPL.ai

- deepc

- Blackford

- MD.ai

- RedBrick AI

- V7

- Enlitic

Scope of the Report

| Metric | Value |
|---|---|
| Quantitative Units | USD 128.0 Million to USD 390.5 Million, at a CAGR of 11.80% |
| Market Definition | Specialized software environments utilized by clinical institutions and developers to evaluate, benchmark, and monitor diagnostic imaging algorithms against diverse local datasets prior to and during active clinical deployment. |
| Segmentation | By Component, Deployment, Validation Stage, Modality, End User, and Region |
| Regions Covered | North America, Latin America, Europe, Asia Pacific, Middle East and Africa |
| Countries Covered | United States, India, China, United Kingdom, Germany, South Korea, Japan |
| Key Companies Profiled | CARPL.ai, deepc, Blackford, MD.ai, RedBrick AI, V7, Enlitic |
| Forecast Period | 2026 to 2036 |
| Approach | Active hospital AI deployment volumes and quality registry participation |

Source: Future Market Insights (FMI) analysis, based on proprietary forecasting model and primary research

AI-Based Medical Imaging Algorithm Validation Test Platforms Market Analysis by Segments

Component:

- Software

- Services

- Data

Deployment:

- Cloud

- On-premise

- Hybrid

Validation Stage:

- Predeployment

- Development

- Postdeployment

Modality:

- X-ray

- CT

- MRI

- Ultrasound

- Mammography

End User:

- Hospitals

- AI vendors

- Academic centers

- Imaging centers

- CROs

Region:

- North America

- Latin America

- Europe

- Asia Pacific

- Middle East and Africa

Bibliography

- American College of Radiology. (2024, November 18). American College of Radiology launches landmark artificial intelligence quality registry.

- Antonissen, N., Tryfonos, J., Stogiannos, N., et al. (2026). Artificial intelligence in radiology: 173 commercially available products and their scientific evidence. European Radiology.

- European Commission, Directorate-General for Health and Food Safety. (2025, June). MDCG 2025-6: Interplay between the Medical Devices Regulation (MDR) & In vitro Diagnostic Medical Devices Regulation (IVDR) and the Artificial Intelligence Act (AIA).

- Food and Drug Administration. (2025, January 7). Artificial intelligence-enabled device software functions: Lifecycle management and marketing submission recommendations [Draft guidance].

- International Medical Device Regulators Forum. (2025, January 29). Good machine learning practice for medical device development: Guiding principles.

- Ramsay, A. I. G., Fulop, N. J., Deeny, S. R., et al. (2025). Procurement and early deployment of artificial intelligence tools for chest diagnostics in NHS services in England: A rapid mixed-method evaluation. Cell Reports Medicine.

This bibliography is provided for reader reference. The full FMI report contains the complete reference list with primary source documentation.

This Report Addresses

- Chief Medical Information Officer liability protocols dictating post-deployment algorithmic auditing.

- Multi-site hospital standardization requirements forcing centralized side-by-side vendor benchmarking.

- FDA guidance frameworks imposing strict lifecycle monitoring for deployed diagnostic software.

- Cloud architecture scalability driving rapid integration with existing clinical annotation workflows.

- Massive historical X-ray archive utilization accelerating baseline validation metrics.

- India's developer ecosystem expansion creating unique regional pathology evaluation pipelines.

- Proprietary dataset curation capabilities separating dominant platform incumbents from emerging competitors.

- Federated learning architectures enabling secure cross-institutional diagnostic equity evaluations.

Frequently Asked Questions

What is a medical imaging AI validation platform?

A medical imaging AI validation platform is a specialized software environment that clinical institutions and developers use to evaluate, benchmark, and monitor diagnostic imaging algorithms against diverse local datasets before and during active clinical deployment.

Why do hospitals need local validation for radiology AI?

Integrated delivery networks mandate standardized local performance equity proofs before authorizing any third-party algorithmic software purchases to prevent misdiagnosis linked to specific regional demographic variations.

How is radiology AI tested before deployment?

Clinical governance boards test proposed models against historical local patient scans in secure sandbox environments to identify unacceptable bias blind spots before live patient interaction occurs.

Which platform is best for validating radiology AI models?

Evaluators reviewing vendors prioritize solutions offering frictionless API connectivity, native structured reporting integration, and extensive proprietary libraries of standardized benchmarking datasets to accelerate institutional validation timelines.

What specific liability forces hospitals to implement continuous auditing?

Unnoticed algorithmic drift caused by subtle scanner recalibrations exposes institutions to severe malpractice risks if automated diagnostic accuracy quietly degrades.

Why do X-ray models represent the largest validation segment?

Large historical archives of labeled radiographs provide the high-volume baseline datasets needed to generate statistically significant early validation scores.

How do platforms prevent vendor lock-in?

Independent software layers allow Radiology IT directors to conduct side-by-side performance comparisons across multiple competing commercial models simultaneously.
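As a rough illustration of a side-by-side comparison, the sketch below scores two competing models against the same locally labeled cases. The model names, labels, and predictions are hypothetical placeholders, and real platforms would add confidence intervals, subgroup breakdowns, and far larger samples.

```python
def confusion_counts(labels, predictions):
    """Count true/false positives and negatives for binary findings."""
    counts = {"tp": 0, "fp": 0, "tn": 0, "fn": 0}
    for truth, predicted in zip(labels, predictions):
        if truth == 1 and predicted == 1:
            counts["tp"] += 1
        elif truth == 0 and predicted == 1:
            counts["fp"] += 1
        elif truth == 0 and predicted == 0:
            counts["tn"] += 1
        else:
            counts["fn"] += 1
    return counts


def safe_ratio(numerator, denominator):
    """Return a ratio, or NaN when the denominator is zero."""
    return numerator / denominator if denominator else float("nan")


def compare_models(labels, model_outputs):
    """Score every model on the same local ground truth."""
    results = {}
    for name, predictions in model_outputs.items():
        c = confusion_counts(labels, predictions)
        results[name] = {
            "sensitivity": safe_ratio(c["tp"], c["tp"] + c["fn"]),
            "specificity": safe_ratio(c["tn"], c["tn"] + c["fp"]),
        }
    return results


# Hypothetical local ground truth (1 = finding present) and vendor outputs.
local_labels = [1, 1, 1, 1, 0, 0, 0, 0]
model_outputs = {
    "vendor_a": [1, 1, 1, 0, 0, 0, 0, 1],
    "vendor_b": [1, 1, 0, 0, 0, 0, 0, 0],
}

comparison = compare_models(local_labels, model_outputs)
for name, metrics in comparison.items():
    print(name, metrics)
```

Scoring every model on identical cases is what makes the comparison vendor-neutral: differences in the numbers reflect the models, not the test sets.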

What structural difference elevates Indian adoption rates?

Expanding localized developer ecosystems actively build custom evaluation pipelines to test tools against unique regional pathology variants ignored by Western datasets.

Why do hospitals retain massive market share?

Ultimate diagnostic liability rests entirely upon the facility executing patient care directives, preventing them from delegating quality assurance back to external developers.

How does USA quality registry participation impact platform usage?

Mature registry requirements force enterprise networks to deploy highly standardized benchmarking protocols to satisfy escalating auditing demands.

What operational friction bottlenecks initial platform deployment?

Fragmented clinical data architectures force evaluators to spend excessive hours manually cleaning imaging files before functional testing can commence.

Why is API integration crucial for competitive platforms?

Clinical researchers require frictionless connectivity with existing annotation tools to avoid manual data transfer delays during rigorous testing cycles.

How do federated networks alter future validation capabilities?

Secure collaborative environments allow independent hospitals to pool algorithmic performance metrics without transferring sensitive raw patient files externally.
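One simple way to picture this pooling is for each site to share only aggregate confusion counts, never images or patient records. The sketch below assumes that arrangement; the site names and counts are invented for illustration, and it shows metric aggregation only, not federated model training.

```python
def pool_site_counts(site_reports):
    """Sum per-site confusion counts into one pooled tally."""
    pooled = {"tp": 0, "fp": 0, "tn": 0, "fn": 0}
    for report in site_reports:
        for key in pooled:
            pooled[key] += report[key]
    return pooled


def sensitivity(counts):
    """Share of true findings the model detected."""
    return counts["tp"] / (counts["tp"] + counts["fn"])


def specificity(counts):
    """Share of normal cases the model correctly cleared."""
    return counts["tn"] / (counts["tn"] + counts["fp"])


# Hypothetical aggregate reports; no raw patient data leaves either site.
site_reports = [
    {"site": "hospital_1", "tp": 40, "fp": 5, "tn": 50, "fn": 5},
    {"site": "hospital_2", "tp": 20, "fp": 10, "tn": 60, "fn": 10},
]

pooled = pool_site_counts(site_reports)
print("Pooled sensitivity:", sensitivity(pooled))
print("Pooled specificity:", specificity(pooled))
```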

What limits rapid scaling in the Japanese market?

Conservative regulatory frameworks dictate highly deliberate adoption pacing centered on extreme accuracy scrutiny for geriatric disease presentations.

Why do platforms command dedicated budget allocations?

Relying solely on generalized vendor-supplied clearance data creates unacceptable clinical risk for enterprise implementations targeting diverse regional demographics.

How does validation software impact vendor pricing negotiations?

Algorithm procurement leads utilize raw comparative performance data from local sandboxes to demand discounts if accuracy scores fall below advertised thresholds.

What distinguishes the UK deployment trajectory?

Nationalized multi-site deployment initiatives require unified evaluation standards across disparate regional trusts before central funding releases occur.

Why do compliance officers demand centralized audit trails?

Software logs every test parameter utilized during validation, providing exact records required during post-deployment regulatory audits.

How does data monetization influence platform utilization?

Enterprise administrators occasionally leverage comprehensive local validation results as a distinct commercial asset during negotiations with external software developers.

What prevents rapid new entrant penetration?

Incumbent platform providers possess massive proprietary libraries of standardized benchmarking datasets that accelerate institutional validation timelines significantly.

Why must platforms integrate with existing structured reporting systems?

Radiology IT directors need to intercept formatting conflicts in sandbox environments before models roll out across enterprise networks.

How do automated drift tools alter post-market surveillance?

Continuous background auditing alerts administrators when live model accuracy deviates from baseline parameters, intercepting errors before they multiply.
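A minimal sketch of such a drift check follows, assuming a rolling window of confirmed case outcomes compared against a fixed baseline accuracy. The class name, window size, and tolerance are illustrative assumptions, not any vendor's implementation.

```python
from collections import deque


class DriftMonitor:
    """Flag when rolling accuracy falls too far below a validated baseline."""

    def __init__(self, baseline_accuracy, tolerance, window_size):
        self.baseline_accuracy = baseline_accuracy
        self.tolerance = tolerance
        # Only the most recent `window_size` outcomes are retained.
        self.window = deque(maxlen=window_size)

    def record(self, prediction_correct):
        """Add one confirmed outcome; return True if a drift alert fires."""
        self.window.append(1 if prediction_correct else 0)
        if len(self.window) < self.window.maxlen:
            return False  # not enough cases yet for a stable estimate
        live_accuracy = sum(self.window) / len(self.window)
        return (self.baseline_accuracy - live_accuracy) > self.tolerance


# Hypothetical settings: 90% baseline, alert on a drop of more than 5 points.
monitor = DriftMonitor(baseline_accuracy=0.90, tolerance=0.05, window_size=10)

# Ten correct reads followed by three misses.
outcomes = [True] * 10 + [False] * 3
alerts = [monitor.record(outcome) for outcome in outcomes]
print(alerts)
```

In this toy run the alert first fires on the second miss, when rolling accuracy over the last ten cases drops to 80%. A production system would typically use larger windows and statistical tests rather than a fixed threshold.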

What role do clinical oversight boards play in Germany?

These boards formalize strict cross-validation protocols demanding independent accuracy verification integrated directly into existing data privacy architectures.

Table of Content

- Executive Summary

- Global Market Outlook

- Demand-side Trends

- Supply-side Trends

- Technology Roadmap Analysis

- Analysis and Recommendations

- Market Overview

- Market Coverage / Taxonomy

- Market Definition / Scope / Limitations

- Research Methodology

- Chapter Orientation

- Analytical Lens and Working Hypotheses

- Market Structure, Signals, and Trend Drivers

- Benchmarking and Cross-market Comparability

- Market Sizing, Forecasting, and Opportunity Mapping

- Research Design and Evidence Framework

- Desk Research Programme (Secondary Evidence)

- Company Annual and Sustainability Reports

- Peer-reviewed Journals and Academic Literature

- Corporate Websites, Product Literature, and Technical Notes

- Earnings Decks and Investor Briefings

- Statutory Filings and Regulatory Disclosures

- Technical White Papers and Standards Notes

- Trade Journals, Industry Magazines, and Analyst Briefs

- Conference Proceedings, Webinars, and Seminar Materials

- Government Statistics Portals and Public Data Releases

- Press Releases and Reputable Media Coverage

- Specialist Newsletters and Curated Briefings

- Sector Databases and Reference Repositories

- FMI Internal Proprietary Databases and Historical Market Datasets

- Subscription Datasets and Paid Sources

- Social Channels, Communities, and Digital Listening Inputs

- Additional Desk Sources

- Expert Input and Fieldwork (Primary Evidence)

- Primary Modes

- Qualitative Interviews and Expert Elicitation

- Quantitative Surveys and Structured Data Capture

- Blended Approach

- Why Primary Evidence is Used

- Field Techniques

- Interviews

- Surveys

- Focus Groups

- Observational and In-context Research

- Social and Community Interactions

- Stakeholder Universe Engaged

- C-suite Leaders

- Board Members

- Presidents and Vice Presidents

- R&D and Innovation Heads

- Technical Specialists

- Domain Subject-matter Experts

- Scientists

- Physicians and Other Healthcare Professionals

- Governance, Ethics, and Data Stewardship

- Research Ethics

- Data Integrity and Handling

- Tooling, Models, and Reference Databases

- Data Engineering and Model Build

- Data Acquisition and Ingestion

- Cleaning, Normalisation, and Verification

- Synthesis, Triangulation, and Analysis

- Quality Assurance and Audit Trail

- Market Background

- Market Dynamics

- Drivers

- Restraints

- Opportunity

- Trends

- Scenario Forecast

- Demand in Optimistic Scenario

- Demand in Likely Scenario

- Demand in Conservative Scenario

- Opportunity Map Analysis

- Product Life Cycle Analysis

- Supply Chain Analysis

- Investment Feasibility Matrix

- Value Chain Analysis

- PESTLE and Porter’s Analysis

- Regulatory Landscape

- Regional Parent Market Outlook

- Production and Consumption Statistics

- Import and Export Statistics

- Global Market Analysis 2021 to 2025 and Forecast, 2026 to 2036

- Historical Market Size Value (USD Million) Analysis, 2021 to 2025

- Current and Future Market Size Value (USD Million) Projections, 2026 to 2036

- Y-o-Y Growth Trend Analysis

- Absolute $ Opportunity Analysis

- Global Market Pricing Analysis 2021 to 2025 and Forecast 2026 to 2036

- Global Market Analysis 2021 to 2025 and Forecast 2026 to 2036, By Component

- Introduction / Key Findings

- Historical Market Size Value (USD Million) Analysis By Component, 2021 to 2025

- Current and Future Market Size Value (USD Million) Analysis and Forecast By Component, 2026 to 2036

- Software

- Services

- Data

- Y-o-Y Growth Trend Analysis By Component, 2021 to 2025

- Absolute $ Opportunity Analysis By Component, 2026 to 2036

- Global Market Analysis 2021 to 2025 and Forecast 2026 to 2036, By Deployment

- Introduction / Key Findings

- Historical Market Size Value (USD Million) Analysis By Deployment, 2021 to 2025

- Current and Future Market Size Value (USD Million) Analysis and Forecast By Deployment, 2026 to 2036

- Cloud

- On-premise

- Hybrid

- Y-o-Y Growth Trend Analysis By Deployment, 2021 to 2025

- Absolute $ Opportunity Analysis By Deployment, 2026 to 2036

- Global Market Analysis 2021 to 2025 and Forecast 2026 to 2036, By Validation Stage

- Introduction / Key Findings

- Historical Market Size Value (USD Million) Analysis By Validation Stage, 2021 to 2025

- Current and Future Market Size Value (USD Million) Analysis and Forecast By Validation Stage, 2026 to 2036

- Predeployment

- Development

- Postdeployment

- Y-o-Y Growth Trend Analysis By Validation Stage, 2021 to 2025

- Absolute $ Opportunity Analysis By Validation Stage, 2026 to 2036

- Global Market Analysis 2021 to 2025 and Forecast 2026 to 2036, By Modality

- Introduction / Key Findings

- Historical Market Size Value (USD Million) Analysis By Modality, 2021 to 2025

- Current and Future Market Size Value (USD Million) Analysis and Forecast By Modality, 2026 to 2036

- X-ray

- CT

- MRI

- Y-o-Y Growth Trend Analysis By Modality, 2021 to 2025

- Absolute $ Opportunity Analysis By Modality, 2026 to 2036

- Global Market Analysis 2021 to 2025 and Forecast 2026 to 2036, By End User

- Introduction / Key Findings

- Historical Market Size Value (USD Million) Analysis By End User, 2021 to 2025

- Current and Future Market Size Value (USD Million) Analysis and Forecast By End User, 2026 to 2036

- Hospital

- Y-o-Y Growth Trend Analysis By End User, 2021 to 2025

- Absolute $ Opportunity Analysis By End User, 2026 to 2036

- Global Market Analysis 2021 to 2025 and Forecast 2026 to 2036, By Region

- Introduction

- Historical Market Size Value (USD Million) Analysis By Region, 2021 to 2025

- Current Market Size Value (USD Million) Analysis and Forecast By Region, 2026 to 2036

- North America

- Latin America

- Western Europe

- Eastern Europe

- East Asia

- South Asia and Pacific

- Middle East & Africa

- Market Attractiveness Analysis By Region

- North America Market Analysis 2021 to 2025 and Forecast 2026 to 2036, By Country

- Historical Market Size Value (USD Million) Trend Analysis By Market Taxonomy, 2021 to 2025

- Market Size Value (USD Million) Forecast By Market Taxonomy, 2026 to 2036

- By Country

- USA

- Canada

- Mexico

- By Component

- By Deployment

- By Validation Stage

- By Modality

- By End User

- Market Attractiveness Analysis

- By Country

- By Component

- By Deployment

- By Validation Stage

- By Modality

- By End User

- Key Takeaways

- Latin America Market Analysis 2021 to 2025 and Forecast 2026 to 2036, By Country

- Historical Market Size Value (USD Million) Trend Analysis By Market Taxonomy, 2021 to 2025

- Market Size Value (USD Million) Forecast By Market Taxonomy, 2026 to 2036

- By Country

- Brazil

- Chile

- Rest of Latin America

- By Component

- By Deployment

- By Validation Stage

- By Modality

- By End User

- Market Attractiveness Analysis

- By Country

- By Component

- By Deployment

- By Validation Stage

- By Modality

- By End User

- Key Takeaways

- Western Europe Market Analysis 2021 to 2025 and Forecast 2026 to 2036, By Country

- Historical Market Size Value (USD Million) Trend Analysis By Market Taxonomy, 2021 to 2025

- Market Size Value (USD Million) Forecast By Market Taxonomy, 2026 to 2036

- By Country

- Germany

- UK

- Italy

- Spain

- France

- Nordic

- BENELUX

- Rest of Western Europe

- By Component

- By Deployment

- By Validation Stage

- By Modality

- By End User

- Market Attractiveness Analysis

- By Country

- By Component

- By Deployment

- By Validation Stage

- By Modality

- By End User

- Key Takeaways

- Eastern Europe Market Analysis 2021 to 2025 and Forecast 2026 to 2036, By Country

- Historical Market Size Value (USD Million) Trend Analysis By Market Taxonomy, 2021 to 2025

- Market Size Value (USD Million) Forecast By Market Taxonomy, 2026 to 2036

- By Country

- Russia

- Poland

- Hungary

- Balkan & Baltic

- Rest of Eastern Europe

- By Component

- By Deployment

- By Validation Stage

- By Modality

- By End User

- Market Attractiveness Analysis

- By Country

- By Component

- By Deployment

- By Validation Stage

- By Modality

- By End User

- Key Takeaways

- East Asia Market Analysis 2021 to 2025 and Forecast 2026 to 2036, By Country

- Historical Market Size Value (USD Million) Trend Analysis By Market Taxonomy, 2021 to 2025

- Market Size Value (USD Million) Forecast By Market Taxonomy, 2026 to 2036

- By Country

- China

- Japan

- South Korea

- By Component

- By Deployment

- By Validation Stage

- By Modality

- By End User

- Market Attractiveness Analysis

- By Country

- By Component

- By Deployment

- By Validation Stage

- By Modality

- By End User

- Key Takeaways

- South Asia and Pacific Market Analysis 2021 to 2025 and Forecast 2026 to 2036, By Country

- Historical Market Size Value (USD Million) Trend Analysis By Market Taxonomy, 2021 to 2025

- Market Size Value (USD Million) Forecast By Market Taxonomy, 2026 to 2036

- By Country

- India

- ASEAN

- Australia & New Zealand

- Rest of South Asia and Pacific

- By Component

- By Deployment

- By Validation Stage

- By Modality

- By End User

- Market Attractiveness Analysis

- By Country

- By Component

- By Deployment

- By Validation Stage

- By Modality

- By End User

- Key Takeaways

- Middle East & Africa Market Analysis 2021 to 2025 and Forecast 2026 to 2036, By Country

- Historical Market Size Value (USD Million) Trend Analysis By Market Taxonomy, 2021 to 2025

- Market Size Value (USD Million) Forecast By Market Taxonomy, 2026 to 2036

- By Country

- Kingdom of Saudi Arabia

- Other GCC Countries

- Turkiye

- South Africa

- Other African Union

- Rest of Middle East & Africa

- By Component

- By Deployment

- By Validation Stage

- By Modality

- By End User

- Market Attractiveness Analysis

- By Country

- By Component

- By Deployment

- By Validation Stage

- By Modality

- By End User

- Key Takeaways

- Key Countries Market Analysis

- USA

- Pricing Analysis

- Market Share Analysis, 2025

- By Component

- By Deployment

- By Validation Stage

- By Modality

- By End User

- Canada

- Pricing Analysis

- Market Share Analysis, 2025

- By Component

- By Deployment

- By Validation Stage

- By Modality

- By End User

- Mexico

- Pricing Analysis

- Market Share Analysis, 2025

- By Component

- By Deployment

- By Validation Stage

- By Modality

- By End User

- Brazil

- Pricing Analysis

- Market Share Analysis, 2025

- By Component

- By Deployment

- By Validation Stage

- By Modality

- By End User

- Chile

- Pricing Analysis

- Market Share Analysis, 2025

- By Component

- By Deployment

- By Validation Stage

- By Modality

- By End User

- Germany

- Pricing Analysis

- Market Share Analysis, 2025

- By Component

- By Deployment

- By Validation Stage

- By Modality

- By End User

- UK

- Pricing Analysis

- Market Share Analysis, 2025

- By Component

- By Deployment

- By Validation Stage

- By Modality

- By End User

- Italy

- Pricing Analysis

- Market Share Analysis, 2025

- By Component

- By Deployment

- By Validation Stage

- By Modality

- By End User

- Spain

- Pricing Analysis

- Market Share Analysis, 2025

- By Component

- By Deployment

- By Validation Stage

- By Modality

- By End User

- France

- Pricing Analysis

- Market Share Analysis, 2025

- By Component

- By Deployment

- By Validation Stage

- By Modality

- By End User

- India

- Pricing Analysis

- Market Share Analysis, 2025

- By Component

- By Deployment

- By Validation Stage

- By Modality

- By End User

- ASEAN

- Pricing Analysis

- Market Share Analysis, 2025

- By Component

- By Deployment

- By Validation Stage

- By Modality

- By End User

- Australia & New Zealand

- Pricing Analysis

- Market Share Analysis, 2025

- By Component

- By Deployment

- By Validation Stage

- By Modality

- By End User

- China

- Pricing Analysis

- Market Share Analysis, 2025

- By Component

- By Deployment

- By Validation Stage

- By Modality

- By End User

- Japan

- Pricing Analysis

- Market Share Analysis, 2025

- By Component

- By Deployment

- By Validation Stage

- By Modality

- By End User

- South Korea

- Pricing Analysis

- Market Share Analysis, 2025

- By Component

- By Deployment

- By Validation Stage

- By Modality

- By End User

- Russia

- Pricing Analysis

- Market Share Analysis, 2025

- By Component

- By Deployment

- By Validation Stage

- By Modality

- By End User

- Poland

- Pricing Analysis

- Market Share Analysis, 2025

- By Component

- By Deployment

- By Validation Stage

- By Modality

- By End User

- Hungary

- Pricing Analysis

- Market Share Analysis, 2025

- By Component

- By Deployment

- By Validation Stage

- By Modality

- By End User

- Kingdom of Saudi Arabia

- Pricing Analysis

- Market Share Analysis, 2025

- By Component

- By Deployment

- By Validation Stage

- By Modality

- By End User

- Turkiye

- Pricing Analysis

- Market Share Analysis, 2025

- By Component

- By Deployment

- By Validation Stage

- By Modality

- By End User

- South Africa

- Pricing Analysis

- Market Share Analysis, 2025

- By Component

- By Deployment

- By Validation Stage

- By Modality

- By End User

- Market Structure Analysis

- Competition Dashboard

- Competition Benchmarking

- Market Share Analysis of Top Players

- By Regional

- By Component

- By Deployment

- By Validation Stage

- By Modality

- By End User

- Competition Analysis

- Competition Deep Dive

- CARPL.ai

- Overview

- Product Portfolio

- Profitability by Market Segments (Product/Age/Sales Channel/Region)

- Sales Footprint

- Strategy Overview

- Marketing Strategy

- Product Strategy

- Channel Strategy

- deepc

- Blackford

- MD.ai

- RedBrick AI

- V7

- Assumptions & Acronyms Used

List of Tables

- Table 1: Global Market Value (USD Million) Forecast by Region, 2021 to 2036

- Table 2: Global Market Value (USD Million) Forecast by Component, 2021 to 2036

- Table 3: Global Market Value (USD Million) Forecast by Deployment, 2021 to 2036

- Table 4: Global Market Value (USD Million) Forecast by Validation Stage, 2021 to 2036

- Table 5: Global Market Value (USD Million) Forecast by Modality, 2021 to 2036

- Table 6: Global Market Value (USD Million) Forecast by End User, 2021 to 2036

- Table 7: North America Market Value (USD Million) Forecast by Country, 2021 to 2036

- Table 8: North America Market Value (USD Million) Forecast by Component, 2021 to 2036

- Table 9: North America Market Value (USD Million) Forecast by Deployment, 2021 to 2036

- Table 10: North America Market Value (USD Million) Forecast by Validation Stage, 2021 to 2036

- Table 11: North America Market Value (USD Million) Forecast by Modality, 2021 to 2036

- Table 12: North America Market Value (USD Million) Forecast by End User, 2021 to 2036

- Table 13: Latin America Market Value (USD Million) Forecast by Country, 2021 to 2036

- Table 14: Latin America Market Value (USD Million) Forecast by Component, 2021 to 2036

- Table 15: Latin America Market Value (USD Million) Forecast by Deployment, 2021 to 2036

- Table 16: Latin America Market Value (USD Million) Forecast by Validation Stage, 2021 to 2036

- Table 17: Latin America Market Value (USD Million) Forecast by Modality, 2021 to 2036

- Table 18: Latin America Market Value (USD Million) Forecast by End User, 2021 to 2036

- Table 19: Western Europe Market Value (USD Million) Forecast by Country, 2021 to 2036

- Table 20: Western Europe Market Value (USD Million) Forecast by Component, 2021 to 2036

- Table 21: Western Europe Market Value (USD Million) Forecast by Deployment, 2021 to 2036

- Table 22: Western Europe Market Value (USD Million) Forecast by Validation Stage, 2021 to 2036

- Table 23: Western Europe Market Value (USD Million) Forecast by Modality, 2021 to 2036

- Table 24: Western Europe Market Value (USD Million) Forecast by End User, 2021 to 2036

- Table 25: Eastern Europe Market Value (USD Million) Forecast by Country, 2021 to 2036

- Table 26: Eastern Europe Market Value (USD Million) Forecast by Component, 2021 to 2036

- Table 27: Eastern Europe Market Value (USD Million) Forecast by Deployment, 2021 to 2036

- Table 28: Eastern Europe Market Value (USD Million) Forecast by Validation Stage, 2021 to 2036

- Table 29: Eastern Europe Market Value (USD Million) Forecast by Modality, 2021 to 2036

- Table 30: Eastern Europe Market Value (USD Million) Forecast by End User, 2021 to 2036

- Table 31: East Asia Market Value (USD Million) Forecast by Country, 2021 to 2036

- Table 32: East Asia Market Value (USD Million) Forecast by Component, 2021 to 2036

- Table 33: East Asia Market Value (USD Million) Forecast by Deployment, 2021 to 2036

- Table 34: East Asia Market Value (USD Million) Forecast by Validation Stage, 2021 to 2036

- Table 35: East Asia Market Value (USD Million) Forecast by Modality, 2021 to 2036

- Table 36: East Asia Market Value (USD Million) Forecast by End User, 2021 to 2036

- Table 37: South Asia and Pacific Market Value (USD Million) Forecast by Country, 2021 to 2036

- Table 38: South Asia and Pacific Market Value (USD Million) Forecast by Component, 2021 to 2036

- Table 39: South Asia and Pacific Market Value (USD Million) Forecast by Deployment, 2021 to 2036

- Table 40: South Asia and Pacific Market Value (USD Million) Forecast by Validation Stage, 2021 to 2036

- Table 41: South Asia and Pacific Market Value (USD Million) Forecast by Modality, 2021 to 2036

- Table 42: South Asia and Pacific Market Value (USD Million) Forecast by End User, 2021 to 2036

- Table 43: Middle East & Africa Market Value (USD Million) Forecast by Country, 2021 to 2036

- Table 44: Middle East & Africa Market Value (USD Million) Forecast by Component, 2021 to 2036

- Table 45: Middle East & Africa Market Value (USD Million) Forecast by Deployment, 2021 to 2036

- Table 46: Middle East & Africa Market Value (USD Million) Forecast by Validation Stage, 2021 to 2036

- Table 47: Middle East & Africa Market Value (USD Million) Forecast by Modality, 2021 to 2036

- Table 48: Middle East & Africa Market Value (USD Million) Forecast by End User, 2021 to 2036

List of Figures

- Figure 1: Global Market Pricing Analysis

- Figure 2: Global Market Value (USD Million) Forecast 2021-2036

- Figure 3: Global Market Value Share and BPS Analysis by Component, 2026 and 2036

- Figure 4: Global Market Y-o-Y Growth Comparison by Component, 2026-2036

- Figure 5: Global Market Attractiveness Analysis by Component

- Figure 6: Global Market Value Share and BPS Analysis by Deployment, 2026 and 2036

- Figure 7: Global Market Y-o-Y Growth Comparison by Deployment, 2026-2036

- Figure 8: Global Market Attractiveness Analysis by Deployment

- Figure 9: Global Market Value Share and BPS Analysis by Validation Stage, 2026 and 2036

- Figure 10: Global Market Y-o-Y Growth Comparison by Validation Stage, 2026-2036

- Figure 11: Global Market Attractiveness Analysis by Validation Stage

- Figure 12: Global Market Value Share and BPS Analysis by Modality, 2026 and 2036

- Figure 13: Global Market Y-o-Y Growth Comparison by Modality, 2026-2036

- Figure 14: Global Market Attractiveness Analysis by Modality

- Figure 15: Global Market Value Share and BPS Analysis by End User, 2026 and 2036

- Figure 16: Global Market Y-o-Y Growth Comparison by End User, 2026-2036

- Figure 17: Global Market Attractiveness Analysis by End User

- Figure 18: Global Market Value (USD Million) Share and BPS Analysis by Region, 2026 and 2036

- Figure 19: Global Market Y-o-Y Growth Comparison by Region, 2026-2036

- Figure 20: Global Market Attractiveness Analysis by Region

- Figure 21: North America Market Incremental Dollar Opportunity, 2026-2036

- Figure 22: Latin America Market Incremental Dollar Opportunity, 2026-2036

- Figure 23: Western Europe Market Incremental Dollar Opportunity, 2026-2036

- Figure 24: Eastern Europe Market Incremental Dollar Opportunity, 2026-2036

- Figure 25: East Asia Market Incremental Dollar Opportunity, 2026-2036

- Figure 26: South Asia and Pacific Market Incremental Dollar Opportunity, 2026-2036

- Figure 27: Middle East & Africa Market Incremental Dollar Opportunity, 2026-2036

- Figure 28: North America Market Value Share and BPS Analysis by Country, 2026 and 2036

- Figure 29: North America Market Value Share and BPS Analysis by Component, 2026 and 2036

- Figure 30: North America Market Y-o-Y Growth Comparison by Component, 2026-2036

- Figure 31: North America Market Attractiveness Analysis by Component

- Figure 32: North America Market Value Share and BPS Analysis by Deployment, 2026 and 2036

- Figure 33: North America Market Y-o-Y Growth Comparison by Deployment, 2026-2036

- Figure 34: North America Market Attractiveness Analysis by Deployment

- Figure 35: North America Market Value Share and BPS Analysis by Validation Stage, 2026 and 2036

- Figure 36: North America Market Y-o-Y Growth Comparison by Validation Stage, 2026-2036

- Figure 37: North America Market Attractiveness Analysis by Validation Stage

- Figure 38: North America Market Value Share and BPS Analysis by Modality, 2026 and 2036

- Figure 39: North America Market Y-o-Y Growth Comparison by Modality, 2026-2036

- Figure 40: North America Market Attractiveness Analysis by Modality

- Figure 41: North America Market Value Share and BPS Analysis by End User, 2026 and 2036

- Figure 42: North America Market Y-o-Y Growth Comparison by End User, 2026-2036

- Figure 43: North America Market Attractiveness Analysis by End User

- Figure 44: Latin America Market Value Share and BPS Analysis by Country, 2026 and 2036

- Figure 45: Latin America Market Value Share and BPS Analysis by Component, 2026 and 2036

- Figure 46: Latin America Market Y-o-Y Growth Comparison by Component, 2026-2036

- Figure 47: Latin America Market Attractiveness Analysis by Component

- Figure 48: Latin America Market Value Share and BPS Analysis by Deployment, 2026 and 2036

- Figure 49: Latin America Market Y-o-Y Growth Comparison by Deployment, 2026-2036

- Figure 50: Latin America Market Attractiveness Analysis by Deployment

- Figure 51: Latin America Market Value Share and BPS Analysis by Validation Stage, 2026 and 2036

- Figure 52: Latin America Market Y-o-Y Growth Comparison by Validation Stage, 2026-2036

- Figure 53: Latin America Market Attractiveness Analysis by Validation Stage

- Figure 54: Latin America Market Value Share and BPS Analysis by Modality, 2026 and 2036

- Figure 55: Latin America Market Y-o-Y Growth Comparison by Modality, 2026-2036

- Figure 56: Latin America Market Attractiveness Analysis by Modality

- Figure 57: Latin America Market Value Share and BPS Analysis by End User, 2026 and 2036

- Figure 58: Latin America Market Y-o-Y Growth Comparison by End User, 2026-2036

- Figure 59: Latin America Market Attractiveness Analysis by End User

- Figure 60: Western Europe Market Value Share and BPS Analysis by Country, 2026 and 2036

- Figure 61: Western Europe Market Value Share and BPS Analysis by Component, 2026 and 2036

- Figure 62: Western Europe Market Y-o-Y Growth Comparison by Component, 2026-2036

- Figure 63: Western Europe Market Attractiveness Analysis by Component

- Figure 64: Western Europe Market Value Share and BPS Analysis by Deployment, 2026 and 2036

- Figure 65: Western Europe Market Y-o-Y Growth Comparison by Deployment, 2026-2036

- Figure 66: Western Europe Market Attractiveness Analysis by Deployment

- Figure 67: Western Europe Market Value Share and BPS Analysis by Validation Stage, 2026 and 2036

- Figure 68: Western Europe Market Y-o-Y Growth Comparison by Validation Stage, 2026-2036

- Figure 69: Western Europe Market Attractiveness Analysis by Validation Stage

- Figure 70: Western Europe Market Value Share and BPS Analysis by Modality, 2026 and 2036

- Figure 71: Western Europe Market Y-o-Y Growth Comparison by Modality, 2026-2036

- Figure 72: Western Europe Market Attractiveness Analysis by Modality

- Figure 73: Western Europe Market Value Share and BPS Analysis by End User, 2026 and 2036

- Figure 74: Western Europe Market Y-o-Y Growth Comparison by End User, 2026-2036

- Figure 75: Western Europe Market Attractiveness Analysis by End User

- Figure 76: Eastern Europe Market Value Share and BPS Analysis by Country, 2026 and 2036

- Figure 77: Eastern Europe Market Value Share and BPS Analysis by Component, 2026 and 2036

- Figure 78: Eastern Europe Market Y-o-Y Growth Comparison by Component, 2026-2036

- Figure 79: Eastern Europe Market Attractiveness Analysis by Component

- Figure 80: Eastern Europe Market Value Share and BPS Analysis by Deployment, 2026 and 2036

- Figure 81: Eastern Europe Market Y-o-Y Growth Comparison by Deployment, 2026-2036

- Figure 82: Eastern Europe Market Attractiveness Analysis by Deployment

- Figure 83: Eastern Europe Market Value Share and BPS Analysis by Validation Stage, 2026 and 2036

- Figure 84: Eastern Europe Market Y-o-Y Growth Comparison by Validation Stage, 2026-2036

- Figure 85: Eastern Europe Market Attractiveness Analysis by Validation Stage

- Figure 86: Eastern Europe Market Value Share and BPS Analysis by Modality, 2026 and 2036

- Figure 87: Eastern Europe Market Y-o-Y Growth Comparison by Modality, 2026-2036

- Figure 88: Eastern Europe Market Attractiveness Analysis by Modality

- Figure 89: Eastern Europe Market Value Share and BPS Analysis by End User, 2026 and 2036

- Figure 90: Eastern Europe Market Y-o-Y Growth Comparison by End User, 2026-2036

- Figure 91: Eastern Europe Market Attractiveness Analysis by End User

- Figure 92: East Asia Market Value Share and BPS Analysis by Country, 2026 and 2036

- Figure 93: East Asia Market Value Share and BPS Analysis by Component, 2026 and 2036

- Figure 94: East Asia Market Y-o-Y Growth Comparison by Component, 2026-2036

- Figure 95: East Asia Market Attractiveness Analysis by Component

- Figure 96: East Asia Market Value Share and BPS Analysis by Deployment, 2026 and 2036

- Figure 97: East Asia Market Y-o-Y Growth Comparison by Deployment, 2026-2036

- Figure 98: East Asia Market Attractiveness Analysis by Deployment

- Figure 99: East Asia Market Value Share and BPS Analysis by Validation Stage, 2026 and 2036

- Figure 100: East Asia Market Y-o-Y Growth Comparison by Validation Stage, 2026-2036

- Figure 101: East Asia Market Attractiveness Analysis by Validation Stage

- Figure 102: East Asia Market Value Share and BPS Analysis by Modality, 2026 and 2036

- Figure 103: East Asia Market Y-o-Y Growth Comparison by Modality, 2026-2036

- Figure 104: East Asia Market Attractiveness Analysis by Modality

- Figure 105: East Asia Market Value Share and BPS Analysis by End User, 2026 and 2036

- Figure 106: East Asia Market Y-o-Y Growth Comparison by End User, 2026-2036

- Figure 107: East Asia Market Attractiveness Analysis by End User

- Figure 108: South Asia and Pacific Market Value Share and BPS Analysis by Country, 2026 and 2036

- Figure 109: South Asia and Pacific Market Value Share and BPS Analysis by Component, 2026 and 2036

- Figure 110: South Asia and Pacific Market Y-o-Y Growth Comparison by Component, 2026-2036

- Figure 111: South Asia and Pacific Market Attractiveness Analysis by Component

- Figure 112: South Asia and Pacific Market Value Share and BPS Analysis by Deployment, 2026 and 2036

- Figure 113: South Asia and Pacific Market Y-o-Y Growth Comparison by Deployment, 2026-2036

- Figure 114: South Asia and Pacific Market Attractiveness Analysis by Deployment

- Figure 115: South Asia and Pacific Market Value Share and BPS Analysis by Validation Stage, 2026 and 2036

- Figure 116: South Asia and Pacific Market Y-o-Y Growth Comparison by Validation Stage, 2026-2036

- Figure 117: South Asia and Pacific Market Attractiveness Analysis by Validation Stage

- Figure 118: South Asia and Pacific Market Value Share and BPS Analysis by Modality, 2026 and 2036

- Figure 119: South Asia and Pacific Market Y-o-Y Growth Comparison by Modality, 2026-2036

- Figure 120: South Asia and Pacific Market Attractiveness Analysis by Modality

- Figure 121: South Asia and Pacific Market Value Share and BPS Analysis by End User, 2026 and 2036

- Figure 122: South Asia and Pacific Market Y-o-Y Growth Comparison by End User, 2026-2036

- Figure 123: South Asia and Pacific Market Attractiveness Analysis by End User

- Figure 124: Middle East & Africa Market Value Share and BPS Analysis by Country, 2026 and 2036

- Figure 125: Middle East & Africa Market Value Share and BPS Analysis by Component, 2026 and 2036

- Figure 126: Middle East & Africa Market Y-o-Y Growth Comparison by Component, 2026-2036

- Figure 127: Middle East & Africa Market Attractiveness Analysis by Component

- Figure 128: Middle East & Africa Market Value Share and BPS Analysis by Deployment, 2026 and 2036

- Figure 129: Middle East & Africa Market Y-o-Y Growth Comparison by Deployment, 2026-2036

- Figure 130: Middle East & Africa Market Attractiveness Analysis by Deployment

- Figure 131: Middle East & Africa Market Value Share and BPS Analysis by Validation Stage, 2026 and 2036

- Figure 132: Middle East & Africa Market Y-o-Y Growth Comparison by Validation Stage, 2026-2036

- Figure 133: Middle East & Africa Market Attractiveness Analysis by Validation Stage

- Figure 134: Middle East & Africa Market Value Share and BPS Analysis by Modality, 2026 and 2036

- Figure 135: Middle East & Africa Market Y-o-Y Growth Comparison by Modality, 2026-2036

- Figure 136: Middle East & Africa Market Attractiveness Analysis by Modality

- Figure 137: Middle East & Africa Market Value Share and BPS Analysis by End User, 2026 and 2036

- Figure 138: Middle East & Africa Market Y-o-Y Growth Comparison by End User, 2026-2036

- Figure 139: Middle East & Africa Market Attractiveness Analysis by End User

- Figure 140: Global Market - Tier Structure Analysis

- Figure 141: Global Market - Company Share Analysis