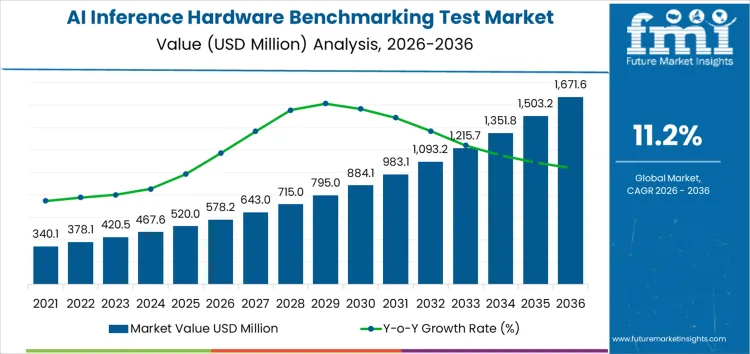

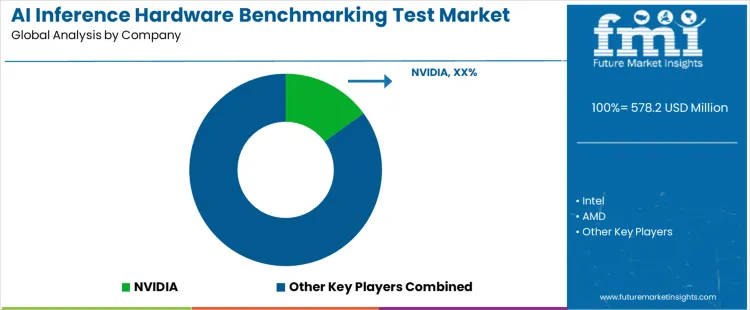

The AI inference hardware benchmarking test market is valued at USD 578.2 million in 2026 and is projected to reach USD 1,672.1 million by 2036, expanding at a CAGR of 11.2%. Growth is driven by the rapid deployment of inference workloads across data centers, edge nodes, and embedded systems supporting real-time decision-making. Demand reflects the requirement for objective performance comparison across GPUs, CPUs, NPUs, and custom accelerators, with a focus on latency, throughput, power efficiency, and cost per inference under realistic workloads.

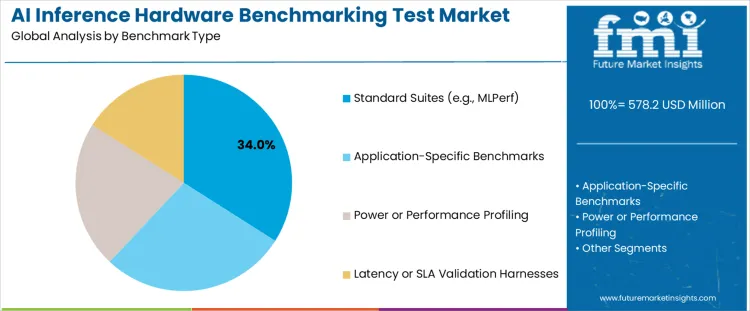

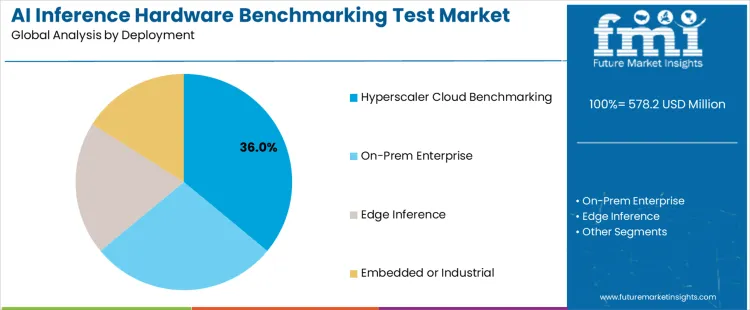

Standard benchmark suites represent the leading benchmark type globally, as enterprises and developers prioritize comparability, repeatability, and ecosystem-wide acceptance. These suites enable consistent evaluation across architectures, software stacks, and optimization frameworks. Hyperscaler cloud benchmarking is the leading deployment environment, reflecting reliance on cloud-based AI inference services for scalability testing, workload simulation, and performance transparency. The segment structure highlights a preference for vendor-neutral metrics aligned with production inference scenarios rather than synthetic or isolated testing.

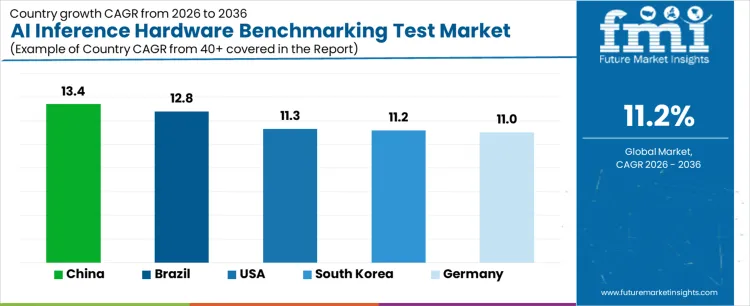

China, Brazil, the USA, South Korea, and Germany rank among the fastest-growing countries, supported by AI infrastructure expansion and public-private investment in compute capacity. The competitive landscape includes NVIDIA, Intel, AMD, Qualcomm, and Arm, alongside cloud platforms such as Google Cloud, Amazon Web Services, and Microsoft Azure. Competitive intensity reflects the convergence of silicon performance and cloud-scale benchmarking transparency.

| Metric | Value |
|---|---|
| Market Value (2026) | USD 578.2 million |
| Market Forecast Value (2036) | USD 1,672.1 million |
| Forecast CAGR (2026 to 2036) | 11.2% |
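The relationship between the three figures in the table can be checked with the standard compound annual growth rate formula. The sketch below is illustrative only: the function names `cagr` and `project` are hypothetical, and the ten-year horizon is taken from the stated 2026 to 2036 forecast window.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate as a fraction (0.112 means 11.2%)."""
    return (end_value / start_value) ** (1 / years) - 1


def project(start_value: float, rate: float, years: int) -> float:
    """Value after compounding `rate` annually for `years` years."""
    return start_value * (1 + rate) ** years


# Figures from the table above, in USD million.
implied_rate = cagr(578.2, 1672.1, 10)
print(f"Implied CAGR 2026-2036: {implied_rate:.1%}")

# Projecting forward at exactly 11.2% gives a value slightly below the
# stated forecast, which is consistent with the CAGR being a rounded figure.
print(f"2036 value at exactly 11.2%: USD {project(578.2, 0.112, 10):.1f} million")
```

Because the published CAGR is rounded to one decimal place, small differences between a recomputed end value and the stated forecast are expected.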

Demand for AI inference hardware benchmarking test solutions is growing globally due to rapid adoption of artificial intelligence across cloud, edge, autonomous systems, and consumer electronics. AI inference workloads require specialized hardware accelerators, including GPUs, TPUs, NPUs, and custom ASICs, which must be evaluated for performance, power efficiency, latency, and accuracy before deployment. Benchmarking test platforms enable objective comparison of hardware capabilities across diverse model architectures and real-world use cases. Growth in AI-driven applications such as natural language processing, computer vision, robotics, and real-time analytics increases pressure on manufacturers and system integrators to verify hardware suitability under varied operating conditions.

Standardized benchmarking supports procurement decisions, design optimization, and performance tuning within competitive global markets. Semiconductor vendors, hyperscale cloud providers, and enterprise IT organizations adopt test systems to validate inference throughput and scalability. Research institutions and industry consortia contribute to benchmarking frameworks that align cross-vendor expectations and interoperability. Expansion of edge AI nodes amplifies the need for lightweight, energy-efficient benchmarking tools that reflect field conditions. Ongoing emphasis on reliability, model accuracy, and hardware acceleration performance reinforces structured evaluation across AI inference ecosystems worldwide.

Demand for AI inference hardware benchmarking test solutions globally is shaped by deployment-scale performance validation, workload diversity, and energy-efficiency accountability. Buyers assess reproducibility, workload representativeness, power measurement fidelity, and comparability across architectures. Adoption patterns reflect benchmarking needs across cloud, enterprise, edge, and embedded deployments, with emphasis on aligning measured results to real inference latency, throughput, and service-level expectations.

Standard benchmark suites hold 34.0%, representing the largest share of global demand. These suites provide comparable, repeatable measurements across vendors and platforms, supporting procurement and public disclosure workflows. Application-specific benchmarks hold 28.0%, enabling tuning to domain workloads and model characteristics that generic suites cannot capture. Power or performance profiling holds 22.0%, supporting evaluation of efficiency, thermal behavior, and cost-per-inference metrics. Latency or SLA validation harnesses hold 16.0%, addressing tail latency and determinism requirements for real-time services. Benchmark-type distribution reflects preference for standardized comparability complemented by workload-specific realism.


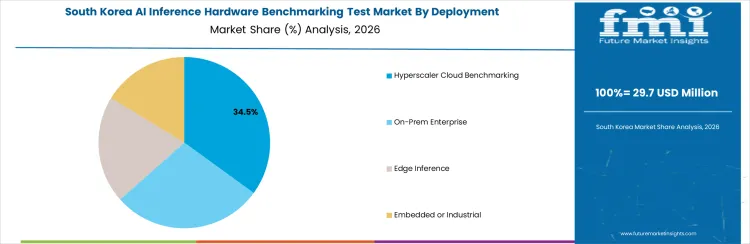

Hyperscaler cloud benchmarking holds 36.0%, driving the highest share of global activity. Large-scale deployments require rigorous comparison across accelerators to optimize fleet efficiency and service consistency. On-prem enterprise environments hold 28.0%, validating inference performance within controlled infrastructure and security constraints. Edge inference accounts for 20.0%, emphasizing latency, power limits, and environmental variability. Embedded or industrial deployments hold 16.0%, focusing on determinism and long-lifecycle operation. Deployment distribution reflects concentration of benchmarking intensity where scale and cost sensitivity are highest.
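As a rough illustration, the deployment shares above can be converted into approximate dollar values. This sketch assumes the shares apply to the 2026 market value of USD 578.2 million; the resulting figures are derived arithmetic for illustration, not values published in the report, and the names `DEPLOYMENT_SHARES` and `segment_values` are hypothetical.

```python
MARKET_2026_USD_M = 578.2  # 2026 market value in USD million, from the report

# Deployment shares in percent, as stated in the paragraph above.
DEPLOYMENT_SHARES = {
    "Hyperscaler cloud benchmarking": 36.0,
    "On-prem enterprise": 28.0,
    "Edge inference": 20.0,
    "Embedded or industrial": 16.0,
}


def segment_values(total: float, shares: dict) -> dict:
    """Split a market total by percentage shares, checking they sum to 100."""
    if abs(sum(shares.values()) - 100.0) > 1e-9:
        raise ValueError("shares must sum to 100%")
    return {name: total * pct / 100.0 for name, pct in shares.items()}


values = segment_values(MARKET_2026_USD_M, DEPLOYMENT_SHARES)
for name, value in values.items():
    print(f"{name}: approx. USD {value:.1f} million")
```

The sum check guards against transcription errors in the shares before any derived value is used.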


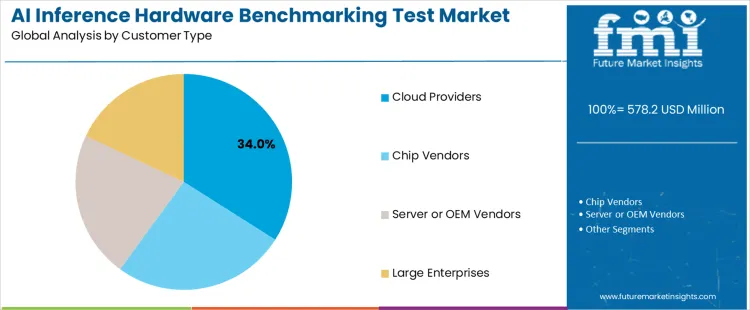

Cloud providers hold 34.0%, accounting for the largest share of global demand. Providers benchmark hardware to guide capacity planning, instance design, and customer transparency. Chip vendors hold 26.0%, validating architectural gains and competitive positioning across workloads. Server or OEM vendors account for 22.0%, supporting platform qualification and customer acceptance. Large enterprises hold 18.0%, benchmarking inference hardware for internal deployments and procurement decisions. Customer distribution reflects benchmarking ownership concentrated among entities responsible for platform performance and large-scale deployment outcomes.


Global demand rises as hardware developers, cloud providers, and enterprise IT organizations adopt benchmarking test solutions to evaluate the performance, efficiency, and reliability of AI inference accelerators. Benchmarking platforms measure throughput, latency, power consumption, and model accuracy under representative workloads. Adoption aligns with the proliferation of edge AI devices, data center scale-out, and diverse inference architectures across CPUs, GPUs, TPUs, FPGAs, and purpose-built ASICs. Usage spans research labs, system integrators, and hyperscale cloud providers requiring transparent performance assessment.

Developers of AI hardware require benchmarking to compare inference throughput and energy efficiency across model families such as CNNs, transformers, and quantized networks. Test solutions capture metrics under real-world conditions, supporting optimization of memory hierarchy, instruction scheduling, and hardware–software co-design. Cloud providers benchmark configurations to inform instance offerings and pricing tiers. Edge computing vendors assess performance under constrained power and thermal envelopes, balancing latency and accuracy. Standardized benchmark suites and open datasets support comparability across architectures and generational improvements. Demand grows where performance differentiation influences procurement decisions in autonomous systems, healthcare imaging, natural language processing, and recommendation engines.
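The throughput and latency metrics discussed above can be captured with a very small timing harness. The following is a minimal sketch using only the Python standard library, not a substitute for an established suite: `benchmark`, `percentile`, and `dummy_infer` are hypothetical names, and `dummy_infer` is a CPU-bound placeholder standing in for a real model call.

```python
import math
import time
from typing import Callable, Dict, List


def percentile(sorted_values: List[float], pct: float) -> float:
    """Nearest-rank percentile of an ascending-sorted list."""
    rank = max(1, math.ceil(pct / 100.0 * len(sorted_values)))
    return sorted_values[rank - 1]


def benchmark(infer: Callable[[], object], warmup: int = 10,
              iterations: int = 200) -> Dict[str, float]:
    """Time repeated single-request calls and summarize latency and throughput."""
    for _ in range(warmup):  # warm caches before measuring
        infer()
    latencies = []
    start = time.perf_counter()
    for _ in range(iterations):
        t0 = time.perf_counter()
        infer()
        latencies.append(time.perf_counter() - t0)
    elapsed = time.perf_counter() - start
    latencies.sort()
    return {
        "p50_ms": percentile(latencies, 50) * 1000,
        "p99_ms": percentile(latencies, 99) * 1000,
        "throughput_per_s": iterations / elapsed,
    }


def dummy_infer() -> int:
    """Placeholder workload; replace with a real inference call."""
    return sum(i * i for i in range(10_000))


results = benchmark(dummy_infer)
print(results)
```

Reporting a high percentile such as p99 alongside the median matters because tail latency, rather than average latency, typically determines whether a real-time service meets its service-level targets.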

AI inference benchmarking platforms involve investment in test harnesses, performance counters, and environment control to replicate operational conditions. Fragmentation of frameworks, precision formats, and workload diversity necessitates adaptable test tooling, increasing engineering complexity. Lack of universal standards for inference benchmarking complicates cross-platform comparisons and requires ongoing maintenance of test suites as architectures evolve. Skilled personnel are necessary to design, execute, and interpret benchmark campaigns. Regional disparities in access to cutting-edge hardware concentrate advanced benchmarking capability within major technology clusters, while smaller developers rely on shared resources or benchmarking-as-a-service offerings. Global growth depends on clearer benchmarking standards, modular test solutions, and community-supported frameworks that lower barriers to comprehensive performance evaluation across the expanding AI inference hardware landscape.

Demand for AI inference hardware benchmarking test systems is increasing globally due to rapid deployment of edge and data center inference, heterogeneous accelerator adoption, and procurement decisions driven by performance per watt. China leads with a 13.4% CAGR, supported by domestic accelerator programs and large-scale deployments. Brazil follows at 12.8%, driven by applied research and pilot production. The USA records an 11.3% CAGR, shaped by platform refresh cycles and competitive vendor evaluation. South Korea posts 11.2%, reflecting electronics validation rigor and telecom inference use. Germany records 11.0%, supported by industrial AI and compliance-driven testing. Growth reflects the need for standardized, workload-representative, and repeatable benchmarking worldwide.

| Country | CAGR (%) |
|---|---|
| China | 13.4% |
| Brazil | 12.8% |
| USA | 11.3% |
| South Korea | 11.2% |
| Germany | 11.0% |

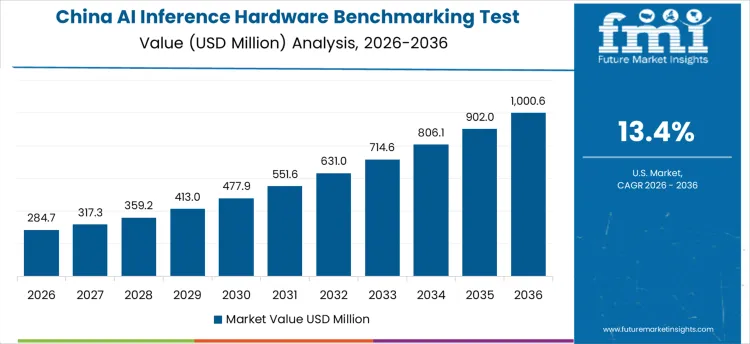

China drives demand through rapid rollout of domestic AI accelerators across cloud, edge, and embedded deployments. The country's CAGR of 13.4% reflects extensive benchmarking of throughput, latency, accuracy retention, and power efficiency under representative workloads. Organizations compare architectures across computer vision, NLP, and recommender inference. In-house labs shorten procurement cycles and protect IP. Platforms emphasize automation, dataset management, and reproducibility at scale. Integration with thermal and power telemetry enables performance-per-watt analysis. Growth remains scale-driven and deployment-aligned, supported by national compute initiatives and vendor competition.
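Performance per watt can be derived by combining a throughput measurement with power telemetry sampled over the same run. The sketch below assumes evenly spaced power samples so that a simple mean is a reasonable estimate of average power; the function names and the sample numbers are hypothetical and chosen only to keep the arithmetic easy to follow.

```python
from statistics import mean
from typing import Sequence


def average_power_w(samples_w: Sequence[float]) -> float:
    """Mean power in watts, assuming evenly spaced telemetry samples."""
    return mean(samples_w)


def performance_per_watt(inferences: int, duration_s: float,
                         avg_power_w: float) -> float:
    """Throughput divided by average power: inferences per second per watt."""
    return (inferences / duration_s) / avg_power_w


def energy_per_inference_j(inferences: int, duration_s: float,
                           avg_power_w: float) -> float:
    """Average energy per inference in joules (watts times seconds, per item)."""
    return avg_power_w * duration_s / inferences


# Hypothetical run: 10,000 inferences in 20 s with three power readings.
power = average_power_w([240.0, 250.0, 260.0])
ppw = performance_per_watt(10_000, 20.0, power)
epi = energy_per_inference_j(10_000, 20.0, power)
print(f"avg power {power:.0f} W, {ppw:.1f} inf/s/W, {epi:.2f} J/inference")
```

Inferences per second per watt is the same quantity as inferences per joule, so it is the reciprocal of energy per inference; reporting either one is sufficient as long as the unit is stated.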

Demand in Brazil is shaped by applied AI programs, pilot manufacturing, and growing enterprise inference adoption. The country's CAGR of 12.8% reflects investment in benchmarking to select cost-effective hardware for edge and private cloud deployments. Shared laboratories provide access to standardized suites and datasets. Collaboration with global vendors supports adoption of common metrics and methodologies. Demand favors modular platforms supporting mixed workloads and constrained footprints. Growth remains focused on capability building, aligned with gradual scaling from pilots to production inference.

Demand in the USA reflects frequent platform refreshes, heterogeneous accelerator landscapes, and competitive vendor selection. The country's CAGR of 11.3% is supported by benchmarking to compare GPUs, ASICs, and NPUs across standardized inference suites. Operators assess tail latency behavior, batching efficiency, and accuracy drift. Platforms integrate with CI pipelines for regression tracking and to inform procurement decisions. Emphasis remains on transparency, reproducibility, and correlation with production telemetry. Growth remains lifecycle-driven, aligned with refresh cadence and rapid model evolution.
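Regression tracking of the kind described above usually amounts to comparing each new benchmark run against a stored baseline and flagging metrics that worsen beyond a tolerance. The following is a minimal sketch of that idea; the metric names, the 5% tolerance, and the sample numbers are all hypothetical, and a real CI job would load results from stored run artifacts.

```python
from typing import Dict, List

# Direction of improvement for each tracked metric (hypothetical names).
HIGHER_IS_BETTER = {"throughput_per_s": True, "p99_latency_ms": False}


def find_regressions(baseline: Dict[str, float], current: Dict[str, float],
                     tolerance: float = 0.05) -> List[str]:
    """Return a message for each metric that worsened by more than `tolerance`."""
    regressions = []
    for metric, higher_is_better in HIGHER_IS_BETTER.items():
        base, new = baseline[metric], current[metric]
        change = (new - base) / base  # signed relative change
        worsened = -change if higher_is_better else change
        if worsened > tolerance:
            regressions.append(f"{metric}: {base} -> {new} ({change:+.1%})")
    return regressions


# Hypothetical results: throughput dips 2%, p99 latency rises 15%.
baseline = {"throughput_per_s": 1000.0, "p99_latency_ms": 20.0}
current = {"throughput_per_s": 980.0, "p99_latency_ms": 23.0}

regressions = find_regressions(baseline, current)
for message in regressions:
    print("REGRESSION", message)
```

In a pipeline, a non-empty result would typically fail the build or open a review item; the tolerance should be set above the run-to-run noise observed on the test hardware.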

Demand in South Korea reflects precision electronics validation, telecom inference workloads, and edge deployments. The country's CAGR of 11.2% is supported by benchmarking focused on determinism, power stability, and thermal headroom. Organizations validate inference under constrained envelopes and real-time requirements. Government-backed programs encourage standardized metrics. Facilities prioritize repeatability and high-fidelity measurement. Growth remains technology-driven, aligned with electronics reliability standards and network-centric inference use.

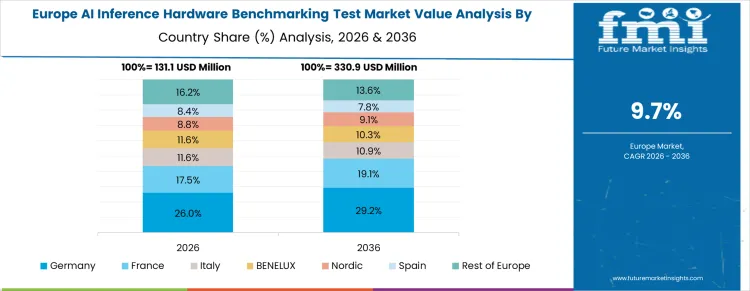

Demand in Germany reflects industrial AI, automotive perception, and a strong compliance culture. The country's CAGR of 11.0% is supported by benchmarking for safety, robustness, and efficiency across factory and vehicle inference. Platforms test under combined stresses and duty cycles. Shared institutes expand access to standardized suites. Procurement emphasizes documentation, traceability, and standards alignment. Growth remains engineering-led, aligned with reliability, safety, and long-term operability requirements.

Demand for AI inference hardware benchmarking test platforms is driven by deployment of AI workloads across data centers, edge devices, and embedded systems. Validation focuses on latency, throughput, power efficiency, model accuracy retention, and performance consistency under real-world inference loads. Buyers require standardized benchmarks, reproducible test environments, and coverage across vision, language, and recommendation workloads. Procurement teams assess software tooling maturity, framework compatibility, transparency of benchmark methodology, and the ability to reflect production inference scenarios. The global market trend reflects a growing emphasis on energy efficiency, mixed-precision inference, and workload-specific optimization rather than peak theoretical performance.

NVIDIA holds strong positioning through vertically integrated GPUs, inference software stacks, and widely adopted benchmarking frameworks used across industries. Intel and AMD compete through CPU and accelerator platforms evaluated using standardized inference suites and enterprise deployment metrics. Qualcomm and Arm support mobile and edge inference benchmarking through power-efficient architectures and ecosystem-aligned test methodologies. Cloud providers including Google Cloud, AWS, and Microsoft Azure influence benchmarking practices through managed inference services and published performance data. Dell Technologies and Supermicro support benchmarking through reference server platforms optimized for accelerator and CPU-based inference. Competitive differentiation depends on benchmark credibility, software ecosystem depth, hardware-software co-optimization, and relevance to production inference workloads.

| Items | Values |
|---|---|
| Quantitative Units | USD million |
| Benchmark Type | Standard Benchmark Suites including MLPerf; Application-Specific Inference Benchmarks; Power and Performance Profiling Frameworks; Latency and SLA Validation Harnesses |
| Deployment | Hyperscaler Cloud Benchmarking Environments; On-Prem Enterprise Test Labs; Edge Inference Validation; Embedded or Industrial Inference Platforms |
| Customer Type | Cloud Service Providers; AI Chip Vendors; Server or OEM Vendors; Large Enterprises with Internal AI Infrastructure |
| Regions Covered | Asia Pacific; Europe; North America; Latin America; Middle East & Africa |
| Countries Covered | China; USA; Brazil; Germany; South Korea; and 40+ countries |
| Key Companies Profiled | NVIDIA; Intel; AMD; Qualcomm; Arm; Google Cloud; AWS; Microsoft Azure; Dell Technologies; Supermicro |
| Additional Attributes | Dollar sales by benchmark type and deployment model; regional adoption patterns across cloud, enterprise, and edge inference; benchmarking demand for power efficiency and latency compliance; competitive positioning of AI accelerators; validation requirements for SLA-driven, production-scale inference workloads. |

How big is the AI inference hardware benchmarking test market in 2026?

The global AI inference hardware benchmarking test market is estimated to be valued at USD 578.2 million in 2026.

What will be the size of the AI inference hardware benchmarking test market in 2036?

The AI inference hardware benchmarking test market is projected to reach USD 1,672.1 million by 2036.

How much will the AI inference hardware benchmarking test market grow between 2026 and 2036?

The AI inference hardware benchmarking test market is expected to grow at an 11.2% CAGR between 2026 and 2036.

What are the key benchmark types in the AI inference hardware benchmarking test market?

The key benchmark types in the AI inference hardware benchmarking test market are standard benchmark suites (e.g., MLPerf), application-specific benchmarks, power or performance profiling, and latency or SLA validation harnesses.

Which deployment segment will contribute a significant share of the AI inference hardware benchmarking test market in 2026?

In terms of deployment, the hyperscaler cloud benchmarking segment is expected to command a 36.0% share of the AI inference hardware benchmarking test market in 2026.
