Logistics Data Quality and Normalization Services Market

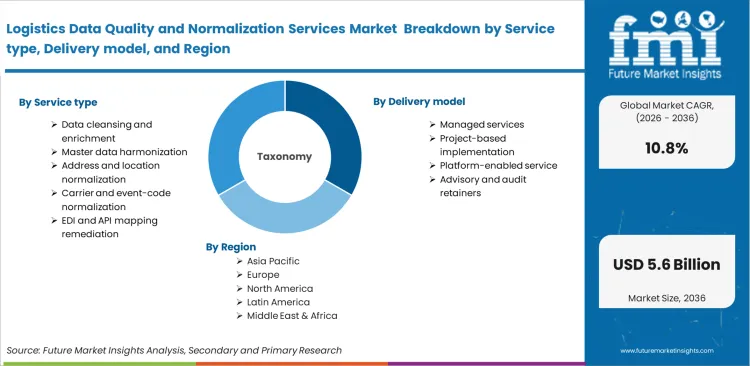

The Logistics Data Quality and Normalization Services Market is segmented by Service type (Data Cleansing, Master Data Harmonization, Address Normalization, Carrier Normalization, EDI Mapping), Delivery model (Managed Services, Project Implementation, Platforms, Advisory), Data domain (Shipment Tracking, Product SKU, Supplier, Location, Customs), Client type (Large Enterprises, Mid-Market, 3PLs, SMEs), End-use focus (Retail, Manufacturing, Automotive, Life Sciences, Cross-Border Trade), and Region. Forecast for 2026 to 2036.

Historical Data Covered: 2016 to 2024 | Base Year: 2025 | Estimated Year: 2026 | Forecast Period: 2027 to 2036

Logistics Data Quality and Normalization Services Market Size, Market Forecast and Outlook By FMI

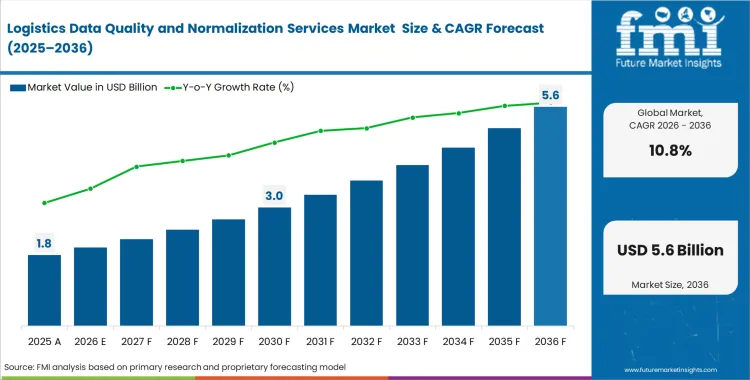

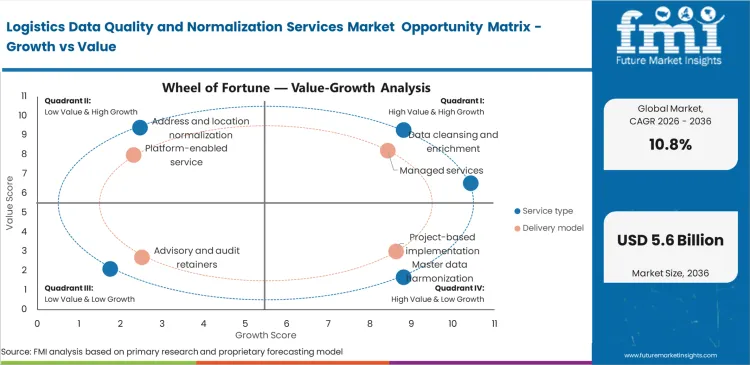

The logistics data quality and normalization services market recorded a valuation of USD 1.60 billion in 2025. Revenue is projected to reach USD 1.8 billion in 2026 and advance at a CAGR of 10.8% through 2036, taking total market value to USD 4.9 billion. The upward trajectory reflects steady spending by logistics operators that need consistent data standards across fragmented carrier, shipment, and partner networks.
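The headline figures can be cross-checked with the standard compound growth formula. The short Python sketch below uses the USD 1,770 million 2026 base and the 10.8% CAGR stated in the Scope of the Report table; treating the 2025 to 2026 step as growing at the same rate is an assumption made only for the back-calculation.

```python
# Cross-check of the headline figures with the compound growth formula:
#   value_end = value_start * (1 + cagr) ** years
# Inputs are in USD billion and come from the report; rounding mirrors its presentation.

def project(value_start: float, cagr: float, years: int) -> float:
    """Compound a starting value forward at a constant annual growth rate."""
    return value_start * (1 + cagr) ** years


def implied_cagr(value_start: float, value_end: float, years: int) -> float:
    """Back out the constant annual growth rate that links two values."""
    return (value_end / value_start) ** (1 / years) - 1


base_2026 = 1.77  # USD 1,770 million, from the Scope of the Report table
cagr = 0.108      # 10.8% CAGR over 2026 to 2036
years = 10

value_2036 = project(base_2026, cagr, years)
# Assumption: the 2025 to 2026 step grows at the same 10.8% rate.
value_2025 = base_2026 / (1 + cagr)
incremental = value_2036 - base_2026

print(f"2036 value:   USD {value_2036:.2f} billion")
print(f"2025 value:   USD {value_2025:.2f} billion")
print(f"Incremental:  USD {incremental:.2f} billion")
print(f"Implied CAGR: {implied_cagr(base_2026, 4.94, years):.1%}")
```

With these inputs the script reproduces the USD 4.94 billion 2036 value and the USD 3.17 billion incremental opportunity cited in the summary, and the back-calculated 2025 value rounds to USD 1.60 billion.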

Summary of Logistics Data Quality and Normalization Services Market

- Market Snapshot

- The logistics data quality and normalization services market is valued at USD 1.60 billion in 2025 and is projected to reach USD 4.9 billion by 2036.

- The industry is projected to expand at a CAGR of 10.8% from 2026 to 2036, creating an incremental opportunity of USD 3.17 billion over the forecast period.

- This market is a compliance-driven and integration-intensive logistics software services niche, where commercial value comes from cleansing, mapping, validating, and harmonizing shipment and partner data across fragmented operating environments.

- Vendors with logistics domain knowledge and the ability to maintain rules continuously are better placed than providers built around one-time migration assignments.

- Demand and Growth Drivers

- European freight digitization requirements are pushing operators away from paper-based workflows and toward standardized electronic freight information.

- DCSA standards are also improving the practicality of interoperable logistics events, definitions, and APIs for cross-carrier visibility and data exchange.

- Spending remains supported by the operating cost of poor master data, which can delay picking activity, interrupt process flow, and reduce logistics efficiency.

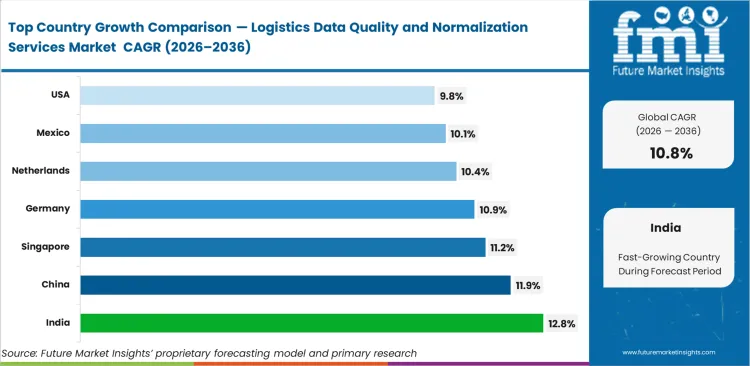

- Among key countries, India is poised to record a 12.8% CAGR, followed by China at 11.9%, Singapore at 11.2%, Germany at 10.9%, the Netherlands at 10.4%, Mexico at 10.1%, and the United States at 9.8%.

- Adoption still faces limits from long-tail partner onboarding, inconsistent reference formats, and the absence of common national or cross-platform access points for standardized exchange in many freight networks.

- Product and Segment View

- The market includes normalization, validation, matching, and mapping services applied to shipment data, product master records, partner records, carrier events, and customs information across logistics software environments.

- These services support transportation management, warehouse execution, customs compliance, real-time visibility, and trading-partner integration.

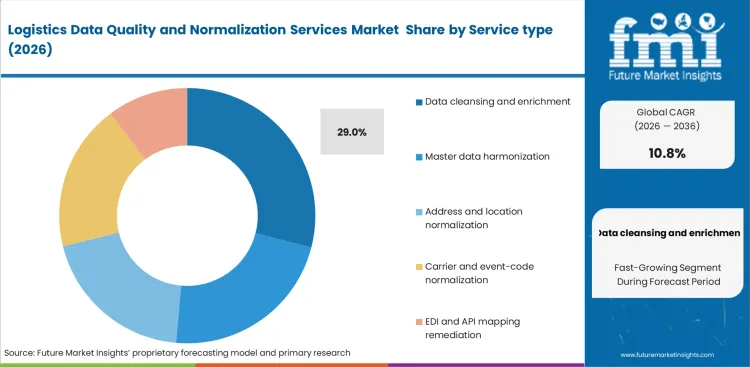

- Data cleansing is projected to represent 31.0% share of the service type segment in 2026 because most engagements begin with correcting incomplete, duplicate, and inconsistent records before automation can function reliably.

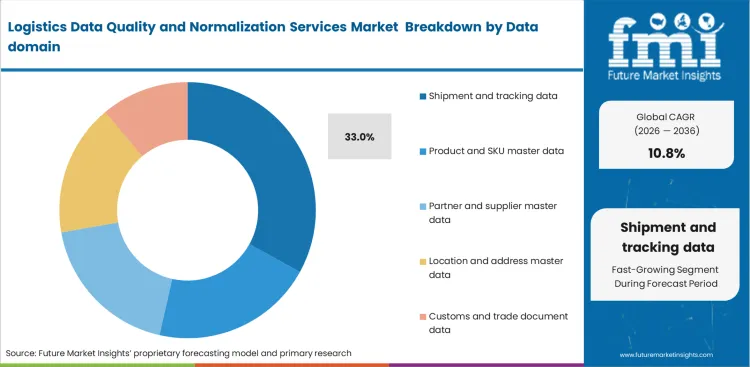

- Shipment data is expected to represent 34.0% share of the data domain segment in 2026, as milestone reconciliation, ETA logic, and carrier event alignment remain the most frequent operating pain points.

- Cloud is likely to hold 68.0% share of the deployment segment in 2026, reflecting the shift toward API-connected and multi-party logistics environments.

- 3PLs are projected to contribute 29.0% share of the end user segment in 2026 because they manage the widest mix of shipper, carrier, and platform data formats.

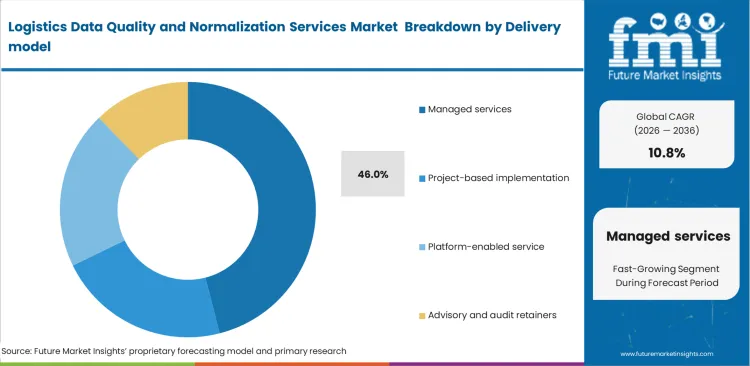

- Managed services are expected to account for 61.0% share of the delivery model segment in 2026, as freight data rules require ongoing maintenance rather than a single implementation cycle.

- The scope covers normalization services tied directly to logistics data flows, while excluding standalone TMS or WMS licenses, telematics hardware, and general consulting work without a data-remediation component.

- Geography and Competitive Outlook

- India, China, and Singapore remain the fastest-rising demand centers, while the United States continues to provide the largest stable commercial base for enterprise logistics data services.

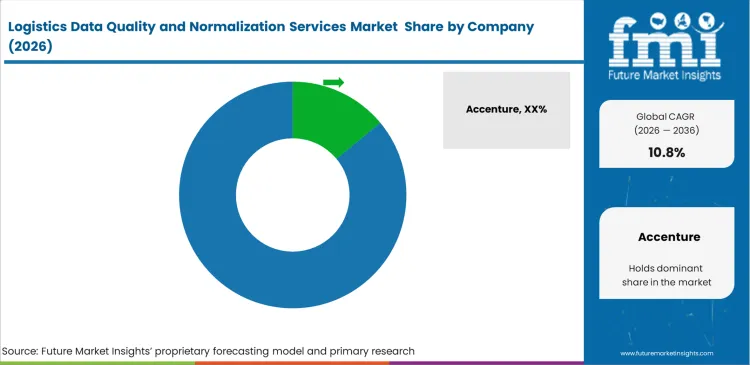

- The market remains fragmented, with the leading player estimated near 14.0% share, as buyers continue to assemble multi-vendor stacks instead of relying on a single normalization platform.

Logistics Data Quality and Normalization Services Market Key Segments

| Metric | Details |
|---|---|
| Industry Size (2026) | USD 1.8 billion |
| Industry Value (2036) | USD 4.9 billion |
| CAGR (2026 to 2036) | 10.8% |

Source: Future Market Insights (FMI) analysis, based on proprietary forecasting model and primary research

Commercial exposure becomes immediate when transport providers run billing, routing, and exception management on inconsistent data fields. Procurement teams at global third-party logistics firms absorb avoidable leakage when non-standard codes disrupt automated invoicing or force invoice disputes back into manual review. Postponing investment in logistics data quality services extends this inefficiency, pulling operating staff into repetitive reconciliation work that weakens profitability on already compressed freight lanes. Mid-sized freight forwarders are placing greater weight on clean master data because pricing engines, carrier allocation tools, and execution workflows lose reliability when address records, shipment references, and event definitions are inconsistent.
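As an illustration of what address-record cleanup involves, the following is a minimal Python sketch of rule-based address normalization: case folding, punctuation removal, and abbreviation expansion, followed by grouping records that collapse to the same key. The abbreviation table and sample addresses are hypothetical; production rule sets are country-specific and much larger.

```python
import re

# Hypothetical abbreviation table. Expansion is context-sensitive in practice
# (for example, "ST" can also mean "SAINT"), so real rule sets are per country.
ABBREVIATIONS = {
    "ST": "STREET",
    "STR": "STREET",
    "RD": "ROAD",
    "AVE": "AVENUE",
    "BLVD": "BOULEVARD",
}


def normalize_address(raw: str) -> str:
    """Return a canonical, comparison-ready form of a free-text address line."""
    text = re.sub(r"[^\w\s]", " ", raw.upper())  # replace punctuation such as ',' and '.'
    tokens = text.split()                        # split() also collapses repeated whitespace
    return " ".join(ABBREVIATIONS.get(tok, tok) for tok in tokens)


def group_duplicates(addresses: list[str]) -> dict[str, list[str]]:
    """Group raw address strings that normalize to the same canonical key."""
    groups: dict[str, list[str]] = {}
    for raw in addresses:
        groups.setdefault(normalize_address(raw), []).append(raw)
    return groups


records = [
    "12 Harbour Rd., Unit 4",
    "12 HARBOUR ROAD  UNIT 4",
    "12 harbour rd unit 4",
    "7 Canal St.",
]
for key, variants in group_duplicates(records).items():
    print(key, "<-", variants)
```

The three spellings of the Harbour Road address collapse into one key, while the Canal Street record stays separate. Exact-key grouping is deliberately conservative; fuzzy matching would catch more variants at the cost of more false merges.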

The commercial case strengthens further once major shippers require API-based tracking updates across their vendor base. At that point, manual EDI handling is no longer just inefficient; it becomes a constraint on scale, speed, and service consistency. Logistics data normalization services gain importance because pricing logic, milestone visibility, and exception handling depend on standardized location identifiers and aligned event formats. As a result, interoperability is moving from a technical preference to a procurement condition in carrier selection.

India is projected to register 12.8% CAGR through 2036 as domestic 3PLs consolidate fragmented regional transport databases into more unified digital operating models. Port automation and denser freight activity keep China close behind at 11.9%, where cleaner data exchange is becoming more important across high-volume logistics networks. Customs precision and tighter trade-flow coordination support 11.2% CAGR in Singapore during the forecast period. Legacy ERP-linked environments are being modernized across Germany, which is expected to record 10.9% CAGR over the same period. Cross-border freight intensity leaves little room for format inconsistency in the Netherlands, supporting 10.4% CAGR through 2036. Mexico is estimated at 10.1% as trade complexity and deeper North American integration increase the need for harmonized logistics data. Distribution network consolidation and stronger performance control requirements are keeping the United States on a 9.8% growth path.

Segmental Analysis

Logistics Data Quality and Normalization Services Market Analysis by Service type

Carrier data starts to break down when legacy translation scripts fail to interpret new tracking syntax introduced by global logistics partners. That risk reaches directly into routing, billing, and exception management, which is why data cleansing and enrichment is likely to represent a 29.0% share of the service type segment in 2026. Operations teams at large 3PLs do not widen deployment of supply chain visibility software until baseline formatting, field logic, and reference data are consistent enough to support reliable downstream execution. Shipment data cleansing services reduce manual reconciliation work, improve event accuracy, and make broader platform investments usable at scale. Deep enrichment programs also expose weaknesses in historical performance records, forcing logistics providers to reassess long-held assumptions about carrier reliability and partner scorecards. Delayed standardization only adds to the administrative burden as vendor networks widen and data exceptions accumulate.

- Initial validation: Syntax screening filters out malformed carrier messages before they enter production data environments. This keeps reporting logic intact and prevents avoidable disruption during heavy shipping periods.

- Continuous enrichment: Machine learning models add missing geographic coordinates to incomplete bill-of-lading records. Logistics planners gain clearer visibility into secondary transit points across less transparent routes.

- Exception auditing: Irregular inputs are isolated for focused review instead of being passed through normal processing. Teams can then trace repeated file-quality failures back to individual transport partners and tighten compliance expectations accordingly.
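The three steps above can be sketched as a small Python pipeline. This is a minimal illustration under stated assumptions: the required field names, partner identifiers, and location codes are hypothetical, and a static lookup table replaces the machine-learning enrichment the section describes.

```python
import re
from collections import Counter

# Hypothetical minimum fields for an inbound carrier status message.
REQUIRED_FIELDS = ("shipment_ref", "status_code", "location_code", "partner_id")

# Illustrative, approximate coordinates keyed by UN/LOCODE-style codes. A lookup
# table stands in here for the machine-learning enrichment described in the text.
LOCATION_COORDS = {
    "SGSIN": (1.26, 103.84),
    "NLRTM": (51.95, 4.14),
}

# Approximates the UN/LOCODE shape: two-letter country code plus three letters or digits 2-9.
LOCODE_PATTERN = re.compile(r"[A-Z]{2}[A-Z2-9]{3}")


def validate(msg: dict) -> list[str]:
    """Initial validation: list the problems found; an empty list means the message passes."""
    problems = [f"missing {field}" for field in REQUIRED_FIELDS if not msg.get(field)]
    loc = msg.get("location_code")
    if loc and not LOCODE_PATTERN.fullmatch(loc):
        problems.append(f"malformed location_code {loc!r}")
    return problems


def enrich(msg: dict) -> dict:
    """Continuous enrichment: add coordinates when the record lacks them and the code is known."""
    if "coords" not in msg and msg["location_code"] in LOCATION_COORDS:
        return {**msg, "coords": LOCATION_COORDS[msg["location_code"]]}
    return msg


def process(batch: list[dict]) -> tuple[list[dict], list[tuple[dict, list[str]]]]:
    """Route each message to the clean set or to a quarantine list for review."""
    clean, quarantined = [], []
    for msg in batch:
        problems = validate(msg)
        if problems:
            quarantined.append((msg, problems))  # exception auditing: hold, do not pass through
        else:
            clean.append(enrich(msg))
    return clean, quarantined


def failures_by_partner(quarantined: list[tuple[dict, list[str]]]) -> Counter:
    """Count quarantined messages per sending partner to expose repeat offenders."""
    return Counter(msg.get("partner_id") or "UNKNOWN" for msg, _ in quarantined)


batch = [
    {"shipment_ref": "S1", "status_code": "DEP", "location_code": "SGSIN", "partner_id": "CarrierA"},
    {"shipment_ref": "S2", "status_code": "ARR", "location_code": "rtm", "partner_id": "CarrierB"},
    {"shipment_ref": "", "status_code": "ARR", "location_code": "NLRTM", "partner_id": "CarrierB"},
]
clean, quarantined = process(batch)
print(len(clean), "clean;", failures_by_partner(quarantined))
```

Both quarantined messages trace back to the same hypothetical partner, which is the pattern that lets teams tighten compliance expectations partner by partner.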

Logistics Data Quality and Normalization Services Market Analysis by Delivery model

Internal IT teams often underestimate what it takes to maintain custom EDI mappings across a large and unstable carrier base. Every new endpoint, status code revision, or format exception adds work that does not stay fixed after implementation. This ongoing burden is a primary reason managed services are estimated to account for a 46.0% share of the delivery model segment in 2026. Freight forwarders lean on external specialists in logistics master data because translation changes at regional carriers can disrupt billing, milestone tracking, and exception handling with little notice. A dedicated transport management system partner also shifts spend from irregular repair work into a more predictable operating budget. The irony is that outsourced teams frequently run on software very similar to what internal teams already own, but they apply it with tighter process discipline, better rule maintenance, and more consistent documentation. Legacy on-premise configurations widen that gap, especially after acquisitions add new carrier feeds and incompatible data logic.

- Procurement savings: Consolidated maintenance contracts can lower translation cost per endpoint compared with carrying the same work through internal developer teams. Finance managers gain better control over technology spend that would otherwise move unpredictably with partner changes.

- Hidden complexities: External providers can still struggle when cold-chain operators or niche carriers rely on deeply customized tracking logic. Transition periods work better when internal teams document local process knowledge before the handover begins.

- Lifecycle economics: Managed solutions become more cost-effective once carrier connections rise beyond a certain volume threshold. Below that level, the savings case is less clear and depends heavily on internal support capability.
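The volume threshold mentioned above follows from a simple linear cost comparison: a managed contract typically carries a higher fixed base but a lower cost per carrier endpoint, so the two cost lines cross at some endpoint count. The Python sketch below finds that crossing point; every cost figure in it is a hypothetical placeholder, not data from this report.

```python
import math

# All figures are hypothetical placeholders, not data from this report.
FIXED_INTERNAL = 50_000   # annual in-house tooling and baseline staffing, USD
PER_INTERNAL = 4_000      # annual in-house maintenance per carrier endpoint, USD
FIXED_MANAGED = 120_000   # annual managed-service contract minimum, USD
PER_MANAGED = 1_500       # annual managed-service fee per carrier endpoint, USD


def annual_cost(fixed: float, per_endpoint: float, endpoints: int) -> float:
    """Linear cost model: a fixed base plus a constant cost per carrier endpoint."""
    return fixed + per_endpoint * endpoints


def break_even_endpoints(fixed_internal: float, per_internal: float,
                         fixed_managed: float, per_managed: float) -> int:
    """Smallest endpoint count at which the managed option costs no more than in-house."""
    if per_internal <= per_managed:
        raise ValueError("managed option needs a lower per-endpoint cost to break even")
    return max(0, math.ceil((fixed_managed - fixed_internal) / (per_internal - per_managed)))


threshold = break_even_endpoints(FIXED_INTERNAL, PER_INTERNAL, FIXED_MANAGED, PER_MANAGED)
print(f"Managed service breaks even at {threshold} carrier endpoints")
for n in (10, threshold, 40):
    internal = annual_cost(FIXED_INTERNAL, PER_INTERNAL, n)
    managed = annual_cost(FIXED_MANAGED, PER_MANAGED, n)
    print(f"{n:>3} endpoints: in-house {internal:>9,.0f}  managed {managed:>9,.0f}")
```

With these placeholder inputs the lines cross at 28 endpoints. Below that count the in-house option is cheaper, which mirrors the point that the savings case depends heavily on internal support capability.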

Logistics Data Quality and Normalization Services Market Analysis by Data domain

Retailers that promise narrow delivery windows treat shipment and tracking data as a commercial control point, not just an operating record. Predictive arrival models lose credibility quickly when timestamps, event definitions, and location references do not align across carriers. Shipment and tracking data is expected to represent a 33.0% share of the data domain segment in 2026. Route optimization tools become less reliable when shipment tracking data is not normalized across carrier systems. A freight transport management tool can protect downstream dashboards, but only when the underlying event stream has already been cleaned and aligned. The bigger issue is that many advertised API connections still carry inconsistent field logic, incomplete telemetry, or sensor errors that require correction before the data becomes useful. Logistics providers that fail to stabilize this domain put e-commerce contracts at risk because customer-facing visibility depends on it.

- Predictive failure prevention: Retail delivery models break down when carrier timestamps arrive in conflicting formats. Clean time-sequencing keeps planning logic stable and prevents false delay signals from reaching dispatch and customer commitments.

- Hardware compensation: GPS feeds are not consistently reliable across dense city routes, indoor transfer points, or weaker network zones. Normalization layers fill these gaps well enough to preserve shipment continuity without forcing planners to work from broken event trails.

- Customer retention: Shippers notice data quality when status flows remain coherent across the full journey, especially during exception events. Consistent tracking records strengthen service reviews and give account teams firmer ground during renewal negotiations.
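Clean time-sequencing usually means converting every carrier timestamp to a single reference (UTC here) before ordering milestones. The Python sketch below assumes three hypothetical feed formats and a per-carrier UTC offset, supplied from partner configuration, for timestamps that arrive without one.

```python
from datetime import datetime, timedelta, timezone

# Hypothetical formats seen across carrier feeds; real feeds need per-carrier profiles.
FORMATS = (
    "%Y-%m-%dT%H:%M:%S%z",  # ISO 8601 with offset, e.g. 2026-03-01T14:05:00+01:00
    "%d/%m/%Y %H:%M",       # day-first local time, no offset in the message
    "%Y%m%d%H%M",           # compact CCYYMMDDHHMM, common in flat-file feeds
)


def to_utc(raw: str, local_offset_hours: float = 0) -> datetime:
    """Parse a carrier timestamp and return an aware datetime in UTC.

    Timestamps without an offset are assumed to be in the carrier's local
    offset, which has to come from partner configuration.
    """
    for fmt in FORMATS:
        try:
            parsed = datetime.strptime(raw, fmt)
        except ValueError:
            continue
        if parsed.tzinfo is None:
            parsed = parsed.replace(tzinfo=timezone(timedelta(hours=local_offset_hours)))
        return parsed.astimezone(timezone.utc)
    raise ValueError(f"unrecognized timestamp format: {raw!r}")


def out_of_sequence(events: list[tuple[str, datetime]]) -> list[str]:
    """Return milestone codes whose UTC time is earlier than the preceding milestone."""
    return [code for (_, prev), (code, current) in zip(events, events[1:]) if current < prev]


feed = [
    ("PICKED_UP", "2026-03-01T14:05:00+01:00", 0),  # 13:05 UTC
    ("DEPARTED", "01/03/2026 13:30", 1),            # local UTC+1, so 12:30 UTC
    ("ARRIVED", "202603011600", 0),                 # 16:00 UTC
]
events = [(code, to_utc(raw, offset)) for code, raw, offset in feed]
print(out_of_sequence(events))
```

In the sample feed the DEPARTED event is flagged because, once converted, it falls before the pickup. Whether that reflects a real data error or a wrong offset in the partner profile is the kind of question an exception queue should route to a person rather than to dispatch, which is how false delay signals are kept away from customer commitments.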

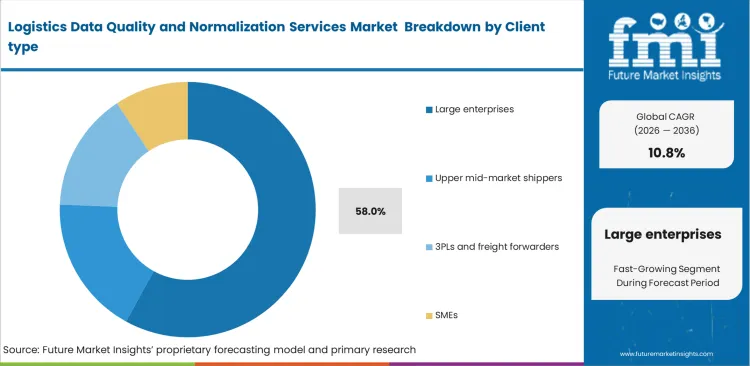

Logistics Data Quality and Normalization Services Market Analysis by Client type

Large enterprises carry the heaviest normalization burden because years of acquisitions, regional system customization, and platform overlap leave them with fragmented transport data environments. Large enterprises are set to hold around a 58.0% share of the client type segment in 2026. Global supply chain leaders invest in data quality services to make centralized freight auditing, carrier comparison, and network governance workable across regions. Enterprise-grade normalization tied to freight management software also gives procurement teams a better basis for negotiating volume contracts across divisions that previously operated in silos. There is a common assumption that multinational shippers already run clean data environments. In practice, many of them manage some of the most contaminated legacy databases in the market and require substantial remediation before analytics or automation can be trusted. Mid-sized competitors that standardize earlier often gain speed and operating flexibility while larger organizations are still cleaning historical records.

- Global integration: Carrier scorecards lose value when each region reports through different field logic and naming conventions. Common data standards give central teams a cleaner basis for comparing service levels, cost performance, and exception rates across markets.

- Acquisition onboarding: Newly acquired operations rarely inherit systems that align neatly with corporate data rules. Faster mapping into group-wide formats reduces integration delays and shortens the period in which transport data remains fragmented and hard to govern.

- Auditing centralization: Freight bill controls work far better once rate cards, shipment records, and carrier references follow the same logic across countries. Clean master data makes overcharge detection more reliable and reduces the amount of invoice checking pushed back to finance teams.
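Freight bill controls of the kind described above depend on first mapping each region's spelling of a carrier to one canonical identifier, then comparing invoiced amounts with the contracted rate. The Python sketch below shows both steps; the carrier names, alias table, lane codes, rates, and 2% tolerance are all hypothetical.

```python
import re
from typing import Optional

# Hypothetical alias table: regions spell the same carrier in different ways.
CARRIER_ALIASES = {
    "NORTHLINE FREIGHT": "NLF",
    "NORTHLINE FRT": "NLF",
    "BAYSIDE LOGISTICS": "BSL",
    "BAYSIDE LOG": "BSL",
}

# Hypothetical contracted rates per (carrier, lane), USD per shipment.
RATE_CARD = {
    ("NLF", "DEHAM-PLWAW"): 820.0,
    ("BSL", "DEHAM-PLWAW"): 790.0,
}


def canonical_carrier(raw: str) -> Optional[str]:
    """Collapse case, punctuation, and spacing, then look the name up in the alias table."""
    key = re.sub(r"[^A-Z]+", " ", raw.upper()).strip()
    return CARRIER_ALIASES.get(key)


def audit(invoices: list[dict], tolerance: float = 0.02) -> list[tuple[str, str]]:
    """Flag invoices that cannot be matched or that exceed the contracted rate plus tolerance."""
    flagged = []
    for inv in invoices:
        carrier = canonical_carrier(inv["carrier"])
        rate = RATE_CARD.get((carrier, inv["lane"]))
        if carrier is None or rate is None:
            flagged.append((inv["invoice_id"], "unmapped carrier or lane"))
        elif inv["amount"] > rate * (1 + tolerance):
            flagged.append((inv["invoice_id"], f"overcharge of {inv['amount'] - rate:.2f}"))
    return flagged


invoices = [
    {"invoice_id": "INV-1", "carrier": "Northline Frt.", "lane": "DEHAM-PLWAW", "amount": 820.0},
    {"invoice_id": "INV-2", "carrier": "northline-freight", "lane": "DEHAM-PLWAW", "amount": 905.0},
    {"invoice_id": "INV-3", "carrier": "BAYSIDE LOG", "lane": "DEHAM-PLWAW", "amount": 795.0},
    {"invoice_id": "INV-4", "carrier": "Unknown Haulage", "lane": "DEHAM-PLWAW", "amount": 700.0},
]
for invoice_id, reason in audit(invoices):
    print(invoice_id, reason)
```

Only the overcharged invoice and the unmapped carrier reach review; the correctly billed variants pass even though each spells the carrier differently.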

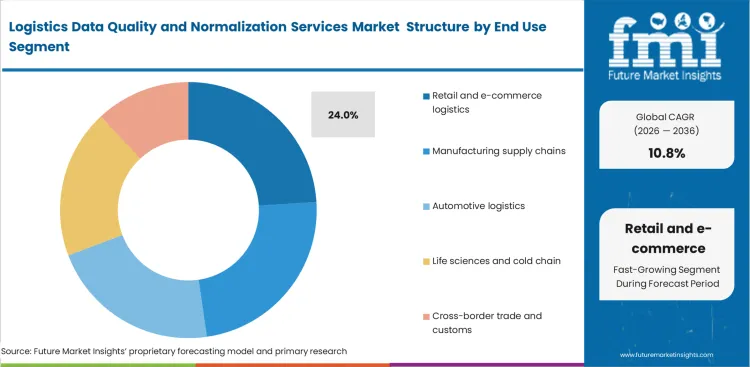

Logistics Data Quality and Normalization Services Market Analysis by End-use focus

Retail and e-commerce logistics remains especially sensitive to translation delays because parcel networks run on tight delivery windows and high event frequency. That sensitivity supports an estimated 24.0% share for retail and e-commerce logistics in the end-use focus segment in 2026. Distribution center teams rely on digital intake controls to normalize inbound carrier updates as they arrive, since delayed correction can spill into receiving schedules, sorting logic, and customer notifications. Much of the performance gain in this part of the market comes from middleware that standardizes shipment files before they move deeper into fulfillment systems. Firms that lack disciplined SKU master data and shipment-data controls often compensate with extra labor, more manual checking, and wider service buffers than the network should actually require.

- Inbound synchronization: Receiving schedules become unreliable when advance shipping notices arrive late, incomplete, or in mismatched formats. Clean inbound data helps warehouse teams plan dock activity with fewer surprises and reduces avoidable congestion before unloading begins.

- Sorting automation: Conveyor performance depends on package records that match the logic of the sorting system. When barcode fields or parcel attributes are inconsistent, manual intervention rises and throughput drops at the exact point volume pressure is highest.

- Consumer visibility: Customer-facing tracking loses credibility when carrier updates are translated inconsistently across the journey. Clear status logic improves what end users see, while service teams spend less time resolving calls caused by confusing or contradictory shipment messages.
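Inbound advance shipping notices are a common place to apply rule-based validation. The sketch below checks a few required fields, an ISO 8601 ship date, and the SSCC carried on the shipping label, using the GS1 mod-10 check-digit rule. The required-field list and sample records are assumptions for illustration; real ASN specifications are agreed with each trading partner.

```python
import re
from datetime import date

# Hypothetical minimum field set for an inbound advance shipping notice.
REQUIRED_ASN_FIELDS = ("asn_id", "po_number", "sscc", "ship_date")


def gs1_check_digit(data_digits: str) -> int:
    """GS1 mod-10 check digit: weight 3, 1, 3, ... starting from the rightmost data digit."""
    total = sum(int(d) * (3 if i % 2 == 0 else 1) for i, d in enumerate(reversed(data_digits)))
    return (10 - total % 10) % 10


def valid_sscc(code: str) -> bool:
    """An SSCC has 18 digits; the last one must equal the GS1 check digit of the first 17."""
    return bool(re.fullmatch(r"[0-9]{18}", code)) and gs1_check_digit(code[:-1]) == int(code[-1])


def asn_errors(asn: dict) -> list[str]:
    """Return readable validation errors; an empty list means the ASN can be accepted."""
    errors = [f"missing {field}" for field in REQUIRED_ASN_FIELDS if not asn.get(field)]
    sscc = asn.get("sscc")
    if sscc and not valid_sscc(sscc):
        errors.append(f"invalid SSCC {sscc}")
    ship_date = asn.get("ship_date")
    if ship_date:
        try:
            date.fromisoformat(ship_date)
        except ValueError:
            errors.append(f"ship_date not in ISO 8601 form: {ship_date}")
    return errors


asn_ok = {"asn_id": "ASN-100", "po_number": "PO-77",
          "sscc": "106141411234567897", "ship_date": "2026-03-02"}
asn_bad = {"asn_id": "ASN-101", "po_number": "",
           "sscc": "106141411234567890", "ship_date": "02/03/2026"}
print(asn_errors(asn_ok))
print(asn_errors(asn_bad))
```

Rejecting a notice at intake, with the reasons listed, is usually cheaper than discovering the same mismatch at the dock or on the sorter.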

Logistics Data Quality and Normalization Services Market Drivers, Restraints, and Opportunities

Carrier data inconsistency remains a direct obstacle to centralized freight auditing. Global logistics operators lose margin when invoice reconciliation systems receive mismatched status codes, incomplete references, or poorly formatted shipment records, because those failures push large volumes of charges back into manual review. That cost exposure is pushing companies toward managed services for logistics data governance, especially when expansion into new regions introduces additional carrier formats and reporting requirements. Connected logistics models add further pressure because shared execution environments depend on cleaner event logic, aligned master data, and reliable partner-level data exchange.

Regional carriers with older proprietary systems still create one of the hardest integration problems in this market. Many rely on flat files, inconsistent templates, or older exchange methods that fit poorly with modern API-based environments. Network planners cannot always remove those partners just to simplify data integration, because local carriers often remain operationally important in specialized routes or regional distribution models. That leaves logistics teams balancing data compatibility against service coverage, cost, and local network efficiency. Digital twin in logistics initiatives can help model blind spots and operational gaps, but their output weakens quickly when the underlying transport, event, and milestone data remains inconsistent.
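Flat files from older regional systems are often fixed-width, so normalizing them starts with slicing each line by character position and converting field formats. The Python sketch below assumes a hypothetical 26-character layout; real layouts are agreed per partner and usually documented in a specification sheet.

```python
from dataclasses import dataclass
from datetime import datetime

# Hypothetical fixed-width layout for a regional carrier's status file:
# field name -> (start, end) character positions, end exclusive.
LAYOUT = {
    "shipment_ref": (0, 10),
    "status": (10, 13),
    "event_date": (13, 21),  # CCYYMMDD in the source file
    "location": (21, 26),
}
RECORD_LENGTH = max(end for _, end in LAYOUT.values())


@dataclass
class StatusEvent:
    shipment_ref: str
    status: str
    event_date: str  # normalized to ISO 8601 (YYYY-MM-DD)
    location: str


def parse_line(line: str) -> StatusEvent:
    """Slice one fixed-width record into fields and normalize the date format."""
    fields = {name: line[start:end].strip() for name, (start, end) in LAYOUT.items()}
    fields["event_date"] = datetime.strptime(fields["event_date"], "%Y%m%d").date().isoformat()
    return StatusEvent(**fields)


def parse_file(text: str) -> tuple[list[StatusEvent], list[str]]:
    """Parse every line; keep records that fail length or date checks for review."""
    events, rejects = [], []
    for line in text.splitlines():
        if len(line) < RECORD_LENGTH:
            rejects.append(line)
            continue
        try:
            events.append(parse_line(line))
        except ValueError:
            rejects.append(line)
    return events, rejects


sample = "\n".join([
    "SHP0000123DEP20260301INDEL",
    "SHP77     ARR20260302SGSIN",
    "SHP88     ARR2026-3-2SGSIN",  # malformed date
    "SHORT",                       # truncated record
])
events, rejects = parse_file(sample)
print(events)
print("rejected:", rejects)
```

Once parsed into structured records, output from such a carrier can flow into the same validation and mapping steps as API-based partners, which is what lets planners keep the carrier without carrying its format into downstream systems.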

Opportunities in Logistics Data Quality and Normalization Services Market

- Generative mapping algorithms: Machine learning models can interpret obscure legacy text strings without requiring fully manual rule creation. Data teams shorten onboarding time for non-compliant regional transport partners and reduce the effort tied to EDI remediation.

- Edge-deployed normalizers: Translating telemetry closer to the sensor or device level reduces pressure on centralized cloud processing and shortens response time in fast-moving operating environments. This becomes more valuable as connected logistics networks expand and event latency starts to affect execution quality.

- Compliance-as-a-service layers: Cross-border shipping slows when each customs regime requires different field formats, document logic, and submission rules. Prebuilt compliance modules reduce local recoding work and help trade teams move shipments through regulatory checkpoints with fewer formatting errors.
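The mapping step behind the first opportunity can be approximated, in a much simpler form, with exact rules plus string similarity. The Python sketch below uses the standard library's `difflib` rather than a machine-learning model, so it is a stand-in for the technique named above, not an implementation of it; the canonical event list and legacy codes are hypothetical.

```python
import difflib
import re
from typing import Optional

# Hypothetical canonical event vocabulary agreed by the data team.
CANONICAL_EVENTS = ["PICKED UP", "IN TRANSIT", "OUT FOR DELIVERY", "DELIVERED", "EXCEPTION"]

# Hypothetical exact-match rules for known legacy codes; checked before similarity.
KNOWN_CODES = {"DLV": "DELIVERED", "OFD": "OUT FOR DELIVERY", "EXC": "EXCEPTION"}


def clean(raw: str) -> str:
    """Upper-case and replace runs of punctuation or spacing with a single space."""
    return re.sub(r"[^A-Z0-9]+", " ", raw.upper()).strip()


def map_status(raw: str, cutoff: float = 0.8) -> Optional[str]:
    """Map a legacy status string to the canonical vocabulary, or None for human review."""
    text = clean(raw)
    if text in KNOWN_CODES:
        return KNOWN_CODES[text]
    matches = difflib.get_close_matches(text, CANONICAL_EVENTS, n=1, cutoff=cutoff)
    return matches[0] if matches else None


for raw in ["delivrd", "Out for deliv.", "in transt", "dlv", "zzz"]:
    print(f"{raw!r:>18} -> {map_status(raw)}")
```

Strings that clear neither the exact rules nor the similarity cutoff return `None`, which is where a human mapper, or a learned model, would take over.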

Regional Analysis

Based on regional analysis, the Logistics Data Quality and Normalization Services market is segmented into North America, Latin America, Western Europe, Eastern Europe, East Asia, South Asia and Pacific, and Middle East and Africa across 40-plus countries.


| Country | CAGR (2026 to 2036) |
|---|---|
| India | 12.8% |
| China | 11.9% |
| Singapore | 11.2% |
| Germany | 10.9% |
| Netherlands | 10.4% |
| Mexico | 10.1% |
| United States | 9.8% |

Source: Future Market Insights (FMI) analysis, based on proprietary forecasting model and primary research
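For reference, each country CAGR in the table translates into a ten-year growth multiple of (1 + CAGR)^10. The Python sketch below performs that conversion; it uses only the rates shown and says nothing about relative market size, since country base values are not stated here.

```python
# Ten-year growth multiples implied by the country CAGRs (2026 to 2036).
# A multiple m satisfies m = (1 + CAGR) ** 10; base-year values are not needed.
COUNTRY_CAGR = {
    "India": 0.128, "China": 0.119, "Singapore": 0.112, "Germany": 0.109,
    "Netherlands": 0.104, "Mexico": 0.101, "United States": 0.098,
}
YEARS = 10

multiples = {country: (1 + rate) ** YEARS for country, rate in COUNTRY_CAGR.items()}
for country, m in sorted(multiples.items(), key=lambda kv: kv[1], reverse=True):
    print(f"{country:<14} {m:.2f}x")
```

At 12.8%, India ends the period at roughly 3.3 times its 2026 level, versus about 2.5 times for the United States at 9.8%.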

Asia-Pacific Logistics Data Quality and Normalization Services Market Analysis

Asia-Pacific logistics networks are expanding across ports, domestic freight systems, and cross-border trade corridors, which is raising the value of clean and interoperable data. Many markets in the region are modernizing while core digital systems are still being built, so operators have more room to embed normalization rules, master data controls, and shared exchange formats earlier in the process. That reduces later dependence on manual correction and makes routing, visibility, customs processing, and warehouse execution more reliable. Demand for EDI mapping, shipment data cleansing, and partner-data alignment remains strongest where freight volumes are rising quickly and carrier fragmentation is still high. Customs procedures, domestic documentation practices, and port automation requirements continue to shape how these services are selected and deployed across the region.

- India: India’s freight network is adding digital integration layers as new carriers, regional operators, and logistics platforms come into the formal system. Demand for logistics data quality and normalization services in India is anticipated to rise at a CAGR of 12.8% through 2036, as transport operators need cleaner waybill data, aligned carrier records, and more reliable shipment-event formatting across fragmented domestic networks. Buyers are specifying automated normalization capabilities earlier in new integration contracts to avoid repeated manual correction once volumes scale. Vendors that can handle regional language variation, mixed documentation formats, and fast carrier onboarding will remain better placed in large manufacturing and retail logistics programs.

- China: China’s port and export logistics system operates at a scale where inconsistent manifest data, mismatched milestones, and unstable carrier references quickly become operating liabilities. Port automation, factory-to-port coordination, and international trade execution all depend on more reliable formatting and cleaner machine-readable shipment records. Demand for logistics data quality and normalization services in China is expected to increase at a CAGR of 11.9% during the forecast period. Vendors that can support dense export volumes, mixed carrier environments, and tighter digital control across industrial corridors are better positioned in this market.

- Singapore: Singapore operates with very little tolerance for data inconsistency because port transfers, customs clearance, and forwarding activity all depend on clean event sequencing. Buyers in Singapore place strong emphasis on normalization services when shipment records, manifest fields, and handoff data need to move across maritime and inland systems without delay. Demand for logistics data quality and normalization services in Singapore is expected to grow at a CAGR of 11.2% through 2036. Providers that can support faster reconciliation and cleaner trade-data exchange are more likely to win business tied to high-throughput logistics flows.

FMI's report includes extensive coverage of the Asia-Pacific logistics data quality sector. It incorporates detailed analysis of Australia, Indonesia, Vietnam, Japan, South Korea, and the broader ASEAN region. A defining regional pattern is the combination of export-led logistics, transshipment activity, and cross-border e-commerce growth, all of which raise the value of standardized shipment data and interoperable freight records.

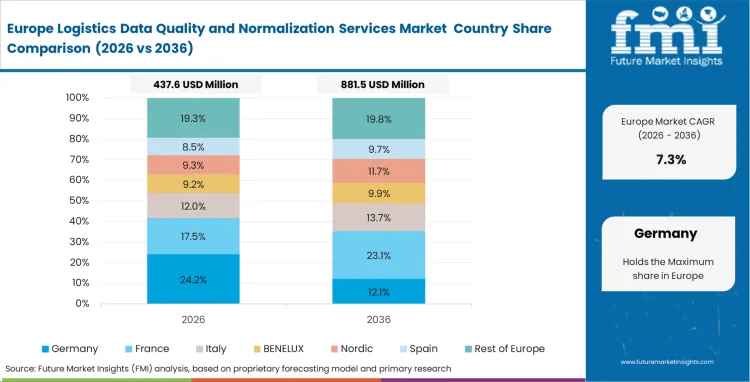

Western Europe Logistics Data Quality and Normalization Services Market Analysis

Western Europe remains one of the more compliance-sensitive regions for logistics data quality spending because cross-border freight movement depends on aligned records across customs, warehousing, transport management, and retail distribution systems. Buyers in the region are investing in normalization layers to reduce the cost of inconsistent field formats, duplicate shipment references, and incompatible carrier feeds moving across tightly regulated trade corridors. Implementing a standardized, normalized backbone enables facilities to deploy advanced supply chain analytics without compromising real-time logistics stability.

- Netherlands: The logistics data quality and normalization services market in the Netherlands is expected to grow at a CAGR of 10.4% through 2036, supported by the country’s role as a high-volume cross-border distribution and import handling hub. Buyers in the Netherlands place strong emphasis on clean customs references, shipment identifiers, and handoff records because data inconsistency can slow freight movement across tightly connected European trade lanes. Logistics providers also need better alignment between warehouse systems, carrier feeds, and border documentation to reduce avoidable exceptions. Vendors that can support faster translation across customs, warehousing, and transport workflows are better positioned in this market.

- Germany: Industrial supply chains in Germany depend on transport data that can move cleanly between suppliers, plants, warehouses, and ERP-linked planning systems. Buyers place normalization work high on the agenda because inconsistent shipment records and mismatched supplier references can disrupt inbound scheduling and weaken production control. Germany is forecast to register a CAGR of 10.9% in the logistics data quality and normalization services market through 2036. Vendors with stronger capability in supplier-data alignment, legacy-system cleanup, and industrial transport integration are better positioned in large manufacturing and cross-border logistics programs.

- United Kingdom: Border documentation and carrier-data alignment remain central to logistics execution in the United Kingdom, especially where freight flows move through customs-heavy trade lanes and multi-party forwarding networks. The market for logistics data quality and normalization services in the United Kingdom is expected to grow at a CAGR of 10.6% through 2036. Buyers focus on cleaner shipment records, tariff-linked data fields, and more reliable status formatting because inconsistency at those points can delay clearance and weaken downstream planning. Providers with stronger capability in trade-data mapping and cross-border workflow integration are better placed in this market.

FMI's report includes extensive coverage of the Western Europe data normalization sector. It incorporates detailed analysis of France, Italy, Spain, and the broader European Union region. One of the clearest regional themes is the move toward more digital freight documentation and tighter traceability, which is raising the cost of poor-quality shipment and partner data across European trade lanes.

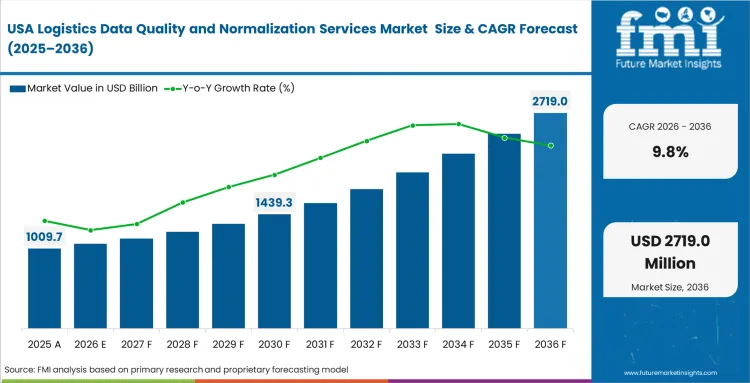

North America Logistics Data Quality and Normalization Services Market Analysis

North American demand is being shaped by retail distribution, cross-border freight coordination, and the need to connect large transport networks without carrying forward the data inconsistency that older systems created. Logistics operators across the region are investing in normalization and validation services because customer-facing delivery commitments, freight audit controls, and inventory planning now depend on cleaner shipment and carrier data. Commercial pressure is estimated to be strongest where high transaction volumes meet tight service expectations, especially in retail logistics and integrated cross-border supply chains.

- United States: The logistics data quality and normalization services market in the United States is expected to grow at a CAGR of 9.8% through 2036. Retail distribution, parcel delivery, and large fulfillment networks keep data-cleanup spending active across the United States. Buyers rely on stable carrier telemetry, consistent shipment milestones, and clean location references because weak data quality quickly affects routing, customer updates, and automated sorting performance. Vendors serving this market need to support cleaner event logic and more reliable carrier integration across high-volume delivery environments.

- Mexico: Manufacturing-linked freight flows and cross-border trade are increasing the need for cleaner shipment, customs, and partner data across Mexico. Logistics providers working between factory corridors, border gateways, and distribution networks place greater emphasis on normalized records because inconsistent data creates delays in documentation, handoffs, and downstream planning. Demand for logistics data quality and normalization services in Mexico is anticipated to rise at a CAGR of 10.1% through 2036. The strongest spending will remain tied to sectors where timing, border coordination, and supplier-data accuracy directly affect execution.

FMI's report includes comprehensive evaluation of the North American logistics data quality sector. It features specific analysis of the Canadian and Mexican industrial markets. A defining regional requirement is the coordination of cross-border supply chains, where cleaner trade data and more consistent shipment records directly improve execution reliability across the wider North American freight network.

Competitive Aligners for Market Players

Global consulting and IT service firms enter this space with a built-in advantage because they are already embedded in enterprise ERP rollouts, supply chain redesign programs, and large-scale systems integration work. Accenture, IBM, and Infosys typically win data-cleanup mandates by attaching them to broader modernization budgets rather than selling format-conversion tools on their own. Buyers searching for logistics master data cleanup support rarely shop for translation capability in isolation. They usually want governance layers that can pass clean records into analytics environments, transport applications, and wider data management platforms with less manual correction. That buying behavior narrows the opening for smaller specialists, which is why many of them concentrate on narrower use cases such as customs-code alignment, regional address matching, or carrier-specific file exceptions.

Buyer behavior is also changing. Enterprise procurement teams want open APIs, portable mapping logic, and exportable master records so cleaned data can move into independent audit tools, ERP estates, or first-mile logistics delivery software without being trapped inside one provider’s stack. RFPs for logistics data cleansing now place more weight on modular translation layers and interoperability than they did a few years ago. As regulation tightens and multi-system coordination becomes harder to manage, proprietary script libraries still matter, but they no longer settle the decision on their own. Large integrators are being judged more on exception resolution, implementation quality, and consistency of output, while buyers continue to compare logistics data quality services with MDM software to see whether an outside partner adds real operating utility rather than another hosted layer.

Key Players in Logistics Data Quality and Normalization Services Market

- Accenture

- IBM

- Infosys

- Wipro

- Tata Consultancy Services

- Genpact

- OpenText

Scope of the Report

| Metric | Value |
|---|---|
| Quantitative Units | USD 1,770 million to USD 4,940 million, at a CAGR of 10.8% |
| Market Definition | Logistics Data Quality and Normalization Services refer to software-led and managed service capabilities that convert inconsistent supply chain data into usable and comparable records. These solutions standardize carrier codes, address fields, product identifiers, and transaction formats across fragmented logistics networks. They also cleanse data flowing through EDI and related exchange channels so downstream systems can process it with fewer errors. The result is more reliable routing, cleaner shipment visibility, and better customs documentation across cross-border operations. |
| Service type Segmentation | Data cleansing and enrichment, Master data harmonization, Address and location normalization, Carrier and event-code normalization, EDI and API mapping remediation |
| Delivery model Segmentation | Managed services, Project-based implementation, Platform-enabled service, Advisory and audit retainers |
| Data domain Segmentation | Shipment and tracking data, Product and SKU master data, Partner and supplier master data, Location and address master data, Customs and trade document data |
| Client type Segmentation | Large enterprises, Upper mid-market shippers, 3PLs and freight forwarders, SMEs |
| End-use focus Segmentation | Retail and e-commerce logistics, Manufacturing supply chains, Automotive logistics, Life sciences and cold chain, Cross-border trade and customs |
| Regions Covered | North America, Latin America, Western Europe, Eastern Europe, East Asia, South Asia and Pacific, Middle East and Africa |
| Countries Covered | United States, Canada, Brazil, Mexico, Germany, United Kingdom, France, Italy, Spain, China, Japan, South Korea, India, ASEAN, ANZ, GCC Countries, South Africa |
| Key Companies Profiled | Accenture, IBM, Infosys, Wipro, Tata Consultancy Services, Genpact, OpenText |
| Forecast Period | 2026 to 2036 |
| Approach | Cumulative contract values for enterprise master data governance implementations within transport sectors anchor baseline projections. |

Source: Future Market Insights (FMI) analysis, based on proprietary forecasting model and primary research
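As a quick arithmetic check on the scope table, the stated endpoints and growth rate can be reconciled with the standard compound annual growth rate formula, CAGR = (end / start)^(1 / years) - 1. The sketch below assumes ten compounding periods between the 2026 estimate and the 2036 forecast; the function names are illustrative and not part of the FMI model.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate between two values over `years` periods."""
    return (end_value / start_value) ** (1 / years) - 1


def project(start_value: float, rate: float, years: int) -> float:
    """Value after compounding `start_value` at `rate` for `years` periods."""
    return start_value * (1 + rate) ** years


# Endpoints from the scope table, in USD million (2026 estimate, 2036 forecast).
START_2026 = 1770.0
END_2036 = 4940.0
YEARS = 10  # assumption: ten compounding periods, 2026 to 2036

implied_rate = cagr(START_2026, END_2036, YEARS)
projected_2036 = project(START_2026, 0.108, YEARS)
incremental = END_2036 - START_2026

print(f"Implied CAGR: {implied_rate:.1%}")
print(f"2036 value at 10.8%: USD {projected_2036:,.0f} million")
print(f"Incremental opportunity: USD {incremental:,.0f} million")
```

Compounding USD 1,770 million at 10.8% for ten years lands within a few million of the USD 4,940 million endpoint, so the rate and endpoints in the table are mutually consistent after rounding.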

Logistics Data Quality and Normalization Services Market Analysis by Segments

Service type:

- Data cleansing and enrichment

- Master data harmonization

- Address and location normalization

- Carrier and event-code normalization

- EDI and API mapping remediation

Delivery model:

- Managed services

- Project-based implementation

- Platform-enabled service

- Advisory and audit retainers

Data domain:

- Shipment and tracking data

- Product and SKU master data

- Partner and supplier master data

- Location and address master data

- Customs and trade document data

Client type:

- Large enterprises

- Upper mid-market shippers

- 3PLs and freight forwarders

- SMEs

End-use focus:

- Retail and e-commerce logistics

- Manufacturing supply chains

- Automotive logistics

- Life sciences and cold chain

- Cross-border trade and customs

Region:

- North America

- Latin America

- Western Europe

- Eastern Europe

- East Asia

- South Asia and Pacific

- Middle East and Africa

Bibliography

- Božić, D., Živičnjak, M., Stanković, R., & Ignjatić, A. (2024). Impact of the Product Master Data Quality on the Logistics Process Performance. Logistics, 8(2), 43.

- Bureau of Transportation Statistics. (2025, March 20). TransBorder Freight Annual Report 2024. U.S. Department of Transportation.

- Council of the European Union. (2024, July 26). Commission Delegated Regulation establishing the eFTI common data set and eFTI data subsets in relation to Regulation (EU) 2020/1056.

- NICDC Logistics Data Services Limited. (2025). 10th Annual Report 2024-2025.

- Press Information Bureau. (2025, September 16). India marks three years of National Logistics Policy. Government of India.

This bibliography is provided for reader reference. The full FMI report contains the complete reference list with primary source documentation.

This Report Addresses

- Specific commercial penalties pushing global 3PLs to adopt automated EDI mapping remediation protocols.

- Strategic advantages gained by mid-market shippers utilizing managed services for continuous format translation maintenance.

- Automated routing failures that rigorous address and location normalization layers help prevent.

- Complex integration dynamics surrounding legacy ERP architectures within large enterprise freight networks.

- Proprietary translation script libraries protecting incumbent market share against generic machine learning competitors.

- Regional infrastructure mandates accelerating digital waybill standardization across emerging South Asian transport corridors.

- Margin erosion caused by manual exception handling when carrier event codes are mistranslated.

- Post-Brexit regulatory requirements driving uptake of automated customs document harmonization software.

Frequently Asked Questions

What are logistics data quality and normalization services?

They are automated workflows and managed services that standardize disparate supply chain information, translating fragmented carrier codes and addresses into unified records so shipments can be routed accurately.
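To illustrate the carrier-code translation described here, the sketch below maps carrier-specific status codes to a single canonical event vocabulary. The carrier names, codes, and the `normalize_event` helper are hypothetical; real carriers publish their own code lists, and commercial services maintain far larger rule sets.

```python
from dataclasses import dataclass

# Hypothetical carrier-specific status codes mapped to one canonical vocabulary.
# These values are illustrative only and do not reflect any real carrier's codes.
CARRIER_EVENT_MAP: dict[str, dict[str, str]] = {
    "CARRIER_A": {"PU": "PICKED_UP", "IT": "IN_TRANSIT", "DL": "DELIVERED"},
    "CARRIER_B": {"P1": "PICKED_UP", "T5": "IN_TRANSIT", "OK": "DELIVERED", "X9": "EXCEPTION"},
}


@dataclass(frozen=True)
class TrackingEvent:
    carrier: str
    raw_code: str
    canonical_code: str


def normalize_event(carrier: str, raw_code: str) -> TrackingEvent:
    """Translate a carrier's raw status code into the canonical vocabulary.

    Unknown carriers or codes are flagged as UNMAPPED so they can be routed to
    an exception queue instead of silently corrupting downstream records.
    """
    carrier_key = carrier.strip().upper()
    code = raw_code.strip().upper()
    mapping = CARRIER_EVENT_MAP.get(carrier_key, {})
    return TrackingEvent(carrier_key, code, mapping.get(code, "UNMAPPED"))


for carrier, raw in [("carrier_a", "dl"), ("CARRIER_B", " OK "), ("CARRIER_B", "Z7")]:
    print(normalize_event(carrier, raw))
```

Keeping the mapping as data rather than code is one way to let rules be updated when a carrier changes its codes; the explicit UNMAPPED value makes gaps visible instead of hiding them.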

What is the projected logistics data quality market size by 2036?

Revenue is projected to reach USD 4.9 billion by 2036, driven largely by procurement teams seeking to eliminate manual exception handling across regional networks.

Why do shippers need data normalization across carriers and systems?

Each carrier and region structures its data differently, which disrupts automated freight auditing. Harmonizing these records reduces the margin leakage that occurs when automated invoice reconciliation fails and charges must be checked by hand.

What was the baseline valuation recorded in 2025?

The market was valued at USD 1.60 billion in 2025, as shippers procured cleansing tools to standardize incoming carrier telemetry.

Which data domains create the most logistics errors?

Shipment tracking data and address coordinates generate the most friction, frequently degrading the accuracy of predicted arrival times.

What is the difference between MDM software and outsourced normalization services?

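MDM software gives an in-house team tools to govern master records, while outsourced normalization services also supply the people and mapping rules. External specialists absorb the rework when carriers alter status codes, converting unpredictable IT repair costs into predictable operating expenses.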

How much do logistics data cleansing services typically cost?

Costs scale with the number of active carrier connections. Managed solutions become more economical than in-house maintenance only when those connections exceed what internal developers can realistically maintain.

Which industries buy these services most often?

Heavy manufacturers and large retail groups buy most often, funding master data overhauls that enable centralized, automated freight auditing across global operations.

How is eFTI changing freight data requirements in Europe?

The EU electronic freight transport information (eFTI) framework defines a common data set for regulatory freight information, so operators must translate diverse internal and carrier formats into that harmonized structure.

How do customs and trade-data models affect service demand?

Automated port gates require strict adherence to trade data templates, forcing compliance officers to deploy formatting layers that bring records into line before submission.

What KPIs improve after a logistics data quality program?

Sortation throughput improves most visibly: clean barcode metadata lets packages route through high-speed conveyors without manual intervention, which matters most during peak holiday seasons.

Why do managed services lead the delivery model segment?

Internal developer teams frequently lack the proprietary translation scripts needed to maintain hundreds of frequently changing regional vendor endpoints.

How does India compare to China in regional growth?

India is projected to grow at a 12.8% CAGR, supported by unified national logistics documentation, while China is projected to grow at 11.9% as port automation and export coordination raise demand for cleaner manifest and shipment data.
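Compounding the two regional rates shows how a gap of under one percentage point widens over the forecast horizon. This sketch assumes both rates hold constant for ten years (2026 to 2036); `growth_multiple` is an illustrative helper, not part of the report's model.

```python
def growth_multiple(rate: float, years: int) -> float:
    """Factor by which a value grows when compounded at `rate` for `years` periods."""
    return (1 + rate) ** years


YEARS = 10  # assumption: constant rates over 2026 to 2036
rates = {"India": 0.128, "China": 0.119}

for country, rate in rates.items():
    print(f"{country}: {growth_multiple(rate, YEARS):.2f}x over {YEARS} years")
```

Under these assumptions India's market roughly multiplies by about 3.3 and China's by about 3.1 over the decade.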

What structural friction slows enterprise implementation timelines?

Many niche regional carriers run proprietary legacy systems that lack modern API connectivity and rely instead on inconsistent flat-file exchanges.

How do major integrators sustain competitive advantage?

Incumbents hold large proprietary libraries of preconfigured mapping scripts that generic algorithmic models cannot easily infer or replicate.

What specific penalty forces urgent procurement decisions?

Finance directors see margins erode when analysts must manually verify thousands of rejected freight charges one by one.

Why is address standardization critical for mid-market shippers?

Automated pricing engines require clean geographic inputs. Mid-market shippers that standardize addresses can adopt these tools quickly, gaining an agility advantage over larger rivals slowed by legacy data.
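A minimal sketch of the kind of address standardization described here, assuming US-style addresses: it uppercases text, strips common punctuation, collapses whitespace, abbreviates a few street suffixes, and trims ZIP+4 codes to five digits. The suffix table and function names are hypothetical; production systems typically rely on postal authority reference data (for example, USPS Publication 28 in the United States) and geocoding services rather than a hand-written table.

```python
import re

# Hypothetical, deliberately small suffix table for illustration only.
STREET_SUFFIXES = {"STREET": "ST", "AVENUE": "AVE", "ROAD": "RD", "BOULEVARD": "BLVD"}


def normalize_address_line(line: str) -> str:
    """Uppercase, replace punctuation with spaces, collapse whitespace, abbreviate suffixes."""
    cleaned = re.sub(r"[.,#]", " ", line.upper())
    tokens = cleaned.split()  # split() with no argument also collapses repeated spaces
    return " ".join(STREET_SUFFIXES.get(token, token) for token in tokens)


def normalize_us_zip(zip_code: str) -> str:
    """Keep the first five digits of a US ZIP code; return '' if fewer than five digits."""
    digits = re.sub(r"\D", "", zip_code)
    return digits[:5] if len(digits) >= 5 else ""


print(normalize_address_line("123  main Street, Suite 4"))
print(normalize_us_zip("02139-4307"))
```

Returning an empty string for an unusable postal code, rather than guessing, lets a pipeline route the record to review instead of passing a bad geographic input to a pricing engine.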

How do machine learning algorithms alter mapping protocols?

Generative models can interpret obscure legacy text strings with less manual configuration, accelerating onboarding for regional transport partners that do not follow standard formats.

What risk arises from unstandardized route coordinate data?

Failing to harmonize route telemetry causes customer-facing tracking dashboards to display illogical transit events, undermining customer trust in the operator.

Why do automotive supply chains require specialized cleansing?

Accurate format translation provides visibility into critical component movements, helping prevent costly assembly line stoppages across complex vendor networks.

How does edge deployment benefit high-speed logistics facilities?

Translating raw telemetry directly at the sensor level reduces processing latency and keeps data synchronized with demanding mechanical sorting equipment.

What tension exists between buyers and software vendors?

Shippers actively resist proprietary ecosystems, demanding modular translation layers capable of exporting master records into independent auditing tools.

How do environmental reporting mandates influence data requirements?

Emissions reporting depends on shipment distance calculations. If those calculations rely on unstandardized geographic identifiers, operations managers risk compliance failures.

What advantage do platform-enabled services offer over project implementations?

Platform-enabled services update mapping rules continuously, whereas static project implementations degrade quickly when transport partners modify their formats.

Table of Content

- Executive Summary

- Global Market Outlook

- Demand-side Trends

- Supply-side Trends

- Technology Roadmap Analysis

- Analysis and Recommendations

- Market Overview

- Market Coverage / Taxonomy

- Market Definition / Scope / Limitations

- Research Methodology

- Chapter Orientation

- Analytical Lens and Working Hypotheses

- Market Structure, Signals, and Trend Drivers

- Benchmarking and Cross-market Comparability

- Market Sizing, Forecasting, and Opportunity Mapping

- Research Design and Evidence Framework

- Desk Research Programme (Secondary Evidence)

- Company Annual and Sustainability Reports

- Peer-reviewed Journals and Academic Literature

- Corporate Websites, Product Literature, and Technical Notes

- Earnings Decks and Investor Briefings

- Statutory Filings and Regulatory Disclosures

- Technical White Papers and Standards Notes

- Trade Journals, Industry Magazines, and Analyst Briefs

- Conference Proceedings, Webinars, and Seminar Materials

- Government Statistics Portals and Public Data Releases

- Press Releases and Reputable Media Coverage

- Specialist Newsletters and Curated Briefings

- Sector Databases and Reference Repositories

- FMI Internal Proprietary Databases and Historical Market Datasets

- Subscription Datasets and Paid Sources

- Social Channels, Communities, and Digital Listening Inputs

- Additional Desk Sources

- Expert Input and Fieldwork (Primary Evidence)

- Primary Modes

- Qualitative Interviews and Expert Elicitation

- Quantitative Surveys and Structured Data Capture

- Blended Approach

- Why Primary Evidence is Used

- Field Techniques

- Interviews

- Surveys

- Focus Groups

- Observational and In-context Research

- Social and Community Interactions

- Stakeholder Universe Engaged

- C-suite Leaders

- Board Members

- Presidents and Vice Presidents

- R&D and Innovation Heads

- Technical Specialists

- Domain Subject-matter Experts

- Scientists

- Physicians and Other Healthcare Professionals

- Governance, Ethics, and Data Stewardship

- Research Ethics

- Data Integrity and Handling

- Tooling, Models, and Reference Databases

- Data Engineering and Model Build

- Data Acquisition and Ingestion

- Cleaning, Normalisation, and Verification

- Synthesis, Triangulation, and Analysis

- Quality Assurance and Audit Trail

- Market Background

- Market Dynamics

- Drivers

- Restraints

- Opportunity

- Trends

- Scenario Forecast

- Demand in Optimistic Scenario

- Demand in Likely Scenario

- Demand in Conservative Scenario

- Opportunity Map Analysis

- Product Life Cycle Analysis

- Supply Chain Analysis

- Investment Feasibility Matrix

- Value Chain Analysis

- PESTLE and Porter’s Analysis

- Regulatory Landscape

- Regional Parent Market Outlook

- Production and Consumption Statistics

- Import and Export Statistics

- Global Market Analysis 2021 to 2025 and Forecast, 2026 to 2036

- Historical Market Size Value (USD Million) Analysis, 2021 to 2025

- Current and Future Market Size Value (USD Million) Projections, 2026 to 2036

- Y-o-Y Growth Trend Analysis

- Absolute $ Opportunity Analysis

- Global Market Pricing Analysis 2021 to 2025 and Forecast 2026 to 2036

- Global Market Analysis 2021 to 2025 and Forecast 2026 to 2036, By Service type

- Introduction / Key Findings

- Historical Market Size Value (USD Million) Analysis By Service type, 2021 to 2025

- Current and Future Market Size Value (USD Million) Analysis and Forecast By Service type, 2026 to 2036

- Data cleansing and enrichment

- Master data harmonization

- Address and location normalization

- Carrier and event-code normalization

- EDI and API mapping remediation

- Y-o-Y Growth Trend Analysis By Service type, 2021 to 2025

- Absolute $ Opportunity Analysis By Service type, 2026 to 2036

- Global Market Analysis 2021 to 2025 and Forecast 2026 to 2036, By Delivery model

- Introduction / Key Findings

- Historical Market Size Value (USD Million) Analysis By Delivery model, 2021 to 2025

- Current and Future Market Size Value (USD Million) Analysis and Forecast By Delivery model, 2026 to 2036

- Managed services

- Project-based implementation

- Platform-enabled service

- Advisory and audit retainers

- Y-o-Y Growth Trend Analysis By Delivery model, 2021 to 2025

- Absolute $ Opportunity Analysis By Delivery model, 2026 to 2036

- Global Market Analysis 2021 to 2025 and Forecast 2026 to 2036, By Data domain

- Introduction / Key Findings

- Historical Market Size Value (USD Million) Analysis By Data domain, 2021 to 2025

- Current and Future Market Size Value (USD Million) Analysis and Forecast By Data domain, 2026 to 2036

- Shipment and tracking data

- Product and SKU master data

- Partner and supplier master data

- Location and address master data

- Customs and trade document data

- Y-o-Y Growth Trend Analysis By Data domain, 2021 to 2025

- Absolute $ Opportunity Analysis By Data domain, 2026 to 2036

- Global Market Analysis 2021 to 2025 and Forecast 2026 to 2036, By Client type

- Introduction / Key Findings

- Historical Market Size Value (USD Million) Analysis By Client type, 2021 to 2025

- Current and Future Market Size Value (USD Million) Analysis and Forecast By Client type, 2026 to 2036

- Large enterprises

- Upper mid-market shippers

- 3PLs and freight forwarders

- SMEs

- Y-o-Y Growth Trend Analysis By Client type, 2021 to 2025

- Absolute $ Opportunity Analysis By Client type, 2026 to 2036

- Global Market Analysis 2021 to 2025 and Forecast 2026 to 2036, By End Use

- Introduction / Key Findings

- Historical Market Size Value (USD Million) Analysis By End Use, 2021 to 2025

- Current and Future Market Size Value (USD Million) Analysis and Forecast By End Use, 2026 to 2036

- Retail and e-commerce logistics

- Manufacturing supply chains

- Automotive logistics

- Life sciences and cold chain

- Cross-border trade and customs

- Y-o-Y Growth Trend Analysis By End Use, 2021 to 2025

- Absolute $ Opportunity Analysis By End Use, 2026 to 2036

- Global Market Analysis 2021 to 2025 and Forecast 2026 to 2036, By Region

- Introduction

- Historical Market Size Value (USD Million) Analysis By Region, 2021 to 2025

- Current Market Size Value (USD Million) Analysis and Forecast By Region, 2026 to 2036

- North America

- Latin America

- Western Europe

- Eastern Europe

- East Asia

- South Asia and Pacific

- Middle East & Africa

- Market Attractiveness Analysis By Region

- North America Market Analysis 2021 to 2025 and Forecast 2026 to 2036, By Country

- Historical Market Size Value (USD Million) Trend Analysis By Market Taxonomy, 2021 to 2025

- Market Size Value (USD Million) Forecast By Market Taxonomy, 2026 to 2036

- By Country

- USA

- Canada

- Mexico

- By Service type

- By Delivery model

- By Data domain

- By Client type

- By End Use

- Market Attractiveness Analysis

- By Country

- By Service type

- By Delivery model

- By Data domain

- By Client type

- By End Use

- Key Takeaways

- Latin America Market Analysis 2021 to 2025 and Forecast 2026 to 2036, By Country

- Historical Market Size Value (USD Million) Trend Analysis By Market Taxonomy, 2021 to 2025

- Market Size Value (USD Million) Forecast By Market Taxonomy, 2026 to 2036

- By Country

- Brazil

- Chile

- Rest of Latin America

- By Service type

- By Delivery model

- By Data domain

- By Client type

- By End Use

- Market Attractiveness Analysis

- By Country

- By Service type

- By Delivery model

- By Data domain

- By Client type

- By End Use

- Key Takeaways

- Western Europe Market Analysis 2021 to 2025 and Forecast 2026 to 2036, By Country

- Historical Market Size Value (USD Million) Trend Analysis By Market Taxonomy, 2021 to 2025

- Market Size Value (USD Million) Forecast By Market Taxonomy, 2026 to 2036

- By Country

- Germany

- UK

- Italy

- Spain

- France

- Nordic

- BENELUX

- Rest of Western Europe

- By Service type

- By Delivery model

- By Data domain

- By Client type

- By End Use

- Market Attractiveness Analysis

- By Country

- By Service type

- By Delivery model

- By Data domain

- By Client type

- By End Use

- Key Takeaways

- Eastern Europe Market Analysis 2021 to 2025 and Forecast 2026 to 2036, By Country

- Historical Market Size Value (USD Million) Trend Analysis By Market Taxonomy, 2021 to 2025

- Market Size Value (USD Million) Forecast By Market Taxonomy, 2026 to 2036

- By Country

- Russia

- Poland

- Hungary

- Balkan & Baltic

- Rest of Eastern Europe

- By Service type

- By Delivery model

- By Data domain

- By Client type

- By End Use

- Market Attractiveness Analysis

- By Country

- By Service type

- By Delivery model

- By Data domain

- By Client type

- By End Use

- Key Takeaways

- East Asia Market Analysis 2021 to 2025 and Forecast 2026 to 2036, By Country

- Historical Market Size Value (USD Million) Trend Analysis By Market Taxonomy, 2021 to 2025

- Market Size Value (USD Million) Forecast By Market Taxonomy, 2026 to 2036

- By Country

- China

- Japan

- South Korea

- By Service type

- By Delivery model

- By Data domain

- By Client type

- By End Use

- Market Attractiveness Analysis

- By Country

- By Service type

- By Delivery model

- By Data domain

- By Client type

- By End Use

- Key Takeaways

- South Asia and Pacific Market Analysis 2021 to 2025 and Forecast 2026 to 2036, By Country

- Historical Market Size Value (USD Million) Trend Analysis By Market Taxonomy, 2021 to 2025

- Market Size Value (USD Million) Forecast By Market Taxonomy, 2026 to 2036

- By Country

- India

- ASEAN

- Australia & New Zealand

- Rest of South Asia and Pacific

- By Service type

- By Delivery model

- By Data domain

- By Client type

- By End Use

- Market Attractiveness Analysis

- By Country

- By Service type

- By Delivery model

- By Data domain

- By Client type

- By End Use

- Key Takeaways

- Middle East & Africa Market Analysis 2021 to 2025 and Forecast 2026 to 2036, By Country

- Historical Market Size Value (USD Million) Trend Analysis By Market Taxonomy, 2021 to 2025

- Market Size Value (USD Million) Forecast By Market Taxonomy, 2026 to 2036

- By Country

- Kingdom of Saudi Arabia

- Other GCC Countries

- Turkiye

- South Africa

- Other African Union

- Rest of Middle East & Africa

- By Service type

- By Delivery model

- By Data domain

- By Client type

- By End Use

- Market Attractiveness Analysis

- By Country

- By Service type

- By Delivery model

- By Data domain

- By Client type

- By End Use

- Key Takeaways

- Key Countries Market Analysis

- USA

- Pricing Analysis

- Market Share Analysis, 2025

- By Service type

- By Delivery model

- By Data domain

- By Client type

- By End Use

- Canada

- Pricing Analysis

- Market Share Analysis, 2025

- By Service type

- By Delivery model

- By Data domain

- By Client type

- By End Use

- Mexico

- Pricing Analysis

- Market Share Analysis, 2025

- By Service type

- By Delivery model

- By Data domain

- By Client type

- By End Use

- Brazil

- Pricing Analysis

- Market Share Analysis, 2025

- By Service type

- By Delivery model

- By Data domain

- By Client type

- By End Use

- Chile

- Pricing Analysis

- Market Share Analysis, 2025

- By Service type

- By Delivery model

- By Data domain

- By Client type

- By End Use

- Germany

- Pricing Analysis

- Market Share Analysis, 2025

- By Service type

- By Delivery model

- By Data domain

- By Client type

- By End Use

- UK

- Pricing Analysis

- Market Share Analysis, 2025

- By Service type

- By Delivery model

- By Data domain

- By Client type

- By End Use

- Italy

- Pricing Analysis

- Market Share Analysis, 2025

- By Service type

- By Delivery model

- By Data domain

- By Client type

- By End Use

- Spain

- Pricing Analysis

- Market Share Analysis, 2025

- By Service type

- By Delivery model

- By Data domain

- By Client type

- By End Use

- France

- Pricing Analysis

- Market Share Analysis, 2025

- By Service type

- By Delivery model

- By Data domain

- By Client type

- By End Use

- India

- Pricing Analysis

- Market Share Analysis, 2025

- By Service type

- By Delivery model

- By Data domain

- By Client type

- By End Use

- ASEAN

- Pricing Analysis

- Market Share Analysis, 2025

- By Service type

- By Delivery model

- By Data domain

- By Client type

- By End Use

- Australia & New Zealand

- Pricing Analysis

- Market Share Analysis, 2025

- By Service type

- By Delivery model

- By Data domain

- By Client type

- By End Use

- China

- Pricing Analysis

- Market Share Analysis, 2025

- By Service type

- By Delivery model

- By Data domain

- By Client type

- By End Use

- Japan

- Pricing Analysis

- Market Share Analysis, 2025

- By Service type

- By Delivery model

- By Data domain

- By Client type

- By End Use

- South Korea

- Pricing Analysis

- Market Share Analysis, 2025

- By Service type

- By Delivery model

- By Data domain

- By Client type

- By End Use

- Russia

- Pricing Analysis

- Market Share Analysis, 2025

- By Service type

- By Delivery model

- By Data domain

- By Client type

- By End Use

- Poland

- Pricing Analysis

- Market Share Analysis, 2025

- By Service type

- By Delivery model

- By Data domain

- By Client type

- By End Use

- Hungary

- Pricing Analysis

- Market Share Analysis, 2025

- By Service type

- By Delivery model

- By Data domain

- By Client type

- By End Use

- Kingdom of Saudi Arabia

- Pricing Analysis

- Market Share Analysis, 2025

- By Service type

- By Delivery model

- By Data domain

- By Client type

- By End Use

- Turkiye

- Pricing Analysis

- Market Share Analysis, 2025

- By Service type

- By Delivery model

- By Data domain

- By Client type

- By End Use

- South Africa

- Pricing Analysis

- Market Share Analysis, 2025

- By Service type

- By Delivery model

- By Data domain

- By Client type

- By End Use

- Market Structure Analysis

- Competition Dashboard

- Competition Benchmarking

- Market Share Analysis of Top Players

- By Region

- By Service type

- By Delivery model

- By Data domain

- By Client type

- By End Use

- Competition Analysis

- Competition Deep Dive

- Accenture

- Overview

- Product Portfolio

- Profitability by Market Segments (Product/Age/Sales Channel/Region)

- Sales Footprint

- Strategy Overview

- Marketing Strategy

- Product Strategy

- Channel Strategy

- IBM

- Infosys

- Wipro

- Tata Consultancy Services

- Genpact

- OpenText

- Assumptions & Acronyms Used

List of Tables

- Table 1: Global Market Value (USD Million) Forecast by Region, 2021 to 2036

- Table 2: Global Market Value (USD Million) Forecast by Service type, 2021 to 2036

- Table 3: Global Market Value (USD Million) Forecast by Delivery model, 2021 to 2036

- Table 4: Global Market Value (USD Million) Forecast by Data domain, 2021 to 2036

- Table 5: Global Market Value (USD Million) Forecast by Client type, 2021 to 2036

- Table 6: Global Market Value (USD Million) Forecast by End Use, 2021 to 2036

- Table 7: North America Market Value (USD Million) Forecast by Country, 2021 to 2036

- Table 8: North America Market Value (USD Million) Forecast by Service type, 2021 to 2036

- Table 9: North America Market Value (USD Million) Forecast by Delivery model, 2021 to 2036

- Table 10: North America Market Value (USD Million) Forecast by Data domain, 2021 to 2036

- Table 11: North America Market Value (USD Million) Forecast by Client type, 2021 to 2036

- Table 12: North America Market Value (USD Million) Forecast by End Use, 2021 to 2036

- Table 13: Latin America Market Value (USD Million) Forecast by Country, 2021 to 2036

- Table 14: Latin America Market Value (USD Million) Forecast by Service type, 2021 to 2036

- Table 15: Latin America Market Value (USD Million) Forecast by Delivery model, 2021 to 2036

- Table 16: Latin America Market Value (USD Million) Forecast by Data domain, 2021 to 2036

- Table 17: Latin America Market Value (USD Million) Forecast by Client type, 2021 to 2036

- Table 18: Latin America Market Value (USD Million) Forecast by End Use, 2021 to 2036

- Table 19: Western Europe Market Value (USD Million) Forecast by Country, 2021 to 2036

- Table 20: Western Europe Market Value (USD Million) Forecast by Service type, 2021 to 2036

- Table 21: Western Europe Market Value (USD Million) Forecast by Delivery model, 2021 to 2036

- Table 22: Western Europe Market Value (USD Million) Forecast by Data domain, 2021 to 2036

- Table 23: Western Europe Market Value (USD Million) Forecast by Client type, 2021 to 2036

- Table 24: Western Europe Market Value (USD Million) Forecast by End Use, 2021 to 2036

- Table 25: Eastern Europe Market Value (USD Million) Forecast by Country, 2021 to 2036

- Table 26: Eastern Europe Market Value (USD Million) Forecast by Service type, 2021 to 2036

- Table 27: Eastern Europe Market Value (USD Million) Forecast by Delivery model, 2021 to 2036

- Table 28: Eastern Europe Market Value (USD Million) Forecast by Data domain, 2021 to 2036

- Table 29: Eastern Europe Market Value (USD Million) Forecast by Client type, 2021 to 2036

- Table 30: Eastern Europe Market Value (USD Million) Forecast by End Use, 2021 to 2036

- Table 31: East Asia Market Value (USD Million) Forecast by Country, 2021 to 2036

- Table 32: East Asia Market Value (USD Million) Forecast by Service type, 2021 to 2036

- Table 33: East Asia Market Value (USD Million) Forecast by Delivery model, 2021 to 2036

- Table 34: East Asia Market Value (USD Million) Forecast by Data domain, 2021 to 2036

- Table 35: East Asia Market Value (USD Million) Forecast by Client type, 2021 to 2036

- Table 36: East Asia Market Value (USD Million) Forecast by End Use, 2021 to 2036

- Table 37: South Asia and Pacific Market Value (USD Million) Forecast by Country, 2021 to 2036

- Table 38: South Asia and Pacific Market Value (USD Million) Forecast by Service type, 2021 to 2036

- Table 39: South Asia and Pacific Market Value (USD Million) Forecast by Delivery model, 2021 to 2036

- Table 40: South Asia and Pacific Market Value (USD Million) Forecast by Data domain, 2021 to 2036

- Table 41: South Asia and Pacific Market Value (USD Million) Forecast by Client type, 2021 to 2036

- Table 42: South Asia and Pacific Market Value (USD Million) Forecast by End Use, 2021 to 2036

- Table 43: Middle East & Africa Market Value (USD Million) Forecast by Country, 2021 to 2036

- Table 44: Middle East & Africa Market Value (USD Million) Forecast by Service type, 2021 to 2036

- Table 45: Middle East & Africa Market Value (USD Million) Forecast by Delivery model, 2021 to 2036

- Table 46: Middle East & Africa Market Value (USD Million) Forecast by Data domain, 2021 to 2036

- Table 47: Middle East & Africa Market Value (USD Million) Forecast by Client type, 2021 to 2036

- Table 48: Middle East & Africa Market Value (USD Million) Forecast by End Use, 2021 to 2036

List of Figures

- Figure 1: Global Market Pricing Analysis

- Figure 2: Global Market Value (USD Million) Forecast 2021-2036

- Figure 3: Global Market Value Share and BPS Analysis by Service type, 2026 and 2036

- Figure 4: Global Market Y-o-Y Growth Comparison by Service type, 2026-2036

- Figure 5: Global Market Attractiveness Analysis by Service type

- Figure 6: Global Market Value Share and BPS Analysis by Delivery model, 2026 and 2036

- Figure 7: Global Market Y-o-Y Growth Comparison by Delivery model, 2026-2036

- Figure 8: Global Market Attractiveness Analysis by Delivery model

- Figure 9: Global Market Value Share and BPS Analysis by Data domain, 2026 and 2036

- Figure 10: Global Market Y-o-Y Growth Comparison by Data domain, 2026-2036

- Figure 11: Global Market Attractiveness Analysis by Data domain

- Figure 12: Global Market Value Share and BPS Analysis by Client type, 2026 and 2036

- Figure 13: Global Market Y-o-Y Growth Comparison by Client type, 2026-2036

- Figure 14: Global Market Attractiveness Analysis by Client type

- Figure 15: Global Market Value Share and BPS Analysis by End Use, 2026 and 2036

- Figure 16: Global Market Y-o-Y Growth Comparison by End Use, 2026-2036

- Figure 17: Global Market Attractiveness Analysis by End Use

- Figure 18: Global Market Value (USD Million) Share and BPS Analysis by Region, 2026 and 2036

- Figure 19: Global Market Y-o-Y Growth Comparison by Region, 2026-2036

- Figure 20: Global Market Attractiveness Analysis by Region

- Figure 21: North America Market Incremental Dollar Opportunity, 2026-2036

- Figure 22: Latin America Market Incremental Dollar Opportunity, 2026-2036

- Figure 23: Western Europe Market Incremental Dollar Opportunity, 2026-2036

- Figure 24: Eastern Europe Market Incremental Dollar Opportunity, 2026-2036

- Figure 25: East Asia Market Incremental Dollar Opportunity, 2026-2036

- Figure 26: South Asia and Pacific Market Incremental Dollar Opportunity, 2026-2036

- Figure 27: Middle East & Africa Market Incremental Dollar Opportunity, 2026-2036

- Figure 28: North America Market Value Share and BPS Analysis by Country, 2026 and 2036

- Figure 29: North America Market Value Share and BPS Analysis by Service type, 2026 and 2036

- Figure 30: North America Market Y-o-Y Growth Comparison by Service type, 2026-2036

- Figure 31: North America Market Attractiveness Analysis by Service type

- Figure 32: North America Market Value Share and BPS Analysis by Delivery model, 2026 and 2036

- Figure 33: North America Market Y-o-Y Growth Comparison by Delivery model, 2026-2036

- Figure 34: North America Market Attractiveness Analysis by Delivery model

- Figure 35: North America Market Value Share and BPS Analysis by Data domain, 2026 and 2036

- Figure 36: North America Market Y-o-Y Growth Comparison by Data domain, 2026-2036

- Figure 37: North America Market Attractiveness Analysis by Data domain

- Figure 38: North America Market Value Share and BPS Analysis by Client type, 2026 and 2036

- Figure 39: North America Market Y-o-Y Growth Comparison by Client type, 2026-2036

- Figure 40: North America Market Attractiveness Analysis by Client type

- Figure 41: North America Market Value Share and BPS Analysis by End Use, 2026 and 2036

- Figure 42: North America Market Y-o-Y Growth Comparison by End Use, 2026-2036

- Figure 43: North America Market Attractiveness Analysis by End Use

- Figure 44: Latin America Market Value Share and BPS Analysis by Country, 2026 and 2036

- Figure 45: Latin America Market Value Share and BPS Analysis by Service type, 2026 and 2036

- Figure 46: Latin America Market Y-o-Y Growth Comparison by Service type, 2026-2036

- Figure 47: Latin America Market Attractiveness Analysis by Service type

- Figure 48: Latin America Market Value Share and BPS Analysis by Delivery model, 2026 and 2036

- Figure 49: Latin America Market Y-o-Y Growth Comparison by Delivery model, 2026-2036

- Figure 50: Latin America Market Attractiveness Analysis by Delivery model

- Figure 51: Latin America Market Value Share and BPS Analysis by Data domain, 2026 and 2036

- Figure 52: Latin America Market Y-o-Y Growth Comparison by Data domain, 2026-2036

- Figure 53: Latin America Market Attractiveness Analysis by Data domain

- Figure 54: Latin America Market Value Share and BPS Analysis by Client type, 2026 and 2036

- Figure 55: Latin America Market Y-o-Y Growth Comparison by Client type, 2026-2036

- Figure 56: Latin America Market Attractiveness Analysis by Client type

- Figure 57: Latin America Market Value Share and BPS Analysis by End Use, 2026 and 2036

- Figure 58: Latin America Market Y-o-Y Growth Comparison by End Use, 2026-2036

- Figure 59: Latin America Market Attractiveness Analysis by End Use

- Figure 60: Western Europe Market Value Share and BPS Analysis by Country, 2026 and 2036

- Figure 61: Western Europe Market Value Share and BPS Analysis by Service type, 2026 and 2036

- Figure 62: Western Europe Market Y-o-Y Growth Comparison by Service type, 2026-2036

- Figure 63: Western Europe Market Attractiveness Analysis by Service type

- Figure 64: Western Europe Market Value Share and BPS Analysis by Delivery model, 2026 and 2036

- Figure 65: Western Europe Market Y-o-Y Growth Comparison by Delivery model, 2026-2036

- Figure 66: Western Europe Market Attractiveness Analysis by Delivery model

- Figure 67: Western Europe Market Value Share and BPS Analysis by Data domain, 2026 and 2036

- Figure 68: Western Europe Market Y-o-Y Growth Comparison by Data domain, 2026-2036

- Figure 69: Western Europe Market Attractiveness Analysis by Data domain

- Figure 70: Western Europe Market Value Share and BPS Analysis by Client type, 2026 and 2036

- Figure 71: Western Europe Market Y-o-Y Growth Comparison by Client type, 2026-2036

- Figure 72: Western Europe Market Attractiveness Analysis by Client type

- Figure 73: Western Europe Market Value Share and BPS Analysis by End Use, 2026 and 2036

- Figure 74: Western Europe Market Y-o-Y Growth Comparison by End Use, 2026-2036

- Figure 75: Western Europe Market Attractiveness Analysis by End Use

- Figure 76: Eastern Europe Market Value Share and BPS Analysis by Country, 2026 and 2036

- Figure 77: Eastern Europe Market Value Share and BPS Analysis by Service type, 2026 and 2036

- Figure 78: Eastern Europe Market Y-o-Y Growth Comparison by Service type, 2026-2036

- Figure 79: Eastern Europe Market Attractiveness Analysis by Service type

- Figure 80: Eastern Europe Market Value Share and BPS Analysis by Delivery model, 2026 and 2036

- Figure 81: Eastern Europe Market Y-o-Y Growth Comparison by Delivery model, 2026-2036

- Figure 82: Eastern Europe Market Attractiveness Analysis by Delivery model

- Figure 83: Eastern Europe Market Value Share and BPS Analysis by Data domain, 2026 and 2036

- Figure 84: Eastern Europe Market Y-o-Y Growth Comparison by Data domain, 2026-2036

- Figure 85: Eastern Europe Market Attractiveness Analysis by Data domain

- Figure 86: Eastern Europe Market Value Share and BPS Analysis by Client type, 2026 and 2036

- Figure 87: Eastern Europe Market Y-o-Y Growth Comparison by Client type, 2026-2036

- Figure 88: Eastern Europe Market Attractiveness Analysis by Client type

- Figure 89: Eastern Europe Market Value Share and BPS Analysis by End Use, 2026 and 2036

- Figure 90: Eastern Europe Market Y-o-Y Growth Comparison by End Use, 2026-2036

- Figure 91: Eastern Europe Market Attractiveness Analysis by End Use

- Figure 92: East Asia Market Value Share and BPS Analysis by Country, 2026 and 2036

- Figure 93: East Asia Market Value Share and BPS Analysis by Service type, 2026 and 2036

- Figure 94: East Asia Market Y-o-Y Growth Comparison by Service type, 2026-2036

- Figure 95: East Asia Market Attractiveness Analysis by Service type

- Figure 96: East Asia Market Value Share and BPS Analysis by Delivery model, 2026 and 2036

- Figure 97: East Asia Market Y-o-Y Growth Comparison by Delivery model, 2026-2036

- Figure 98: East Asia Market Attractiveness Analysis by Delivery model

- Figure 99: East Asia Market Value Share and BPS Analysis by Data domain, 2026 and 2036

- Figure 100: East Asia Market Y-o-Y Growth Comparison by Data domain, 2026-2036

- Figure 101: East Asia Market Attractiveness Analysis by Data domain

- Figure 102: East Asia Market Value Share and BPS Analysis by Client type, 2026 and 2036

- Figure 103: East Asia Market Y-o-Y Growth Comparison by Client type, 2026-2036

- Figure 104: East Asia Market Attractiveness Analysis by Client type

- Figure 105: East Asia Market Value Share and BPS Analysis by End Use, 2026 and 2036

- Figure 106: East Asia Market Y-o-Y Growth Comparison by End Use, 2026-2036

- Figure 107: East Asia Market Attractiveness Analysis by End Use

- Figure 108: South Asia and Pacific Market Value Share and BPS Analysis by Country, 2026 and 2036

- Figure 109: South Asia and Pacific Market Value Share and BPS Analysis by Service type, 2026 and 2036

- Figure 110: South Asia and Pacific Market Y-o-Y Growth Comparison by Service type, 2026-2036

- Figure 111: South Asia and Pacific Market Attractiveness Analysis by Service type

- Figure 112: South Asia and Pacific Market Value Share and BPS Analysis by Delivery model, 2026 and 2036

- Figure 113: South Asia and Pacific Market Y-o-Y Growth Comparison by Delivery model, 2026-2036

- Figure 114: South Asia and Pacific Market Attractiveness Analysis by Delivery model

- Figure 115: South Asia and Pacific Market Value Share and BPS Analysis by Data domain, 2026 and 2036

- Figure 116: South Asia and Pacific Market Y-o-Y Growth Comparison by Data domain, 2026-2036

- Figure 117: South Asia and Pacific Market Attractiveness Analysis by Data domain

- Figure 118: South Asia and Pacific Market Value Share and BPS Analysis by Client type, 2026 and 2036

- Figure 119: South Asia and Pacific Market Y-o-Y Growth Comparison by Client type, 2026-2036

- Figure 120: South Asia and Pacific Market Attractiveness Analysis by Client type

- Figure 121: South Asia and Pacific Market Value Share and BPS Analysis by End Use, 2026 and 2036

- Figure 122: South Asia and Pacific Market Y-o-Y Growth Comparison by End Use, 2026-2036

- Figure 123: South Asia and Pacific Market Attractiveness Analysis by End Use

- Figure 124: Middle East & Africa Market Value Share and BPS Analysis by Country, 2026 and 2036

- Figure 125: Middle East & Africa Market Value Share and BPS Analysis by Service type, 2026 and 2036

- Figure 126: Middle East & Africa Market Y-o-Y Growth Comparison by Service type, 2026-2036

- Figure 127: Middle East & Africa Market Attractiveness Analysis by Service type

- Figure 128: Middle East & Africa Market Value Share and BPS Analysis by Delivery model, 2026 and 2036

- Figure 129: Middle East & Africa Market Y-o-Y Growth Comparison by Delivery model, 2026-2036

- Figure 130: Middle East & Africa Market Attractiveness Analysis by Delivery model

- Figure 131: Middle East & Africa Market Value Share and BPS Analysis by Data domain, 2026 and 2036

- Figure 132: Middle East & Africa Market Y-o-Y Growth Comparison by Data domain, 2026-2036

- Figure 133: Middle East & Africa Market Attractiveness Analysis by Data domain

- Figure 134: Middle East & Africa Market Value Share and BPS Analysis by Client type, 2026 and 2036

- Figure 135: Middle East & Africa Market Y-o-Y Growth Comparison by Client type, 2026-2036

- Figure 136: Middle East & Africa Market Attractiveness Analysis by Client type

- Figure 137: Middle East & Africa Market Value Share and BPS Analysis by End Use, 2026 and 2036

- Figure 138: Middle East & Africa Market Y-o-Y Growth Comparison by End Use, 2026-2036

- Figure 139: Middle East & Africa Market Attractiveness Analysis by End Use

- Figure 140: Global Market - Tier Structure Analysis

- Figure 141: Global Market - Company Share Analysis