AI Inference Hardware Performance Benchmarking Test Systems Market Size, Market Forecast and Outlook By FMI

Summary of the AI Inference Hardware Performance Benchmarking Test Systems Market

- Demand and Growth Drivers

- Wider inference deployment across data centers and edge systems is increasing the need to measure latency and power efficiency before hardware moves into production.

- Full-stack validation is gaining importance as infrastructure operators look for test systems that reflect live inference behavior under realistic workloads.

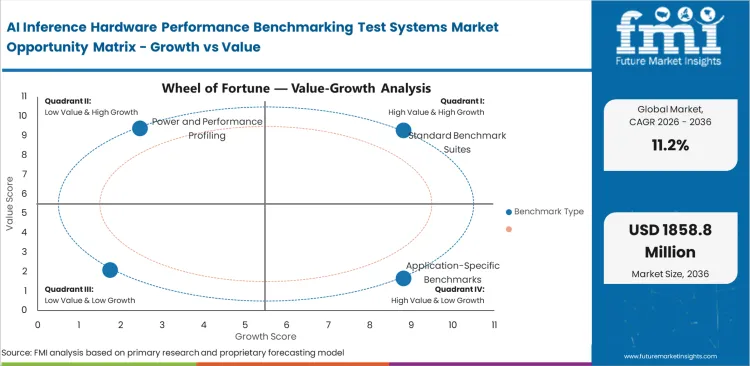

- Product and Segment View

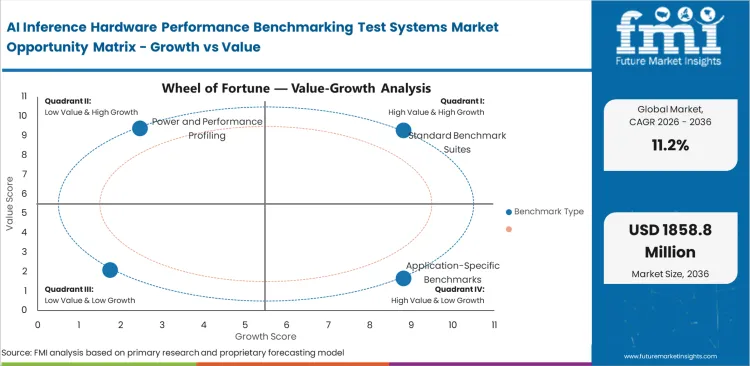

- Standard benchmark suites are expected to lead the segment because they support comparable and repeatable evaluation across architectures and software stacks.

- Benchmark activity is expanding into infrastructure-aware environments where workload emulation and telemetry support deeper performance analysis.

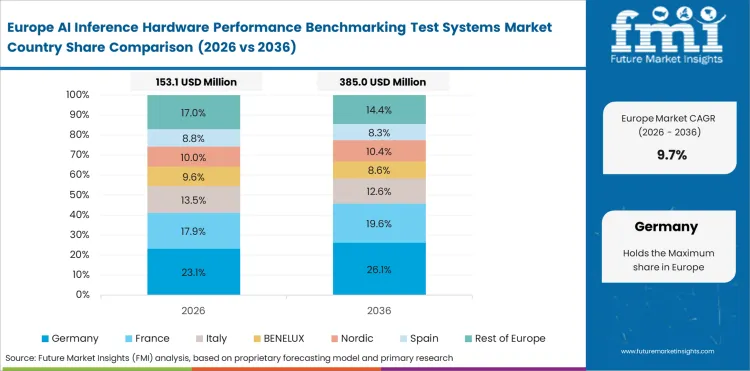

- Geography and Competitive Outlook

- China is likely to be a key growth market due to domestic accelerator programs and strong deployment activity across cloud and embedded inference environments.

- Brazil is expected to record solid growth supported by applied research activity and pilot-scale validation work.

- Companies with stronger benchmark coverage and realistic workload validation capability are expected to strengthen their presence over the forecast period.

- Analyst Opinion

- , Principal Consultant at FMI says, “Demand is moving toward benchmark systems that reflect real inference behavior. Companies that can combine repeatable benchmarking with workload realism are likely to strengthen their position over the forecast period.”

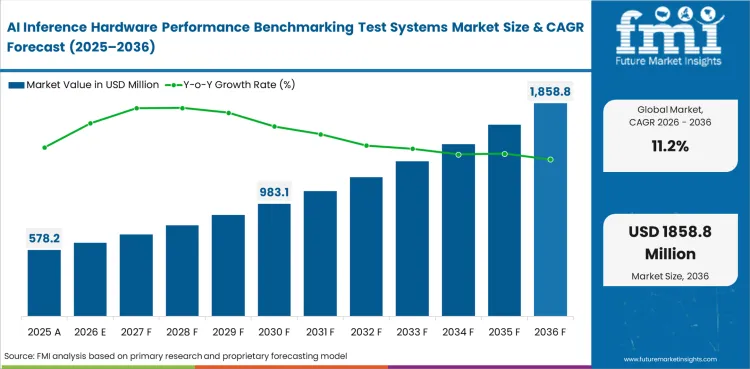

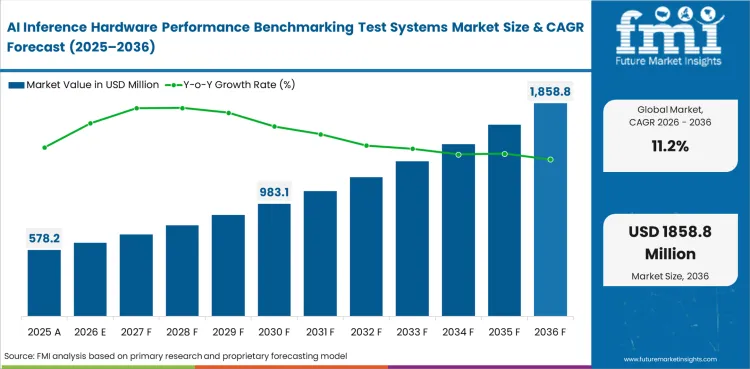

- AI Inference Hardware Performance Benchmarking Test Systems Market Value Analysis

- The market is moving from a benchmark reporting category into a more established part of AI infrastructure validation spending.

- Demand is rising as inference deployment expands across cloud systems and edge hardware, which increases the need to measure latency and power efficiency under realistic workloads.

- Adoption is supported by the need to compare GPUs, CPUs and NPUs with repeatable benchmark methods before production rollout.

- Spending is gaining support from the push for test systems that connect benchmark output with full-stack inference behavior across compute and software layers.

AI Inference Hardware Performance Benchmarking Test Systems Market Definition

The AI inference hardware performance benchmarking test systems market includes hardware platforms, software suites, workload harnesses, telemetry stacks, and validation environments used to measure the inference performance of CPUs, GPUs, NPUs, TPUs, FPGAs, and custom AI accelerators under standardized or application-aligned conditions.

AI Inference Hardware Performance Benchmarking Test Systems Market Inclusions

Included within scope are standard benchmark suites, application-specific benchmark environments, power and performance profiling tools, latency and SLA validation harnesses, workload replay environments, inference emulation platforms, telemetry-linked software stacks, and infrastructure-aware benchmark systems used for AI inference hardware qualification.

AI Inference Hardware Performance Benchmarking Test Systems Market Exclusions

Excluded from scope are pure AI model training benchmarks, generic server stress tools without inference workload awareness, ordinary data center traffic generators that do not model inference behavior, and broad-purpose electronics testers without AI accelerator performance validation capability.

AI Inference Hardware Performance Benchmarking Test Systems Market Research Methodology

- Primary Research: FMI assesses supplier positioning and benchmark scope through structured analysis of benchmark frameworks and official product disclosures tied to inference validation.

- Desk Research: The study uses primary industry sources, including MLCommons benchmark material and public benchmark documentation tied directly to AI inference hardware evaluation.

- Market Sizing and Forecasting: The market is sized through a bottom-up allocation of specialist benchmarking and inference validation demand across cloud and edge AI hardware environments.

- Data Validation and Update Cycle: Forecast assumptions are cross-checked against benchmark framework updates and recent supplier launches from 2025 to 2026.

Why is the AI Inference Hardware Performance Benchmarking Test Systems Market Growing?

- AI inference deployment is expanding across cloud infrastructure and edge hardware, which increases the need to measure latency and power efficiency before systems move into production.

- Broader model coverage in benchmark programs is increasing the value of structured performance comparison across GPUs and custom accelerators.

- Full-stack inference validation is gaining importance as infrastructure operators look for benchmark systems that reflect live workload behavior across compute and networking layers.

AI inference benchmarking is becoming more important in deployment planning as more workloads move into cloud and edge environments. Hardware platforms need objective comparison before broader rollout, and that need now extends across both cloud systems and embedded AI environments.

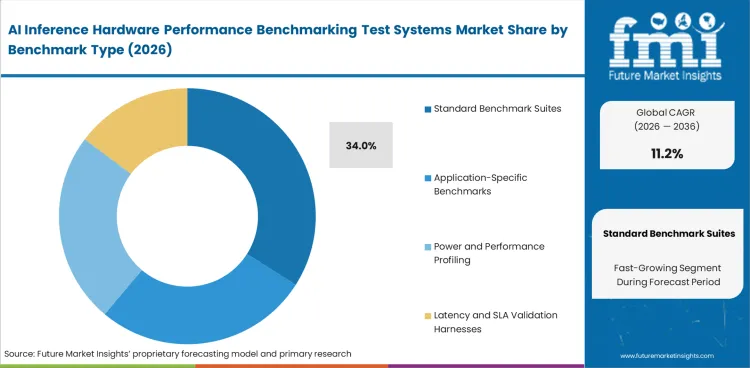

Market Segmentation Analysis

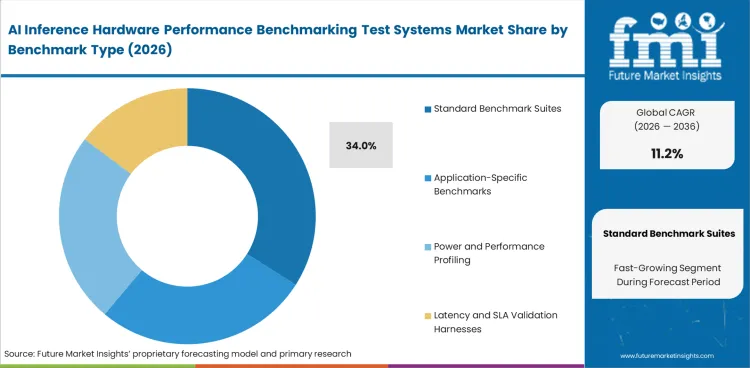

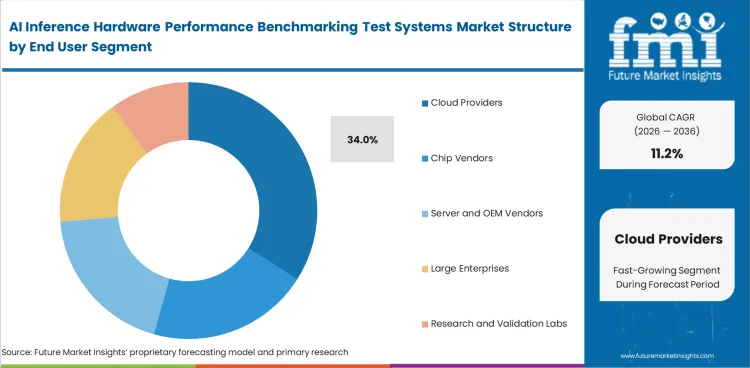

- Standard benchmark suites hold a 34.0% share of the market; these tools are used to compare AI inference hardware under repeatable, architecture-neutral test conditions.

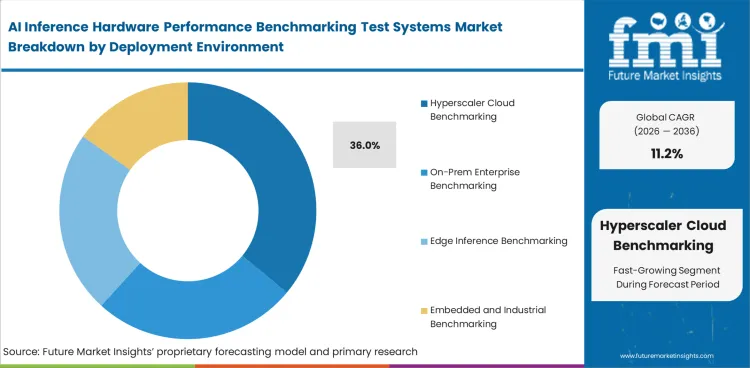

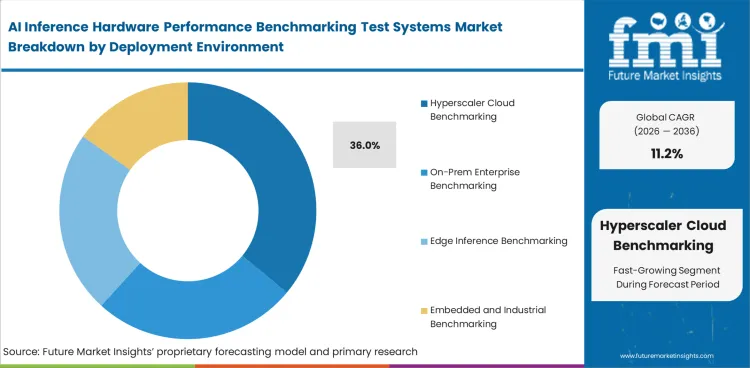

- Hyperscaler cloud benchmarking accounts for the largest share of demand at 36.0%, with large cloud environments requiring deeper validation of latency, throughput, and power efficiency before production deployment.

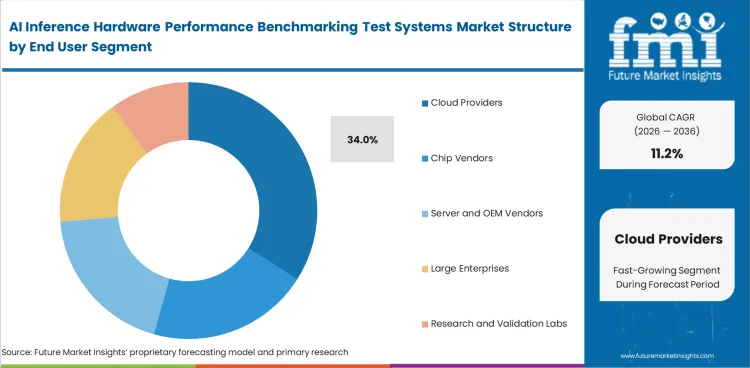

- Cloud providers lead end-user demand with 34.0% share, which reflects how benchmark systems are used for capacity planning, instance design, and infrastructure optimization.

The AI inference hardware performance benchmarking test systems market is segmented by Benchmark Type, Deployment Environment, End User, Hardware Under Test, and Region. Benchmark Type includes Standard Benchmark Suites, Application-Specific Benchmarks, Power and Performance Profiling, and Latency and SLA Validation Harnesses. Deployment Environment includes Hyperscaler Cloud Benchmarking, On-Prem Enterprise, Edge Inference, and Embedded and Industrial. End User includes Cloud Providers, Chip Vendors, Server and OEM Vendors, and Large Enterprises. Hardware Under Test includes GPUs, CPUs, NPUs and TPUs, FPGAs, and Custom ASIC Accelerators. Region includes North America, Latin America, Europe, East Asia, South Asia and Pacific, and Middle East and Africa.

Insights into the Standard Benchmark Suites Segment

- Standard benchmark suites are expected to account for 34.0% share in 2026 because they support comparable and repeatable measurement across platforms.

- These suites stay central to the market because public benchmark visibility and internal evaluation depend on consistent test conditions.

Insights into the Application-Specific Benchmarks Segment

- Application-specific benchmarks are expected to account for 28.0% share in 2026 because they align testing more closely with domain workloads and model behavior.

- These benchmarks support deeper tuning in production-oriented inference environments where real workload fit matters more.

Insights into the Power and Performance Profiling Segment

- Power and performance profiling is expected to account for 22.0% share in 2026. This segment matters because operators need clearer visibility into efficiency and cost per inference.

- Demand in this segment is supported by a rising focus on performance per watt under realistic load conditions.
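Performance per watt and cost per inference reduce to simple ratios once a profiling run has measured sustained throughput and average power draw over the same window. The Python sketch below shows that arithmetic under stated assumptions: the function names, the sample accelerator figures, and the electricity price are hypothetical, and the cost counts energy only (no hardware amortization, cooling, or facility overhead).

```python
# Hypothetical helpers that derive efficiency metrics from a profiling run.
# Assumption: throughput is in inferences per second and power is the average
# wall power in watts, both measured over the same workload and time window.

JOULES_PER_KWH = 3.6e6


def inferences_per_joule(throughput_ips: float, avg_power_w: float) -> float:
    """Performance per watt: (inferences/s) / (J/s) = inferences per joule."""
    if avg_power_w <= 0:
        raise ValueError("average power must be positive")
    return throughput_ips / avg_power_w


def joules_per_inference(throughput_ips: float, avg_power_w: float) -> float:
    """Energy per inference, the reciprocal of performance per watt."""
    if throughput_ips <= 0:
        raise ValueError("throughput must be positive")
    return avg_power_w / throughput_ips


def energy_cost_per_million(throughput_ips: float, avg_power_w: float,
                            price_per_kwh: float) -> float:
    """Electricity cost of one million inferences at an assumed price per kWh."""
    joules = joules_per_inference(throughput_ips, avg_power_w) * 1_000_000
    return joules / JOULES_PER_KWH * price_per_kwh


if __name__ == "__main__":
    # Hypothetical accelerator: 1,000 inferences/s at 500 W average draw,
    # with an assumed electricity price of 0.10 per kWh.
    print(inferences_per_joule(1000, 500))
    print(joules_per_inference(1000, 500))
    print(round(energy_cost_per_million(1000, 500, 0.10), 4))
```

These ratios are only comparable across accelerators when throughput and power come from the same workload and measurement window.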

Insights into the Hyperscaler Cloud Benchmarking Segment

- Hyperscaler cloud benchmarking is expected to account for 36.0% share in 2026 because cloud-scale inference environments require rigorous hardware comparison under large deployment loads.

- Benchmark intensity is highest in this segment because scale increases the cost impact of throughput and infrastructure efficiency.

Insights into the Cloud Providers Segment

- Cloud providers are expected to account for 34.0% share in 2026. This segment leads because providers use benchmark systems to guide instance design and platform planning.

- Demand in this segment is firm because benchmark visibility has direct value in service positioning and infrastructure efficiency.

AI Inference Hardware Performance Benchmarking Test Systems Market Drivers, Restraints, and Opportunities

- Expanding inference deployment across cloud and edge environments is increasing the volume and depth of benchmarking work for AI hardware.

- Complex validation environments can raise setup cost and infrastructure tuning requirements.

- Workload-realistic emulation and infrastructure-aware benchmarking are taking a larger role in inference hardware validation.

AI inference platforms are moving into wider production use across data centers and embedded systems, and rapid deployment of inference workloads across these settings is the main driver of market growth. MLCommons states that MLPerf Inference exists because the many combinations of hardware and software make reproducible assessment across architectures challenging. Together, wider deployment and this comparison problem are increasing demand for systems that can measure latency and accuracy under controlled benchmark conditions.
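Latency and SLA validation typically judges a system by a tail percentile of per-query latency rather than by the mean. The following Python sketch uses the nearest-rank percentile method; the 99th percentile, the latency bound, the sample data, and the function names are illustrative assumptions, not MLPerf Inference rules.

```python
import math
from typing import Sequence


def percentile_nearest_rank(samples_ms: Sequence[float], p: float) -> float:
    """Return the p-th percentile (0 < p <= 100) using the nearest-rank method."""
    if not samples_ms:
        raise ValueError("need at least one latency sample")
    if not 0 < p <= 100:
        raise ValueError("p must be in (0, 100]")
    ordered = sorted(samples_ms)
    # Nearest rank: the smallest sample with at least p% of samples at or below it.
    rank = math.ceil(p * len(ordered) / 100)
    return ordered[rank - 1]


def meets_latency_sla(samples_ms: Sequence[float], p: float, bound_ms: float) -> bool:
    """True if the p-th percentile latency is within the (assumed) SLA bound."""
    return percentile_nearest_rank(samples_ms, p) <= bound_ms


if __name__ == "__main__":
    # Hypothetical run: 100 queries with latencies of 1 ms through 100 ms.
    latencies = [float(ms) for ms in range(1, 101)]
    print(percentile_nearest_rank(latencies, 50))
    print(percentile_nearest_rank(latencies, 99))
    print(meets_latency_sla(latencies, 99, 100.0))
    print(meets_latency_sla(latencies, 99, 98.0))
```

The mean of these samples is 50.5 ms, which hides the slow tail that a latency or SLA harness is meant to expose.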

Input Cost and Integration Pressure

This market faces a restraint from setup cost and workflow complexity. Inference benchmarking environments often need benchmark suite alignment and infrastructure visibility across multiple system layers. MLCommons notes that the large number of hardware and software combinations makes representative benchmarking difficult. Configuration and integration effort can stay high for advanced deployments. This can slow adoption in smaller labs and enterprise environments with tighter technical capacity.

Opportunity in Power and Efficiency Benchmarking

MLCommons maintains a dedicated power benchmarking effort to enable reporting and comparison of energy consumption and performance across benchmarked systems. This gives power-focused test systems more commercial value as inference cost and rack efficiency become stronger decision factors.

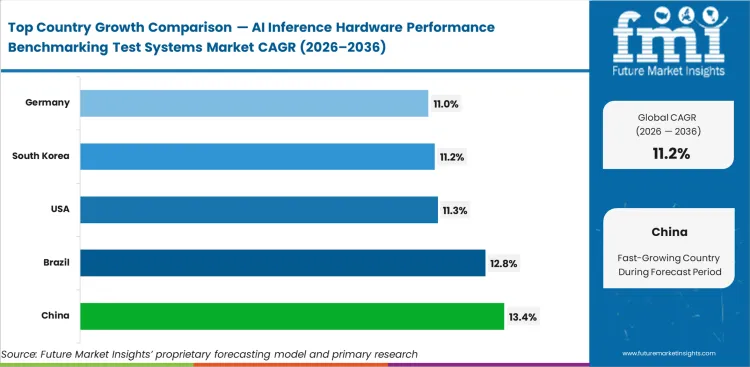

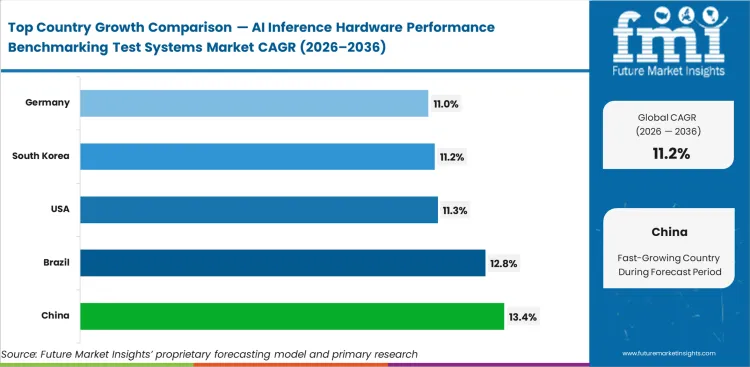

AI Inference Hardware Performance Benchmarking Test Systems Market CAGR Analysis by Country


| Country | CAGR (2026 to 2036) |
| --- | --- |
| China | 13.4% |
| Brazil | 12.8% |
| United States | 11.3% |
| South Korea | 11.2% |
| Germany | 11.0% |

Source: FMI analysis based on primary research and proprietary forecasting model.

AI Inference Hardware Performance Benchmarking Test Systems Market Analysis By Country

- China is expected to lead major country growth with a 13.4% CAGR through 2036. Brazil is projected to expand at a 12.8% CAGR.

- The United States is likely to record 11.3% CAGR during the same period.

- South Korea is forecast to post an 11.2% CAGR, and Germany is likely to grow at an 11.0% CAGR through 2036.

- The global market is projected to rise at a CAGR of 11.2% over the forecast period.
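A CAGR compounds over the forecast window, so modest gaps between country rates widen over ten years. The Python sketch below, assuming ten annual compounding periods from 2026 to 2036, converts each CAGR stated in this section into the implied 2036 multiple of the 2026 base. Country base values are not given in the report, so only relative multiples are computed; the names are hypothetical.

```python
# CAGRs as stated in the report's country analysis (2026 to 2036).
COUNTRY_CAGR = {
    "China": 0.134,
    "Brazil": 0.128,
    "United States": 0.113,
    "South Korea": 0.112,
    "Germany": 0.110,
}
GLOBAL_CAGR = 0.112
YEARS = 10  # assumption: ten annual compounding periods, 2026 -> 2036


def growth_multiple(cagr: float, years: int = YEARS) -> float:
    """Market size at the end of the window divided by size at the start."""
    return (1 + cagr) ** years


if __name__ == "__main__":
    # Print countries from fastest to slowest CAGR with their implied multiples.
    for country, rate in sorted(COUNTRY_CAGR.items(), key=lambda kv: -kv[1]):
        print(f"{country:<14} {rate:.1%}  x{growth_multiple(rate):.2f}")
    print(f"{'Global':<14} {GLOBAL_CAGR:.1%}  x{growth_multiple(GLOBAL_CAGR):.2f}")
```

Under this assumption, China's 13.4% rate compounds to roughly 3.5 times its 2026 size by 2036, against roughly 2.8 times for Germany at 11.0%.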

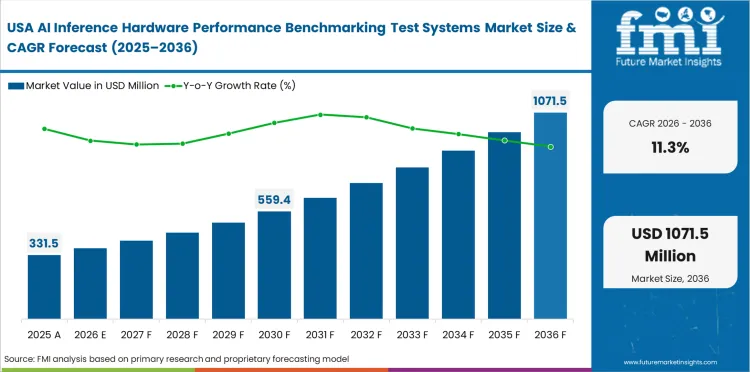

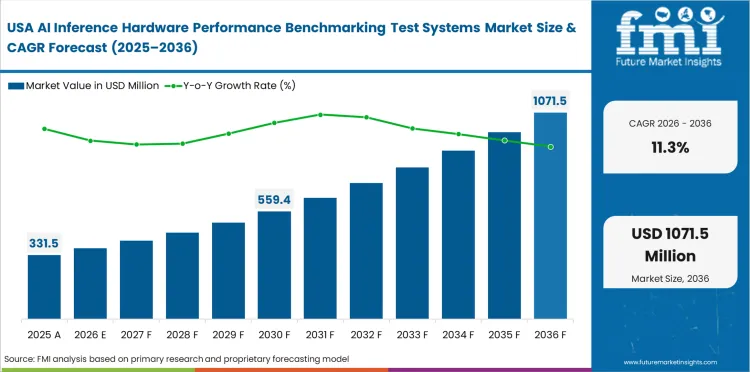

Demand Outlook for AI Inference Hardware Performance Benchmarking Test Systems Market in the United States

The United States AI inference hardware performance benchmarking test systems market is projected to grow at a CAGR of 11.3% through 2036. Demand is supported by platform refresh cycles and active vendor evaluation across cloud and enterprise inference infrastructure. Public benchmark visibility and infrastructure-scale validation are increasing the need for systems that measure throughput and efficiency under realistic workload conditions.

- Platform refresh cycles are increasing benchmark activity across inference hardware programs.

- Vendor evaluation keeps repeatable performance comparison important in this market.

- Infrastructure-scale validation is supporting deeper benchmark system use.

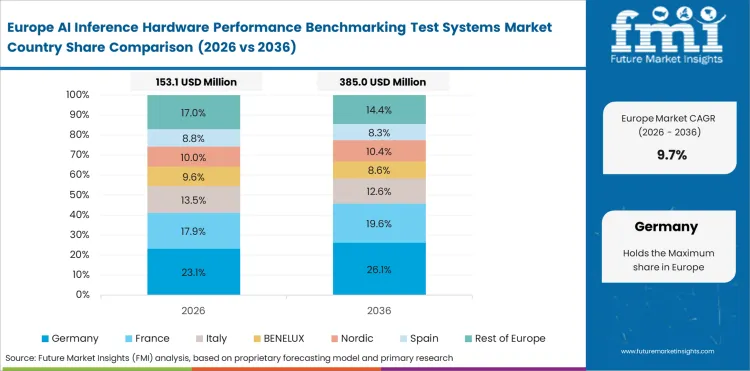

Opportunity Analysis of AI Inference Hardware Performance Benchmarking Test Systems Market in Germany

Germany is projected to grow at a CAGR of 11.0% through 2036. Demand is firm because advanced performance measurement capability and established hardware research activity support sustained benchmarking work across inference platforms. The market also benefits from industrial AI adoption, which raises the value of systems that can compare accelerators under stable and repeatable conditions.

- Advanced measurement capability supports stronger benchmark intensity in Germany.

- Hardware research activity keeps inference evaluation demand on a solid base.

- Industrial AI use is increasing the value of stable performance comparison.

In-Depth Analysis of AI Inference Hardware Performance Benchmarking Test Systems Market in South Korea

South Korea is expected to grow at a CAGR of 11.2% through 2036. Growth is driven by expanding technology markets and strong attention to testing accuracy across hardware sectors. This creates steady demand for premium benchmark systems that can verify performance consistency and infrastructure readiness before wider deployment.

- Precision standards support demand for repeatable benchmark systems.

- Expanding technology markets are widening inference validation activity.

- Testing accuracy remains central to hardware evaluation in South Korea.

Sales Analysis of AI Inference Hardware Performance Benchmarking Test Systems Market in China

China is projected to grow at a CAGR of 13.4% from 2026 to 2036. This market leads major country growth because domestic accelerator programs and large-scale deployments are expanding quickly across cloud and embedded inference environments. Benchmark demand is rising as operators and vendors need clearer comparison across architectures and power efficiency.

- Domestic accelerator programs support a wider local benchmarking base.

- Large-scale deployments are increasing the need for objective performance comparison.

- Power and workload validation are gaining more value across inference rollouts.

Future Outlook for AI Inference Hardware Performance Benchmarking Test Systems Market in Brazil

Brazil is projected to grow at a CAGR of 12.8% through 2036. Demand is gaining support from applied research and pilot production activity, which is creating structured benchmark adoption across emerging inference programs. Although the market is smaller in scale than those of China or the United States, its growth rate shows rising interest in controlled hardware comparison and validation discipline.

- Applied research activity is supporting local benchmark demand.

- Pilot production programs are increasing the need for hardware comparison.

- Validation discipline is improving across emerging inference deployments.

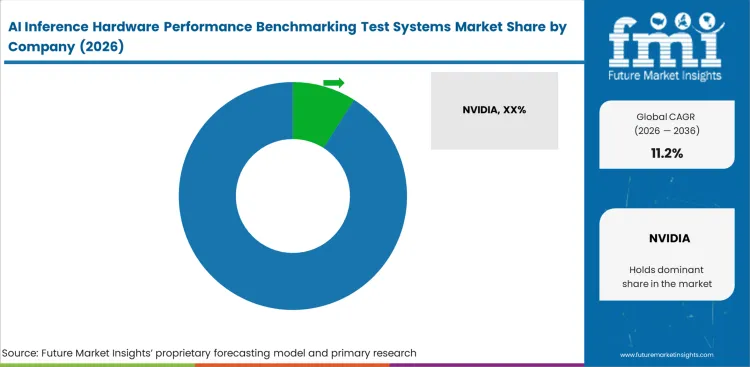

Competitive Landscape and Strategic Positioning

- The market remains moderately fragmented with a mix of benchmark framework participants and specialist infrastructure validation suppliers.

- Leading companies compete on infrastructure visibility and the ability to measure inference performance under production-like conditions.

- Specialist providers can still build strong positions where customers need inference-specific emulation or deeper telemetry aligned with live deployment behavior.

Competition in this market depends less on headline scale and more on technical fit inside AI inference validation workflows. Suppliers need to support workload diversity and repeatable performance analysis across accelerators and full-stack infrastructure. NVIDIA, AMD, Keysight Technologies, AWS, Google Cloud, Microsoft Azure, Dell Technologies, and Supermicro remain among the most visible participants.

Key Companies in the AI Inference Hardware Performance Benchmarking Test Systems Market

- Key global companies leading the AI inference hardware performance benchmarking test systems market include NVIDIA, AMD, Intel, Qualcomm, Arm, Keysight Technologies, Dell Technologies, and Supermicro.

- Amazon Web Services, Google Cloud, and Microsoft Azure are important market participants because large cloud platforms use benchmarking to guide instance design and inference infrastructure optimization.

- MLCommons’ MLPerf Inference framework continues to shape visibility and performance comparison across hardware vendors. Specialist validation players such as Keysight Technologies are gaining relevance too.

Competitive Benchmarking: AI Inference Hardware Performance Benchmarking Test Systems Market

| Company | Public Benchmark Visibility | Workload-Realistic Validation | Inference Infrastructure Depth | Geographic Footprint |
| --- | --- | --- | --- | --- |
| NVIDIA | High | High | Strong | Global |
| Intel | High | Medium | Strong | Global |
| AMD | High | Medium | Strong | Global |
| Keysight Technologies | Medium | High | Strong | Global |
| Amazon Web Services | Medium | High | Strong | Global |
| Google Cloud | Medium | High | Strong | Global |
| Microsoft Azure | Medium | High | Strong | Global |
| Dell Technologies | Medium | Medium | Strong | Global |
| Supermicro | Medium | Medium | Strong | Global |
| Qualcomm | Medium | Medium | Moderate | Global |

Key Developments in the AI Inference Hardware Performance Benchmarking Test Systems Market

- In April 2026, AMD highlighted its MLPerf Inference v6.0 results for Instinct MI355X systems and said the platform surpassed 1 million tokens per second in distributed inference.

- In March 2026, Keysight Technologies launched AI Inference Builder, an emulation and analytics platform designed to validate and optimize inference-focused AI infrastructure at scale.

Key Players in the AI Inference Hardware Performance Benchmarking Test Systems Market

- NVIDIA

- Intel

- AMD

- Qualcomm

- Arm

- Google Cloud

- Amazon Web Services

- Microsoft Azure

- Dell Technologies

- Supermicro

Emerging Players / Specialists

Scope of the Report

| Parameter | Details |
| --- | --- |
| Quantitative Units | USD million; USD 578.2 million in 2026 to USD 1,671.6 million in 2036, at a CAGR of 11.2% |
| Market Definition | Systems used to benchmark and validate AI inference hardware performance across latency and related production-oriented metrics under standardized or workload-aligned test conditions. |
| Regions Covered | North America, Latin America, Europe, East Asia, South Asia and Pacific, Middle East and Africa |
| Countries Covered | China, Brazil, United States, South Korea, Germany, and additional countries |
| Key Companies Profiled | NVIDIA, Intel, AMD, Qualcomm, Arm, Google Cloud, Amazon Web Services, Microsoft Azure, Dell Technologies, Supermicro, and Keysight Technologies |
| Forecast Period | 2026 to 2036 |
| Approach | Bottom-up specialist benchmarking and validation system sizing cross-checked against inference workload expansion and public supplier developments |
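The headline figures can be checked against each other: a CAGR is the constant annual rate that links a start value to an end value, (end / start)^(1 / years) - 1. The Python sketch below, assuming ten compounding years between 2026 and 2036, recovers the stated 11.2% from USD 578.2 million and USD 1,671.6 million and projects the 2036 value forward from the rounded rate. The function names are hypothetical.

```python
def implied_cagr(start_value: float, end_value: float, years: int) -> float:
    """Constant annual growth rate that turns start_value into end_value."""
    if start_value <= 0 or end_value <= 0 or years <= 0:
        raise ValueError("values and years must be positive")
    return (end_value / start_value) ** (1 / years) - 1


def project(start_value: float, cagr: float, years: int) -> float:
    """Value after compounding start_value at cagr for the given number of years."""
    return start_value * (1 + cagr) ** years


if __name__ == "__main__":
    start_2026, end_2036 = 578.2, 1671.6  # USD million, as stated in the report
    rate = implied_cagr(start_2026, end_2036, years=10)
    print(f"implied CAGR: {rate:.2%}")
    print(f"2036 value at a rounded 11.2%: {project(start_2026, 0.112, 10):.1f}")
```

Any small gap between the projected and stated 2036 values comes from rounding the CAGR to one decimal place.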

AI Inference Hardware Performance Benchmarking Test Systems Market by Segments

AI Inference Hardware Performance Benchmarking Test Systems Market by Benchmark Type

- Standard Benchmark Suites

- Application-Specific Benchmarks

- Power and Performance Profiling

- Latency and SLA Validation Harnesses

AI Inference Hardware Performance Benchmarking Test Systems Market by Hardware Under Test

- GPUs

- CPUs

- NPUs and TPUs

- FPGAs

- Custom ASIC Accelerators

AI Inference Hardware Performance Benchmarking Test Systems Market by Metric Focus

- Throughput

- Latency

- Power Efficiency

- Accuracy Retention

- Cost per Inference

- Thermal Stability

AI Inference Hardware Performance Benchmarking Test Systems Market by Deployment Environment

- Hyperscaler Cloud Benchmarking

- On-Prem Enterprise Benchmarking

- Edge Inference Benchmarking

- Embedded and Industrial Benchmarking

AI Inference Hardware Performance Benchmarking Test Systems Market by Model Workload

- Large Language Models

- Vision and Vision-Language Models

- Recommender Systems

- Speech and Audio Models

- Multimodal Generative Models

AI Inference Hardware Performance Benchmarking Test Systems Market by Validation Scope

- Chip-Level Benchmarking

- Board-Level Validation

- Server-Level Qualification

- Rack and Cluster Benchmarking

- Full-Stack Inference Infrastructure Validation

AI Inference Hardware Performance Benchmarking Test Systems Market by End User

- Cloud Providers

- Chip Vendors

- Server and OEM Vendors

- Large Enterprises

- Research and Validation Labs

AI Inference Hardware Performance Benchmarking Test Systems Market by Sales Channel

- Direct Sales

- System Integrators

- Software Subscriptions

- Managed Benchmarking Services

- Validation Contracts

AI Inference Hardware Performance Benchmarking Test Systems Market by Region

- North America

- Europe
  - Germany
  - United Kingdom
  - France
  - Rest of Europe

- East Asia

- South Asia

- Southeast Asia
  - Singapore
  - Rest of Southeast Asia

Research Sources and Bibliography

- Keysight Technologies. (2026, March 17). Keysight launches AI inference emulation platform to validate and optimize AI infrastructure.

- AMD. (2026, April 1). MLPerf 6.0: AMD Instinct™ MI355X GPUs surpass 1M tokens/sec, power new workloads and demonstrate distributed inference.

- Intel. (2025, May 19). Intel Gaudi 3 expands availability to drive AI innovation at scale.

This Report Addresses

- What is the current and future size of the AI inference hardware performance benchmarking test systems market?

- How fast is the market expected to grow between 2026 and 2036?

- Which benchmark type is likely to lead the market in 2026?

- Which deployment environment is expected to account for the highest demand in 2026?

- What factors are driving market demand globally?

- How are benchmark standardization and workload realism influencing system demand?

- Why are cloud providers expected to remain a major demand center?

- Which countries are projected to show the fastest growth through 2036?

- What is driving expansion in China, Brazil, and the United States?

- Who are the key companies active in the market?

- How does FMI estimate and validate the market forecast?

Frequently Asked Questions

What is the global market value in 2026?

The market is estimated at USD 578.2 million in 2026.

How large is the market expected to be by 2036?

The market is projected to reach USD 1,671.6 million by 2036.

What is the CAGR from 2026 to 2036?

The market is expected to grow at a CAGR of 11.2% over the forecast period.

Which benchmark type leads demand?

Standard benchmark suites are projected to lead with 34.0% share in 2026.

Which deployment environment leads demand?

Hyperscaler cloud benchmarking is projected to hold 36.0% share in 2026.

Which customer group leads demand?

Cloud providers are expected to account for 34.0% of demand in 2026.

Which countries show the fastest growth?

Among the countries covered, China (13.4% CAGR) and Brazil (12.8%) show the fastest growth, followed by the United States (11.3%), South Korea (11.2%), and Germany (11.0%).
