Healthcare Data De-Identification Assurance Market

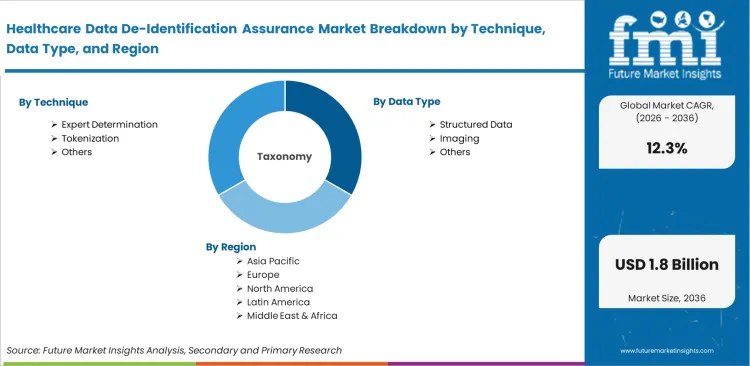

The Healthcare Data De-Identification Assurance Market Is Segmented By Technique (Expert Determination, Safe Harbor, Tokenization, Synthetic Data, Hybrid Methods), Data Type (Structured Data, Clinical Text, Imaging, Claims Data, Multi-Modal Data), Deployment (Cloud, On-Premise, Hybrid), Buyer Type (Providers, Life Sciences, Payers, Research Institutes, Registries), Assurance Layer (Software, Expert Services, Risk Scoring, Audit Evidence, Monitoring), And Region. Forecast For 2026 To 2036.

Historical Data Covered: 2016 to 2024 | Base Year: 2025 | Estimated Year: 2026 | Forecast Period: 2027 to 2036

Healthcare Data De-Identification Assurance Market Size, Market Forecast and Outlook By FMI

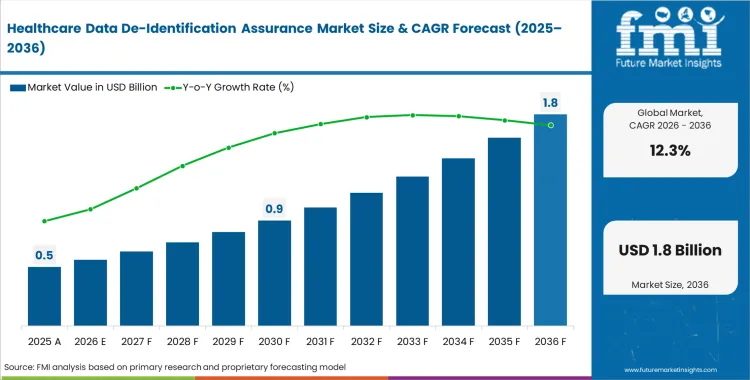

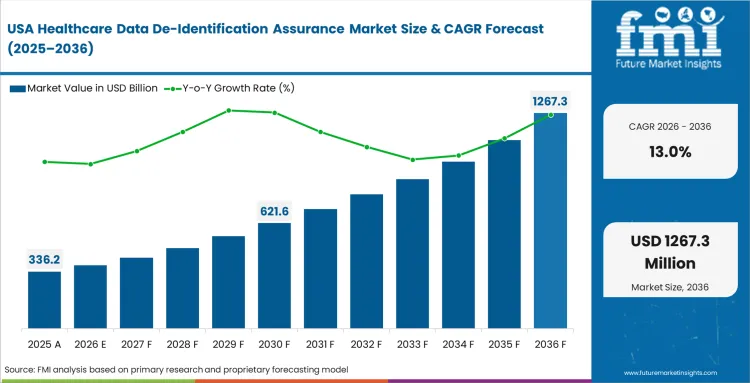

The healthcare data de-identification assurance market was valued at USD 0.4 billion in 2025. Revenue is expected to reach USD 0.5 billion in 2026, growing at a CAGR of 12.3% through 2036. The market is projected to reach USD 1.6 billion by 2036, as clinical research increasingly requires proof of privacy rather than basic field removal.
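The headline figures can be cross-checked with the standard compound-growth formula. The sketch below assumes the 12.3% CAGR compounds annually from the 2026 estimate over the ten years to 2036. The function names are illustrative and are not taken from the FMI model.

```python
def project(value: float, cagr: float, years: int) -> float:
    """Compound a starting value forward at a constant annual growth rate."""
    return value * (1 + cagr) ** years


def implied_cagr(start: float, end: float, years: int) -> float:
    """Solve end = start * (1 + r) ** years for the annual rate r."""
    return (end / start) ** (1 / years) - 1


# Figures quoted in the text: USD 0.5 billion in 2026, 12.3% CAGR, 2036 horizon.
years = 2036 - 2026
value_2036 = project(0.5, 0.123, years)
print(f"Projected 2036 value: USD {value_2036:.2f} billion")

# The rounded endpoints imply a rate close to the quoted CAGR.
rate = implied_cagr(0.5, 1.6, years)
print(f"CAGR implied by rounded endpoints: {rate:.1%}")
```

Because the published values are rounded to one decimal place, the check is only expected to agree approximately with the quoted USD 1.6 billion and 12.3%.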

Summary of Healthcare Data De-Identification Assurance Market

- Healthcare Data De-Identification Assurance Market Definition

- This sector provides the formal verification and risk scoring needed to prove that healthcare datasets have been sufficiently anonymized for secondary use. It connects basic data masking to legally defensible HIPAA de-identification by providing statistical evidence and independent audit trails.

- Demand Drivers in the Market

- Secondary data monetization requirements force healthcare digital transformation executives to provide verifiable privacy certificates to data buyers.

- The need for de-identification for NIH data sharing compels R&D directors to anonymize patient-level data for public disclosure without risking participant privacy.

- Machine learning training needs push data scientists to protect diagnostic image privacy for AI training using high-dimensional assurance protocols.

- Key Segments Analyzed in the FMI Report

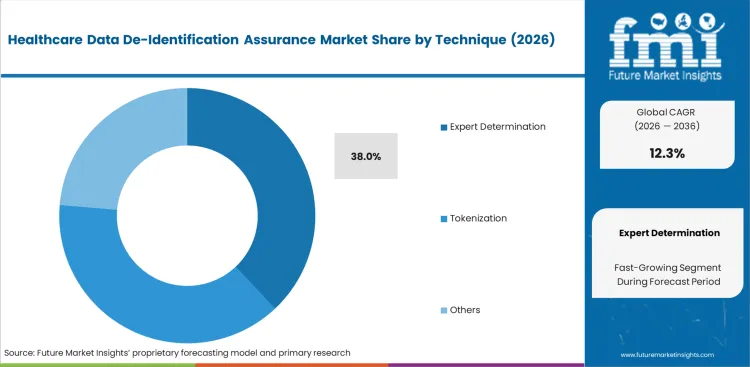

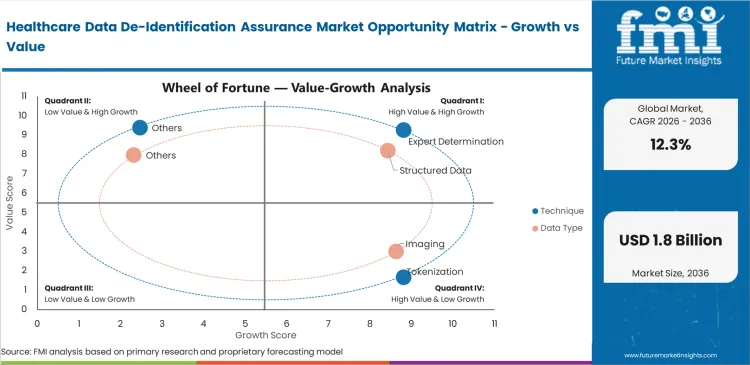

- Expert Determination: 38.0% share in 2026, driven by the shift from safe harbor toward expert determination and its mathematical risk modeling.

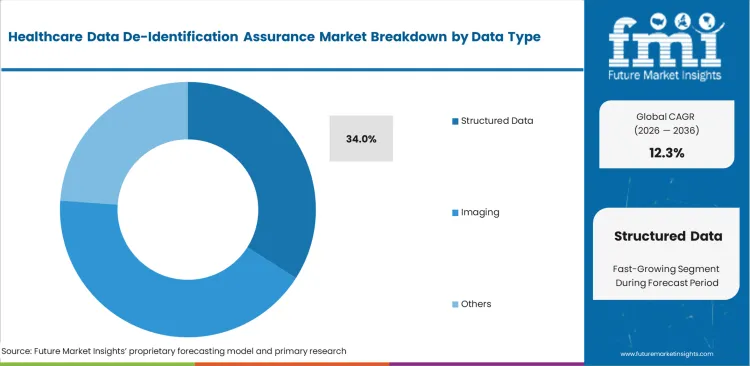

- Structured Data: 34.0% share in 2026, as EHR de-identification platform interoperability increases the volume of exchangeable clinical fields.

- Cloud: 44.0% share in 2026, reflecting the need for scalable medical imaging software validation in distributed networks.

- Providers: 32.0% share in 2026, as healthcare providers' demand for de-identification software increases for population health analytics.

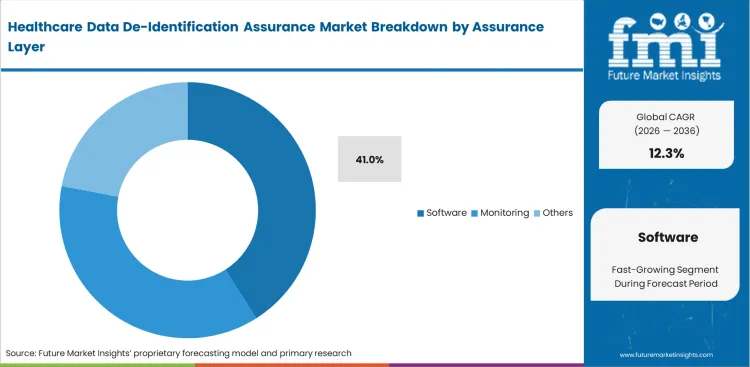

- Software: 41.0% share in 2026, as imaging interoperability middleware and hospital PHI redaction automation replace manual reviews.

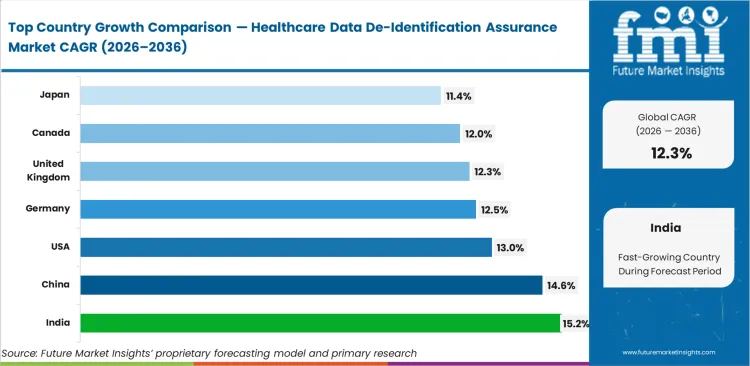

- India: 15.2% CAGR, fueled by expansion of the India healthcare anonymization market and digitized public health records.

- Analyst Opinion at FMI

- Sabyasachi Ghosh, Principal Analyst, Healthcare, at FMI, points out, "Healthcare organizations are navigating an incentive misalignment where the technical ability to share data has far outpaced the statistical ability to maintain data utility after de-identification. While many assume that removing names and dates is sufficient, the reality is that re-identification risk in healthcare AI remains high due to simple linkage. We are seeing the assurance layer shift from a one-time 'seal of approval' to a continuous requirement, especially as health data tokenization and healthcare interoperability solutions become the standard for large-scale medical research."

- Strategic Implications / Executive Takeaways

- Privacy Officers should implement a healthcare anonymization software stack to replace manual 'point-in-time' audits for data streams.

- Data Engineers at life science firms need an alternative to manual PHI redaction to avoid research delays in pharma RWD de-identification.

- C-suite executives at provider organizations need a shortlist of healthcare de-identification vendors to speed up value extraction from electronic health records in the U.S.

- Methodology

- Primary research highlights the specific roles of Data Privacy Officers and Medical Informatics Leads in defining how to validate de-identification quality.

- Desk research utilizes electronic medical records certification registries to map the penetration of de-identification software in hospitals.

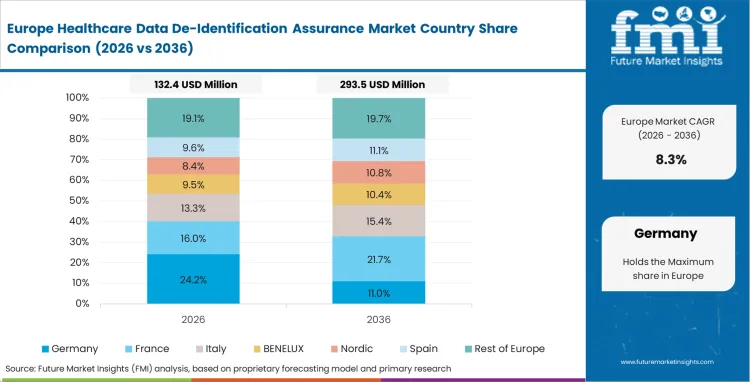

- Market-sizing anchors on the volume of secondary use requests in the Germany health data de-identification market.

- Data validation is achieved through tracking procurement cycles in global digital health and healthcare de-identification RFP activity.

Chief Information Security Officers at health systems are evaluating healthcare de-identification software as they balance compliance demands with the need to preserve data utility. Adoption of privacy enhancing technology has moved from a defensive legal posture to an offensive strategy for privacy-preserving health data sharing. Organizations realize that the commercial stakes of a re-identification event include the total loss of secondary data licensing revenue, making healthcare de-identification trends shift toward continuous risk monitoring. Healthcare privacy engineering is now being managed separately from de-identification so audit trails stay clear and unbiased.

Life sciences firms reach a turning point when they move from project-based anonymization to a company-wide data pipeline built on a patient data de-identification platform. Once research heads mandate automated real world evidence solutions for observational studies, the assurance layer becomes a permanent infrastructure requirement. This transition from manual expert review to a robust healthcare de-identification audit trail defines the next decade of market behavior.

India leads at a 15.2% CAGR, while China tracks at 14.6% on the back of stringent personal information protection laws. The US healthcare de-identification assurance market is set to grow at 13.0%, followed by Germany at 12.5% and the United Kingdom at 12.3%. Canada is projected to reach 12.0% and Japan is forecast to expand at 11.4%. Structural divergence is widening between markets that favor the safe harbor approach to healthcare de-identification and those that mandate HIPAA expert determination vendor services.

Healthcare Data De-Identification Assurance Market Definition

What is healthcare data de-identification assurance?

Functional boundaries involve the independent validation and mathematical certification of data privacy protocols applied to protected health information. This is not the act of masking data, but the forensic assurance provided by de-identification assurance services that a resulting dataset carries a negligible risk of re-identification. Verification includes checking for membership inference and linkage attacks within high-dimensional clinical datasets.
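One simple way to picture a linkage-risk check is to group records by their quasi-identifiers and look at the smallest group. The sketch below is only an illustration under stated assumptions: the column names and toy cohort are hypothetical, and the "1 / smallest class size" measure is a common textbook proxy (k-anonymity), not the method used by any vendor named in this report.

```python
from collections import Counter


def equivalence_classes(records, quasi_identifiers):
    """Count how many records share each combination of quasi-identifier values."""
    return Counter(tuple(r[q] for q in quasi_identifiers) for r in records)


def linkage_risk(records, quasi_identifiers):
    """Return (k, max_risk, unique_fraction) for a dataset.

    k is the size of the smallest equivalence class, max_risk is 1 / k,
    and unique_fraction is the share of records that are alone in their class.
    """
    classes = equivalence_classes(records, quasi_identifiers)
    k = min(classes.values())
    unique = sum(1 for n in classes.values() if n == 1)
    return k, 1 / k, unique / len(records)


# Hypothetical toy cohort; real checks run over far more columns and rows.
records = [
    {"age_band": "30-39", "zip3": "021", "sex": "F", "dx": "asthma"},
    {"age_band": "30-39", "zip3": "021", "sex": "F", "dx": "diabetes"},
    {"age_band": "40-49", "zip3": "021", "sex": "M", "dx": "asthma"},
    {"age_band": "40-49", "zip3": "021", "sex": "M", "dx": "copd"},
    {"age_band": "80-89", "zip3": "945", "sex": "F", "dx": "rare disease"},
]

k, max_risk, unique_fraction = linkage_risk(records, ["age_band", "zip3", "sex"])
print(f"k={k}, max risk={max_risk:.2f}, unique records={unique_fraction:.0%}")
```

In this toy cohort the single record in the 80-89 age band is unique on its quasi-identifiers, which is the kind of outlier an assurance review would flag before release.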

Healthcare Data De-Identification Assurance Market Inclusions

Scope covers clinical data anonymization software, third-party expert services, and clinical data provenance management tools. It includes automated monitoring for drift in re-identification risk when new longitudinal data is appended. The analysis covers specialized DICOM de-identification software and integration layers used with healthcare business intelligence systems.

Healthcare Data De-Identification Assurance Market Exclusions

General cybersecurity tools like firewalls and basic access controls are excluded as they do not address medical data anonymization specifically. Standard data masking for software testing that does not involve clinical research or de-identification for FDA real world data is outside this functional scope. Hardware-based trusted execution environments are considered infrastructure rather than a dedicated assurance layer.

Healthcare Data De-Identification Assurance Market Research Methodology

- Primary Research: Interviews with Privacy Officers, Clinical Data Architects, and a HIPAA expert determination service provider.

- Desk Research: Review of healthcare master data management registries, NIST privacy frameworks, and a checklist for HIPAA de-identification software.

- Market-Sizing and Forecasting: Analysis of clinical trial data management service volume and secondary data licensing transaction frequency.

- Data Validation and Update Cycle: Cross-verification against privacy-tech venture capital flow and healthcare anonymization software pricing trends.

Segmental Analysis

Healthcare Data De-Identification Assurance Market Analysis By Technique

Displacement of traditional methods is accelerating as artificial intelligence in healthcare increases the risk of re-identification attacks that simple redaction cannot prevent. Expert determination holds 38.0% share, reflecting stronger preference among Privacy Officers for mathematical risk modeling over rule-based approaches. Clinical Data Architects are also weighing de-identification against synthetic data in healthcare as they try to preserve research utility without carrying forward the risks tied to original patient records. Decisions around tokenization and anonymization now sit at the center of secure research environment design, since overly rigid techniques can result in data-swamping and leave datasets with little practical research value.

- Risk-Calibration Lock-In: Once a Privacy Officer validates an expert determination model, switching techniques requires a total re-baselining of the risk threshold. Compliance leads treat this re-validation cycle as a structural cost that favors an established expert determination HIPAA vendor.

- Utility-Privacy Trade-Off: Healthcare IT outsourcing firms utilize health data tokenization to link disparate datasets while maintaining privacy assurance. Data Scientists face a consequence where high-security tokenization can break temporal relationships if the assurance layer is not properly tuned.

- Synthetic Verification Cycle: Synthetic data for healthcare privacy adoption follows a phase where researchers validate the statistical distribution of the fake data against the real cohort. R&D directors gain a commercial advantage by utilizing a healthcare synthetic data platform to bypass patient consent for subsequent analysis.
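The tokenization trade-off described above can be sketched in a few lines. This example assumes a keyed hash (HMAC-SHA256) as the token function and a single per-patient date shift so that intervals between events survive. The key, the 16-character truncation, the 180-day window and all function names are illustrative assumptions, not a description of any vendor's tokenization engine.

```python
import hashlib
import hmac
from datetime import date, timedelta

# Assumption: in practice the key would come from a managed secret store.
SECRET_KEY = b"demo-key-not-for-production"


def tokenize(patient_id: str, key: bytes = SECRET_KEY) -> str:
    """Derive a stable pseudonymous token from a patient identifier."""
    digest = hmac.new(key, patient_id.encode("utf-8"), hashlib.sha256)
    return digest.hexdigest()[:16]


def date_offset(patient_id: str, key: bytes = SECRET_KEY, max_days: int = 180) -> timedelta:
    """Derive one fixed shift per patient in the range [-max_days, +max_days]."""
    digest = hmac.new(key, b"shift:" + patient_id.encode("utf-8"), hashlib.sha256).digest()
    days = int.from_bytes(digest[:4], "big") % (2 * max_days + 1) - max_days
    return timedelta(days=days)


def deidentify_event(patient_id: str, event_date: date) -> tuple[str, date]:
    """Replace the identifier with a token and shift the date by the patient's offset."""
    return tokenize(patient_id), event_date + date_offset(patient_id)


admit = deidentify_event("MRN-001", date(2024, 3, 1))
discharge = deidentify_event("MRN-001", date(2024, 3, 8))

# Same patient gives the same token, and the 7-day length of stay is preserved.
print(admit[0] == discharge[0], (discharge[1] - admit[1]).days)
```

Shifting every date of a patient by the same offset keeps temporal relationships intact. Drawing an independent random shift per event would hide the dates equally well but would break the intervals that longitudinal research depends on.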

Healthcare Data De-Identification Assurance Market Analysis By Data Type

Data decisions are becoming more difficult as the focus shifts from structured data to unstructured clinical notes. Structured data accounts for 34.0% of the market, while healthcare cloud systems are increasingly being used to process clinical note de-identification through NLP. Clinical informatics leads know these tools must catch hidden identifiers, such as a surgery date tied to a rare disease. Radiology is becoming harder to manage because face recognition can now work on 3D skull reconstructions. Hospitals that delay DICOM de-identification may face compliance issues across stored imaging data.

- Unstructured-Text Friction: Can de-identified clinical notes be re-identified? Yes, if the software cannot distinguish between a patient's address and a medical procedure with similar phonetic patterns. Informatics Directors must use secondary assurance layers to audit their clinical text de-identification tool performance.

- Pixel-Level Anonymization: How do hospitals de-identify imaging data for AI? It depends on removing patient details from DICOM headers and burned-in annotations from pixel data. Biomedical Engineers at hospital systems face consequences where improper radiology de-identification during PACS migration leads to the rejection of data.

- Multi-Modal Linkage Risk: Claims data de-identification combined with EHR data creates a re-identification risk that traditional platforms cannot quantify. Bioinformaticians must use privacy-preserving linkage healthcare protocols to certify that the intersection of these high-dimensional datasets does not create a unique 'fingerprint'.

Healthcare Data De-Identification Assurance Market Analysis By Assurance Layer

The assurance layer is moving from manual consulting to continuous software monitoring. Software holds a 41.0% share because it can score risk in real time as data enters clinical AI governance systems. Security operations managers know a one-time audit is not enough when underlying data keeps changing. The audit trail often matters as much as the de-identification itself because it gives organizations a record to show regulators later. Buyers that choose only basic anonymization software may struggle to prove compliance in the future.

- Automated Risk Triggers: Software-led assurance layers utilize automated triggers to alert Privacy Officers when a dataset's re-identification risk crosses a statistical threshold. Operations Managers avoid the hidden costs of manual re-auditing by maintaining a constant 'green' status on their privacy dashboard.

- Audit-Trail Durability: Audit evidence modules provide a cryptographically signed record of every transformation applied to a dataset. Compliance Auditors gain the ability to prove 'due diligence' to federal agencies, so the ROI of healthcare de-identification automation shows up as legal risk mitigation.

- Expert-Service Validation: De-identification assurance services remain a requirement for novel datasets that do not fit into established radiology structured reporting automation templates. Consultancy directors must qualify their statistical methods against established frameworks to ensure their 'expert' status is recognized.
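The idea of a cryptographically signed record of every transformation can be illustrated with a hash-chained log. The sketch below is a minimal example under stated assumptions: it uses HMAC-SHA256 with a shared demo key as a stand-in for a signature (a production system would more plausibly use asymmetric signatures and managed keys), and the step names and entry fields are hypothetical.

```python
import hashlib
import hmac
import json

# Assumption: a demo key; real deployments would use managed signing keys.
SIGNING_KEY = b"demo-audit-key"


def _sign(body: dict, key: bytes) -> str:
    """Compute an HMAC over a canonical JSON encoding of the entry body."""
    payload = json.dumps(body, sort_keys=True).encode("utf-8")
    return hmac.new(key, payload, hashlib.sha256).hexdigest()


def append_entry(log: list, step: str, params: dict, key: bytes = SIGNING_KEY) -> dict:
    """Append a transformation record chained to the previous entry's signature."""
    prev = log[-1]["signature"] if log else ""
    body = {"index": len(log), "step": step, "params": params, "prev": prev}
    entry = dict(body, signature=_sign(body, key))
    log.append(entry)
    return entry


def verify(log: list, key: bytes = SIGNING_KEY) -> bool:
    """Recompute every signature and chain link; edits or reordering fail the check."""
    prev = ""
    for i, entry in enumerate(log):
        body = {name: entry[name] for name in ("index", "step", "params", "prev")}
        if body["index"] != i or body["prev"] != prev:
            return False
        if not hmac.compare_digest(_sign(body, key), entry["signature"]):
            return False
        prev = entry["signature"]
    return True


audit_log = []
append_entry(audit_log, "drop_direct_identifiers", {"fields": ["name", "mrn"]})
append_entry(audit_log, "generalize_dates", {"granularity": "year"})
print(verify(audit_log))
```

Because each entry embeds the previous entry's signature, changing or removing an earlier step invalidates everything after it, which is what makes such a trail useful as evidence long after the export took place.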

Healthcare Data De-Identification Assurance Market Drivers, Restraints, and Opportunities

Commercial stakes for delayed data sharing are forcing Healthcare Providers to act on healthcare de-identification trends now. The pressure is coming from the need to feed healthcare AI computer vision models with vast quantities of verified data. Chief Data Officers realize that every month of delay in qualifying a dataset for research costs them potential revenue, prompting them to seek the best healthcare de-identification tools.

The fundamental structural friction slowing adoption is the perceived trade-off between privacy and data utility. Researchers fear that rigorous healthcare anonymization tools will 'blanket' too much information, making the dataset useless for granular analysis. This persists because the math behind 'differential privacy' is often counter-intuitive to medical researchers, leading to internal organizational friction between the privacy team and the clinical team.
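The privacy-utility trade-off behind differential privacy is easier to see with numbers. The sketch below applies the standard Laplace mechanism to a simple counting query, where noise is drawn with scale 1/epsilon because adding or removing one patient changes a count by at most 1. The cohort size, epsilon values and function names are illustrative assumptions; the report does not describe a specific mechanism.

```python
import random


def laplace_noise(scale: float, rng: random.Random) -> float:
    """Sample Laplace(0, scale) as the difference of two exponential draws."""
    return rng.expovariate(1 / scale) - rng.expovariate(1 / scale)


def private_count(true_count: int, epsilon: float, rng: random.Random) -> float:
    """Release a count with epsilon-differential privacy (sensitivity 1)."""
    return true_count + laplace_noise(1.0 / epsilon, rng)


def mean_abs_error(true_count: int, epsilon: float, trials: int, rng: random.Random) -> float:
    """Average absolute distortion of the released count over many releases."""
    total = sum(abs(private_count(true_count, epsilon, rng) - true_count) for _ in range(trials))
    return total / trials


rng = random.Random(0)
errors = {eps: mean_abs_error(120, eps, 2000, rng) for eps in (0.1, 1.0, 10.0)}
for eps, err in errors.items():
    print(f"epsilon={eps}: mean absolute error ~ {err:.2f}")
```

A smaller epsilon means stronger privacy and a noisier answer, with expected absolute error of about 1/epsilon for a count. A cohort of 120 patients is barely affected at epsilon 1.0, while a count of 3 patients with a rare disease would be swamped by the same noise, which is the utility loss researchers worry about.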

- Synthetic-Clinical Crossovers: Using synthetic data in place of de-identified data for AI evaluation allows research organizations to bypass many de-identification assurance hurdles while maintaining statistical validity for training.

- Stream Assurance For IoT: Providing continuous FHIR-based de-identification of IoT data streams opens new markets in remote patient monitoring and wearable analytics.

- Cross-Border Compliance Mapping: Automated assurance layers that can 'translate' HIPAA requirements into GDPR-equivalent risk scores enable easier global collaboration for UK clinical data anonymization projects.

Regional Analysis

The geographical trajectory of the healthcare data de-identification assurance market is defined by a distinct shift from manual privacy compliance to automated, high-precision risk certification across forty-plus countries. This global expansion is anchored by the necessity for privacy-preserving health data sharing within localized regulatory frameworks that demand mathematically verifiable results.


| Country | CAGR (2026 to 2036) |
|---|---|
| India | 15.2% |
| China | 14.6% |
| United States | 13.0% |
| Germany | 12.5% |
| United Kingdom | 12.3% |
| Canada | 12.0% |
| Japan | 11.4% |

Source: Future Market Insights (FMI) analysis, based on proprietary forecasting model and primary research

North America Healthcare Data De-Identification Assurance Market Analysis

In the United States, privacy expectations have moved beyond basic guidance. Health systems are now expected to show stronger evidence that de-identification standards have been met. The region leads globally because many providers have built established operations for using electronic health records in research partnerships. Large hospital buyers are also moving past simple name removal and looking for de-identification platforms that can support and document each research export. These organizations want data that remains useful for long-term research while still meeting HIPAA expert determination standards. Automated risk-scoring tools are helping them expand data-sharing activity without adding more manual work for privacy teams.

- United States: The U.S. market is growing at 13.0% CAGR, supported by the OCR issuing clearer technical guidance on the expert determination method. Privacy Officers at academic medical centers must provide statistical proof of anonymization, as federal research grants require this documentation. The necessity for robust audit trails has driven the adoption of automated software that can handle complex multi-modal datasets. Large-scale health systems are increasingly viewing de-identification as a core infrastructure requirement rather than a peripheral compliance task. To maintain their competitive edge, these organizations are investing in high-end assurance layers that provide real-time monitoring of re-identification risks.

- Canada: Data Architects at Canadian research hubs must implement localized healthcare anonymization tools that satisfy both provincial health acts and federal PIPEDA requirements. Canada is projected to grow at 12.0% CAGR through 2036, driven largely by provincial health data laws in places like Ontario that require de-identification to be completed locally before data crosses provincial or national borders. This structural requirement ensures that patient trust is maintained while local AI innovation thrives within secure environments. As a result, Canadian providers are increasingly seeking vendors who offer specialized support for bilingual clinical text and unique regional data formats.

As per FMI’s assessments, hospital administrators are moving beyond basic redaction to implement comprehensive de-identification assurance services that satisfy the most rigorous federal audit requirements. Ultimately, the maturity of this region is evidenced by the seamless integration of privacy risk scoring into the daily operational workflows of Tier-1 medical centers.

Asia-Pacific Healthcare Data De-Identification Assurance Market Analysis

Infrastructure-led digitization across India and China is currently generating the most extensive patient data pools in the world, though accessing them requires sophisticated new assurance frameworks. A structural gate has been created by China’s PIPL, requiring that imaging AI data privacy software prove training data cannot be reversed to individual citizens. Governments in the area are actively promoting standardized de-identification protocols to facilitate cross-border research collaborations and pharmaceutical trials. As clinical data becomes a primary national asset, the focus has shifted toward building indigenous assurance technologies that can scale with massive population health initiatives.

- India: The India healthcare anonymization market is projected to grow at a 15.2% CAGR as the National Digital Health Mission creates a requirement for automated assurance at scale. IT Directors at healthcare outsourcing firms gain a massive commercial advantage by being the first to offer Western clients services on par with top HIPAA de-identification vendors. The rapid adoption of electronic health records in tier-2 cities is further broadening the demand for scalable, cost-effective anonymization platforms. Strategic investments in privacy-enhancing technologies are allowing Indian tech firms to pivot from basic data entry to high-value medical informatics.

- China: Operations Managers at Chinese biotech firms must navigate a practitioner reality where 'privacy' is often a prerequisite for clinical trial permits. China’s healthcare data de-identification assurance market is projected to grow at a CAGR of 14.6%, driven by government-backed health data platforms and stricter requirements around privacy-preserving technologies. This stringent regulatory environment is forcing vendors to innovate in the areas of synthetic data and secure multi-party computation. Competitive dynamics are shifting as domestic champions develop assurance layers that are deeply integrated with national health cloud infrastructures.

- Japan: Informatics leads at Japanese research institutes are prioritizing hybrid assurance methods that combine medical imaging software masking with strict access-control audits. Japan’s healthcare data de-identification assurance market is likely to advance at an 11.4% CAGR through 2036, supported by growing demand for secure data sharing in elderly care and clinical research. The country’s commitment to precision medicine relies heavily on the ability to securely share genomic and clinical data across institutional boundaries. As a result, Japanese vendors are focusing on high-accuracy NLP tools for de-identifying traditional medical records.

FMI's analysis indicates that developing indigenous healthcare anonymization tools has become a core focus for IT leaders across India and China to support massive public health initiatives. These nations increasingly mandate that any artificial intelligence in healthcare development must be anchored by mathematically proven privacy protocols to ensure long-term public trust. This trajectory ensures that regional data assets are protected against emerging re-identification threats while maximizing their utility for global pharmaceutical trials.

Europe Healthcare Data De-Identification Assurance Market Analysis

In Europe, GDPR rules shape how the market develops because the legal difference between anonymization and pseudonymization has direct commercial consequences for organizations sharing health data. The European Medicines Agency (EMA) has set a high bar for de-identifying risk management plans, forcing clinical trial data management services across the continent to rethink their approach. European healthcare providers are increasingly adopting decentralized data architectures to minimize the risk of large-scale re-identification events. This structural shift requires assurance layers that can operate across disparate jurisdictions while maintaining a unified audit trail. The push for European 'Data Spaces' is further accelerating the demand for standardized risk-scoring models that can be universally accepted by national regulators.

- Germany: Germany’s health data de-identification assurance market is expected to grow at a 12.5% CAGR through 2036, as the Digital Healthcare Act permits secondary use of insurance data when the assurance layer meets BSI standards. Chief Medical Officers face a competitive positioning challenge where their participation in 'European Data Spaces' depends on their healthcare de-identification software maturity. The German market is characterized by a strong preference for on-premise solutions that offer maximum control over the data transformation process. By integrating de-identification into the core EHR workflow, German providers are effectively reducing the administrative friction associated with data sharing.

- United Kingdom: Data Scientists at UK-based life science startups must design their healthcare cloud infrastructure to meet 'Gold Standard' anonymization protocols to win NHS contracts. The UK clinical data anonymization market is likely to advance at a 12.3% CAGR through 2036, supported by the NHS Transformation Directorate’s push for trusted research environments with assurance built into the platform. This specific rate reflects the ambitious goal of the UK to become a global hub for genomic and clinical AI research. These research environments are designed to provide high-utility data to researchers while ensuring that patient identities remain mathematically protected. As a result, UK health trusts are seeking automated redaction tools that can handle the complexities of diverse clinical narratives.

FMI assesses that the ongoing evolution of the healthcare cloud infrastructure enables these institutions to maintain localized control over sensitive records while participating in continent-wide research pools. By adopting standardized risk-scoring models, German and British researchers are successfully reducing the legal friction associated with secondary data utilization. This structural commitment to 'Privacy by Design' positions Europe as a leader in ethically grounded medical informatics and long-term genomic studies.

Competitive Aligners for Market Players

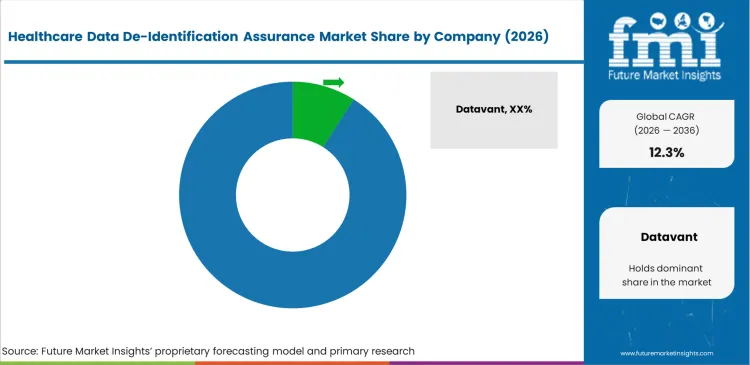

Concentration in this sector is currently moderate at the technology tier but highly fragmented at the expert service tier. Incumbent providers like Datavant and IQVIA Privacy Analytics have built significant moats through their proprietary health data tokenization ecosystems and deep healthcare IT outsourcing relationships. These players do not just provide software; they provide a 'network effect' where data from different providers can be joined because it has been assured through the same engine.

Startups such as TripleBlind are attempting to disrupt this by offering healthcare PETs (privacy-enhancing technologies) that avoid de-identification altogether by keeping data behind a firewall. However, incumbents maintain an advantage through their vast 'qualification libraries', the statistical evidence they have built over thousands of successful regulatory submissions. For a new entrant, replicating this library of 'proven-safe' models is a greater barrier than the actual coding of the clinical data anonymization software.

Buyer power is increasingly consolidated among large pharmaceutical companies and hospital consortia that are tired of 'vendor lock-in.' These entities are pushing for open-standard risk scoring and want to know how to choose a healthcare de-identification vendor that allows them to move data between different platforms without losing the audit trail. Competitive battlegrounds are shifting toward who can provide the best 'utility-retention', proving that their assurance layer protects privacy without destroying the clinical value of the dataset.

Key Players in Healthcare Data De-Identification Assurance Market

- Datavant

- IQVIA Privacy Analytics

- John Snow Labs

- MDClone

- Privacert

- TripleBlind

- Duality Technologies

- Enveil

Scope of the Report

| Metric | Value |
|---|---|
| Quantitative Units | USD 0.5 billion in 2026 to USD 1.6 billion in 2036, at a CAGR of 12.3% |
| Market Definition | Functional validation and statistical verification of anonymization techniques applied to healthcare data. The scope includes risk-scoring software and expert determination services required for research and secondary data use. |
| Segmentation | Technique, Data type, Deployment, Buyer type, Assurance layer, and Region |
| Regions Covered | North America, Latin America, Europe, East Asia, South Asia & Pacific, Middle East & Africa |
| Countries Covered | United States, Canada, Brazil, Mexico, Germany, United Kingdom, France, Italy, Spain, Russia, China, Japan, South Korea, India, ASEAN, ANZ, GCC, South Africa |
| Key Companies Profiled | Datavant, IQVIA, John Snow Labs, MDClone, Privacert, TripleBlind, Duality Technologies |
| Forecast Period | 2026 to 2036 |
| Approach | Bottom-up and top-down valuation based on clinical research volume and secondary data licensing transactions. |

Source: Future Market Insights (FMI) analysis, based on proprietary forecasting model and primary research

Segments

Technique:

- Expert determination

- Safe harbor

- Tokenization

- Synthetic data

- Hybrid methods

Data Type:

- Structured data

- Clinical text

- Imaging

- Claims data

- Multi-modal data

Deployment:

- Cloud

- On-premise

- Hybrid

Buyer Type:

- Providers

- Life sciences

- Payers

- Research institutes

- Registries

Assurance Layer:

- Software

- Expert services

- Risk scoring

- Audit evidence

- Monitoring

Regions:

- Asia Pacific

  - India

  - China

  - Japan

  - South Korea

  - Indonesia

  - Australia & New Zealand

  - ASEAN

  - Rest of Asia Pacific

- Europe

  - Germany

  - Italy

  - France

  - United Kingdom

  - Spain

  - Benelux

  - Nordics

  - Central & Eastern Europe

  - Rest of Europe

- North America

  - United States

  - Canada

  - Mexico

- Latin America

  - Brazil

  - Argentina

  - Chile

  - Rest of Latin America

- Middle East & Africa

  - Kingdom of Saudi Arabia

  - United Arab Emirates

  - South Africa

  - Turkey

  - Rest of Middle East & Africa

Bibliography

- European Medicines Agency. (2025, April). Anonymisation of personal data and assessment of commercially confidential information during the preparation and redaction of risk management plans (body and annexes 4 and 6): Regulatory Guideline.

- Narayan, S. M., Kohli, N., & Martin, M. M. (2025, March). Addressing contemporary threats in anonymised healthcare data using privacy engineering. NPJ Digital Medicine, 8, Article 145.

- IQVIA. (2025, September). Privacy-forward approaches to data-driven insights for medical specialty and provider associations.

- Kondylakis, H., et al. (2024, May). Documenting the de-identification process of clinical and imaging data for AI for health imaging projects. Insights into Imaging, 15, 130.

- National Institute of Standards and Technology. (2024, December). Genomic data cybersecurity and privacy frameworks profile (NIST IR 8467, 2nd public draft).

- U.S. Food and Drug Administration. (2024, July). Real-world data: Assessing electronic health records and medical claims data to support regulatory decision-making for drug and biological products: Guidance for industry.

This bibliography is provided for reader reference. The full FMI report contains the complete reference list with primary source documentation.

This Report Addresses

- How Expert Determination has surpassed Safe Harbor as the dominant technique for high-risk research data.

- The mechanism behind the 15.2% growth rate in India’s de-identification assurance sector.

- Why Privacy Officers at hospital systems are demanding continuous risk scoring over point-in-time audits.

- The structural lock-in created by stability-testing and risk-validation cycles in life science research.

- The operational friction between data scientists wanting high utility and privacy teams wanting zero risk.

- How imaging de-identification software is evolving to handle 3D facial reconstruction threats.

- The commercial impact of secondary data monetization on the adoption of automated assurance layers.

- Competitive positioning of tokenization networks versus decentralized privacy-preserving computation startups.

Frequently Asked Questions

What is healthcare data de-identification assurance?

It is the functional validation and statistical verification of anonymization techniques applied to healthcare data to ensure negligible re-identification risk.

What is the projected CAGR for this market through 2036?

Revenue expansion for the healthcare data de-identification assurance market is set to occur at a 12.3% CAGR from 2026 to 2036.

Which technique holds the leading share in de-identification assurance?

Expert determination leads the Technique dimension with 38.0% share as organizations shift toward mathematical risk modeling.

Why is the Providers segment leading in buyer type?

Providers account for 32.0% share because they sit at the source of patient data and face direct pressure to share records for research safely.

What is the primary driver for the Indian market's high growth rate?

The India healthcare anonymization market is growing at 15.2% due to the National Digital Health Mission’s goal of creating a unified, secure digital health stack.

How is HIPAA expert determination different from safe harbor?

Safe Harbor requires removing a prescriptive list of 18 identifier types, whereas expert determination relies on statistical risk modeling by a qualified expert, which can preserve more data utility after de-identification.

Why is de-identified health data still risky?

High-dimensional datasets can often be re-linked to individuals through external demographic or public records, creating a persistent re-identification risk in healthcare AI.
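One common way to make this linkage risk concrete is to count how many records share the same combination of quasi-identifiers (fields such as partial ZIP code, birth year, and sex that may also appear in public records). The sketch below is an illustration under assumed field names and made-up toy records; it is not the risk model of any vendor named in this report.

```python
from collections import Counter


def equivalence_class_sizes(records, quasi_identifiers):
    """For each record, return how many records share its quasi-identifier values."""
    keys = [tuple(r[q] for q in quasi_identifiers) for r in records]
    counts = Counter(keys)
    return [counts[k] for k in keys]


def risk_summary(records, quasi_identifiers):
    """Summarise re-identification risk for a chosen set of quasi-identifiers."""
    sizes = equivalence_class_sizes(records, quasi_identifiers)
    smallest = min(sizes)
    return {
        "k": smallest,                    # smallest group size (k-anonymity level)
        "max_risk": 1.0 / smallest,       # worst-case chance of singling out a record
        "unique_fraction": sum(1 for s in sizes if s == 1) / len(sizes),
    }


# Hypothetical toy records; field names and values are invented for illustration.
records = [
    {"zip3": "021", "birth_year": 1980, "sex": "F", "dx": "E11"},
    {"zip3": "021", "birth_year": 1980, "sex": "F", "dx": "I10"},
    {"zip3": "021", "birth_year": 1975, "sex": "M", "dx": "J45"},
    {"zip3": "100", "birth_year": 1990, "sex": "F", "dx": "E11"},
    {"zip3": "100", "birth_year": 1990, "sex": "F", "dx": "C50"},
]

print(risk_summary(records, ["zip3", "birth_year", "sex"]))
print(risk_summary(records, ["zip3"]))
```

Adding more quasi-identifiers shrinks the groups, which is why high-dimensional datasets tend to contain more records that are unique and therefore linkable.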

What is the significance of the 41.0% share held by software?

Software dominance signals a move toward automated risk scoring and continuous monitoring of re-identification risk as new data is appended to studies.

How is synthetic data for healthcare privacy changing the landscape?

Synthetic data allows for the creation of statistically similar cohorts that do not contain real patient information, which can ease many traditional assurance hurdles.

What commercial consequence do organizations face if they delay adoption?

Organizations that delay adoption lose significant revenue from data licensing and pharmaceutical partnerships while risking exclusion from international research networks.

Why is imaging data considered a high-risk frontier?

Imaging data is susceptible to re-identification through 3D reconstructions of skull features, requiring specialized DICOM de-identification software to ensure anatomical privacy.

What is the role of 'audit evidence' in the assurance layer?

Audit evidence provides a cryptographically signed record of all de-identification steps taken, serving as defensible documentation for regulatory compliance.
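A tamper-evident record of this kind can be sketched as a chained log in which each entry is authenticated together with the previous entry's tag. The example below is a simplified illustration: it uses an HMAC with a shared key as a stand-in for a true digital signature, and the step descriptions, key, and function names are all assumptions.

```python
import hashlib
import hmac
import json


def _tag(step: str, prev: str, key: bytes) -> str:
    """Authenticate one step together with the previous entry's tag."""
    payload = json.dumps({"step": step, "prev": prev}, sort_keys=True).encode("utf-8")
    return hmac.new(key, payload, hashlib.sha256).hexdigest()


def append_entry(log: list, step: str, key: bytes) -> None:
    """Append a step to the log, chaining it to the entry before it."""
    prev = log[-1]["mac"] if log else ""
    log.append({"step": step, "prev": prev, "mac": _tag(step, prev, key)})


def verify_log(log: list, key: bytes) -> bool:
    """Recompute every tag in order; any edited or reordered entry fails."""
    prev = ""
    for entry in log:
        expected = _tag(entry["step"], prev, key)
        if entry["prev"] != prev or not hmac.compare_digest(expected, entry["mac"]):
            return False
        prev = entry["mac"]
    return True


# Hypothetical key and steps, for illustration only.
key = b"demo-key-not-for-production"
log = []
append_entry(log, "removed direct identifiers", key)
append_entry(log, "generalized dates to year", key)
append_entry(log, "ran re-identification risk scoring", key)

print(verify_log(log, key))
```

In practice an asymmetric signature scheme would let an auditor verify the log without holding the signing key; the HMAC here only keeps the sketch within the standard library.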

How do tokenization methods sustain market share?

Tokenization allows for the linking of patient records across disparate databases without revealing patient identity, making it foundational for longitudinal research.
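The linking idea can be illustrated with a keyed hash: the same normalized identifiers always produce the same token, so records can be joined on the token while names and dates of birth are never exchanged. This is a minimal sketch under assumed normalization rules and a made-up secret; it does not represent the method of any tokenization vendor named in this report.

```python
import hashlib
import hmac


def normalize(first: str, last: str, dob: str) -> str:
    """Normalise identifiers so formatting differences do not change the token."""
    return "|".join(part.strip().lower() for part in (first, last, dob))


def patient_token(first: str, last: str, dob: str, secret: bytes) -> str:
    """Derive a keyed, non-reversible token from normalised identifiers."""
    message = normalize(first, last, dob).encode("utf-8")
    return hmac.new(secret, message, hashlib.sha256).hexdigest()


# Hypothetical shared secret and patients, for illustration only.
SECRET = b"demo-secret-not-for-production"

token_a = patient_token("Jane", "Doe", "1980-02-14", SECRET)    # as held by a hospital
token_b = patient_token(" jane ", "DOE", "1980-02-14", SECRET)  # as held by a claims source
token_c = patient_token("John", "Doe", "1980-02-14", SECRET)    # a different patient

print(token_a == token_b)
print(token_a == token_c)
```

Because the token depends on a secret key, a party without the key cannot rebuild tokens from a list of known names, which a plain unkeyed hash would allow.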

What is the non-obvious observation regarding 'expert' reviews?

Expert determination is increasingly performed by software algorithms rather than human experts, necessitating new models for algorithm assurance.

How does the UK NHS transformation affect the market?

The UK's shift toward Trusted Research Environments bakes de-identification assurance into the national health data architecture, supporting the UK clinical data anonymization market.

What is the impact of HIPAA Safe Harbor rules on market structure?

Safe Harbor rules provide a simple checklist but often result in low data utility, pushing researchers toward the more flexible expert determination method.

How do life science firms use de-identification assurance for clinical trials?

Life science firms use these tools to anonymize patient-level trial results for public disclosure and to meet rigorous regulatory transparency mandates.

What friction exists between research leads and privacy teams?

Friction arises from the utility-privacy trade-off, where research leads require granular details while privacy teams prioritize heavy masking and risk reduction.

What is the competitive advantage of incumbents like Datavant?

Incumbents benefit from network effects where their tokenization standards are used by thousands of sites, creating high barriers for new entrants.

How is cloud deployment influencing the market?

Cloud deployment allows for the scalable processing of massive clinical datasets and enables federated assurance across multiple hospital systems simultaneously.

What does the 11.4% CAGR in Japan signify?

Japan's growth is tied to its aging population and the resulting surge in geriatric research data, requiring high-volume medical data anonymization.

What is the end-state for the market in 2036?

By 2036, de-identification assurance will be a silent, automated foundational component of 'Privacy by Design' in all medical informatics.

Table of Contents

- Executive Summary

- Global Market Outlook

- Demand-side Trends

- Supply-side Trends

- Technology Roadmap Analysis

- Analysis and Recommendations

- Market Overview

- Market Coverage / Taxonomy

- Research Methodology

- Chapter Orientation

- Analytical Lens and Working Hypotheses

- Market Structure, Signals, and Trend Drivers

- Benchmarking and Cross-market Comparability

- Market Sizing, Forecasting, and Opportunity Mapping

- Research Design and Evidence Framework

- Desk Research Programme (Secondary Evidence)

- Company Annual and Sustainability Reports

- Peer-reviewed Journals and Academic Literature

- Corporate Websites, Product Literature, and Technical Notes

- Earnings Decks and Investor Briefings

- Statutory Filings and Regulatory Disclosures

- Technical White Papers and Standards Notes

- Trade Journals, Industry Magazines, and Analyst Briefs

- Conference Proceedings, Webinars, and Seminar Materials

- Government Statistics Portals and Public Data Releases

- Press Releases and Reputable Media Coverage

- Specialist Newsletters and Curated Briefings

- Sector Databases and Reference Repositories

- FMI Internal Proprietary Databases and Historical Market Datasets

- Subscription Datasets and Paid Sources

- Social Channels, Communities, and Digital Listening Inputs

- Additional Desk Sources

- Expert Input and Fieldwork (Primary Evidence)

- Primary Modes

- Qualitative Interviews and Expert Elicitation

- Observational and In-context Research

- Social and Community Interactions

- Stakeholder Universe Engaged

- C-suite Leaders

- Board Members

- Presidents and Vice Presidents

- R&D and Innovation Heads

- Technical Specialists

- Domain Subject-matter Experts

- Scientists

- Physicians and Other Healthcare Professionals

- Governance, Ethics, and Data Stewardship

- Research Ethics

- Data Integrity and Handling

- Tooling, Models, and Reference Databases

- Data Engineering and Model Build

- Data Acquisition and Ingestion

- Cleaning, Normalisation, and Verification

- Synthesis, Triangulation, and Analysis

- Quality Assurance and Audit Trail

- Market Background

- Market Dynamics

- Drivers

- Restraints

- Opportunity

- Trends

- Scenario Forecast

- Demand in Optimistic Scenario

- Demand in Likely Scenario

- Demand in Conservative Scenario

- Opportunity Map Analysis

- Product Life Cycle Analysis

- Supply Chain Analysis

- Investment Feasibility Matrix

- Value Chain Analysis

- PESTLE and Porter’s Analysis

- Regulatory Landscape

- Regional Parent Market Outlook

- Production and Consumption Statistics

- Import and Export Statistics

- Global Market Analysis 2021 to 2025 and Forecast, 2026 to 2036

- Historical Market Size Value (USD Million) Analysis, 2021 to 2025

- Current and Future Market Size Value (USD Million) Projections, 2026 to 2036

- Y-o-Y Growth Trend Analysis

- Absolute $ Opportunity Analysis

- Global Market Pricing Analysis 2021 to 2025 and Forecast 2026 to 2036

- Global Market Analysis 2021 to 2025 and Forecast 2026 to 2036, By Technique

- Introduction / Key Findings

- Historical Market Size Value (USD Million) Analysis By Technique, 2021 to 2025

- Current and Future Market Size Value (USD Million) Analysis and Forecast By Technique, 2026 to 2036

- Expert Determination

- Tokenization

- Others

- Y-o-Y Growth Trend Analysis By Technique, 2021 to 2025

- Absolute $ Opportunity Analysis By Technique, 2026 to 2036

- Global Market Analysis 2021 to 2025 and Forecast 2026 to 2036, By Data Type

- Introduction / Key Findings

- Historical Market Size Value (USD Million) Analysis By Data Type, 2021 to 2025

- Current and Future Market Size Value (USD Million) Analysis and Forecast By Data Type, 2026 to 2036

- Structured Data

- Imaging

- Others

- Y-o-Y Growth Trend Analysis By Data Type, 2021 to 2025

- Absolute $ Opportunity Analysis By Data Type, 2026 to 2036

- Global Market Analysis 2021 to 2025 and Forecast 2026 to 2036, By Assurance Layer

- Introduction / Key Findings

- Historical Market Size Value (USD Million) Analysis By Assurance Layer, 2021 to 2025

- Current and Future Market Size Value (USD Million) Analysis and Forecast By Assurance Layer, 2026 to 2036

- Software

- Monitoring

- Others

- Y-o-Y Growth Trend Analysis By Assurance Layer, 2021 to 2025

- Absolute $ Opportunity Analysis By Assurance Layer, 2026 to 2036

- Global Market Analysis 2021 to 2025 and Forecast 2026 to 2036, By Region

- Introduction

- Historical Market Size Value (USD Million) Analysis By Region, 2021 to 2025

- Current Market Size Value (USD Million) Analysis and Forecast By Region, 2026 to 2036

- North America

- Latin America

- Western Europe

- Eastern Europe

- East Asia

- South Asia and Pacific

- Middle East & Africa

- Market Attractiveness Analysis By Region

- North America Market Analysis 2021 to 2025 and Forecast 2026 to 2036, By Country

- Historical Market Size Value (USD Million) Trend Analysis By Market Taxonomy, 2021 to 2025

- Market Size Value (USD Million) Forecast By Market Taxonomy, 2026 to 2036

- By Country

- USA

- Canada

- Mexico

- By Technique

- By Data Type

- By Assurance Layer

- Market Attractiveness Analysis

- By Country

- By Technique

- By Data Type

- By Assurance Layer

- Key Takeaways

- Latin America Market Analysis 2021 to 2025 and Forecast 2026 to 2036, By Country

- Historical Market Size Value (USD Million) Trend Analysis By Market Taxonomy, 2021 to 2025

- Market Size Value (USD Million) Forecast By Market Taxonomy, 2026 to 2036

- By Country

- Brazil

- Chile

- Rest of Latin America

- By Technique

- By Data Type

- By Assurance Layer

- Market Attractiveness Analysis

- By Country

- By Technique

- By Data Type

- By Assurance Layer

- Key Takeaways

- Western Europe Market Analysis 2021 to 2025 and Forecast 2026 to 2036, By Country

- Historical Market Size Value (USD Million) Trend Analysis By Market Taxonomy, 2021 to 2025

- Market Size Value (USD Million) Forecast By Market Taxonomy, 2026 to 2036

- By Country

- Germany

- UK

- Italy

- Spain

- France

- Nordic

- BENELUX

- Rest of Western Europe

- By Technique

- By Data Type

- By Assurance Layer

- Market Attractiveness Analysis

- By Country

- By Technique

- By Data Type

- By Assurance Layer

- Key Takeaways

- Eastern Europe Market Analysis 2021 to 2025 and Forecast 2026 to 2036, By Country

- Historical Market Size Value (USD Million) Trend Analysis By Market Taxonomy, 2021 to 2025

- Market Size Value (USD Million) Forecast By Market Taxonomy, 2026 to 2036

- By Country

- Russia

- Poland

- Hungary

- Balkan & Baltic

- Rest of Eastern Europe

- By Technique

- By Data Type

- By Assurance Layer

- Market Attractiveness Analysis

- By Country

- By Technique

- By Data Type

- By Assurance Layer

- Key Takeaways

- East Asia Market Analysis 2021 to 2025 and Forecast 2026 to 2036, By Country

- Historical Market Size Value (USD Million) Trend Analysis By Market Taxonomy, 2021 to 2025

- Market Size Value (USD Million) Forecast By Market Taxonomy, 2026 to 2036

- By Country

- China

- Japan

- South Korea

- By Technique

- By Data Type

- By Assurance Layer

- Market Attractiveness Analysis

- By Country

- By Technique

- By Data Type

- By Assurance Layer

- Key Takeaways

- South Asia and Pacific Market Analysis 2021 to 2025 and Forecast 2026 to 2036, By Country

- Historical Market Size Value (USD Million) Trend Analysis By Market Taxonomy, 2021 to 2025

- Market Size Value (USD Million) Forecast By Market Taxonomy, 2026 to 2036

- By Country

- India

- ASEAN

- Australia & New Zealand

- Rest of South Asia and Pacific

- By Technique

- By Data Type

- By Assurance Layer

- Market Attractiveness Analysis

- By Country

- By Technique

- By Data Type

- By Assurance Layer

- Key Takeaways

- Middle East & Africa Market Analysis 2021 to 2025 and Forecast 2026 to 2036, By Country

- Historical Market Size Value (USD Million) Trend Analysis By Market Taxonomy, 2021 to 2025

- Market Size Value (USD Million) Forecast By Market Taxonomy, 2026 to 2036

- By Country

- Kingdom of Saudi Arabia

- Other GCC Countries

- Turkiye

- South Africa

- Other African Union

- Rest of Middle East & Africa

- By Technique

- By Data Type

- By Assurance Layer

- Market Attractiveness Analysis

- By Country

- By Technique

- By Data Type

- By Assurance Layer

- Key Takeaways

- Key Countries Market Analysis

- USA

- Pricing Analysis

- Market Share Analysis, 2025

- By Technique

- By Data Type

- By Assurance Layer

- Canada

- Pricing Analysis

- Market Share Analysis, 2025

- By Technique

- By Data Type

- By Assurance Layer

- Mexico

- Pricing Analysis

- Market Share Analysis, 2025

- By Technique

- By Data Type

- By Assurance Layer

- Brazil

- Pricing Analysis

- Market Share Analysis, 2025

- By Technique

- By Data Type

- By Assurance Layer

- Chile

- Pricing Analysis

- Market Share Analysis, 2025

- By Technique

- By Data Type

- By Assurance Layer

- Germany

- Pricing Analysis

- Market Share Analysis, 2025

- By Technique

- By Data Type

- By Assurance Layer

- UK

- Pricing Analysis

- Market Share Analysis, 2025

- By Technique

- By Data Type

- By Assurance Layer

- Italy

- Pricing Analysis

- Market Share Analysis, 2025

- By Technique

- By Data Type

- By Assurance Layer

- Spain

- Pricing Analysis

- Market Share Analysis, 2025

- By Technique

- By Data Type

- By Assurance Layer

- France

- Pricing Analysis

- Market Share Analysis, 2025

- By Technique

- By Data Type

- By Assurance Layer

- India

- Pricing Analysis

- Market Share Analysis, 2025

- By Technique

- By Data Type

- By Assurance Layer

- ASEAN

- Pricing Analysis

- Market Share Analysis, 2025

- By Technique

- By Data Type

- By Assurance Layer

- Australia & New Zealand

- Pricing Analysis

- Market Share Analysis, 2025

- By Technique

- By Data Type

- By Assurance Layer

- China

- Pricing Analysis

- Market Share Analysis, 2025

- By Technique

- By Data Type

- By Assurance Layer

- Japan

- Pricing Analysis

- Market Share Analysis, 2025

- By Technique

- By Data Type

- By Assurance Layer

- South Korea

- Pricing Analysis

- Market Share Analysis, 2025

- By Technique

- By Data Type

- By Assurance Layer

- Russia

- Pricing Analysis

- Market Share Analysis, 2025

- By Technique

- By Data Type

- By Assurance Layer

- Poland

- Pricing Analysis

- Market Share Analysis, 2025

- By Technique

- By Data Type

- By Assurance Layer

- Hungary

- Pricing Analysis

- Market Share Analysis, 2025

- By Technique

- By Data Type

- By Assurance Layer

- Kingdom of Saudi Arabia

- Pricing Analysis

- Market Share Analysis, 2025

- By Technique

- By Data Type

- By Assurance Layer

- Turkiye

- Pricing Analysis

- Market Share Analysis, 2025

- By Technique

- By Data Type

- By Assurance Layer

- South Africa

- Pricing Analysis

- Market Share Analysis, 2025

- By Technique

- By Data Type

- By Assurance Layer

- Market Structure Analysis

- Competition Dashboard

- Competition Benchmarking

- Market Share Analysis of Top Players

- By Region

- By Technique

- By Data Type

- By Assurance Layer

- Competition Analysis

- Competition Deep Dive

- Datavant

- Overview

- Product Portfolio

- Profitability by Market Segments (Product/Age/Sales Channel/Region)

- Sales Footprint

- Strategy Overview

- Marketing Strategy

- Product Strategy

- Channel Strategy

- IQVIA Privacy Analytics

- John Snow Labs

- MDClone

- Privacert

- TripleBlind

- Assumptions & Acronyms Used

List of Tables

- Table 1: Global Market Value (USD Million) Forecast by Region, 2021 to 2036

- Table 2: Global Market Value (USD Million) Forecast by Technique, 2021 to 2036

- Table 3: Global Market Value (USD Million) Forecast by Data Type, 2021 to 2036

- Table 4: Global Market Value (USD Million) Forecast by Assurance Layer, 2021 to 2036

- Table 5: North America Market Value (USD Million) Forecast by Country, 2021 to 2036

- Table 6: North America Market Value (USD Million) Forecast by Technique, 2021 to 2036

- Table 7: North America Market Value (USD Million) Forecast by Data Type, 2021 to 2036

- Table 8: North America Market Value (USD Million) Forecast by Assurance Layer, 2021 to 2036

- Table 9: Latin America Market Value (USD Million) Forecast by Country, 2021 to 2036

- Table 10: Latin America Market Value (USD Million) Forecast by Technique, 2021 to 2036

- Table 11: Latin America Market Value (USD Million) Forecast by Data Type, 2021 to 2036

- Table 12: Latin America Market Value (USD Million) Forecast by Assurance Layer, 2021 to 2036

- Table 13: Western Europe Market Value (USD Million) Forecast by Country, 2021 to 2036

- Table 14: Western Europe Market Value (USD Million) Forecast by Technique, 2021 to 2036

- Table 15: Western Europe Market Value (USD Million) Forecast by Data Type, 2021 to 2036

- Table 16: Western Europe Market Value (USD Million) Forecast by Assurance Layer, 2021 to 2036

- Table 17: Eastern Europe Market Value (USD Million) Forecast by Country, 2021 to 2036

- Table 18: Eastern Europe Market Value (USD Million) Forecast by Technique, 2021 to 2036

- Table 19: Eastern Europe Market Value (USD Million) Forecast by Data Type, 2021 to 2036

- Table 20: Eastern Europe Market Value (USD Million) Forecast by Assurance Layer, 2021 to 2036

- Table 21: East Asia Market Value (USD Million) Forecast by Country, 2021 to 2036

- Table 22: East Asia Market Value (USD Million) Forecast by Technique, 2021 to 2036

- Table 23: East Asia Market Value (USD Million) Forecast by Data Type, 2021 to 2036

- Table 24: East Asia Market Value (USD Million) Forecast by Assurance Layer, 2021 to 2036

- Table 25: South Asia and Pacific Market Value (USD Million) Forecast by Country, 2021 to 2036

- Table 26: South Asia and Pacific Market Value (USD Million) Forecast by Technique, 2021 to 2036

- Table 27: South Asia and Pacific Market Value (USD Million) Forecast by Data Type, 2021 to 2036

- Table 28: South Asia and Pacific Market Value (USD Million) Forecast by Assurance Layer, 2021 to 2036

- Table 29: Middle East & Africa Market Value (USD Million) Forecast by Country, 2021 to 2036

- Table 30: Middle East & Africa Market Value (USD Million) Forecast by Technique, 2021 to 2036

- Table 31: Middle East & Africa Market Value (USD Million) Forecast by Data Type, 2021 to 2036

- Table 32: Middle East & Africa Market Value (USD Million) Forecast by Assurance Layer, 2021 to 2036

List of Figures

- Figure 1: Global Market Pricing Analysis

- Figure 2: Global Market Value (USD Million) Forecast 2021-2036

- Figure 3: Global Market Value Share and BPS Analysis by Technique, 2026 and 2036

- Figure 4: Global Market Y-o-Y Growth Comparison by Technique, 2026-2036

- Figure 5: Global Market Attractiveness Analysis by Technique

- Figure 6: Global Market Value Share and BPS Analysis by Data Type, 2026 and 2036

- Figure 7: Global Market Y-o-Y Growth Comparison by Data Type, 2026-2036

- Figure 8: Global Market Attractiveness Analysis by Data Type

- Figure 9: Global Market Value Share and BPS Analysis by Assurance Layer, 2026 and 2036

- Figure 10: Global Market Y-o-Y Growth Comparison by Assurance Layer, 2026-2036

- Figure 11: Global Market Attractiveness Analysis by Assurance Layer

- Figure 12: Global Market Value (USD Million) Share and BPS Analysis by Region, 2026 and 2036

- Figure 13: Global Market Y-o-Y Growth Comparison by Region, 2026-2036

- Figure 14: Global Market Attractiveness Analysis by Region

- Figure 15: North America Market Incremental Dollar Opportunity, 2026-2036

- Figure 16: Latin America Market Incremental Dollar Opportunity, 2026-2036

- Figure 17: Western Europe Market Incremental Dollar Opportunity, 2026-2036

- Figure 18: Eastern Europe Market Incremental Dollar Opportunity, 2026-2036

- Figure 19: East Asia Market Incremental Dollar Opportunity, 2026-2036

- Figure 20: South Asia and Pacific Market Incremental Dollar Opportunity, 2026-2036

- Figure 21: Middle East & Africa Market Incremental Dollar Opportunity, 2026-2036

- Figure 22: North America Market Value Share and BPS Analysis by Country, 2026 and 2036

- Figure 23: North America Market Value Share and BPS Analysis by Technique, 2026 and 2036

- Figure 24: North America Market Y-o-Y Growth Comparison by Technique, 2026-2036

- Figure 25: North America Market Attractiveness Analysis by Technique

- Figure 26: North America Market Value Share and BPS Analysis by Data Type, 2026 and 2036

- Figure 27: North America Market Y-o-Y Growth Comparison by Data Type, 2026-2036

- Figure 28: North America Market Attractiveness Analysis by Data Type

- Figure 29: North America Market Value Share and BPS Analysis by Assurance Layer, 2026 and 2036

- Figure 30: North America Market Y-o-Y Growth Comparison by Assurance Layer, 2026-2036

- Figure 31: North America Market Attractiveness Analysis by Assurance Layer

- Figure 32: Latin America Market Value Share and BPS Analysis by Country, 2026 and 2036

- Figure 33: Latin America Market Value Share and BPS Analysis by Technique, 2026 and 2036

- Figure 34: Latin America Market Y-o-Y Growth Comparison by Technique, 2026-2036

- Figure 35: Latin America Market Attractiveness Analysis by Technique

- Figure 36: Latin America Market Value Share and BPS Analysis by Data Type, 2026 and 2036

- Figure 37: Latin America Market Y-o-Y Growth Comparison by Data Type, 2026-2036

- Figure 38: Latin America Market Attractiveness Analysis by Data Type

- Figure 39: Latin America Market Value Share and BPS Analysis by Assurance Layer, 2026 and 2036

- Figure 40: Latin America Market Y-o-Y Growth Comparison by Assurance Layer, 2026-2036

- Figure 41: Latin America Market Attractiveness Analysis by Assurance Layer

- Figure 42: Western Europe Market Value Share and BPS Analysis by Country, 2026 and 2036

- Figure 43: Western Europe Market Value Share and BPS Analysis by Technique, 2026 and 2036

- Figure 44: Western Europe Market Y-o-Y Growth Comparison by Technique, 2026-2036

- Figure 45: Western Europe Market Attractiveness Analysis by Technique

- Figure 46: Western Europe Market Value Share and BPS Analysis by Data Type, 2026 and 2036

- Figure 47: Western Europe Market Y-o-Y Growth Comparison by Data Type, 2026-2036

- Figure 48: Western Europe Market Attractiveness Analysis by Data Type

- Figure 49: Western Europe Market Value Share and BPS Analysis by Assurance Layer, 2026 and 2036

- Figure 50: Western Europe Market Y-o-Y Growth Comparison by Assurance Layer, 2026-2036

- Figure 51: Western Europe Market Attractiveness Analysis by Assurance Layer

- Figure 52: Eastern Europe Market Value Share and BPS Analysis by Country, 2026 and 2036

- Figure 53: Eastern Europe Market Value Share and BPS Analysis by Technique, 2026 and 2036

- Figure 54: Eastern Europe Market Y-o-Y Growth Comparison by Technique, 2026-2036

- Figure 55: Eastern Europe Market Attractiveness Analysis by Technique

- Figure 56: Eastern Europe Market Value Share and BPS Analysis by Data Type, 2026 and 2036

- Figure 57: Eastern Europe Market Y-o-Y Growth Comparison by Data Type, 2026-2036

- Figure 58: Eastern Europe Market Attractiveness Analysis by Data Type

- Figure 59: Eastern Europe Market Value Share and BPS Analysis by Assurance Layer, 2026 and 2036

- Figure 60: Eastern Europe Market Y-o-Y Growth Comparison by Assurance Layer, 2026-2036

- Figure 61: Eastern Europe Market Attractiveness Analysis by Assurance Layer

- Figure 62: East Asia Market Value Share and BPS Analysis by Country, 2026 and 2036

- Figure 63: East Asia Market Value Share and BPS Analysis by Technique, 2026 and 2036

- Figure 64: East Asia Market Y-o-Y Growth Comparison by Technique, 2026-2036

- Figure 65: East Asia Market Attractiveness Analysis by Technique

- Figure 66: East Asia Market Value Share and BPS Analysis by Data Type, 2026 and 2036

- Figure 67: East Asia Market Y-o-Y Growth Comparison by Data Type, 2026-2036

- Figure 68: East Asia Market Attractiveness Analysis by Data Type

- Figure 69: East Asia Market Value Share and BPS Analysis by Assurance Layer, 2026 and 2036

- Figure 70: East Asia Market Y-o-Y Growth Comparison by Assurance Layer, 2026-2036

- Figure 71: East Asia Market Attractiveness Analysis by Assurance Layer

- Figure 72: South Asia and Pacific Market Value Share and BPS Analysis by Country, 2026 and 2036

- Figure 73: South Asia and Pacific Market Value Share and BPS Analysis by Technique, 2026 and 2036

- Figure 74: South Asia and Pacific Market Y-o-Y Growth Comparison by Technique, 2026-2036

- Figure 75: South Asia and Pacific Market Attractiveness Analysis by Technique

- Figure 76: South Asia and Pacific Market Value Share and BPS Analysis by Data Type, 2026 and 2036

- Figure 77: South Asia and Pacific Market Y-o-Y Growth Comparison by Data Type, 2026-2036

- Figure 78: South Asia and Pacific Market Attractiveness Analysis by Data Type

- Figure 79: South Asia and Pacific Market Value Share and BPS Analysis by Assurance Layer, 2026 and 2036

- Figure 80: South Asia and Pacific Market Y-o-Y Growth Comparison by Assurance Layer, 2026-2036

- Figure 81: South Asia and Pacific Market Attractiveness Analysis by Assurance Layer

- Figure 82: Middle East & Africa Market Value Share and BPS Analysis by Country, 2026 and 2036

- Figure 83: Middle East & Africa Market Value Share and BPS Analysis by Technique, 2026 and 2036

- Figure 84: Middle East & Africa Market Y-o-Y Growth Comparison by Technique, 2026-2036

- Figure 85: Middle East & Africa Market Attractiveness Analysis by Technique

- Figure 86: Middle East & Africa Market Value Share and BPS Analysis by Data Type, 2026 and 2036

- Figure 87: Middle East & Africa Market Y-o-Y Growth Comparison by Data Type, 2026-2036

- Figure 88: Middle East & Africa Market Attractiveness Analysis by Data Type

- Figure 89: Middle East & Africa Market Value Share and BPS Analysis by Assurance Layer, 2026 and 2036

- Figure 90: Middle East & Africa Market Y-o-Y Growth Comparison by Assurance Layer, 2026-2036

- Figure 91: Middle East & Africa Market Attractiveness Analysis by Assurance Layer

- Figure 92: Global Market - Tier Structure Analysis

- Figure 93: Global Market - Company Share Analysis